Free Microsoft AZ-305 Practice Test Questions MCQs

Stop wondering if you're ready. Our Microsoft AZ-305 practice test is designed to identify your exact knowledge gaps. Validate your skills with Designing Microsoft Azure Infrastructure Solutions questions that mirror the real exam's format and difficulty. Build a personalized study plan based on your performance on these free AZ-305 multiple-choice questions, focusing your effort where it matters most.

Targeted practice like this helps candidates feel significantly more prepared for Designing Microsoft Azure Infrastructure Solutions exam day.

22800+ already prepared

Updated On: 7-Apr-2026

280 Questions

Designing Microsoft Azure Infrastructure Solutions

4.9/5.0

Topic 5: Misc. Questions

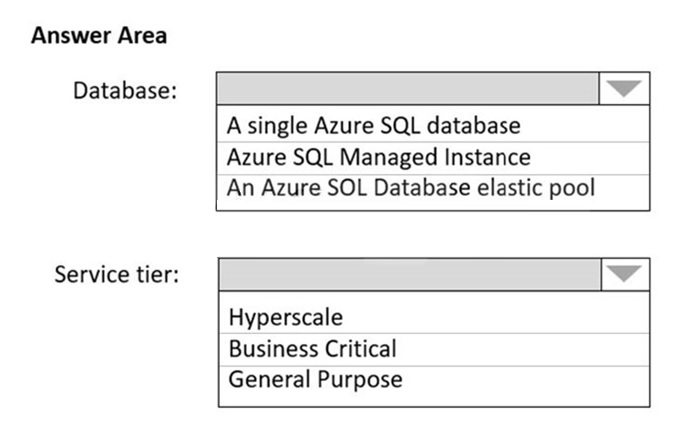

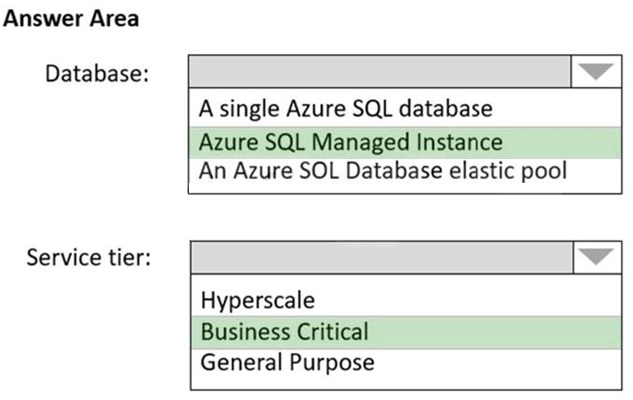

How should the migrated databases DB1 and DB2 be implemented in Azure?

The images above list the available Azure SQL deployment options and service tiers, but they do not include the specific requirements for DB1 and DB2. Answering this question requires the full scenario, which typically includes:

The specific technical and business requirements for each database (e.g., "requires a 99.995% SLA," "must support in-place transparent data encryption," "has unpredictable usage patterns").

Any constraints, such as the need for minimal administrative overhead, specific networking features, or compatibility with a certain SQL Server version.

The differences between DB1 and DB2 that would justify choosing different configurations.

Without that scenario text, only a general explanation of the choices shown in the images can be given:

Database Options:

Single Database:

A standalone, fully managed database in Azure.

Elastic Pool:

A collection of single databases with a shared set of resources (e.g., CPU, memory), ideal for multi-tenant applications or databases with unpredictable usage to optimize cost.

Managed Instance:

A fully managed instance of SQL Server with near-100% feature parity with on-premises SQL Server, best for lift-and-shift migrations requiring instance-level features.

Service Tiers:

General Purpose:

A budget-oriented tier that separates compute and storage, suitable for most business workloads.

Business Critical:

A high-performance tier that uses local SSD storage for low latency and high resilience, often used for applications requiring high I/O throughput and fast failover.

Hyperscale:

A tier for very large databases (up to 128 TB) that scales compute and storage independently, designed for rapid scaling and fast backups/restores.


You are designing a high-availability solution for an Azure SQL deployment. You need to recommend an Azure SQL deployment option that minimizes how long it takes to perform a database backup. Which of the following deployment options should you recommend?

A. Business Critical

B. General Purpose

C. Hyperscale

D. Serverless

Summary

The question focuses on minimizing backup duration, which is a function of the underlying storage architecture and backup process. Traditional service tiers perform backups by copying data, which can be slow for large databases. The Hyperscale tier uses a unique snapshot-based backup mechanism on its distributed, tiered storage. This allows backups to complete almost instantly, regardless of database size, because it does not involve physically copying the live data files.

Correct Option

C. Hyperscale

The Hyperscale service tier is specifically engineered for very large databases and addresses the challenge of slow backups. It uses snapshots of the data pages on its distributed, tiered storage system. This process is nearly instantaneous because it avoids the traditional method of physically copying data. The backup time stays fast and essentially independent of size, even as the database grows into the multi-terabyte range, making it the definitive choice for minimizing backup duration.

Incorrect Options

A. Business Critical

While Business Critical offers high performance and resilience through local SSD storage and high availability replicas, its backup process is still based on the traditional copy method. For very large databases, this can result in significant backup times, as the entire data set must be read and copied to a backup storage location. It does not provide the near-instantaneous snapshot backups of the Hyperscale tier.

B. General Purpose

General Purpose uses remote storage (Azure Premium Storage) and separates compute from storage. Its backup process also relies on copying data, which can be I/O intensive and time-consuming for large databases. It is designed for budget-oriented, general workloads and does not include any specialized features to accelerate the backup process like Hyperscale does.

D. Serverless

Serverless is a compute tier for the General Purpose service tier, not a separate deployment option. It automates the scaling of compute resources based on workload demand. However, it inherits the standard backup mechanism of the General Purpose tier, which is based on data copying. Therefore, it does not offer any improvement in backup speed compared to the provisioned General Purpose tier.

Reference

Microsoft Learn, "Hyperscale service tier - Azure SQL Database": https://learn.microsoft.com/en-us/azure/azure-sql/database/service-tier-hyperscale - This document explains the architecture, including its snapshot-based backup and restore capabilities.
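The difference between the two backup approaches can be illustrated with a toy model. This is only a sketch: the throughput and overhead figures are hypothetical placeholders, not measured Azure numbers, and the function names are invented for illustration.

```python
def copy_backup_seconds(size_gb: float, throughput_gb_per_s: float) -> float:
    """Copy-based backup: modeled as time growing linearly with database size."""
    return size_gb / throughput_gb_per_s


def snapshot_backup_seconds(size_gb: float, fixed_overhead_s: float) -> float:
    """Snapshot-based backup: modeled as a fixed cost, independent of size."""
    return fixed_overhead_s


if __name__ == "__main__":
    # Hypothetical figures chosen only to show the shape of the two curves.
    for size_gb in (100, 1_000, 10_000):
        copy_s = copy_backup_seconds(size_gb, throughput_gb_per_s=0.5)
        snap_s = snapshot_backup_seconds(size_gb, fixed_overhead_s=30.0)
        print(f"{size_gb:>6} GB  copy={copy_s:>8.0f}s  snapshot={snap_s:.0f}s")
```

In this model the copy-based duration scales with the data size while the snapshot duration does not, which is the property the question is testing.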

You have an Azure subscription named Sub1 that is linked to an Azure AD tenant named contoso.com.

You plan to implement two ASP.NET Core apps named App1 and App2 that will be deployed to 100 virtual machines in Sub1. Users will sign in to App1 and App2 by using their contoso.com credentials.

App1 requires read permissions to access the calendar of the signed-in user. App2 requires write permissions to access the calendar of the signed-in user.

You need to recommend an authentication and authorization solution for the apps. The solution must meet the following requirements:

• Use the principle of least privilege.

• Minimize administrative effort.

What should you include in the recommendation? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Summary:

The scenario describes two apps (App1 and App2) that need to access the signed-in user's calendar with different permission levels (Read vs. Write). The key requirements are using the least privilege and minimizing administrative effort. Since the apps run on Azure VMs and need to act on behalf of the user, the optimal solution is to use a managed identity for secure, credential-free authentication to Azure AD. For authorization, since the apps need to access user data (their calendar) and not manage Azure resources, Microsoft Graph API permissions (Delegated) should be used. This allows the apps to request only the specific permissions they need for the user's data.

Correct Options:

Authentication: A user-assigned managed identity

A user-assigned managed identity can be created as a standalone Azure resource and then assigned to multiple Azure resources, like all 100 VMs. This is far more efficient for minimizing administrative effort than using a system-assigned identity (which is unique to each VM) or an app registration secret (which requires manual credential management). It provides a secure, automated identity without storing any secrets in the app code.

Authorization: Delegated permissions

Delegated permissions are used when an app acts on behalf of a signed-in user. The app can only perform actions that the user themselves has permission to do. This perfectly aligns with the least privilege principle: App1 can be granted Calendars.Read and App2 Calendars.ReadWrite, and a user who only has read access to their own calendar cannot grant App2 write access. This is more secure for user-data scenarios than application permissions, which are highly privileged and grant the app access to all calendars in the tenant, regardless of the user.

Incorrect Options

Authentication: Application registration in Azure AD

While an app registration is necessary to define the app's identity and permissions, using it directly for authentication (e.g., with a client secret) is less secure and requires more administrative effort. You would have to manage and securely rotate these secrets across all 100 application instances, which violates the "minimize administrative effort" requirement.

Authentication: A system-assigned managed identity

A system-assigned managed identity is enabled directly on an individual Azure resource (like a single VM). Using it for 100 VMs would result in 100 separate identities. This creates significant administrative overhead to configure and assign permissions for each one, contradicting the goal to minimize effort.

Authorization: Azure role-based access control (Azure RBAC)

Azure RBAC is used to control access to manage Azure resources (like VMs, storage accounts, or subscriptions). It is not used to grant permissions to access data within a user's Microsoft 365 calendar. For data-plane operations in Microsoft Graph, API permissions (Delegated or Application) are the correct mechanism.

Authorization: Application permissions

Application permissions grant the app itself the rights to access calendars, without a signed-in user. This would allow the app to access any calendar in the tenant, which is a massive privilege escalation far beyond the "read/write to the signed-in user's calendar" requirement. This blatantly violates the principle of least privilege.

Reference:

Microsoft Learn, "Managed identities for Azure resources": https://learn.microsoft.com/en-us/azure/active-directory/managed-identities-azure-resources/overview

Microsoft Learn, "Permissions and consent in the Microsoft identity platform": https://learn.microsoft.com/en-us/azure/active-directory/develop/v2-permissions-and-consent
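The way delegated permissions bound an app's access can be sketched as a set intersection: the app can do only what both its granted scope and the signed-in user allow. The scope names `Calendars.Read` and `Calendars.ReadWrite` come from the explanation above; the `SCOPE_ACTIONS` table and the `effective_actions` helper are hypothetical and do not call any real Microsoft Graph API.

```python
READ, WRITE = "read", "write"

# Simplified, assumed mapping from a delegated scope to the actions it allows.
SCOPE_ACTIONS = {
    "Calendars.Read": {READ},
    "Calendars.ReadWrite": {READ, WRITE},
}

# Least privilege: each app requests only the scope it needs.
APP_SCOPES = {
    "App1": ["Calendars.Read"],
    "App2": ["Calendars.ReadWrite"],
}


def effective_actions(app_scopes, user_actions):
    """Return the actions allowed by BOTH the app's scopes and the user."""
    granted = set()
    for scope in app_scopes:
        granted |= SCOPE_ACTIONS[scope]
    return granted & set(user_actions)


if __name__ == "__main__":
    full_user = {READ, WRITE}
    print(effective_actions(APP_SCOPES["App1"], full_user))
    print(effective_actions(APP_SCOPES["App2"], full_user))
```

With application permissions there would be no signed-in user in the intersection, which is why that option is considered over-privileged for this scenario.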

You have 12 Azure subscriptions and three projects. Each project uses resources across multiple subscriptions.

You need to use Microsoft Cost Management to monitor costs on a per-project basis. The solution must minimize administrative effort.

Which two components should you include in the solution? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

A. budgets

B. resource tags

C. custom role-based access control (RBAC) roles

D. management groups

E. Azure boards


Summary:

The goal is to track costs per project across multiple subscriptions with minimal effort. Microsoft Cost Management can group and analyze costs based on Azure resource tags. By applying a consistent tag (e.g., "Project") to all resources in all 12 subscriptions, you can easily filter and report costs by project. Budgets can then be created that are scoped to these tags to monitor spending and generate alerts for each individual project, providing a centralized and automated monitoring solution.

Correct Options:

A. budgets:

Budgets in Cost Management are used to proactively monitor and alert on spending. After costs are categorized using tags, you can create budgets that are filtered by a specific tag value (e.g., "Project=ProjectA"). This allows you to set financial thresholds and receive notifications for each project individually, ensuring cost oversight without manual tracking.

B. resource tags:

Resource tags are key-value pairs (e.g., "Project: Alpha") applied to Azure resources. They are the fundamental component for categorizing costs by project in this scenario. Once applied, Cost Management can group all costs based on the tag, regardless of which subscription the resource resides in. This provides a unified, cross-subscription view of spending for each project and minimizes the administrative effort of creating complex reporting structures.

Incorrect Options:

C. custom role-based access control (RBAC) roles:

Custom RBAC roles are used to define fine-grained permissions for managing Azure resources. They control who can do what but do not have any inherent functionality for grouping or tracking costs. While you might use them to control who can apply tags, they are not a component for cost monitoring itself.

D. management groups:

Management groups are used to efficiently manage access, policies, and compliance across multiple subscriptions. While you can view costs for a management group, they are a hierarchical governance structure, not a project-based categorization tool. Organizing 12 subscriptions by three projects would be inflexible and not minimize effort if subscriptions are shared across projects.

E. Azure boards:

Azure Boards is a service in Azure DevOps for planning, tracking, and discussing work. It is a project management and agile planning tool and is completely unrelated to financial tracking and cost management in Azure.

Reference:

Microsoft Learn, "Use tags to organize your Azure costs": https://learn.microsoft.com/en-us/azure/cost-management-billing/costs/cost-analysis-common-uses#group-and-filter-by-resource-tags

Microsoft Learn, "Create and manage Azure budgets": https://learn.microsoft.com/en-us/azure/cost-management-billing/costs/tutorial-acm-create-budgets
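The tag-plus-budget approach can be sketched in plain Python. This is only an illustration of the grouping logic, not the Cost Management API: the record layout, subscription names, cost figures, and budget amounts are all hypothetical.

```python
from collections import defaultdict


def cost_by_tag(records, tag_key="Project"):
    """Sum costs per tag value, regardless of which subscription holds the resource."""
    totals = defaultdict(float)
    for record in records:
        project = record["tags"].get(tag_key, "untagged")
        totals[project] += record["cost"]
    return dict(totals)


def over_budget(totals, budgets):
    """Return the projects whose spend exceeds their budget threshold."""
    return sorted(p for p, spent in totals.items() if p in budgets and spent > budgets[p])


# Hypothetical cost records spread across several subscriptions.
RECORDS = [
    {"subscription": "sub-01", "cost": 120.0, "tags": {"Project": "Alpha"}},
    {"subscription": "sub-07", "cost": 80.0, "tags": {"Project": "Alpha"}},
    {"subscription": "sub-03", "cost": 50.0, "tags": {"Project": "Beta"}},
    {"subscription": "sub-03", "cost": 10.0, "tags": {}},
]

if __name__ == "__main__":
    totals = cost_by_tag(RECORDS)
    print(totals)
    print(over_budget(totals, {"Alpha": 150.0, "Beta": 100.0}))
```

The untagged bucket shows why consistent tagging matters: any resource without the tag falls out of the per-project view.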

You have to deploy an Azure SQL database named db1 for your company. The database must meet the following security requirements.

When IT help desk supervisors query a database table named customers, they must be able to see the full number of each credit card.

When IT help desk operators query a database table named customers, they must only see the last four digits of each credit card number.

A column named Credit Card rating in the customers table must never appear in plain text in the database system. Only client applications must be able to decrypt the information that is stored in this column.

Which of the following can be implemented for the Credit Card rating column security requirement?

A. Always Encrypted

B. Azure Advanced Threat Protection

C. Transparent Data Encryption

D. Dynamic Data Masking

Summary

The requirement for the "Credit Card rating" column is that it must never appear in plaintext within the database system, and only client applications should be able to decrypt it. This is a classic use case for client-side encryption, where the database engine itself never handles the unencrypted data or the encryption keys. This ensures that even highly privileged database administrators cannot see the sensitive data, as decryption only happens within the trusted client application.

Correct Option

A. Always Encrypted

Always Encrypted is specifically designed for this scenario. It ensures that sensitive data is encrypted inside the client application before being sent to the database. The Azure SQL Database service only ever stores and interacts with the encrypted data, never the plaintext values or the encryption keys. This meets the requirement that the column "must never appear in plain text in the database system," as decryption is exclusively performed by the authorized client application using a key stored in a trusted key store like Azure Key Vault.

Incorrect Options

B. Azure Advanced Threat Protection (now Microsoft Defender for SQL)

This is a security monitoring and threat detection service. It helps identify anomalous activities and potential attacks, such as SQL injection or access from suspicious locations. It does not provide a mechanism for encrypting specific columns of data. Its purpose is alerting, not data encryption.

C. Transparent Data Encryption (TDE)

TDE performs encryption at rest for the entire database, including its data and log files. It protects against threats involving the theft of physical storage media. However, the SQL Database engine still decrypts the data into memory when it is queried. This means the data appears in plaintext within the database system, which violates the specific requirement stated for the "Credit Card rating" column.

D. Dynamic Data Masking

Dynamic Data Masking is a data obfuscation feature that hides sensitive data in the query results based on the user's permissions. It does not encrypt the data at rest. The underlying data in the database is still stored in plaintext, which directly contradicts the requirement for the column to never appear in plaintext within the database system. DDM is the technology that would be used to fulfill the requirement for the help desk operators seeing only the last four digits of the credit card number.

Reference

Microsoft Learn, "Always Encrypted": https://learn.microsoft.com/en-us/sql/relational-databases/security/encryption/always-encrypted-database-engine - This document explains how Always Encrypted separates data owners from data managers by allowing clients to encrypt sensitive data inside client applications without sharing the encryption keys with the database engine.
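The masking behavior described for the help desk operators can be sketched as follows. This mimics what a masked query result looks like and is not how Dynamic Data Masking is configured in Azure SQL; the role names and the `mask_card` and `view_card` helpers are hypothetical. Note that the stored value stays in plaintext here, which is exactly why masking alone does not satisfy the "Credit Card rating" requirement.

```python
def mask_card(number: str, visible: int = 4) -> str:
    """Replace every digit except the last `visible` ones with 'X', keeping separators."""
    total_digits = sum(ch.isdigit() for ch in number)
    seen = 0
    out = []
    for ch in number:
        if ch.isdigit():
            seen += 1
            out.append(ch if seen > total_digits - visible else "X")
        else:
            out.append(ch)
    return "".join(out)


def view_card(number: str, role: str) -> str:
    """Supervisors see the full number; every other role sees the masked form."""
    return number if role == "supervisor" else mask_card(number)


if __name__ == "__main__":
    card = "4111-1111-1111-1234"  # made-up test number
    print(view_card(card, "supervisor"))
    print(view_card(card, "operator"))
```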

You have data files in Azure Blob Storage.

You plan to transform the files and move them to Azure Data Lake Storage.

You need to transform the data by using mapping data flow.

Which service should you use?

A. Azure Data Box Gateway

B. Azure Databricks

C. Azure Data Factory

D. Azure Storage Sync

Summary

The requirement is to transform data files using a specific feature called mapping data flow. Mapping data flow is a visually designed data transformation feature that is native to Azure Data Factory (ADF). It allows you to build code-free or code-friendly ETL (Extract, Transform, Load) logic that ADF executes on a managed Spark cluster. This directly fulfills the need to transform data from Azure Blob Storage and move it to Azure Data Lake Storage, all within a single, integrated service.

Correct Option

C. Azure Data Factory

Azure Data Factory is Azure's cloud-based data integration service. Its core purpose is to orchestrate and execute data movement and transformation workflows. The mapping data flow feature is a dedicated component of ADF, providing a graphical interface to build complex data transformation logic without writing Spark code. ADF can natively connect to both Azure Blob Storage as a source and Azure Data Lake Storage as a sink, making it the definitive choice for this task.

Incorrect Options

A. Azure Data Box Gateway

Azure Data Box Gateway is a virtual appliance that runs on-premises and transfers data over the network into Azure Blob Storage or Azure Files. It is a data ingestion tool only. It does not possess any data transformation capabilities and cannot execute mapping data flows.

B. Azure Databricks

While Azure Databricks is a powerful analytics platform based on Apache Spark and is excellent for complex data transformation, it does not have a native feature called "mapping data flow." Mapping data flow is a specific product feature of Azure Data Factory. You could perform the transformation in Databricks by writing Spark code (PySpark, Scala, etc.), but the question specifically asks for the service that uses "mapping data flow."

D. Azure Storage Sync

Azure Storage Sync is a service used to centralize file shares in Azure Files and enable cloud tiering and multi-site synchronization with Windows Server file servers. It is for file replication and storage management, not for data transformation. It has no ETL or data processing capabilities.

Reference

Microsoft Learn, "Mapping Data Flow in Azure Data Factory": https://learn.microsoft.com/en-us/azure/data-factory/concepts-data-flow-overview - This document explains that mapping data flows provide a way to design data transformation logic graphically in ADF, which is then executed as Spark jobs without the need to manage clusters or write code.

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are designing an Azure solution for a company that has four departments. Each department will deploy several Azure app services and Azure SQL databases.

You need to recommend a solution to report the costs for each department to deploy the app services and the databases. The solution must provide a consolidated view for cost reporting that displays cost broken down by department.

Solution: Create a separate resource group for each department. Place the resources for each department in its respective resource group.

Does this meet the goal?

A. Yes

B. No

Explanation:

Resource groups are management containers, not a native cost-reporting or chargeback mechanism. While grouping a department's resources together physically is good practice, it does not, by itself, create a consolidated cost report broken down by that department. Cost analysis requires a cost-related identifier like a Tag or a Billing Scope (e.g., a department-specific subscription).

Correct Option:

B. No

The core issue is that this solution uses resource groups for organization, not for cost reporting.

While you can filter costs by resource group in Cost Analysis, this is a technical filter. The design lacks the critical step of tagging all resources in each group with a consistent tag (e.g., Department=Finance) to enable a reliable, consolidated financial view across subscriptions or management groups.

Incorrect Option:

A. Yes

This answer incorrectly assumes that physically grouping resources is sufficient for financial reporting. Without a policy to enforce cost-related metadata (tags) or a dedicated billing structure per department, costs cannot be accurately or easily reported on by department, especially if resources exist across multiple subscriptions.

Reference:

Microsoft Learn: Use tags to organize your Azure resources and management hierarchy - Tags are the primary method for consolidating costs across resources for reporting purposes.

Microsoft Learn: Analyze costs with cost analysis - Demonstrates that grouping/filtering in the Cost Management tool is done by Tag, Resource Group, etc.

You have to design a data engineering solution for your company. The company currently has an Azure subscription. They also have application data hosted in a Microsoft SQL Server database in their on-premises data center. They want to implement the following requirements:

Transfer transactional data from the on-premises SQL Server to a data warehouse in Azure. Data needs to be transferred every night as a scheduled job.

A managed Spark cluster needs to be in place for data engineers to perform analysis on the data stored in the SQL data warehouse. The data engineers should be able to develop notebooks in Scala, R, and Python.

A data lake store needs to be in place for the ingestion of data from multiple data sources.

Which of the following would you use for hosting the data warehouse in Azure?

A. Azure Data Factory

B. Azure Databricks

C. Azure Data Lake Gen2 Storage accounts

D. Azure Synapse Analytics

Summary

The question asks for the service to host the data warehouse in Azure. A data warehouse is a specialized relational database designed for analytical queries and reporting on large volumes of data. While Azure Data Lake is for raw data storage and Azure Databricks is for data processing, the service specifically built as a cloud-native, large-scale data warehouse is Azure Synapse Analytics (formerly SQL Data Warehouse). It is a core component of the modern data warehouse pattern in Azure, capable of running both traditional SQL-based data warehousing workloads and integrated Spark analytics.

Correct Option

D. Azure Synapse Analytics

Azure Synapse Analytics is a limitless analytics service that brings together enterprise data warehousing and Big Data analytics. It provides a dedicated SQL pool engine specifically designed for running high-performance, petabyte-scale data warehousing workloads. It directly fulfills the requirement to host the data warehouse where transactional data from the on-premises SQL Server will be loaded. Furthermore, it natively integrates with managed Spark pools (allowing notebooks in Scala, R, and Python) and uses Azure Data Lake Storage Gen2 as its underlying storage, meeting all the other stated needs in a unified platform.

Incorrect Options

A. Azure Data Factory

Azure Data Factory is an orchestration and data integration service. It is the correct tool for building the scheduled job to transfer data every night from the on-premises SQL Server, but it is not a data storage or warehousing service itself. It moves and transforms data between source and destination systems but does not host a data warehouse.

B. Azure Databricks

Azure Databricks is a managed Apache Spark platform used for data engineering, data science, and machine learning. It is the ideal environment for the data engineers to perform analysis using notebooks. However, it is a data processing engine, not a data warehouse. While it can query data, it does not provide the same optimized, SQL-based, massively parallel processing (MPP) architecture that a dedicated data warehouse like Synapse Analytics offers.

C. Azure Data Lake Gen2 Storage accounts

Azure Data Lake Storage Gen2 is the foundational data lake storage service in Azure. It is designed for storing vast amounts of raw data in its native format and is a critical part of the solution for ingesting data from multiple sources. However, it is a file system, not a relational data warehouse. While Synapse Analytics uses it for storage, the data warehouse service itself that provides the SQL query engine is Synapse Analytics, not the storage account.

Reference

Microsoft Learn, "What is Azure Synapse Analytics?": https://learn.microsoft.com/en-us/azure/synapse-analytics/overview-what-is - This document explains that Azure Synapse Analytics is an enterprise analytics service that accelerates time to insight across data warehouses and Big Data systems, combining SQL and Spark technologies.

A company has an existing web application that runs on virtual machines (VMs) in Azure.

You need to ensure that the application is protected from SQL injection attempts and uses a layer-7 load balancer. The solution must minimize disruption to the code for the existing web application.

What should you recommend? To answer, drag the appropriate values to the correct items.

Each value may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

Summary

The requirement is to protect from SQL injection (a web attack) and use a Layer-7 load balancer with minimal code disruption. Azure Application Gateway is a web traffic load balancer that operates at OSI Layer 7 (HTTP/HTTPS). It has an integrated, pre-configured Web Application Firewall (WAF) SKU that protects web applications from common vulnerabilities like SQL injection without requiring any changes to the application code itself. Deploying it in front of the VMs fulfills both core requirements seamlessly.

Correct Option Justification

Azure service: Azure Application Gateway

Azure Application Gateway is a Layer-7 load balancer. It is the only service listed that natively provides both advanced HTTP/S routing (like URL-based routing) and an integrated Web Application Firewall (WAF) in a single product. Deploying it in front of the existing VMs acts as a secure entry point, inspecting all incoming web traffic for threats like SQL injection before it reaches the application, thus meeting both security and load balancing requirements without code changes.

Feature: Web Application Firewall (WAF)

Web Application Firewall (WAF) is a feature of Application Gateway (WAF_v2 SKU) that provides centralized, platform-level protection for your web applications from common exploits and vulnerabilities. It uses rule sets from the OWASP core rule set to automatically defend against attacks like SQL injection and cross-site scripting. Enabling WAF on the Application Gateway is the direct method to fulfill the requirement to "protect the application from SQL injection attempts."

Incorrect Option Explanations

Azure Load Balancer:

This is a Layer-4 (TCP/UDP) load balancer. It is excellent for distributing non-HTTP traffic or for high-performance forwarding but lacks the ability to inspect HTTP content for SQL injection attacks. It does not have a WAF feature.

Azure Traffic Manager:

This is a DNS-based traffic load balancer that works at the DNS level. It directs users to the closest or healthiest endpoint globally but does not terminate the connection or inspect the HTTP traffic for attacks. It is ineffective for protecting against SQL injection.

SSL offloading:

This is a feature of Application Gateway, not a service. While Application Gateway can perform SSL offloading, the primary requirement is protection from SQL injection, which is achieved by the WAF feature.

URL-based content routing:

This is another feature of Application Gateway. It allows routing traffic to different backend pools based on the URL path. While useful, it is not the feature that provides security against SQL injection.

Reference

Microsoft Learn, "Web Application Firewall on Azure Application Gateway": https://learn.microsoft.com/en-us/azure/web-application-firewall/ag/ag-overview

Microsoft Learn, "Azure Application Gateway overview": https://learn.microsoft.com/en-us/azure/application-gateway/overview
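The idea of inspecting Layer-7 traffic in front of an unmodified application can be sketched with a toy request filter. This is a deliberately simplified illustration: the three patterns below are hypothetical examples and are far cruder than the OWASP core rule set that a real WAF uses.

```python
import re
from urllib.parse import unquote_plus

# Toy patterns for illustration only; not the OWASP core rule set.
SQLI_PATTERNS = [
    re.compile(r"(?i)\bunion\b.+\bselect\b"),
    re.compile(r"(?i)'\s*or\s+'?\d+'?\s*=\s*'?\d+"),
    re.compile(r"(?i);\s*drop\s+table\b"),
]


def is_suspicious(query_string: str) -> bool:
    """URL-decode the query string and check it against the toy patterns."""
    decoded = unquote_plus(query_string)
    return any(pattern.search(decoded) for pattern in SQLI_PATTERNS)


if __name__ == "__main__":
    for qs in ("id=42", "id=1%27%20OR%20%271%27%3D%271", "id=1;DROP TABLE users"):
        verdict = "block" if is_suspicious(qs) else "allow"
        print(f"{verdict}: {qs}")
```

Because the check runs on the HTTP request before it is forwarded, the backend application needs no code changes. A Layer-4 load balancer never sees the decoded query string and so cannot apply this kind of rule.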

You plan to deploy an app that will use an Azure Storage account.

You need to deploy the storage account. The solution must meet the following requirements:

• Store the data of multiple users.

• Encrypt each user's data by using a separate key.

• Encrypt all the data in the storage account by using Microsoft keys or customer-managed keys.

What should you deploy?

A. files in a general purpose v2 storage account

B. blobs in an Azure Data Lake Storage Gen2 account

C. files in a premium file share storage account

D. blobs in a general purpose v2 storage account

Summary

The core requirement is to store data for multiple users and encrypt each user's data with a separate key, while also allowing the entire storage account to be encrypted with Microsoft-managed or customer-managed keys. This points to a need for object-level encryption with unique keys per security principal (user). Azure Blob Storage supports this natively through a feature called customer-provided keys or, alternatively, through an application design that uses Azure Key Vault and a different encryption key for each user. While Azure Data Lake Storage Gen2 is built on Blob Storage, the standard Blob Storage in a GPv2 account is the fundamental service that provides the granular, per-user key management capability described.

Correct Option

D. blobs in a general purpose v2 storage account

A general-purpose v2 (GPv2) storage account is the standard and most feature-complete account kind for storing blob data. It supports encryption at rest using either Microsoft-managed keys or customer-managed keys in Azure Key Vault for the entire account. Crucially, it also provides the granularity needed to encrypt a specific user's data with a separate key. This can be achieved by supplying each user's own encryption key on the request (customer-provided key) or by designing an application that uses a unique Key Vault key for each user, which the application then uses to encrypt that user's blobs before uploading.

Incorrect Options

A. files in a general purpose v2 storage account & C. files in a premium file share storage account

Azure Files (both standard and premium tiers) is designed for SMB file shares, presenting a single, unified share that multiple users access. While the entire share is encrypted, it does not support the granular, per-user encryption key requirement. All data in the share is encrypted with the same underlying key (Microsoft-managed or customer-managed). You cannot assign a unique encryption key to files belonging to a specific user within the same share.

B. blobs in an Azure Data Lake Storage Gen2 account

This is a strong distractor. Azure Data Lake Storage Gen2 is a set of capabilities for big data analytics built on top of a GPv2 storage account with a hierarchical namespace enabled. It inherits the same encryption capabilities as the base Blob Storage. However, the question is asking for the fundamental service to deploy. The core capability to encrypt data with separate keys per user is a feature of the underlying Blob Storage service. While you could implement this with ADLS Gen2, the most direct and fundamental answer is a standard GPv2 account for blobs, as ADLS Gen2 is a specific configuration of it for analytics workloads.

Reference

Microsoft Learn, "Provide an encryption key in a request to Blob storage": https://learn.microsoft.com/en-us/rest/api/storageservices/encryption-customer-provided-keys. This documents the customer-provided key feature, which lets a client specify a unique key per request, enabling the per-user encryption scenario.

Designing Microsoft Azure Infrastructure Solutions Practice Exam Questions

These AZ-305 practice exam questions with explanations help candidates learn how to design scalable and secure Azure infrastructure solutions. Topics include architecture design, governance, networking, identity, and disaster recovery. Each explanation provides insight into design decisions and best practices, helping learners understand complex scenarios. This approach strengthens problem-solving and architectural thinking. By practicing these questions, candidates can improve their ability to design enterprise-grade solutions and confidently prepare for the certification exam.

AZ-305: What This Exam Is About

AZ-305 (Designing Microsoft Azure Infrastructure Solutions) is a design-focused exam. You’re evaluated on how well you can choose the right architecture—secure, resilient, cost-aware, and aligned with requirements—more than on clicking through the portal.

The Design Skills You’ll Need

Translating business needs into technical requirements and constraints

Picking the best compute approach (VMs, containers, PaaS) for workload goals

Designing identity and security: least privilege, segmentation, governance

Networking architecture: hub-spoke, private connectivity, DNS strategy

Storage and data choices: performance tiers, redundancy, DR approach

Reliability + performance: availability, scaling, caching, monitoring

Cost management: trade-offs, sizing, reservations, lifecycle planning

How to Study Without Getting Lost

Stop trying to “cover everything.” Instead, practice design thinking:

Identify requirements (availability, latency, compliance, budget).

Spot constraints (region, legacy dependencies, data residency).

Propose two options, then justify the best one with trade-offs.

Common Traps Candidates Hit

Choosing services you know, not what the scenario demands

Ignoring governance (Policy, management groups, landing zone thinking)

Over-engineering: complex answers often lose to simpler, secure designs

Missing DR/RPO/RTO details hidden in the question

Practice That Moves the Score

AZ-305 test questions are wordy and scenario-heavy, so timed practice matters. A full-length Designing Microsoft Azure Infrastructure Solutions practice test can help you get comfortable with design-style wording, improve elimination skills, and expose the weak areas you keep overlooking.

Success Stories From Our Clients

Preparation for Microsoft Certified: Azure Solutions Architect Expert (AZ-305) felt far more manageable with MSmcqs.com. The practice test questions focused on architecture design, governance, networking, and security scenarios similar to the real exam.

Daniel Hughes | United Kingdom