Topic 5: Misc. Questions

You have multiple on-premises locations. The locations host IoT endpoints that generate real-time telemetry data. You have an Azure subscription. You need to process the telemetry data and provide real-time insights. The solution must minimize development effort. What should you use?

A. Azure Data Factory

B. Azure Data Lake Analytics

C. Log Analytics

D. Azure Stream Analytics

Explanation:

Why this is correct

Real-time processing: Azure Stream Analytics (ASA) is a fully managed, serverless stream-processing service designed for low-latency, real-time analytics on telemetry. It runs continuous queries over event streams and can detect patterns, anomalies, and conditions as data arrives.

Minimal development effort: ASA uses a SQL-like query language with built-in windowing, aggregation, and temporal functions, so you can implement real-time rules and detection logic quickly without building and managing custom streaming infrastructure.

Integration with IoT ingestion services and sinks: ASA natively reads from Event Hubs or IoT Hub (common ingestion endpoints for on-premises IoT gateways) and can output to many sinks (Power BI, SQL Database, Cosmos DB, Service Bus, Functions, etc.) to trigger actions or store results.

Why the other options are not suitable

A. Azure Data Factory: Designed for orchestrating and running batch ETL/data movement pipelines; not intended for low-latency, continuous stream processing.

B. Azure Data Lake Analytics: A batch analytics service (U-SQL) for large offline jobs; not for real-time telemetry processing.

C. Log Analytics: A log storage and query service (Azure Monitor Logs) for analysis and alerting; it’s not a stream-processing engine and does not perform continuous, low-latency aggregations or pattern detection on live streams.

Implementation sketch

Ingest telemetry from on-prem IoT endpoints into IoT Hub or Event Hubs.

Create an Azure Stream Analytics job that uses the hub as input, implement the detection logic with tumbling or sliding windows or pattern matching, and configure outputs to the appropriate sink (e.g., Service Bus or Azure Functions to trigger actions, SQL Database or Cosmos DB for storage, Power BI for dashboards).

Monitor and scale ASA streaming units as needed.
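To make the windowing idea concrete, the following is a minimal pure-Python sketch of a tumbling-window average. It only simulates what a Stream Analytics tumbling-window aggregation would compute; it is not the ASA query language, and the event fields (timestamp, device ID, temperature) are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical event shape: (timestamp_seconds, device_id, temperature)
Event = Tuple[int, str, float]


def tumbling_window_avg(
    events: Iterable[Event], window_seconds: int
) -> Dict[Tuple[int, str], float]:
    """Average temperature per device for each fixed, non-overlapping window."""
    sums = defaultdict(lambda: [0.0, 0])  # (window_start, device) -> [total, count]
    for ts, device, temp in events:
        # Every event belongs to exactly one window: the one containing its timestamp.
        window_start = (ts // window_seconds) * window_seconds
        bucket = sums[(window_start, device)]
        bucket[0] += temp
        bucket[1] += 1
    return {key: total / count for key, (total, count) in sums.items()}


events = [(0, "dev1", 20.0), (5, "dev1", 22.0), (12, "dev1", 30.0), (3, "dev2", 18.0)]
averages = tumbling_window_avg(events, window_seconds=10)
```

In the managed service the same result would come from a declarative query over the input stream, which is why no custom streaming code like this needs to be written or hosted.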

Your on-premises network contains an Active Directory Domain Services (AD DS) domain. The domain contains a server named Server1. Server1 contains an app named App1 that uses AD DS authentication. Remote users access App1 by using a VPN connection to the on-premises network.

You have a Microsoft Entra tenant that syncs with the AD DS domain by using Microsoft Entra Connect.

You need to ensure that the remote users can access App1 without using a VPN. The solution must meet the following requirements:

• Ensure that the users authenticate by using Azure Multi-Factor Authentication (MFA).

• Minimize administrative effort.

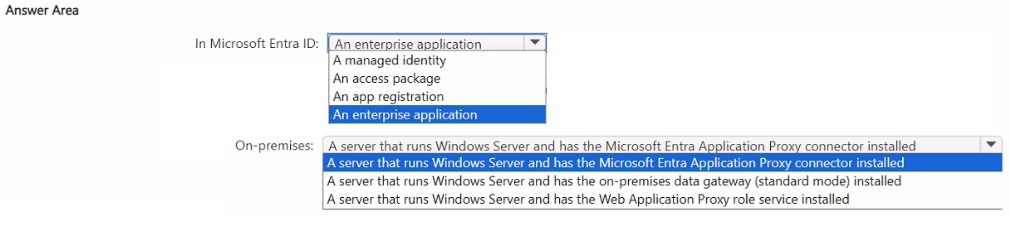

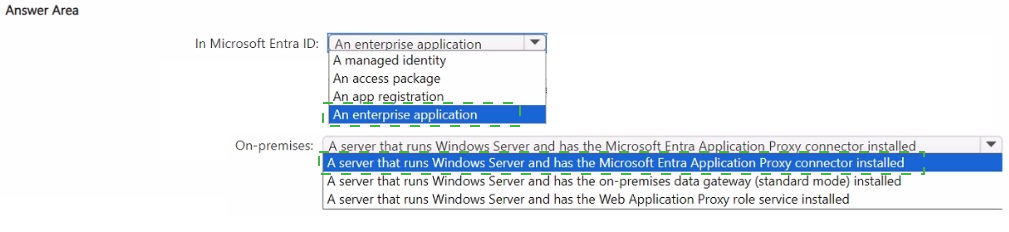

What should you include in the solution? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

This is a classic Microsoft Entra Application Proxy scenario.

Why this combination?

1. Enterprise Application (in Microsoft Entra ID)

You register/publish App1 as an Enterprise Application in the Microsoft Entra admin center.

This enables you to:

Assign users and groups

Configure single sign-on (SSO) by using Integrated Windows Authentication with Kerberos constrained delegation (since App1 uses AD DS authentication)

Apply Conditional Access policies to enforce Azure MFA

This is the correct object type used with Application Proxy.

2. Microsoft Entra Application Proxy Connector (on-premises)

Install the lightweight Application Proxy connector on Server1 (or another Windows server in the domain).

The connector creates an outbound connection to the Microsoft Entra Application Proxy service in the cloud.

External users can then access App1 securely over the internet without a VPN.

No inbound firewall ports need to be opened.

Benefits

Meets both requirements: MFA (via Conditional Access) + no VPN.

Minimizes administrative effort — no need to rewrite the app, move it to Azure, or manage complex networking.

Why the other options are incorrect

Managed Identity / App Registration: Used for application identities and permissions, not for publishing on-premises web apps.

On-premises Data Gateway: Used for Power Platform / Logic Apps connectivity to on-premises data, not for web app publishing.

Web Application Proxy role: The older Windows Server role. It is deployed alongside AD FS and requires inbound firewall ports, so it takes more administrative effort than Microsoft Entra Application Proxy.

This is one of the most common AZ-305 topics related to secure hybrid application access.

You have an on-premises datacenter named Site1 that contains multiple servers.

You have an Azure subscription that contains a virtual network named VNet1. VNet1 contains multiple virtual machines. VNet1 uses the default Azure DNS service. Site1 connects to VNet1 by using a Site-to-Site (S2S) VPN.

You need to recommend a DNS solution that will forward DNS queries from the virtual machines that target the on-premises DNS namespace to the on-premises DNS servers. The solution must minimize administrative effort and costs.

What should you include in the recommendation?

A. Private DNS zone

B. Azure Firewall DNS Proxy

C. an Azure virtual machine that runs the DNS service

D. Private DNS resolver

Explanation:

The requirement is to forward DNS queries from Azure VMs targeting the on-premises DNS namespace to on-premises DNS servers, with minimal effort and cost. Here's why Private DNS resolver is the best fit:

Private DNS Resolver

Azure DNS Private Resolver is a fully managed service designed for hybrid DNS scenarios. For this requirement, you create an outbound endpoint and a DNS forwarding ruleset that is linked to VNet1; the ruleset directs queries for the on-premises domain names to the on-premises DNS servers over the S2S VPN. The virtual machines keep using the default Azure DNS service, which hands matching queries to the resolver's outbound endpoint. (An inbound endpoint is needed only for the opposite direction, when on-premises clients resolve names hosted in Azure.) Because there is no VM to deploy, patch, or scale, administrative effort stays low.

Private DNS Zone

Private DNS zone only provides name resolution within that zone; it does not provide forwarding to external DNS servers.

Azure Firewall DNS Proxy

Azure Firewall DNS Proxy can forward DNS queries but requires deploying and managing an Azure Firewall instance with DNS proxy settings. It is a more expensive and complex solution compared to the lightweight Private DNS resolver.

Azure Virtual Machine Running DNS Service

An Azure virtual machine that runs the DNS service requires deploying, maintaining, patching, and securing an IaaS VM, which demands more administrative effort than a managed service.

Conclusion

The Private DNS resolver directly fulfills the forwarding need, is natively integrated with Azure DNS, and keeps costs and management low.
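The forwarding decision described above can be illustrated with a small pure-Python simulation. This is only a sketch of how a forwarding ruleset might select target servers by most-specific domain suffix; the domain names and IP addresses are hypothetical, and the function is not part of any Azure SDK.

```python
from typing import Dict, List, Optional


def pick_forwarders(query_name: str, rules: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return the target DNS servers of the most specific matching rule, or None."""
    name = query_name.lower().rstrip(".") + "."  # normalize to a fully qualified name
    best_suffix = ""
    best_targets = None
    for domain, targets in rules.items():
        suffix = domain.lower().rstrip(".") + "."
        matches = name == suffix or name.endswith("." + suffix)
        # Prefer the longest (most specific) matching domain suffix.
        if matches and len(suffix) > len(best_suffix):
            best_suffix, best_targets = suffix, targets
    return best_targets


# Hypothetical ruleset: on-premises namespaces mapped to on-premises DNS servers.
rules = {
    "corp.contoso.com.": ["10.0.0.4:53"],
    "lab.corp.contoso.com.": ["10.0.1.4:53"],
}

onprem = pick_forwarders("app1.corp.contoso.com", rules)
lab = pick_forwarders("db.lab.corp.contoso.com.", rules)
public = pick_forwarders("www.microsoft.com", rules)  # no rule: default Azure DNS handles it
```

Queries that match no rule return `None` here, which corresponds to the name being resolved by the default Azure DNS service rather than being forwarded on-premises.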

References

What is Azure DNS Private Resolver?

Quickstart: Create an Azure DNS Private Resolver

You have an Azure subscription that contains 100 virtual machines in the North Europe Azure region. You replicate the virtual machines to the West Europe region by using Azure Site Recovery. You plan to perform disaster recovery testing once a month. The testing will be performed during an eight-hour period, during which the virtual machines will be accessed, and their functionality validated. The virtual machines will be shut down when testing is not being performed. You need to estimate the costs of the Site Recovery solution per month. Which costs should the estimate include?

A. the virtual machine compute costs for only eight hours, the virtual machine storage costs for the entire month, and the Site Recovery license costs per protected virtual machine for the entire month

B. the virtual machine compute costs for the entire month, the virtual machine storage costs for all the hours each month, and the Site Recovery license costs per protected virtual machine for the entire month

C. the virtual machine compute costs for only eight hours, the virtual machine storage costs for only eight hours, and the Site Recovery license costs per protected virtual machine for only eight hours

D. the virtual machine compute costs for only eight hours, the virtual machine storage costs for only eight hours, and the Site Recovery license costs per protected virtual machine for the entire month

Explanation:

When using Azure Site Recovery for virtual machine replication, the cost structure is divided into three primary components:

Site Recovery License Costs (Per Month)

Even when you are not actively testing or failing over, Azure charges a license fee for each protected virtual machine to maintain the replication engine and orchestration. For Azure VMs, this is typically billed per protected instance per month.

Virtual Machine Storage Costs (Per Month)

To ensure a low Recovery Time Objective (RTO), your data must be continuously replicated to the target region (West Europe). You are billed for the storage (managed disks) used by the replica VMs for the entire month, regardless of whether the VMs are powered on.

Virtual Machine Compute Costs (During Testing)

Azure only charges for compute resources (CPU/RAM) when the virtual machines are running. Since the requirement specifies that the VMs will be shut down when testing is not being performed, you only incur compute charges for the eight hours of validation time each month.

Why Other Options are Incorrect

Option B

Option B is incorrect because it includes compute costs for the entire month, which ignores the fact that the VMs are shut down for the vast majority of the time.

Option C

Option C is incorrect because it underestimates both storage and licensing. You must pay for the replica storage all month to keep the data synchronized, and the license is a monthly protection fee.

Option D

Option D is incorrect because it only accounts for eight hours of storage. Replication requires the storage to exist 24/7 to receive data changes from the primary region.
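The cost structure behind answer A can be written out as simple arithmetic. The sketch below uses placeholder rates chosen only to keep the numbers easy to follow; they are assumptions, not real Azure prices, and the function name is hypothetical.

```python
def estimate_monthly_asr_cost(
    vm_count: int,
    test_hours: float,
    compute_rate_per_vm_hour: float,
    storage_per_vm_month: float,
    license_per_vm_month: float,
) -> dict:
    """Estimate monthly cost: compute only while testing, storage and license all month."""
    compute = vm_count * test_hours * compute_rate_per_vm_hour  # billed only while VMs run
    storage = vm_count * storage_per_vm_month                   # replica disks exist all month
    license_fee = vm_count * license_per_vm_month               # per protected VM, all month
    return {
        "compute": compute,
        "storage": storage,
        "license": license_fee,
        "total": compute + storage + license_fee,
    }


# Placeholder rates (assumptions for illustration only).
estimate = estimate_monthly_asr_cost(
    vm_count=100,
    test_hours=8,
    compute_rate_per_vm_hour=0.5,
    storage_per_vm_month=5.0,
    license_per_vm_month=25.0,
)
```

With these placeholder rates, compute is the smallest of the three components, which reflects the fact that the test VMs run for only eight hours while storage and licensing accrue for the whole month.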

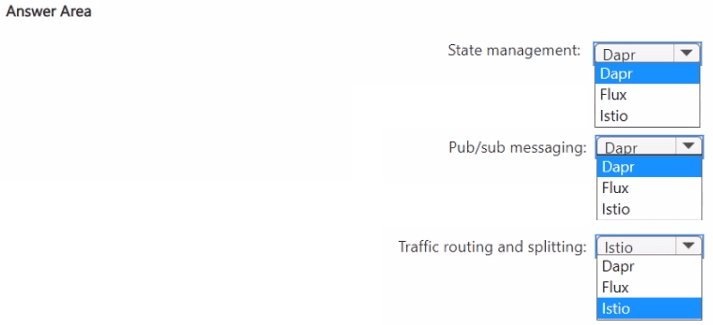

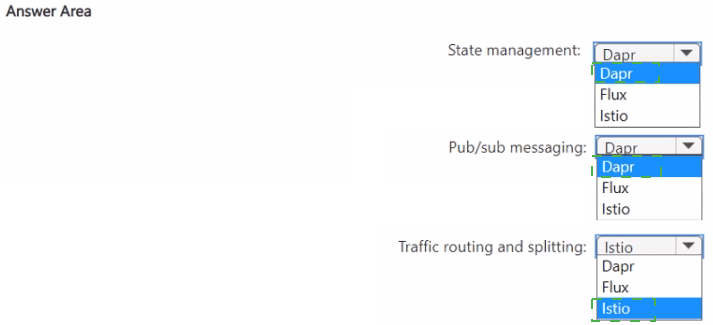

You plan to deploy multiple containerized microservice-based apps to Azure Kubernetes Service (AKS).

You need to recommend a solution that implements the following functions:

• State management

• Pub/sub messaging

• Traffic routing and splitting

The solution must minimize administrative effort.

What should you include in the recommendation for each function? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

To recommend a solution for your Azure Kubernetes Service (AKS) microservices deployment that minimizes administrative effort, you should use a combination of Dapr and Istio.

1. State Management: Dapr

Functionality: Dapr (Distributed Application Runtime) provides a dedicated building block for state management. It allows microservices to store and retrieve state from various supported stores (like Redis, Cosmos DB, or Azure SQL) using a simple key/value API.

Administrative Effort: It abstracts the complexity of SDKs and connection management from the application code, allowing developers to switch state stores without changing code.

2. Pub/Sub Messaging: Dapr

Functionality: Dapr also includes a building block for Publish & Subscribe messaging. It enables event-driven architectures where services can communicate asynchronously.

Administrative Effort: Like state management, Dapr provides a uniform API for pub/sub. This means you can use Azure Service Bus, Event Hubs, or Redis as the underlying broker while the application remains decoupled from the specific infrastructure implementation.
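As a rough illustration of the uniform API, the sketch below builds the HTTP requests an app could send to its local Dapr sidecar for state and pub/sub. It assumes the sidecar listens on the default HTTP port 3500; the component names (`statestore`, `orderpubsub`), the topic, and the payloads are hypothetical, and the code only constructs the requests without sending them.

```python
import json
from typing import Any, Tuple

# Assumes the Dapr sidecar's default HTTP port (3500) on localhost.
DAPR_BASE = "http://localhost:3500/v1.0"


def build_save_state_request(store_name: str, key: str, value: Any) -> Tuple[str, str]:
    """Return (url, json_body) for saving one key/value pair to a Dapr state store."""
    url = f"{DAPR_BASE}/state/{store_name}"
    body = json.dumps([{"key": key, "value": value}])  # the state API takes a list of items
    return url, body


def build_publish_request(pubsub_name: str, topic: str, event: Any) -> Tuple[str, str]:
    """Return (url, json_body) for publishing an event to a topic via Dapr pub/sub."""
    url = f"{DAPR_BASE}/publish/{pubsub_name}/{topic}"
    return url, json.dumps(event)


# Hypothetical component names and payloads.
state_url, state_body = build_save_state_request("statestore", "order-42", {"status": "paid"})
pub_url, pub_body = build_publish_request("orderpubsub", "orders", {"orderId": 42})
```

The application code stays the same whether the `statestore` component is backed by Redis or Cosmos DB; only the Dapr component configuration changes.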

3. Traffic Routing and Splitting: Istio

Functionality: Istio is a powerful service mesh designed specifically for managing the network layer of Kubernetes clusters. It excels at traffic routing (directing traffic based on headers, paths, etc.) and traffic splitting (shifting percentages of traffic between different versions of a service for Canary or Blue/Green deployments).

Administrative Effort: In AKS, you can use the Istio-based service mesh add-on, which is a managed offering. This significantly reduces administrative overhead by handling the installation, upgrades, and lifecycle management of the mesh for you.
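Weighted traffic splitting can be pictured with a short simulation. The sketch below is not Istio configuration and does not reproduce how the mesh selects a backend; it assigns each request to a version by hashing a hypothetical request ID into 100 buckets, purely to show what a 90/10 canary split means.

```python
import hashlib
from typing import Dict


def choose_version(request_id: str, weights: Dict[str, int]) -> str:
    """Pick a service version for a request according to percentage weights."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    # Map the request ID to a stable bucket in the range 0..99.
    bucket = int(hashlib.sha256(request_id.encode("utf-8")).hexdigest(), 16) % 100
    cumulative = 0
    for version, weight in weights.items():
        cumulative += weight
        if bucket < cumulative:
            return version
    raise AssertionError("unreachable when weights sum to 100")


# Hypothetical canary rollout: 90% of traffic to stable, 10% to canary.
split = {"stable": 90, "canary": 10}
counts = {"stable": 0, "canary": 0}
for i in range(10_000):
    counts[choose_version(f"req-{i}", split)] += 1
```

In a service mesh the weights are declared in routing configuration and enforced by the sidecar proxies, so shifting traffic is a configuration change rather than an application change.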

Understanding the Architecture

Flux (the third option in the menu) is a tool for GitOps and continuous delivery, which is used for synchronizing cluster state with a Git repository, but it does not provide application-level state management or traffic splitting.

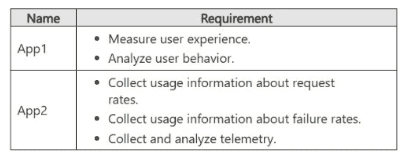

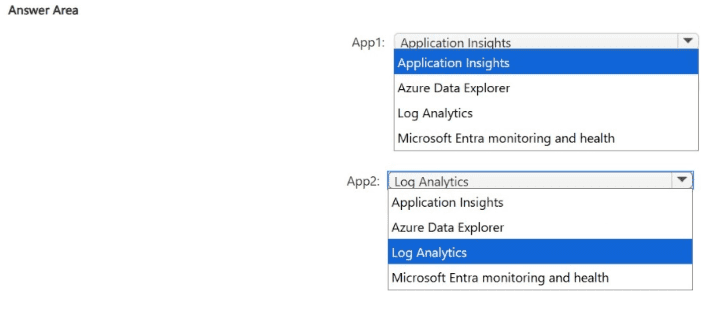

You plan to develop two apps that will be hosted in Azure. The apps must meet the monitoring requirements shown in the following table.

What should you include in the recommendation for each app? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

Recommendation: App1 → Application Insights. App2 → Log Analytics. Application Insights is optimized for measuring user experience and analyzing user behavior; Log Analytics (Azure Monitor Logs) is the right store and analysis surface for request/failure rates and general telemetry aggregation.

Recommendation Table

App → Selected service

App1 → Application Insights

App2 → Log Analytics (Azure Monitor Logs)

Why App1 should use Application Insights

Purpose fit: Application Insights is an application performance monitoring (APM) service that collects client and server telemetry (page views, sessions, custom events, traces, dependencies) and provides user experience and behavior analysis features such as funnels, user flows, cohorts, and session metrics.

Low instrumentation effort: SDKs and the Azure Monitor OpenTelemetry distro make it straightforward to instrument web and mobile apps to capture user interactions and performance with minimal code changes.

Built-in UX analytics: Use Application Insights workbooks and usage experiences to analyze feature adoption, drop-offs, and session quality without moving data to another store.

Why App2 should use Log Analytics

Telemetry aggregation and analysis: Azure Monitor Logs (Log Analytics workspace) is designed to collect, retain, and analyze telemetry at scale (requests, failures, custom logs) and supports Kusto Query Language (KQL) for flexible analysis and alerting.

Request and failure metrics: You can ingest application logs, platform diagnostics, and custom metrics into a Log Analytics workspace and run queries to compute request rates, failure rates, SLOs, and create alerts/dashboards.

Centralized operations: Log Analytics is the canonical store for cross-resource telemetry and is ideal when you need long-term retention, complex queries, or to correlate telemetry across multiple apps or services.
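To show the kind of aggregation such a query performs, here is a minimal pure-Python sketch that computes a failure rate per one-minute bin. It stands in for what a log query over request records would calculate; it is not KQL, and the record fields (`timestamp`, `success`) are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Mapping


def failure_rate_per_minute(records: Iterable[Mapping]) -> Dict[int, float]:
    """Return {minute_index: failed_requests / total_requests} for each one-minute bin."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for record in records:
        minute = record["timestamp"] // 60  # timestamp in seconds since an arbitrary start
        totals[minute] += 1
        if not record["success"]:
            failures[minute] += 1
    return {minute: failures[minute] / totals[minute] for minute in totals}


# Hypothetical request records.
records = [
    {"timestamp": 0, "success": True},
    {"timestamp": 30, "success": False},
    {"timestamp": 61, "success": True},
    {"timestamp": 90, "success": True},
]
rates = failure_rate_per_minute(records)
```

An alert rule would typically compare a value like this against a threshold for each evaluation window.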

Implementation notes and tradeoffs

Integration pattern: Instrument App1 with Application Insights SDK (client + server) to capture UX events; Application Insights stores telemetry in a workspace-backed resource and exposes usage analytics. App2 should send logs/metrics to a Log Analytics workspace (or route Application Insights data to the workspace if you need unified queries).

Correlation: If you need cross-app correlation (traces spanning App1 and App2), configure both to use the same Log Analytics workspace or enable diagnostic settings to route Application Insights data into the workspace.

Costs: Application Insights is optimized for application telemetry and UX analysis; Log Analytics pricing depends on ingestion and retention—use sampling and table plans to control costs.

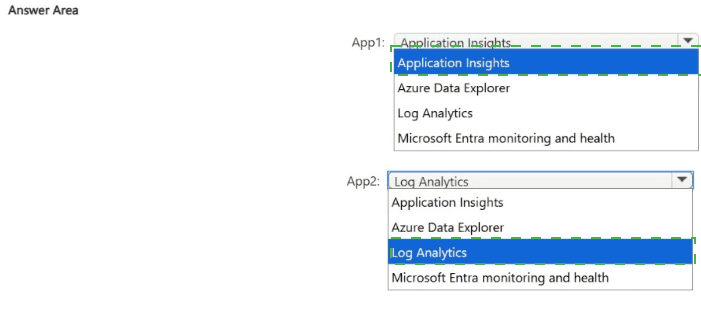

You have an on-premises datacenter and an Azure subscription. The environment contains 100 databases of various types, including Azure SQL Database, Azure SQL Managed Instances, Azure Cosmos DB, Microsoft SQL Server, MySQL and Oracle. Multiple apps access the databases.

You need to recommend a data solution. The solution must meet the following requirements:

• Provide a single searchable catalog of the data in all the databases. The catalog must contain metadata of the data. The solution must minimize storage requirements.

• Provide a central data lake that contains specific data from multiple databases. The solution must include a big data engine that can create machine learning models.

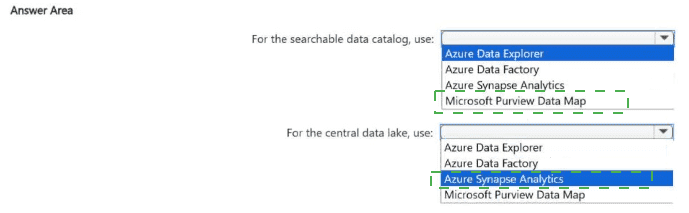

Explanation:

For the hybrid environment and data requirements described, the following Azure services should be included in your recommendation:

1. For the searchable data catalog, use: Microsoft Purview Data Map

Single Searchable Catalog: Microsoft Purview is specifically designed to provide a unified data governance solution. Its Data Map automatically captures and indexes metadata from a wide variety of sources, including on-premises SQL Server and Oracle, as well as Azure-native services like Azure SQL and Cosmos DB.

Metadata Focus: It stores only the metadata (schemas, lineage, classifications) rather than the data itself. This directly addresses the requirement to minimize storage requirements, as you aren't duplicating the actual database content.

Discovery: Users can use the Purview Data Catalog to search and discover data assets across the entire 100-database estate using a central interface.

2. For the central data lake, use: Azure Synapse Analytics

Central Data Lake Integration: Azure Synapse Analytics provides a unified workspace that integrates seamlessly with Azure Data Lake Storage Gen2. It can ingest data from multiple databases using built-in pipelines.

Big Data Engine: Synapse includes multiple compute engines, most notably Apache Spark pools. This is the standard "big data engine" for processing massive datasets at scale.

Machine Learning Models: Synapse Spark pools support common ML libraries (Spark MLlib, Scikit-learn, etc.). Furthermore, Synapse has deep native integration with Azure Machine Learning, allowing you to train, register, and deploy models directly from the Synapse environment.

You have a landing zone that contains multiple Azure subscriptions.

You need to automate the deployment of resources to the subscriptions. The solution must meet the following requirements:

• Support parameters.

• Support versioning and source control.

Which two options can you use? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

A. Azure Resource Manager (ARM) templates

B. Azure Policy

C. Azure Automation

D. Bicep files

E. Azure Functions

Explanation:

Why these are correct

A. Azure Resource Manager (ARM) templates

Azure Resource Manager templates are the foundation of Infrastructure as Code (IaC) in Azure:

Support parameters → allows reusable and flexible deployments

JSON-based → easy to store in source control (Git)

Fully support versioning

Native deployment method across multiple subscriptions
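The points above can be seen in the shape of a template itself. The following sketch uses Python only to assemble and serialize a minimal ARM template and a matching parameters file as JSON; the storage account resource, the parameter names, and the sample values are illustrative assumptions rather than anything prescribed by the question.

```python
import json


def build_storage_template() -> dict:
    """Build a minimal ARM template with two parameters and one storage account."""
    return {
        "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",  # bump alongside source-control commits
        "parameters": {
            "storageAccountName": {"type": "string"},
            "location": {"type": "string", "defaultValue": "[resourceGroup().location]"},
        },
        "resources": [
            {
                "type": "Microsoft.Storage/storageAccounts",
                "apiVersion": "2022-09-01",
                "name": "[parameters('storageAccountName')]",
                "location": "[parameters('location')]",
                "sku": {"name": "Standard_LRS"},
                "kind": "StorageV2",
            }
        ],
    }


def build_parameters_file(values: dict) -> dict:
    """Wrap plain values in the structure of an ARM deployment parameters file."""
    return {
        "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentParameters.json#",
        "contentVersion": "1.0.0.0",
        "parameters": {name: {"value": value} for name, value in values.items()},
    }


template_json = json.dumps(build_storage_template(), indent=2)
# Hypothetical per-subscription value; one parameters file per target environment.
params_json = json.dumps(build_parameters_file({"storageAccountName": "stcontosodev001"}), indent=2)
```

Because both outputs are plain JSON text, they can be committed to a Git repository and reused across subscriptions by swapping only the parameters file.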

D. Bicep files

Bicep is a higher-level abstraction over ARM templates:

Supports parameters and modular design

Designed specifically for clean, readable IaC

Fully compatible with version control systems

Compiles down to ARM templates → same deployment capabilities

👉 In modern Azure design, Bicep is often preferred over raw ARM templates due to simplicity.

Why the other options are incorrect

B. Azure Policy

Azure Policy is for:

Enforcing compliance and rules (e.g., allowed locations, SKUs)

Not for deploying full solutions with parameterized templates

C. Azure Automation

Azure Automation:

Used for runbooks and operational tasks

Can trigger deployments, but not a primary IaC tool with versioned templates

E. Azure Functions

Azure Functions:

Used for event-driven code execution

Not designed for declarative infrastructure deployment with parameterization

Key concept to remember

For automated, repeatable deployments across subscriptions with:

Parameterization

Version control

Infrastructure as Code

👉 Use:

ARM templates (JSON)

Bicep (modern, recommended)

Quick summary

ARM templates → Native IaC, parameterized, versionable ✅

Bicep → Cleaner IaC, same capabilities as ARM ✅

Azure Policy → Governance, not deployment ❌

Automation / Functions → Execution tools, not IaC ❌

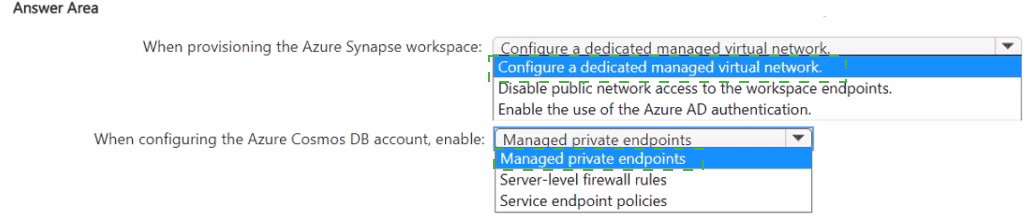

You need to recommend a solution to integrate Azure Cosmos DB and Azure Synapse. The solution must meet the following requirements:

• Traffic from an Azure Synapse workspace to the Azure Cosmos DB account must be sent via the Microsoft backbone network.

• Traffic from the Azure Synapse workspace to the Azure Cosmos DB account must NOT be routed over the internet.

• Implementation effort must be minimized.

What should you include in the recommendation? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

To integrate Azure Cosmos DB and Azure Synapse while ensuring all traffic remains on the Microsoft backbone network and never traverses the public internet, you should use the following configuration:

1. When provisioning the Azure Synapse workspace: Configure a dedicated managed virtual network

Network Isolation: By enabling a Managed Virtual Network (VNet), Azure Synapse isolates the compute resources used for integration (like Spark pools or Integration Runtimes) within a network managed by the Synapse service itself.

Backbone Communication: This is a prerequisite for creating private links from Synapse to other Azure services, ensuring that data movement occurs entirely within the Azure network infrastructure rather than over public endpoints.

2. When configuring the Azure Cosmos DB account, enable: Managed private endpoints

Private Link Technology: A Managed Private Endpoint creates a private IP address within the Synapse Managed VNet that maps directly to your Azure Cosmos DB account.

Security & Routing: This ensures that all communication between the Synapse workspace and Cosmos DB is routed over Azure Private Link. Because the endpoint is "Managed" by Synapse, it minimizes implementation effort by handling the network plumbing automatically within Synapse Studio.

Requirement Match: This satisfies the requirement that traffic must NOT be routed over the internet and must stay on the Microsoft backbone network.

Reference

Microsoft Documentation: Managed private endpoints for Azure Synapse

Microsoft Documentation: Azure Synapse Link for Azure Cosmos DB

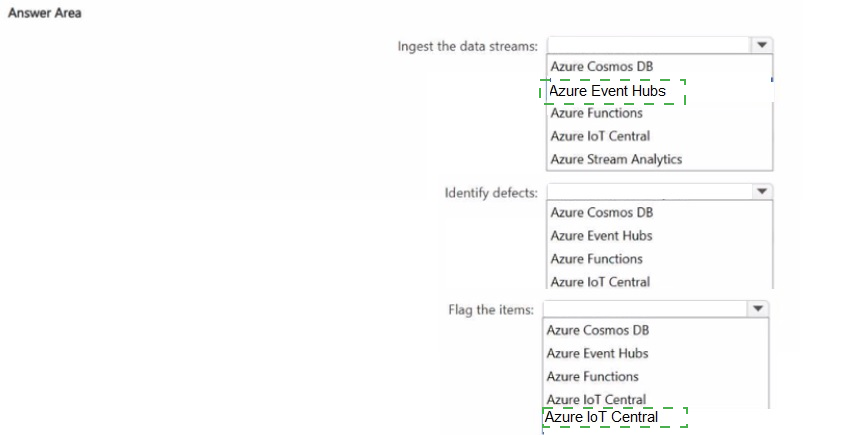

You have a production line that is monitored by using IoT devices.

You are building a project that will process data streams from the IoT devices.

You need to recommend a pipeline solution that will perform the following activities:

• Ingest the data streams from the IoT devices.

• Analyze the data and identify manufacturing defects among items on the production line.

• When a manufacturing defect is identified, flag the item for removal from the production line.

What should you include in the recommendation for each activity? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

Azure Event Hubs (ingest)

Event Hubs is a high-throughput, fully managed telemetry ingestion service designed to receive millions of events per second from devices and apps. It is the right choice to reliably ingest streaming telemetry from many IoT sensors on a production line with minimal operational overhead.

Azure Stream Analytics (identify defects)

Stream Analytics performs real-time stream processing with built-in windowing and pattern detection. It can run continuous queries (tumbling/sliding windows, anomaly detection functions, or call out to ML models) to analyze telemetry and detect defective items as data arrives. It is a low-admin, cost-efficient choice for real-time detection.

Azure IoT Central (flag items)

IoT Central is a managed IoT application platform that provides device management and command/control capabilities. When Stream Analytics detects a defect, it can output an action (for example via a webhook or Azure Function) that triggers IoT Central to send a command or update device state so the item can be removed from the production line. Using IoT Central minimizes custom device-management code and simplifies issuing removal commands.

Implementation sketch

Devices send telemetry to Event Hubs (or route device telemetry into Event Hubs from your IoT gateway).

Create a Stream Analytics job that reads from Event Hubs, applies windowed aggregations / anomaly detection or calls an ML endpoint, and outputs detection events.

On detection, Stream Analytics outputs to a webhook or Azure Function that calls IoT Central (or directly to IoT Central if supported) to issue the removal command or update device state.
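The three stages above (ingest, detect, flag) can be mimicked in a few lines of plain Python. This is only a conceptual sketch: the reading fields, the nominal value, the tolerance, and the callback that stands in for the "flag for removal" action are all hypothetical, and no Azure service is called.

```python
from typing import Callable, Iterable, List, Tuple

# Hypothetical reading shape: (item_id, measured_width_mm)
Reading = Tuple[str, float]


def detect_defects(
    readings: Iterable[Reading],
    nominal: float,
    tolerance: float,
    on_defect: Callable[[str], None],
) -> int:
    """Call on_defect for every item whose measurement is outside tolerance."""
    flagged = 0
    for item_id, value in readings:      # "ingest": consume the stream of readings
        if abs(value - nominal) > tolerance:  # "analyze": simple out-of-tolerance rule
            on_defect(item_id)           # "flag": hand the item to the removal action
            flagged += 1
    return flagged


flagged_items: List[str] = []
count = detect_defects(
    [("item-1", 50.0), ("item-2", 53.2), ("item-3", 49.7)],
    nominal=50.0,
    tolerance=1.0,
    on_defect=flagged_items.append,  # stand-in for the downstream command
)
```

In the recommended pipeline each stage is a managed service, so the rule lives in the stream-processing job and the callback corresponds to the output that triggers the removal command.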
