Free Microsoft DP-700 Practice Test Questions MCQs

Stop wondering if you're ready. Our Microsoft DP-700 practice test is designed to help you pinpoint your knowledge gaps. Validate your skills with Implementing Data Engineering Solutions Using Microsoft Fabric questions that mirror the real exam's format and difficulty. Then build a personalized study plan from your results on these free DP-700 multiple-choice questions, so you can focus your effort where it matters most.

Targeted practice like this helps candidates walk into the Implementing Data Engineering Solutions Using Microsoft Fabric exam better prepared.

Updated On: 7-Apr-2026 | 109 Questions

Implementing Data Engineering Solutions Using Microsoft Fabric

Topic 1: Contoso, Ltd

Implementing Data Engineering Solutions Using Microsoft Fabric Practice Exam Questions

These DP-700 practice questions with detailed explanations help candidates learn data engineering concepts within Microsoft Fabric. Topics include data ingestion, transformation, pipelines, and performance optimization. Each question is followed by a clear explanation that helps learners understand how data workflows are designed and implemented. This approach supports deeper learning rather than memorization. By practicing regularly, candidates can improve their technical understanding, identify knowledge gaps, and gain confidence in building data solutions using Microsoft Fabric while preparing effectively for the certification exam.

Pre-Exam Guide: DP-700 – Microsoft Fabric Data Engineering

Core Exam Focus

The DP-700 validates your ability to design, implement, and operationalize data engineering solutions using Microsoft Fabric. Fabric is Microsoft’s unified analytics platform, not just another Azure service. You must understand end-to-end workflows within the Fabric ecosystem, from data ingestion through transformation, orchestration, and monitoring.

Key Fabric Concepts to Master

OneLake & Lakehouses: Understand OneLake as Fabric’s centralized, unified storage layer (built on Azure Data Lake Storage Gen2). Know how to create and manage Lakehouses, whose tables are stored in Delta Lake format (Parquet files plus a transaction log). Understand how a Lakehouse relates to its SQL analytics endpoint and to semantic models.
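
As a minimal sketch, the helper below assembles an ABFS URI that addresses a Lakehouse's Tables or Files area in OneLake. It assumes the `abfss://<workspace>@onelake.dfs.fabric.microsoft.com/<item>.Lakehouse/...` URI form. It also assumes workspace and item names without spaces or special characters. The function name and the workspace, Lakehouse, and table names in the usage are hypothetical.

```python
ONELAKE_DFS_HOST = "onelake.dfs.fabric.microsoft.com"


def onelake_abfss_path(workspace: str, lakehouse: str, area: str, relative_path: str) -> str:
    """Build an ABFS URI for a path inside a Lakehouse stored in OneLake.

    area is "Tables" (managed Delta tables) or "Files" (unmanaged files).
    Assumes plain names; addressing items by GUID is not covered here.
    """
    if area not in ("Tables", "Files"):
        raise ValueError("area must be 'Tables' or 'Files'")
    # Drop leading/trailing slashes so the path segments join cleanly.
    rel = relative_path.strip("/")
    return f"abfss://{workspace}@{ONELAKE_DFS_HOST}/{lakehouse}.Lakehouse/{area}/{rel}"


if __name__ == "__main__":
    # Hypothetical workspace "SalesWS" and Lakehouse "Bronze".
    print(onelake_abfss_path("SalesWS", "Bronze", "Tables", "orders"))
    print(onelake_abfss_path("SalesWS", "Bronze", "Files", "/raw/2024/orders.csv"))
```

In a Fabric notebook, a Tables path like this could be passed to `spark.read.format("delta").load(...)`, since Lakehouse tables are Delta tables.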

Notebooks & Spark: You must be proficient in using Fabric Notebooks (Python, PySpark, Spark SQL) for large-scale data transformations. Know how to configure Spark sessions and optimize performance.
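
One common session-level tuning step is sizing the number of shuffle partitions. The sketch below derives that number from the rule of thumb in the Apache Spark tuning guide, roughly 2 to 3 tasks per CPU core. It then applies the values with `spark.conf.set`. The core count is an assumption you would read from your own Spark pool configuration, and the function names are hypothetical.

```python
from typing import Any


def session_settings(total_executor_cores: int, tasks_per_core: int = 3) -> dict[str, str]:
    """Return runtime Spark SQL settings sized to the available executor cores."""
    if total_executor_cores <= 0 or tasks_per_core <= 0:
        raise ValueError("core and task counts must be positive")
    return {
        # A few tasks per core keeps every core busy during shuffle stages.
        "spark.sql.shuffle.partitions": str(total_executor_cores * tasks_per_core),
        # Adaptive query execution can coalesce small shuffle partitions at runtime;
        # it is on by default since Spark 3.2, so this entry only makes it explicit.
        "spark.sql.adaptive.enabled": "true",
    }


def apply_settings(spark: Any, settings: dict[str, str]) -> None:
    """Apply settings to an active SparkSession (e.g. the notebook's `spark` object)."""
    for key, value in settings.items():
        spark.conf.set(key, value)
```

In a PySpark notebook, where a `spark` session is predefined, this might be called as `apply_settings(spark, session_settings(16))`, which sets 48 shuffle partitions.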

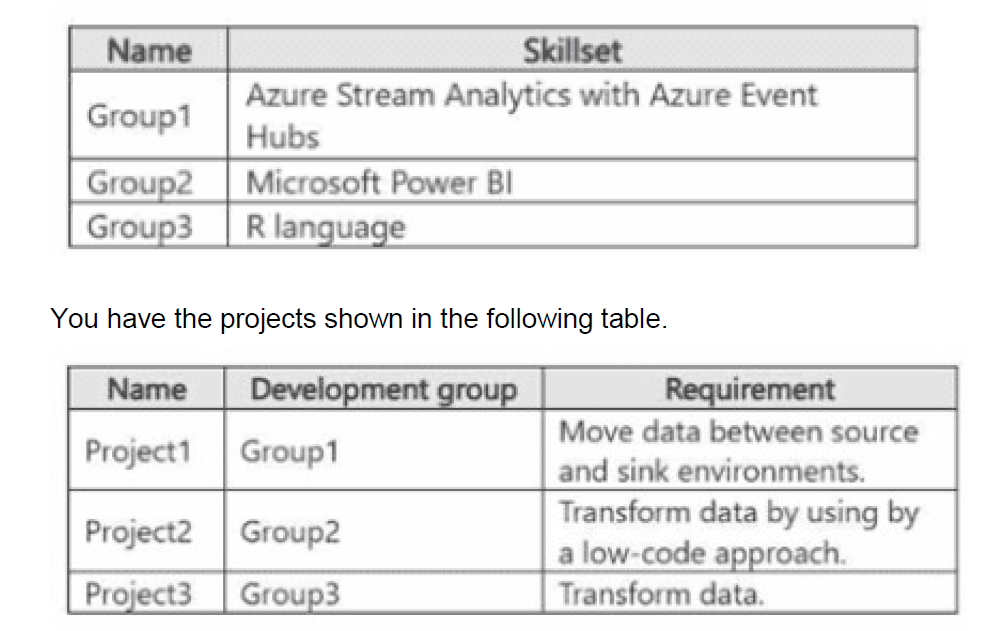

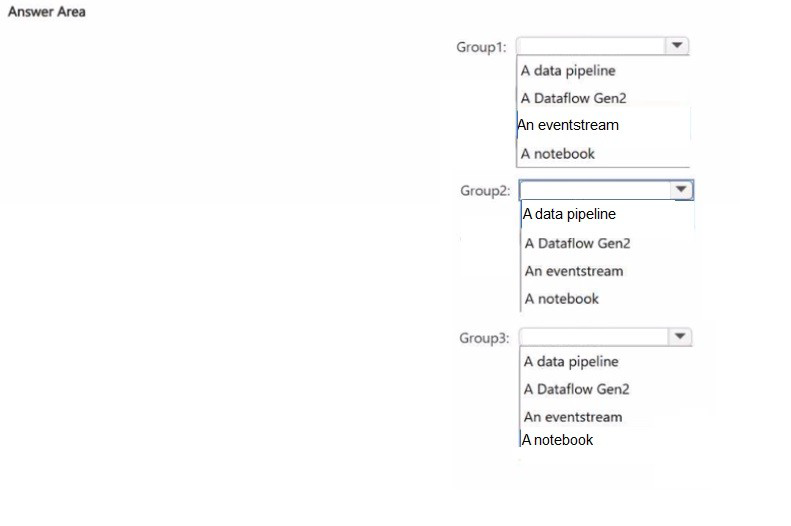

Data Pipelines & Dataflows: Be able to build and schedule data pipelines with Data Factory in Fabric. Know when to use Dataflow Gen2 (low-code, Power Query-based transformations) vs. Spark notebooks (code-first).

Orchestration with Data Factory: Understand how to create and monitor workflows to sequence and manage activities (pipelines, notebooks, Spark jobs) within Fabric.
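
The sequencing idea behind pipeline orchestration can be modeled as a dependency graph. The sketch below is a conceptual model, not Fabric's pipeline engine. It runs hypothetical activities in topological order using Python's `graphlib`. An activity is marked "Skipped" when any upstream activity did not succeed, which resembles chaining activities with on-success dependencies. It does not model the on-failure, on-completion, or retry options a real pipeline offers.

```python
from graphlib import TopologicalSorter
from typing import Callable


def run_pipeline(
    activities: dict[str, Callable[[], None]],
    depends_on: dict[str, set[str]],
) -> dict[str, str]:
    """Run activities in dependency order and return each activity's status.

    Models "on success" links only: an activity runs if every upstream
    activity succeeded; otherwise it is skipped.
    """
    graph = {name: set(depends_on.get(name, set())) for name in activities}
    unknown = set().union(*graph.values()) - set(activities)
    if unknown:
        raise ValueError(f"dependencies on undefined activities: {sorted(unknown)}")

    status: dict[str, str] = {}
    # static_order() yields every node after its dependencies (raises CycleError on cycles).
    for name in TopologicalSorter(graph).static_order():
        if any(status[dep] != "Succeeded" for dep in graph[name]):
            status[name] = "Skipped"
            continue
        try:
            activities[name]()
            status[name] = "Succeeded"
        except Exception:
            status[name] = "Failed"
    return status


if __name__ == "__main__":
    def fail() -> None:
        raise RuntimeError("notebook failed")

    # Hypothetical activities: copy into a Lakehouse, transform, then load a Warehouse.
    print(run_pipeline(
        {"CopyToLakehouse": lambda: None, "TransformNotebook": fail, "LoadWarehouse": lambda: None},
        {"TransformNotebook": {"CopyToLakehouse"}, "LoadWarehouse": {"TransformNotebook"}},
    ))
```

Here the failing notebook activity causes the downstream Warehouse load to be skipped rather than run on incomplete data.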

Monitoring & Observability: Familiarize yourself with the Fabric Monitor hub, which you use to track pipeline runs and Spark application performance and to troubleshoot failures.

Critical Skills to Demonstrate

Data Ingestion: Load data from various sources (files, Azure SQL, streaming) into Lakehouses or Data Warehouses.

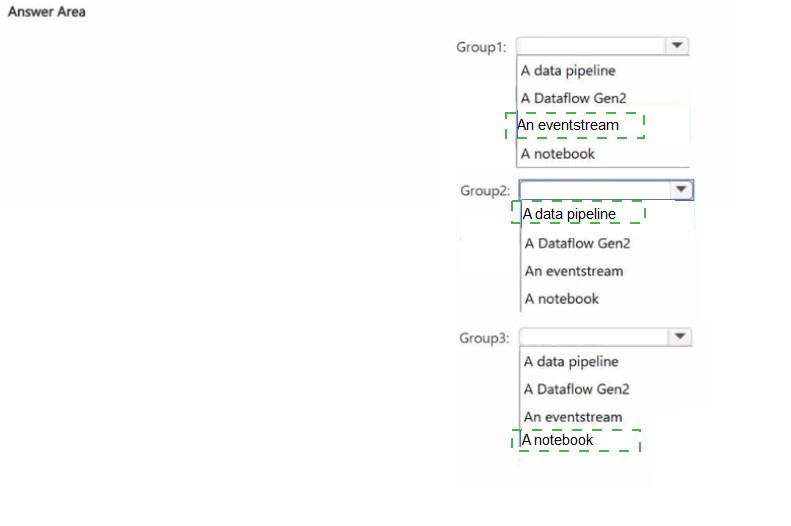

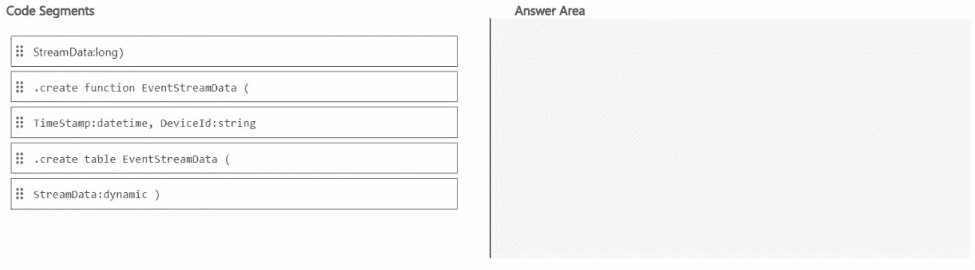

Transformation: Use PySpark/SQL to clean, join, and aggregate data. Know how to work with Delta tables (time travel, OPTIMIZE, VACUUM).
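
In Spark, these Delta operations are available as SQL commands. As a sketch, the helpers below build the statements for inspecting table history, time travel, compaction, and cleanup, so you can review them before passing them to `spark.sql` in a notebook. The table and column names are hypothetical. The 168-hour floor on `VACUUM` mirrors Delta Lake's default 7-day retention check rather than a Fabric-specific rule.

```python
import re
from typing import Any, Optional

# Accept simple, optionally schema-qualified identifiers only, to avoid SQL injection.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*(\.[A-Za-z_][A-Za-z0-9_]*)?$")


def _ident(name: str) -> str:
    if not _IDENTIFIER.match(name):
        raise ValueError(f"unsupported identifier: {name!r}")
    return name


def time_travel_sql(table: str, version: int) -> str:
    """Query an earlier version of a Delta table (versions are listed by DESCRIBE HISTORY)."""
    if version < 0:
        raise ValueError("version must be non-negative")
    return f"SELECT * FROM {_ident(table)} VERSION AS OF {version}"


def maintenance_sql(table: str, zorder_cols: Optional[list[str]] = None, retain_hours: int = 168) -> list[str]:
    """Build history, compaction (OPTIMIZE), and cleanup (VACUUM) statements."""
    table = _ident(table)
    if retain_hours < 168:
        # Shorter retention can break time travel and concurrent readers.
        raise ValueError("retain_hours below 168 (7 days) is refused by default")
    optimize = f"OPTIMIZE {table}"
    if zorder_cols:
        # Z-ordering co-locates rows with similar values to improve data skipping.
        optimize += " ZORDER BY (" + ", ".join(_ident(c) for c in zorder_cols) + ")"
    return [
        f"DESCRIBE HISTORY {table}",
        optimize,
        f"VACUUM {table} RETAIN {retain_hours} HOURS",
    ]


def run_sql(spark: Any, statements: list[str]) -> list[Any]:
    """Execute statements on an active SparkSession; returns one DataFrame per statement."""
    return [spark.sql(stmt) for stmt in statements]
```

For example, `spark.sql(time_travel_sql("sales", 3)).show()` would display version 3 of a hypothetical `sales` table. `run_sql(spark, maintenance_sql("sales", ["customer_id"]))` would compact and clean it up.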

Data Warehousing: Understand the Fabric Data Warehouse, which offers full T-SQL support including DML, as opposed to the read-only SQL analytics endpoint of a Lakehouse.

Security & Governance: Implement shortcuts for data access without duplication, manage workspace roles, and understand data lineage.
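
You can create shortcuts in the UI or programmatically. The sketch below only builds, and does not send, a request for creating a OneLake-to-OneLake shortcut through the Fabric REST API. It assumes a `POST /v1/workspaces/{workspaceId}/items/{itemId}/shortcuts` route and a body with `path`, `name`, and `target.oneLake` fields; check both against the current Fabric REST API reference before relying on them. All IDs, names, and the token are hypothetical placeholders, and acquiring a Microsoft Entra access token is out of scope.

```python
import json
import urllib.request

FABRIC_API = "https://api.fabric.microsoft.com/v1"


def build_onelake_shortcut_request(
    workspace_id: str,
    lakehouse_id: str,
    shortcut_name: str,
    target_workspace_id: str,
    target_item_id: str,
    target_path: str,
    token: str,
    shortcut_folder: str = "Tables",
) -> urllib.request.Request:
    """Prepare a POST that creates a OneLake shortcut (assumed API shape).

    The shortcut appears under `shortcut_folder` in the destination Lakehouse
    and points at `target_path` in the source item, so no data is copied.
    """
    url = f"{FABRIC_API}/workspaces/{workspace_id}/items/{lakehouse_id}/shortcuts"
    body = {
        "path": shortcut_folder,
        "name": shortcut_name,
        "target": {
            "oneLake": {
                "workspaceId": target_workspace_id,
                "itemId": target_item_id,
                "path": target_path,
            }
        },
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )
```

With a real token, the prepared request could then be sent with `urllib.request.urlopen(req)`.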

Preparation Strategy

Hands-On Practice is Mandatory: Use a Fabric Trial Capacity to build real solutions. The exam is scenario-heavy and assumes practical experience.

Know the Terminology: Distinguish between Fabric items (Lakehouse, Warehouse, Notebook) and their specific purposes.

Focus on Integration: The Implementing Data Engineering Solutions Using Microsoft Fabric exam tests how these components work together—e.g., loading data via pipeline into a Lakehouse, transforming with a notebook, and serving via a Warehouse.

The DP-700 demands a platform-level understanding rather than isolated tool knowledge. Your ability to navigate Fabric’s unified architecture and choose the right component for each job is key to passing. Prioritize hands-on labs over theory.

Real Stories From Real Customers

Preparing for Microsoft Certified: Fabric Data Engineer Associate became efficient with MSmcqs. DP-700 practice test clearly explained data pipelines, transformation, and analytics workflows.

Isabella Romano | Italy