Topic 3: Misc. Questions Set

You need to recommend a solution for handling old files. The solution must meet the technical requirements. What should you include in the recommendation?

A. a data pipeline that includes a Copy data activity

B. a notebook that runs the VACUUM command

C. a notebook that runs the OPTIMIZE command

D. a data pipeline that includes a Delete data activity

Explanation:

The question asks for a solution to handle old files in a Microsoft Fabric lakehouse (typically within the medallion architecture) that meets implied technical requirements such as data retention, storage cleanup, and compliance.

In Fabric (which uses Delta Lake format for lakehouses), old files accumulate over time due to:

ACID transaction logs

UPDATE, DELETE, MERGE operations creating new parquet files

INSERT overwrites

To physically remove old, unreferenced files (not just logically delete data), you must run the VACUUM command.

VACUUM removes files older than a retention threshold (default 7 days) that are no longer referenced in the Delta transaction log.

A notebook is the appropriate tool because VACUUM is a Spark SQL command that runs in a Spark engine (Fabric notebook or Spark job definition).

The notebook can be scheduled to run periodically (e.g., weekly) to clean up old files.
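As a minimal sketch of what such a scheduled notebook cell could look like: the helper below builds the `VACUUM` statement and passes it to a Spark session. The helper names and the table name `sales` are hypothetical; `spark` is assumed to be the session object that a Fabric notebook provides, and 168 hours mirrors the 7-day default mentioned above.

```python
def build_vacuum_statement(table_name: str, retain_hours: int = 168, dry_run: bool = False) -> str:
    """Build a Delta Lake VACUUM statement (168 hours = the 7-day default)."""
    if retain_hours < 0:
        raise ValueError("retain_hours must not be negative")
    statement = f"VACUUM {table_name} RETAIN {retain_hours} HOURS"
    if dry_run:
        # DRY RUN lists the files that would be removed without deleting them.
        statement += " DRY RUN"
    return statement


def vacuum_tables(spark, table_names, retain_hours: int = 168) -> None:
    """Run VACUUM for each table through the given Spark session."""
    for name in table_names:
        spark.sql(build_vacuum_statement(name, retain_hours))


# In a notebook cell (hypothetical table name):
# vacuum_tables(spark, ["sales"])
```

Running the statement with `dry_run=True` first is a cautious way to confirm which files would be removed before the real cleanup is scheduled.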

Why the other options are incorrect

A. a data pipeline that includes a Copy data activity

Copy activity is for ingesting or moving data, not for deleting old files from a lakehouse.

It does not address file retention.

C. a notebook that runs the OPTIMIZE command

OPTIMIZE compacts small files into larger ones and improves query performance, but it does not delete old, unreferenced files.

Old files remain until VACUUM is run.

This does not meet the requirement for handling old files from a retention and cleanup perspective.

D. a data pipeline that includes a Delete data activity

Fabric pipelines have a Delete activity for deleting files from lakehouse or ADLS Gen2 storage, but this is a manual deletion approach.

It does not understand Delta Lake transaction log state.

Using it risks corrupting the Delta table or leaving orphaned files.

VACUUM is the safe and supported method for cleaning old files in Delta tables.

Reference

Microsoft Learn: VACUUM command in Delta Lake (Fabric)

Microsoft Learn: Remove old files with VACUUM

You need to schedule the population of the medallion layers to meet the technical requirements. What should you do?

A. Schedule a data pipeline that calls other data pipelines.

B. Schedule a notebook.

C. Schedule an Apache Spark job.

D. Schedule multiple data pipelines.

Explanation:

In Microsoft Fabric, the medallion architecture (Bronze → Silver → Gold layers) typically involves multiple processing stages:

Bronze layer: Raw ingestion (often using Copy Data activities, ForEach loops for multiple sources, etc.).

Silver layer: Data cleaning, validation, deduplication, and enrichment.

Gold layer: Business-level modeling, aggregation, and preparation for consumption (e.g., by semantic models or reports).

The technical requirements in this scenario (as referenced in standard DP-700 questions) usually include:

Sequential execution (Silver must wait for Bronze to complete; Gold must wait for Silver).

Error handling and notifications (e.g., email on failure).

Parallel execution where possible (e.g., ingesting multiple sources into Bronze simultaneously).

Reliable orchestration and scheduling.

Why A is the best approach

A parent (master) data pipeline can orchestrate the entire process by calling child pipelines (one for Bronze, one for Silver, one for Gold, or further broken down).

Data pipelines in Fabric support:

Invoke pipeline activity: calls other pipelines sequentially or conditionally.

Built-in error handling (e.g., an on-failure path that leads to an Office 365 Outlook email activity or a Teams activity).

Parallel branches (ForEach, parallel execution of activities).

Native scheduling (triggers) and monitoring.

This design promotes modularity, reusability, and maintainability — each child pipeline focuses on one layer or task.

Why the other options are not ideal

B. Schedule a notebook

Notebooks (PySpark/SQL) are excellent for complex transformations within a layer, but they are less effective for high-level orchestration across multiple layers, error notifications, and calling separate processes.

Scheduling a single notebook for the entire medallion flow would make the code monolithic and harder to debug.

C. Schedule an Apache Spark job

Spark job definitions are good for heavy compute workloads, but they lack the rich orchestration features (sequencing, branching, notifications) that data pipelines provide.

D. Schedule multiple data pipelines

This would require managing separate schedules/triggers for each pipeline.

It makes it difficult to enforce strict sequencing (e.g., ensuring Silver doesn't start before Bronze finishes) and centralized error handling.

A master pipeline calling children is cleaner and more reliable.
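The parent-and-children pattern can be illustrated with a small conceptual model. This is not Fabric code: in Fabric the same flow is built visually from pipeline activities with on-success and on-failure paths. The function names and the `notify` callback are hypothetical stand-ins.

```python
def run_parent_pipeline(children, notify):
    """Run child steps in order; stop and notify on the first failure.

    children: list of (name, callable) pairs, e.g. Bronze, Silver, Gold.
    notify:   callable that receives a failure message (stand-in for an email step).
    Returns the names of the children that completed successfully.
    """
    completed = []
    for name, run_child in children:
        try:
            run_child()
        except Exception as error:
            notify(f"{name} failed: {error}")
            return completed  # later layers never start
        completed.append(name)
    return completed


def load_bronze():
    pass  # pretend ingestion succeeded


def load_silver():
    raise RuntimeError("validation error")


def load_gold():
    pass


messages = []
finished = run_parent_pipeline(
    [("Bronze", load_bronze), ("Silver", load_silver), ("Gold", load_gold)],
    messages.append,
)
```

Because one parent owns the sequence, a Silver failure stops Gold from running and produces a single notification. With separately scheduled pipelines, that guarantee would have to be rebuilt by hand.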

You need to ensure that WorkspaceA can be configured for source control. Which two actions should you perform?

Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

A. Assign WorkspaceA to Cap1.

B. From Tenant setting, set Users can synchronize workspace items with their Git repositories to Enabled

C. Configure WorkspaceA to use a Premium Per User (PPU) license

D. From Tenant setting, set Users can sync workspace items with GitHub repositories to Enabled

Explanation:

✅ A. Assign WorkspaceA to Capacity — Required

Fabric Git integration only works for workspaces backed by capacity (Fabric capacity or Power BI Premium).

If the workspace is not assigned to a capacity, Git integration options won’t be available.

Capacity includes Fabric (F SKU) or Premium (P SKU).

👉 So this is mandatory

✅ B. Enable Git sync at tenant level — Required

The tenant setting:

“Users can synchronize workspace items with their Git repositories”

must be enabled by an admin.

This setting controls whether users can connect Fabric workspaces to Git (Azure DevOps or GitHub).

Without this, Git integration is blocked globally.

👉 Also mandatory

❌ Why the other options are incorrect

❌ C. Configure WorkspaceA to use a Premium Per User (PPU) license

PPU is not sufficient for Git integration.

Git integration requires capacity-backed workspaces, not just user licensing.

👉 Incorrect

❌ D. Enable “Users can sync workspace items with GitHub repositories”

This is too specific and incomplete.

The correct setting is broader: Git repositories (covers both Azure DevOps and GitHub).

👉 This option reflects an incorrect or outdated tenant setting name

🧠 Exam Tip

For Fabric Git integration, always remember the 2-layer requirement:

Tenant setting enabled

Workspace on capacity (not Pro or PPU-only)

You have a Fabric workspace that contains an eventstream named Eventstream1. Eventstream1 processes data from a thermal sensor by using event stream processing, and then stores the data in a lakehouse.

You need to modify Eventstream1 to include the standard deviation of the temperature.

Which transform operator should you include in the Eventstream1 logic?

A. Expand

B. Group by

C. Union

D. Aggregate

Summary:

This question tests your knowledge of transformation operators in a Fabric eventstream. The goal is to calculate a statistical metric (standard deviation) from a stream of temperature data. Standard deviation is an aggregate function that must be computed over a set of events within a defined time window, so the operator must both define the window and offer standard deviation as an aggregation.

Correct Option:

B. Group by:

The Group by operator calculates aggregations across all events within a time window (for example, a tumbling window of 1 minute), optionally grouped by one or more fields. Its available aggregations are Average, Count, Maximum, Minimum, Percentile, Standard Deviation, Sum, and Variance. It is therefore the operator that can add the standard deviation of the temperature readings before the result is stored in the lakehouse.

Incorrect Options:

A. Expand:

The Expand operator creates a new row for each value within an array. It is used for data shaping, not for computing statistics across multiple events.

C. Union:

The Union operator merges two or more event streams that share the same fields into a single output stream. It does not perform any calculation on the data within the events and is irrelevant for computing a statistical value from a single data source.

D. Aggregate:

The Aggregate operator recalculates an aggregation each time a new event arrives over a period of time, but it offers only Sum, Minimum, Maximum, and Average. Standard deviation is not among them, so Aggregate cannot meet the requirement.

Reference:

Microsoft Official Documentation: Transform data by using an Eventstream in Microsoft Fabric
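To make the windowed calculation concrete, here is a minimal sketch of a standard deviation computed per tumbling window. It models the idea only and is not eventstream code. The function name, the 60-second window, and the sample readings are assumptions, and the sample (n − 1) standard deviation is used as an illustrative choice.

```python
from collections import defaultdict
from statistics import stdev


def tumbling_stddev(events, window_seconds=60):
    """Group (epoch_seconds, temperature) events into fixed, non-overlapping
    windows and return the sample standard deviation per window start."""
    windows = defaultdict(list)
    for timestamp, temperature in events:
        window_start = (timestamp // window_seconds) * window_seconds
        windows[window_start].append(temperature)
    # A sample standard deviation needs at least two values.
    return {
        start: (stdev(values) if len(values) > 1 else None)
        for start, values in sorted(windows.items())
    }


readings = [(0, 20.0), (20, 22.0), (40, 24.0), (60, 30.0), (90, 30.0), (130, 25.0)]
result = tumbling_stddev(readings)
# Window starting at 0 holds 20, 22, 24; window at 60 holds 30, 30; window at 120 holds 25.
```

Each event belongs to exactly one window, which is what distinguishes a tumbling window from hopping or sliding windows.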

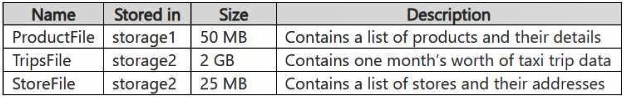

You have an Azure Data Lake Storage Gen2 account named storage1 and an Amazon S3 bucket named storage2.

You have the Delta Parquet files shown in the following table.

You have a Fabric workspace named Workspace1 that has the cache for shortcuts enabled. Workspace1 contains a lakehouse named Lakehouse1. Lakehouse1 has the following shortcuts:

A shortcut to ProductFile aliased as Products

A shortcut to StoreFile aliased as Stores

A shortcut to TripsFile aliased as Trips

The data from which shortcuts will be retrieved from the cache?

A. Trips and Stores only

B. Products and Stores only

C. Stores only

D. Products only

E. Products, Stores, and Trips

Summary:

This question tests your understanding of shortcut caching in Microsoft Fabric. When caching is enabled for a workspace, files read through supported external shortcuts (Amazon S3, S3-compatible storage, Google Cloud Storage, and sources reached through an on-premises data gateway) are stored in OneLake on first read, and later reads are served from the cache until the retention period expires. Two limits matter here: individual files larger than 1 GB are not cached, and shortcuts to Azure Data Lake Storage Gen2 are not covered by the cache.

Correct Option:

B. Products and Stores only

The key detail is the size of the files. TripsFile is 2 GB, which exceeds the 1 GB per-file limit, so its data is always retrieved directly from the source (S3) and never from the cache. ProductFile (50 MB) and StoreFile (25 MB) are well under the limit, so they can be served from the cache after their first read. This answer assumes that both small files sit behind a cache-supported source. Because ADLS Gen2 shortcuts are not cached, check the storage location column in the table: a file held in storage1 would not be served from the cache.

Incorrect Options:

A. Trips and Stores only

TripsFile is 2 GB and cannot be cached, so any option that includes Trips is wrong.

C. Stores only

This excludes Products even though ProductFile is also far below the 1 GB limit. It would be correct only if ProductFile were reached through a source type that the cache does not support.

D. Products only

This excludes Stores even though StoreFile is the smallest file and is under the 1 GB limit.

E. Products, Stores, and Trips

This is incorrect because it includes TripsFile, which exceeds the 1 GB per-file limit and is therefore always read from the source.

Reference:

Microsoft Official Documentation: OneLake shortcuts cache

You have a Fabric workspace that contains a lakehouse named Lakehouse1.

You plan to create a data pipeline named Pipeline1 to ingest data into Lakehouse1. You will use a parameter named param1 to pass an external value into Pipeline1. The param1 parameter has a data type of int.

You need to ensure that the pipeline expression returns param1 as an int value.

How should you specify the parameter value?

A. "@pipeline().parameters.param1"

B. "@{pipeline().parameters.param1}"

C. "@{pipeline().parameters.[param1]}"

D. "@{pipeline().parameters.param1}-"

Summary:

This question tests your knowledge of pipeline expression syntax, which Fabric data pipelines share with Azure Data Factory (ADF) and Azure Synapse Analytics. A parameter can be referenced in two ways: as a whole-value expression that starts with @, or through string interpolation, which wraps the expression in @{ }. The first form returns the value in its original type; the second always produces a string.

Correct Option:

A. "@pipeline().parameters.param1"

When the entire value is a single expression that starts with @, the engine evaluates it and returns the result in its native type. pipeline() accesses pipeline-level properties, .parameters selects the parameters collection, and .param1 names the parameter. Because param1 is an int, this expression returns an int.

Incorrect Options:

B. "@{pipeline().parameters.param1}"

The @{ } syntax is string interpolation: the expression inside the braces is evaluated and its result is inserted into the surrounding string. If param1 is 5, the result is the string "5", not the integer 5.

C. "@{pipeline().parameters.[param1]}"

This is invalid syntax. Square brackets are used for indexing, for example pipeline().parameters['param1'], and cannot follow a dot with a bare name. Even if the brackets were corrected, the @{ } wrapper would still return a string.

D. "@{pipeline().parameters.param1}-"

This is string interpolation with a trailing hyphen. If param1 is 5, the result is the string "5-", which is not an int and would be invalid for any activity expecting an integer.

Reference:

Microsoft Official Documentation: How to use parameters in a Data Factory pipeline
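The Data Factory expression documentation distinguishes a whole-value expression (`@pipeline().parameters.x`, which keeps the parameter's type) from string interpolation (`@{...}`, which always yields a string). The toy evaluator below mimics only that one rule so the difference can be inspected. It is an illustrative model, not the real expression engine, and the `evaluate` function is hypothetical.

```python
import re

PREFIX = "@pipeline().parameters."
INTERPOLATION = re.compile(r"@\{pipeline\(\)\.parameters\.(\w+)\}")


def evaluate(expression: str, parameters: dict):
    """Toy model: whole-value expressions keep the parameter's type,
    while @{...} interpolation substitutes text and returns a string."""
    name = expression[len(PREFIX):]
    if expression.startswith(PREFIX) and name.isidentifier():
        return parameters[name]  # native type preserved
    # Replace every @{...} occurrence with the text form of the value.
    return INTERPOLATION.sub(lambda m: str(parameters[m.group(1)]), expression)


params = {"param1": 42}
whole = evaluate("@pipeline().parameters.param1", params)
interpolated = evaluate("@{pipeline().parameters.param1}", params)
suffixed = evaluate("@{pipeline().parameters.param1}-", params)
```

Here `whole` is the integer 42, while `interpolated` and `suffixed` are the strings "42" and "42-".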

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

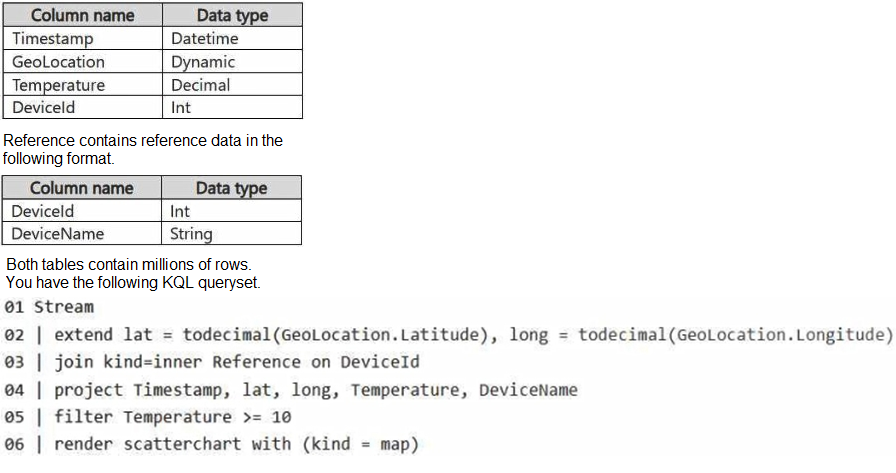

You have a KQL database that contains two tables named Stream and Reference. Stream contains streaming data in the following format.

You need to reduce how long it takes to run the KQL queryset.

Solution: You move the filter to line 02.

Does this meet the goal?

A. Yes

B. No

Summary:

This question tests performance optimization in Kusto Query Language (KQL), specifically the impact of predicate placement on join operations. The goal is to reduce query execution time on large tables. A fundamental rule for optimizing joins is to reduce the number of rows in the larger table before the join is performed. Applying filters early in the query pipeline minimizes the data volume that subsequent, more expensive operations (like a join) must process.

Correct Option:

A. Yes

In the original query, the join runs before the filter on Temperature, so millions of rows from the Stream table are joined with the Reference table to build a large intermediate result, and only then are the rows below the temperature threshold discarded. Moving the filter to line 02 places the where clause ahead of the join. Stream is reduced to the rows with Temperature >= 10 first, and the join processes only that smaller set, which shortens the run time.

Why the Solution Works:

Moving the filter to line 02 means inserting the where clause at that position and shifting the operators that were on line 02 and below down by one line. The resulting order is: filter the large Stream table first, then run the remaining operators, including the join, on the now-smaller result set. This is the filter-early pattern that KQL best practices recommend, because every later operator has fewer rows to process.

Reference:

Microsoft Official Documentation: Join operator performance tips

This documentation emphasizes that to improve query performance, "if possible, reduce the left side ... of the join." The most effective way to reduce the left side (Stream) in this scenario is to apply the Temperature filter before the join.
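The effect of predicate placement can be sketched outside KQL with a small in-memory model. Both functions below return the same rows, but they differ in how many rows pass through the join step. The `DeviceId` and `Site` columns and the sample values are hypothetical; only `Temperature` and the threshold of 10 come from the discussion above.

```python
def join_then_filter(stream, reference, threshold):
    """Join every stream row first, then discard rows below the threshold."""
    joined = [{**row, **reference[row["DeviceId"]]}
              for row in stream if row["DeviceId"] in reference]
    result = [row for row in joined if row["Temperature"] >= threshold]
    return result, len(joined)  # rows returned, rows processed by the join


def filter_then_join(stream, reference, threshold):
    """Discard rows below the threshold first, then join what is left."""
    filtered = [row for row in stream if row["Temperature"] >= threshold]
    joined = [{**row, **reference[row["DeviceId"]]}
              for row in filtered if row["DeviceId"] in reference]
    return joined, len(joined)


stream = [{"DeviceId": i % 3, "Temperature": t}
          for i, t in enumerate([5, 12, 8, 15, 9, 20])]
reference = {0: {"Site": "A"}, 1: {"Site": "B"}, 2: {"Site": "C"}}

late_rows, late_join_size = join_then_filter(stream, reference, 10)
early_rows, early_join_size = filter_then_join(stream, reference, 10)
```

With this sample, the late filter sends six rows through the join and the early filter sends three. On tables with millions of rows the same ratio translates directly into less work for the join.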

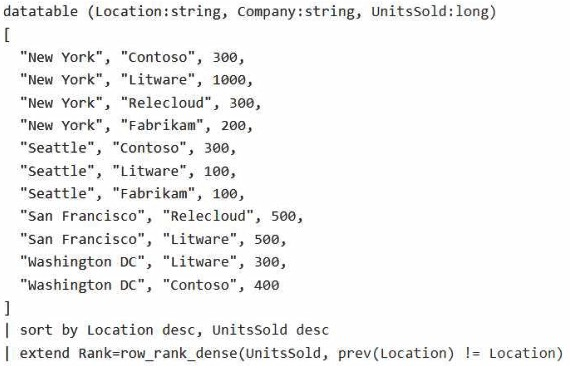

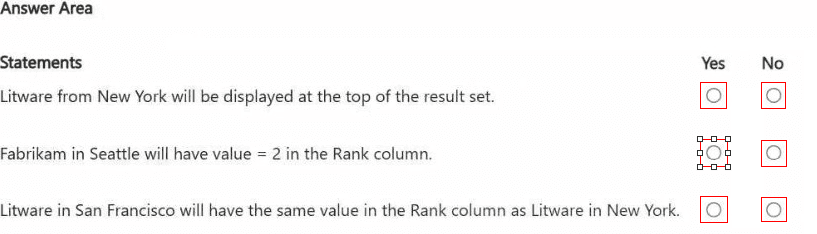

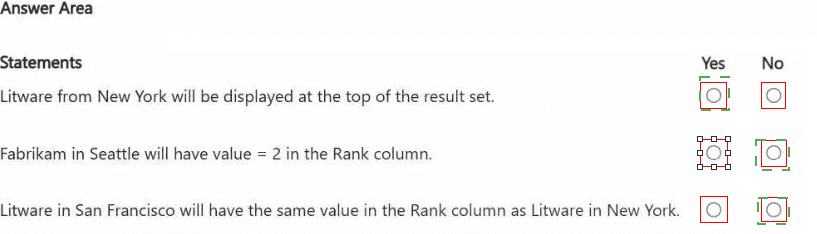

HOTSPOT

You are processing streaming data from an external data provider.

You have the following code segment.

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Summary

This query ranks companies within each city based on their UnitsSold in descending order. The row_rank_dense() function assigns a rank, and the prev(Location) != Location argument resets the ranking counter whenever the Location changes. The entire dataset is first sorted by Location desc and UnitsSold desc, which groups all rows for a city together and sorts the companies within that city from highest to lowest sales.

Correct Option Explanations

1. Litware from New York will be displayed at the top of the result set.

Answer: No

The result set is sorted first by Location desc. "Seattle" and "San Francisco" come before "New York" alphabetically when sorted in descending order. Therefore, all rows for Seattle and San Francisco will appear before any rows from New York. Litware in New York will not be at the top.

2. Fabrikan in Seattle will have value = 2 in the Rank column.

Answer: Yes

Let's analyze the Seattle group after sorting:

Contoso: 300 UnitsSold -> Rank 1

Fabrikan: 100 UnitsSold -> Rank 2

Litware: 100 UnitsSold -> Rank 2 (This is a dense rank, so ties get the same rank, and the next rank is not skipped).

Since both Fabrikan and Litware in Seattle sold 100 units, they are tied and both receive a dense rank of 2. The statement is true.

3. Litware in San Francisco will have the same value in the Rank column as Litware in New York.

Answer: Yes

San Francisco Group: Relecloud (500) gets Rank 1, and Litware (500) is tied, so it also gets Rank 1.

New York Group: Litware (1000) is the highest, so it gets Rank 1.

Therefore, Litware in both San Francisco and New York has a Rank value of 1. The statement is true.
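The ranking logic can be checked with a short Python model of a dense rank that restarts whenever the location changes. The rows are only the values quoted in the explanations above (the full exhibit is not reproduced here), and the function name is hypothetical.

```python
rows = [
    ("New York", "Litware", 1000),
    ("Seattle", "Fabrikan", 100),
    ("San Francisco", "Relecloud", 500),
    ("Seattle", "Contoso", 300),
    ("San Francisco", "Litware", 500),
    ("Seattle", "Litware", 100),
]


def dense_rank_by_location(rows):
    """Sort by location desc, then units desc, and assign a dense rank
    that restarts at 1 each time the location changes."""
    ordered = sorted(rows, key=lambda r: (r[0], r[2]), reverse=True)
    ranked = []
    previous_location = previous_units = None
    rank = 0
    for location, company, units in ordered:
        if location != previous_location:
            rank = 1              # restart for a new location
        elif units != previous_units:
            rank += 1             # next distinct value, no gaps
        ranked.append((location, company, units, rank))
        previous_location, previous_units = location, units
    return ranked


ranked = dense_rank_by_location(rows)
rank_of = {(location, company): rank for location, company, _, rank in ranked}
```

The first row of `ranked` is a Seattle row, which matches the first answer. `rank_of` gives 2 for Fabrikan in Seattle and 1 for Litware in both San Francisco and New York.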

Reference:

Microsoft Official Documentation: row_rank_dense()

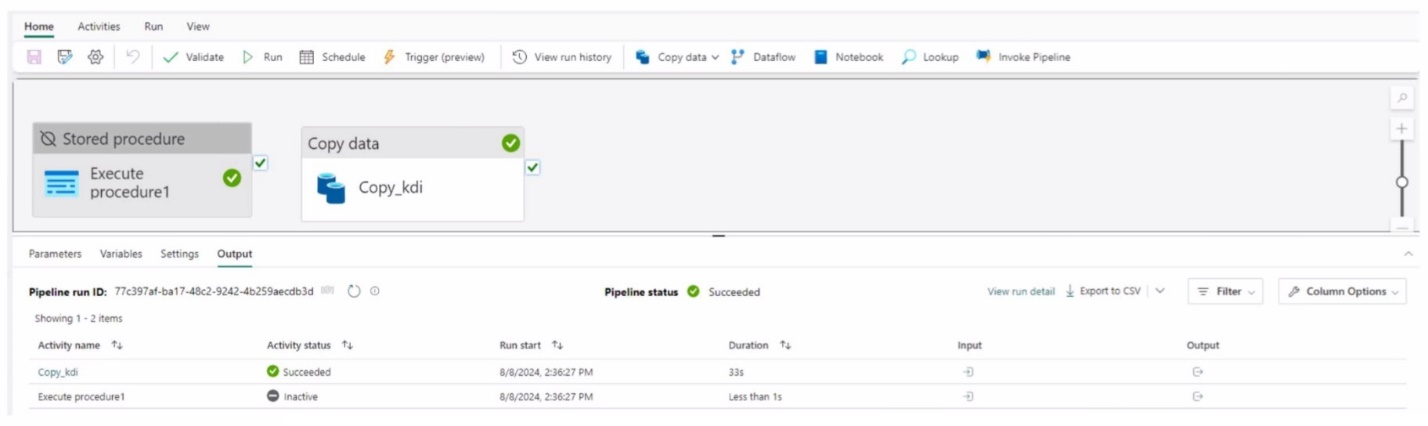

Exhibit.

You have a Fabric workspace that contains a write-intensive warehouse named DW1. DW1 stores staging tables that are used to load a dimensional model. The tables are often read once, dropped, and then recreated to process new data.

You need to minimize the load time of DW1.

What should you do?

A. Disable V-Order.

B. Drop statistics.

C. Enable V-Order.

D. Create statistics.

Summary:

This question focuses on optimizing load performance for temporary, write-intensive staging tables in a Fabric Warehouse. The key characteristic is that these tables are used once and then dropped. Traditional optimization techniques like creating statistics are designed for long-lived tables where the cost of creating stats is amortized over many queries. For short-lived staging tables, this overhead can actually increase total load time. The solution is to disable features that add unnecessary processing overhead during the write operation itself.

Correct Option:

A. Disable V-Order.

V-Order is an automatic write-time optimization that compresses and organizes data for faster read performance. However, applying V-Order adds computational overhead during the write process. For staging tables that are written once, read once, and then dropped, this write-time overhead is a net loss. The minor read performance gain does not justify the increased write time. Disabling V-Order minimizes the initial load (write) time, which is the explicit goal.

Incorrect Options:

B. Drop statistics.

While dropping statistics might save a negligible amount of space, it does not directly minimize load time. More importantly, for a table that is about to be read (to load the dimensional model), the absence of statistics would likely harm the performance of that subsequent read query, as the query optimizer would have no data distribution information to create an efficient execution plan.

C. Enable V-Order.

This is the opposite of what is required. Enabling V-Order would increase the write-time overhead during the initial load of the staging tables, thereby increasing the load time instead of minimizing it. This is beneficial for fact and dimension tables in the main model that are queried frequently, but not for transient staging tables.

D. Create statistics.

Creating statistics adds a significant step after the data load. For tables that are read only once, the time spent generating detailed statistics is often greater than the query performance benefit gained from having them. This would increase the total process time from load to completion of the read, thus failing to meet the goal of minimizing load time.

Reference:

Microsoft Official Documentation: Optimize performance with V-ORDER

You have a Fabric workspace named Workspace1 that contains an Apache Spark job definition named Job1.

You have an Azure SQL database named Source1 that has public internet access disabled.

You need to ensure that Job1 can access the data in Source1.

What should you create?

A. an on-premises data gateway

B. a managed private endpoint

C. an integration runtime

D. a data management gateway

Summary:

This question involves connecting a Fabric Spark job to an Azure SQL Database that has its public endpoint disabled. This means the database is only accessible through a private network connection (e.g., a VNet). To reach this private resource from the managed Fabric environment, you must establish a secure, private network path. The correct solution is a feature designed specifically for this purpose within the Microsoft Fabric and Azure networking ecosystem.

Correct Option:

B. a managed private endpoint

A managed private endpoint (MPE) is created from the workspace settings and gives Fabric Spark workloads (notebooks and Spark job definitions) a private, outbound connection to a specific Azure PaaS resource such as Azure SQL Database. Traffic does not traverse the public internet. Since Source1 has public access disabled, an MPE is the required component to establish private connectivity so that Job1 can access the data securely. The connection request must be approved on the target resource before it can be used.

Incorrect Options:

A. an on-premises data gateway

An on-premises data gateway is used to connect cloud services (like Power BI or Fabric) to data sources located within a private on-premises network, not to other Azure PaaS services. It is not the correct tool for connecting to an Azure SQL Database within Azure's own cloud.

C. an integration runtime

While an integration runtime (IR) is the core compute infrastructure for data movement and activity execution in Azure Data Factory and Synapse Pipelines, it is not the primary mechanism for enabling private connectivity from a Fabric Spark job definition. The Azure Integration Runtime uses the public internet, and the Self-hosted Integration Runtime suffers from the same on-premises limitation as Option A. The native, Fabric-specific solution is the managed private endpoint.

D. a data management gateway

This is an outdated term that is essentially synonymous with the "on-premises data gateway" mentioned in option A. It serves the same purpose and is therefore incorrect for the same reasons.

Reference:

Microsoft Official Documentation: Managed private endpoints in Microsoft Fabric
