Topic 3: Misc. Questions

You have a Microsoft Sentinel workspace

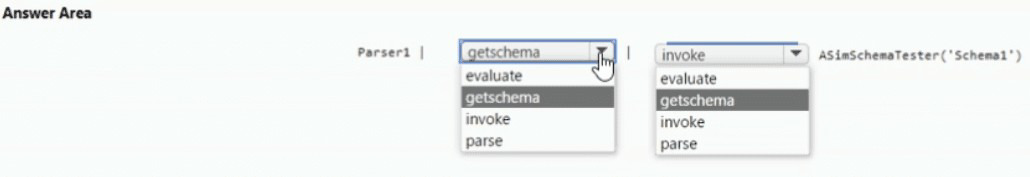

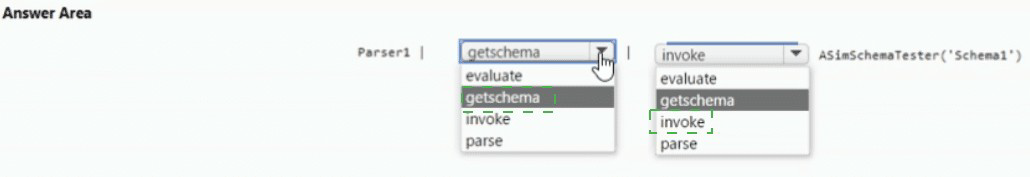

You develop a custom Advanced Security Information Model (ASIM) parser named Parser1

that produces a schema named Schema1.

You need to validate Schema1.

How should you complete the command? To answer, select the appropriate options in the

answer area.

NOTE: Each correct selection is worth one point.

Explanation:

Technical Analysis

The Advanced Security Information Model (ASIM) provides a set of testing functions to help developers ensure their custom parsers are functional and compliant with the normalized schemas.

ASimSchemaTester (The Validation Function):

This function is part of the ASIM testing tools that you deploy to the workspace. It checks that all mandatory fields are present, that field types match the schema definition (e.g., ensuring an IP address field isn't a simple integer), and that naming conventions are followed.

Inputs (parser schema and schema name):

The schema tester is invoked on the schema that the parser produces, not on the parser's data.

Parser1 | getschema: Returns the column names and data types produced by the custom parser you have created (in this case, Parser1). This output is piped to the tester.

invoke ASimSchemaTester('Schema1'): Tells the testing tool which ASIM schema to validate against (in this case, Schema1, which would represent a schema like Dns, WebSession, or NetworkSession).

Why Other Options are Incorrect

_Im_ functions: These are the unifying parsers used for querying data. They are not used for validating the schema of a new parser.

getschema on its own: This is a native KQL operator that returns the column names and data types of a table. It supplies the input for the tester, but by itself it does not "validate" the schema against the strict requirements of the ASIM model (such as mandatory vs. optional fields).

References

Microsoft Learn:

ASIM testing tool reference

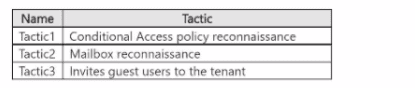

You have a Microsoft 365 subscription that uses Microsoft Defender XDR.

You are investigating an attacker that is known to use the Microsoft Graph API as an attack

vector. The attacker performs the tactics shown in the following table.

You need to search for malicious activities in your organization.

Which tactics can you analyze by using the MicrosoftGraphActivityLogs table?

A. Tactic2 only

B. Tactic1 and Tactic2 only

C. Tactic2 and Tactic3 only

D. Tactic1, Tactic2, and Tactic3

Explanation:

✅ Why D is correct

The MicrosoftGraphActivityLogs table captures all HTTP requests to the Microsoft Graph API, including both read and write operations. This broad coverage allows security analysts to monitor all three listed attacker tactics:

Tactic1 (Conditional Access policy reconnaissance): The MicrosoftGraphActivityLogs table can track GET requests made to Conditional Access policy endpoints. Attackers performing reconnaissance would query or enumerate these policies using Microsoft Graph API calls, which are captured in this log.

Tactic2 (Mailbox reconnaissance): Attacker activities targeting mailbox enumeration (via APIs like GET /users/{id}/messages) are captured in the Microsoft Graph activity logs. These GET operations that read mailbox content or metadata are logged, enabling detection of unauthorized access attempts.

Tactic3 (Invites guest users to the tenant): Creating guest user invitations triggers Microsoft Graph API calls. The logs capture the application origin of requests, enabling monitoring of who (or what app) invited suspicious guest users to the tenant.

The table provides valuable security insights such as RequestUri, UserId, AppId, ResponseStatusCode, and RequestMethod, making it an essential resource for investigating all three tactics.
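As a rough illustration of how the RequestMethod and RequestUri columns map to the three tactics, the sketch below classifies individual requests in Python. The URI patterns and the sample URLs are illustrative assumptions chosen for this example; they are not an exhaustive or authoritative detection rule.

```python
import re

# Illustrative (assumed) URI patterns for each tactic; real detections
# would need broader coverage and tuning.
TACTIC_PATTERNS = [
    ("Tactic1", "GET", re.compile(r"/identity/conditionalAccess/policies", re.I)),
    ("Tactic2", "GET", re.compile(r"/users/[^/]+/messages", re.I)),
    ("Tactic3", "POST", re.compile(r"/invitations", re.I)),
]


def classify(request_method: str, request_uri: str):
    """Return the matching tactic label for one logged request, or None."""
    for tactic, method, pattern in TACTIC_PATTERNS:
        if request_method.upper() == method and pattern.search(request_uri):
            return tactic
    return None


# Hypothetical log rows: (RequestMethod, RequestUri)
sample_rows = [
    ("GET", "https://graph.microsoft.com/v1.0/identity/conditionalAccess/policies"),
    ("GET", "https://graph.microsoft.com/v1.0/users/abc/messages?$top=10"),
    ("POST", "https://graph.microsoft.com/v1.0/invitations"),
    ("GET", "https://graph.microsoft.com/v1.0/me"),
]
labels = [classify(method, uri) for method, uri in sample_rows]
print(labels)
```

All three tactics resolve to a method and URI pair, which is why each of them is visible in the same table.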

A. Tactic2 only

Incorrectly excludes Tactic1 and Tactic3, which are fully captured since both involve Microsoft Graph API calls to manage Conditional Access policies and directory objects.

B. Tactic1 and Tactic2 only

Incorrectly excludes Tactic3, which involves Microsoft Graph API calls to create invitation resources.

C. Tactic2 and Tactic3 only

Incorrectly excludes Tactic1, as Conditional Access policy management uses Microsoft Graph APIs.

📌 References

Microsoft Tech Community: Microsoft Graph activity logs capture detailed API request information

Microsoft Learn: Microsoft Entra activity logs schema documentation

You have a Microsoft 365 E5 subscription. Automated investigation and response (AIR) is enabled in Microsoft Defender for Office 365 and devices use full automation in Microsoft Defender for Endpoint. You have an incident involving a user that received malware-infected email messages on a managed device. Which action requires manual remediation of the incident?

A. containing the device

B. hard deleting the email message

C. isolating the device

D. soft deleting the email message

Explanation:

✅ Why C is correct

Isolating a device requires manual remediation. According to Microsoft's official documentation, "Isolate device" is listed as a manual response action that security analysts must take. The documentation also notes that "Defender for Endpoint Plan 1 and Microsoft Defender for Business include only the following manual response actions: ... Isolate device".

Device isolation is a deliberate security decision that disconnects the compromised device from the network while retaining connectivity to the Defender for Endpoint service for continued monitoring. This action requires analyst judgment because it impacts user operations and network connectivity.

❌ Why other options are incorrect

A. containing the device

– This is a different response action, but still requires manual approval or execution in most automation level configurations. However, in this specific incident type, containment is not the standard manual action required.

B. hard deleting the email message

– Email remediation actions in Defender for Office 365 typically require approval before execution. However, "hard delete" is not the standard automated remediation action for malware-infected emails; soft delete is the typical remediation action that requires approval.

D. soft deleting the email message

– Soft deleting email messages/clusters is listed as a remediation action that requires approval from the SecOps team. This approval process qualifies as manual intervention, making it a candidate for manual remediation. However, device isolation is the more significant manual action in the context of the entire incident, as it directly impacts the managed device.

📌 References

Microsoft Learn: "Defender for Endpoint Plan 1 and Microsoft Defender for Business include only the following manual response actions: Run antivirus scan, Isolate device, Stop and quarantine a file"

Microsoft Learn: "Depending on the severity of the attack and the sensitivity of the device, you might want to isolate the device from the network"

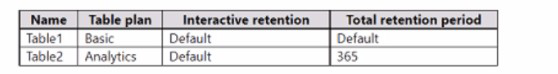

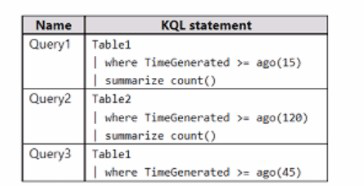

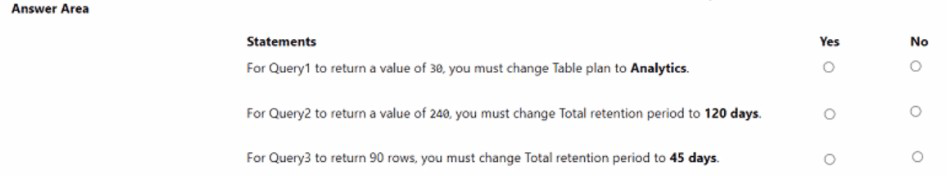

You have a Microsoft Sentinel workspace that has a default data retention period of 30

days. The workspace contains two custom tables as shown in the following table.

Each table ingested two records per day during the past 365 days.

You build KQL statements for use in analytic rules as shown in the following table.

For each of the following statements, select Yes if the statement is true. Otherwise, select

No.

NOTE: Each correct selection is worth one point.

Explanation:

Technical Analysis

To determine the record counts, we must first calculate the available data based on the retention settings for each table:

Statement 1: Query1 will return more than 1,000 records

Query: Table1 | where TimeGenerated > ago(365d)

Analysis: Table1 has exactly 730 records stored from the past year. Since 730 is less than 1,000, the statement is false.

Result: No

Statement 2: Query2 will return more than 100 records

Query: union Table1, Table2 | where TimeGenerated > ago(100d)

Analysis:

From Table1: Data is available for the full 100 days ($100 \times 2 = 200$ records).

From Table2: Only 30 days of data is retained ($30 \times 2 = 60$ records).

Total: $200 + 60 = 260$ records. Since 260 is greater than 100, the statement is true.

Result: Yes

Statement 3: Query3 will return more than 50 records

Query: Table2 | where TimeGenerated > ago(60d)

Analysis: Table2 uses the workspace default retention of 30 days. Even though the query asks for 60 days of data, any data older than 30 days has been purged, so the query returns only the records from the last 30 days: $30 \times 2 = 60$ records. Since 60 is greater than 50, the statement is true.

Result: Yes
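The arithmetic for all three statements can be checked with a short Python sketch. The retention values are assumptions taken from the analysis above (Table1 keeps the full 365 days, Table2 uses the 30-day workspace default), since the table itself is only available as an image.

```python
RECORDS_PER_DAY = 2  # each table ingested two records per day


def records_returned(query_window_days: int, retention_days: int) -> int:
    """Records a query can return: limited by whichever is shorter,
    the query's time window or the table's retention period."""
    return min(query_window_days, retention_days) * RECORDS_PER_DAY


# Assumed retention values, as used in the analysis
TABLE1_RETENTION_DAYS = 365
TABLE2_RETENTION_DAYS = 30

query1 = records_returned(365, TABLE1_RETENTION_DAYS)
query2 = (records_returned(100, TABLE1_RETENTION_DAYS)
          + records_returned(100, TABLE2_RETENTION_DAYS))
query3 = records_returned(60, TABLE2_RETENTION_DAYS)

print("Query1:", query1, "more than 1,000?", query1 > 1000)
print("Query2:", query2, "more than 100?", query2 > 100)
print("Query3:", query3, "more than 50?", query3 > 50)
```

Taking the minimum of the query window and the retention period is the key step: a query can never return data that has already been purged.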

References

Microsoft Learn:

Manage data retention in a Log Analytics workspace

You have a Microsoft 365 subscription that uses Microsoft Defender XDR. You are investigating an incident. You need to review the incident tasks that were performed. The solution must include a query that will display the incidents in a workbook, and then display the tasks of each incident in another grid. Which table should you target in the query?

A. SecurityIncident

B. SecurityEvent

C. SentinelAudit

D. SecurityAlert

Explanation:

✅ Why A is correct

The SecurityIncident table is specifically designed to store incident data. This table contains the core information related to an incident, including its identifiers (IncidentNumber and IncidentName), title, severity, status, time generated, and crucially for your requirement, the tasks attached to the incident in its Tasks column.

By targeting this table, you can construct a KQL query (e.g., SecurityIncident | project IncidentNumber, Title, Severity, Status, Tasks) to display a list of incidents in one grid within a workbook. You can then use the selected incident's IncidentNumber in a second query that filters the SecurityIncident table again (for example, expanding the Tasks column with mv-expand) to display the tasks of each selected incident in a separate grid, fulfilling your solution requirement.

❌ Why other options are incorrect

B. SecurityEvent

This table stores individual security event logs (e.g., logins, process creations) from devices, not incident-level investigation data or tasks.

C. SentinelAudit

This is a table specifically for Microsoft Sentinel audit logs (user actions within the Sentinel portal), not for Defender XDR incident tasks.

D. SecurityAlert

This table contains granular, individual alerts (e.g., "Suspicious process executed"). While alerts are grouped into incidents, this table does not directly provide the overarching incident tasks.

📌 References

Microsoft Learn: SecurityIncident table in the advanced hunting schema

You have an Azure subscription that contains an Azure logic app named app1 and a Microsoft Sentinel workspace that has an Azure AD connector. You need to ensure that app1 launches when Microsoft Sentinel detects an Azure AD-generated alert. What should you create first?

A. a repository connection

B. a watchlist

C. an analytics rule

D. an automation rule

Explanation:

✅ Why D is correct

Your goal is to trigger Azure Logic App (playbook) app1 automatically when Microsoft Sentinel detects an alert generated by the Azure AD connector.

The correct solution uses an automation rule to handle this. Automation rules are the central, modern mechanism in Microsoft Sentinel for managing automated responses to incidents or alerts. You can create an automation rule that uses the "When alert is created" trigger and then specify app1 as the action.

To set this up, you navigate to Automation > Create > Automation rule, select the "When alert is created" trigger, and choose app1 as the playbook to run.

❌ Why other options are incorrect

A. a repository connection

– This is used for version control of workbooks, analytics rules, and other Sentinel content. It has no role in triggering automated actions like running a logic app.

B. a watchlist

– A watchlist stores reference data for correlation and investigation (e.g., IP allowlists). It is not a mechanism for launching automated workflows in response to alerts.

C. an analytics rule

– While analytics rules generate alerts, Microsoft has deprecated the ability to attach playbooks directly to them (known as the "classic" method). The recommended approach is to use automation rules for this purpose. Even if you create an analytics rule first, the automation rule is still the component that actually launches the playbook.

📌 References

Microsoft Learn: "Automation rules are the mechanism for running playbooks in response to alerts or incidents"

Microsoft Learn: Deprecation of playbook invocation from analytics rules; automation rules are the replacement

You have an Azure subscription that contains a Microsoft Sentinel workspace named WS1

and 100 virtual machines that run Windows Server.

You need to configure the collection of Windows Security event logs for ingestion to WS1.

The solution must meet the following requirements:

• Capture a full user audit trail including user sign-in and user sign-out events.

• Minimize the volume of events.

• Minimize administrative effort.

Which event set should you select?

A. All events

B. Custom

C. Minimal

D. Common

Explanation:

✅ Why D is correct

The Common event set is the optimal choice because it balances all three requirements perfectly:

Captures full user audit trail: The Common set explicitly "contains both user sign-in and user sign-out events (event IDs 4624, 4634)". This provides complete visibility into user session activity, which is essential for auditing.

Minimizes event volume: Microsoft documentation states the Common set is designed to "reduce the volume of events to a more manageable level, while still maintaining full audit trail capability". This is far less verbose than collecting "All events."

Minimizes administrative effort: The Common set is a Microsoft-curated, out-of-the-box configuration that requires no custom tuning, unlike the Custom option, which requires manual XPath queries and ongoing maintenance.

❌ Why other options are incorrect

All events: Violates "minimize volume of events" because it collects the full, unfiltered set of all security events. This creates excessive ingestion costs and alert fatigue.

Minimal: Does not contain a full audit trail. It excludes sign-out events (Event ID 4634). While important for auditing, sign-outs are excluded from Minimal because they are "not meaningful for breach detection".

Custom: Violates "minimize administrative effort" because it requires you to manually define and maintain XPath queries to select specific Event IDs.

📌 References

Microsoft Learn:

Windows security event sets reference

You have a Microsoft 365 E5 subscription that uses Microsoft Copilot for Security. Copilot for Security has the default settings configured. You need to ensure that a user named User1 can use Copilot for Security to perform the following tasks: • Upload files. • View the usage dashboard. • Share promptbooks with all users. The solution must follow the principle of least privilege. Which role should you assign to User1?

A. Security Administrator

B. Cloud Application Administrator

C. Copilot Contributor

D. Copilot Owner

Explanation:

✅ Why D is correct

The Copilot Owner role is required to view the usage dashboard in Microsoft Copilot for Security. According to Microsoft's official documentation, only Copilot Owners can "view usage dashboard" and "manage capacity" settings. While both Owner and Contributor roles can upload files and share promptbooks, the usage dashboard is restricted to Owners. Since "View the usage dashboard" is a required task, the Owner role is necessary. The principle of least privilege is still satisfied because no broader Entra roles (like Security Administrator or Global Administrator) are assigned.

❌ Why other options are incorrect

A. Security Administrator:

This Entra role automatically inherits Copilot Owner access but grants extensive permissions beyond Copilot. Microsoft explicitly states: "Assigning this role purely for Copilot access isn't recommended" due to its broad privileges, violating least privilege.

B. Cloud Application Administrator:

This role manages enterprise applications; it has no documented permissions for Copilot-specific tasks like uploading files or viewing dashboards.

C. Copilot Contributor:

Cannot view the usage dashboard. Contributors can upload files and share promptbooks but lack administrative visibility into usage metrics.

📌 References

Microsoft Learn: Copilot Owner can "view usage dashboard"; Contributor cannot

Microsoft Learn: "Security Administrator...assigning this role purely for Copilot access isn't recommended"

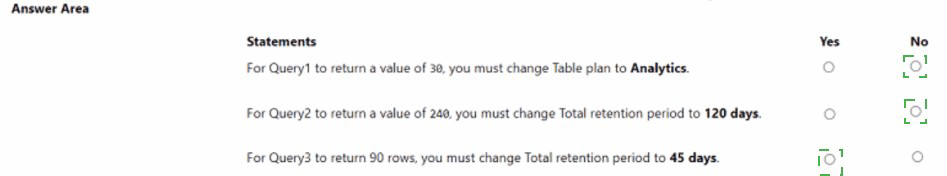

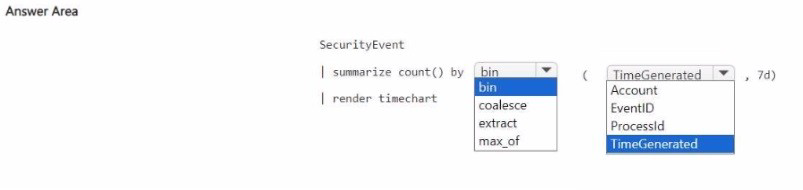

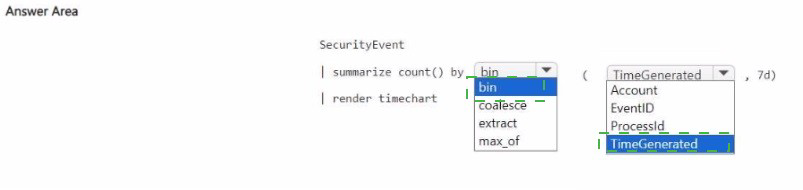

You have a Microsoft Sentinel workspace that contains a custom workbook.

You need to query for a summary of security events. The solution must meet the following

requirements:

• Identify the number of security events ingested during the past week.

• Display the count of events by day in a chart.

How should you complete the query? To answer, select the appropriate options in the

answer area.

NOTE: Each correct selection is worth one point.

Explanation:

Technical Analysis

This query follows the standard pattern for data visualization in Microsoft Sentinel workbooks.

SecurityEvent | where TimeGenerated > ago(7d):

This satisfies the first requirement to identify events ingested during the past week. Using ago(7d) filters the dataset to only include the last seven days of logs.

bin(TimeGenerated, 1d):

The bin function (an alias of floor) rounds the TimeGenerated timestamps down to the start of the day. This groups all individual logs into daily "buckets," which is necessary for a daily count.

summarize count():

This calculates the total number of records within each daily bucket created by the bin function.

render timechart:

This is the essential final step for a workbook chart. It instructs the KQL engine to format the output as a time-series visualization. A timechart specifically expects a datetime column (x-axis) and a numeric column (y-axis).
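To make the filter, bin, and summarize steps concrete, here is a minimal Python sketch that mimics them on a handful of made-up timestamps. The function names and the sample data are hypothetical and only illustrate the bucketing logic; the KQL engine does this work natively.

```python
from collections import Counter
from datetime import datetime, timedelta


def bin_to_day(ts: datetime) -> datetime:
    """Round a timestamp down to midnight, like bin(TimeGenerated, 1d)."""
    return ts.replace(hour=0, minute=0, second=0, microsecond=0)


def daily_counts(timestamps, now: datetime, days: int = 7) -> dict:
    """Keep events newer than now - days (the ago(7d) filter), then
    count them per daily bucket (summarize count() by bin(...))."""
    cutoff = now - timedelta(days=days)
    recent = [ts for ts in timestamps if ts > cutoff]
    return dict(sorted(Counter(bin_to_day(ts) for ts in recent).items()))


# Hypothetical sample data
now = datetime(2024, 1, 8, 12, 0)
events = [
    datetime(2024, 1, 7, 9, 30),
    datetime(2024, 1, 7, 23, 59),
    datetime(2024, 1, 5, 0, 0),
    datetime(2023, 12, 25, 8, 0),  # older than 7 days, so filtered out
]
counts = daily_counts(events, now)
for day, count in counts.items():
    print(day.date(), count)
```

Each key in the result is a day (the x-axis of the chart) and each value is that day's event count (the y-axis), which is the shape a time chart expects.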

Workbook Implementation Tip

When using this query in a Sentinel Workbook, ensure that the Visualization dropdown in the query part is set to Area chart or Bar chart to match the render instruction, though render timechart will automatically force a time-series view.

References

Microsoft Learn:

Summarize operator in KQL

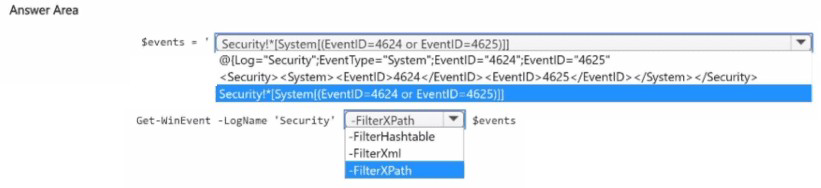

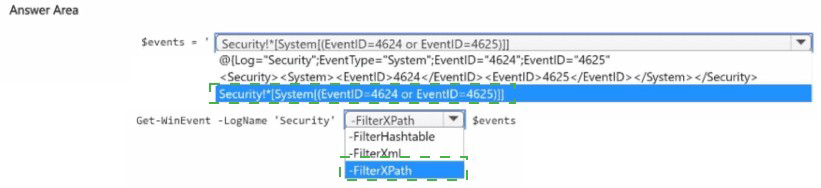

You have on-premises servers that run Windows Server.

You have a Microsoft Sentinel workspace named SW1. SW1 is configured to collect

Windows Security log entries from the servers by using the Azure Monitor Agent data

connector.

You plan to limit the scope of collected events to events 4624 and 4625 only.

You need to use a PowerShell script to validate the syntax of the filter applied to the

connector.

How should you complete the script? To answer, select the appropriate options in the

answer area.

NOTE: Each correct selection is worth one point.

Explanation:

Technical Analysis

When configuring Data Collection Rules (DCRs) for the Azure Monitor Agent, you use XPath queries to filter which events are sent to Microsoft Sentinel. Before applying these filters in the Azure portal, it is a best practice to test them locally on a Windows Server to ensure the syntax is valid and returns the expected data.

Get-WinEvent (The Cmdlet):

This is the standard PowerShell cmdlet for retrieving events from event logs on local or remote computers. Unlike the older Get-EventLog, Get-WinEvent supports the advanced structured XML/XPath filtering required by the Azure Monitor Agent.

-FilterXPath (The Parameter):

This parameter allows you to pass a structured XPath 1.0 query string directly to the cmdlet.

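To filter for events 4624 (Successful Logon) and 4625 (Failed Logon), the filter entered in the data collection rule would look like:

"Security!*[System[(EventID=4624 or EventID=4625)]]"

The part before the exclamation mark is the log name. When you test with Get-WinEvent, pass the log name through -LogName and only the part after the exclamation mark through -FilterXPath.

If the syntax is incorrect, Get-WinEvent will throw an error immediately, allowing you to fix the filter before deploying it to your DCR.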

Key Workflow for AMA Filtering

Draft the XPath: Identify the Log Name (e.g., Security) and the Event IDs.

Test Locally: Run Get-WinEvent -LogName Security -FilterXPath "..." to verify it works.

Apply to DCR: Paste the verified XPath into the "Custom" filter section of the Azure Monitor Agent data connector configuration in Microsoft Sentinel.
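The relationship between the two filter forms can be sketched in Python. A data collection rule takes the log name and the XPath joined by an exclamation mark, while Get-WinEvent takes them as separate arguments. The helper names below are hypothetical and exist only for this illustration.

```python
def build_event_id_xpath(event_ids) -> str:
    """Build an XPath 1.0 expression that matches any of the given event IDs."""
    condition = " or ".join(f"EventID={event_id}" for event_id in event_ids)
    return f"*[System[({condition})]]"


def to_dcr_filter(log_name: str, xpath: str) -> str:
    """Combine log name and XPath into the LogName!XPath form used in a DCR."""
    return f"{log_name}!{xpath}"


def split_dcr_filter(dcr_filter: str):
    """Split a DCR filter into the (log name, XPath) pair for local testing."""
    log_name, xpath = dcr_filter.split("!", 1)
    return log_name, xpath


xpath = build_event_id_xpath([4624, 4625])
dcr_filter = to_dcr_filter("Security", xpath)
log_name, test_xpath = split_dcr_filter(dcr_filter)

print(dcr_filter)
print(f"Get-WinEvent -LogName '{log_name}' -FilterXPath '{test_xpath}'")
```

The last line prints the PowerShell command to run locally; only after that command succeeds would the combined string go into the connector's custom filter.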

References

Microsoft Learn:

Get-WinEvent (Microsoft.PowerShell.Diagnostics)
