Topic 3: Misc. Questions

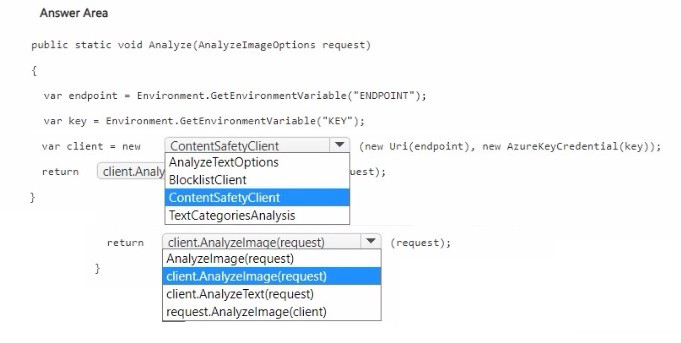

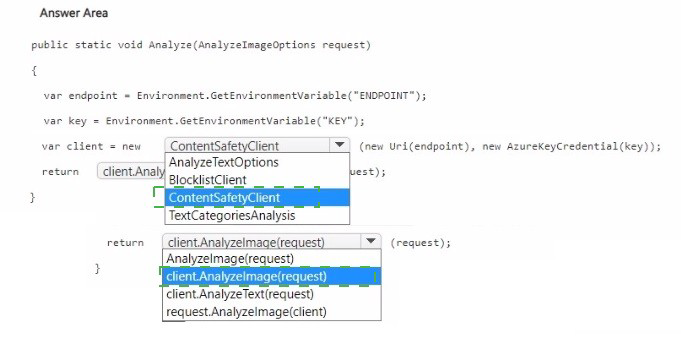

You have an Azure subscription that contains an Azure AI Content Safety resource.

You are building a social media app that will enable users to share images.

You need to configure the app to moderate inappropriate content uploaded by the users.

How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

Azure AI Content Safety provides image moderation via the AnalyzeImage method. You need to instantiate a ContentSafetyClient with the endpoint and key, then call client.AnalyzeImage(request) where request is an AnalyzeImageOptions object containing the image data.

Correct Options:

First blank (after new): ContentSafetyClient

The ContentSafetyClient is the main client class for interacting with Azure AI Content Safety. It requires an endpoint URI and an AzureKeyCredential object for authentication.

Second blank (return statement): client.AnalyzeImage(request)

The AnalyzeImage method analyzes an image for objectionable content (hate, sexual, violence, self-harm). It accepts an AnalyzeImageOptions object containing the image (as a stream or URL) and returns an AnalyzeImageResult.
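The same image analysis can also be reached over REST. The following is a minimal Python sketch of that call, not the C# SDK code from the question. The route, the `api-version` value, and the helper names `build_image_request` and `flagged_categories` are assumptions for illustration; nothing is sent over the network.

```python
import base64
import json

# Assumed REST route and API version; check both against your own resource.
ANALYZE_IMAGE_PATH = "/contentsafety/image:analyze?api-version=2023-10-01"


def build_image_request(endpoint: str, key: str, image_bytes: bytes):
    """Return (url, headers, body) for an image-analysis call; nothing is sent."""
    url = endpoint.rstrip("/") + ANALYZE_IMAGE_PATH
    headers = {
        "Ocp-Apim-Subscription-Key": key,
        "Content-Type": "application/json",
    }
    # The image travels as base64 text inside the JSON body.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    body = json.dumps({"image": {"content": encoded}})
    return url, headers, body


def flagged_categories(response_json: str, threshold: int = 2):
    """List the categories whose severity meets or exceeds the threshold."""
    result = json.loads(response_json)
    return [
        item["category"]
        for item in result.get("categoriesAnalysis", [])
        if item.get("severity", 0) >= threshold
    ]
```

An app would typically reject or queue an upload for review when `flagged_categories` returns a non-empty list; the threshold of 2 is an arbitrary example value.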

Why Other Options Are Incorrect:

First blank alternatives:

AnalyzeTextOptions – This is a request options class for text moderation, not for creating a client.

BlocklistClient – A client for managing custom blocklists, not for image moderation.

TextCategoriesAnalysis – This is a result type, not a client class.

Second blank alternatives:

AnalyzeImage(request) – Missing client. prefix; would not reference the client instance.

client.AnalyzeText(request) – For text moderation, not images.

request.AnalyzeImage(client) – Incorrect method invocation; AnalyzeImage is a method of the client, not the request.

Reference:

Microsoft Learn: "Azure AI Content Safety – Image moderation" – Use ContentSafetyClient.AnalyzeImage.

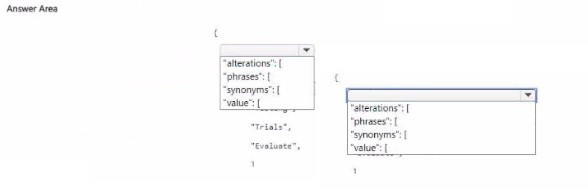

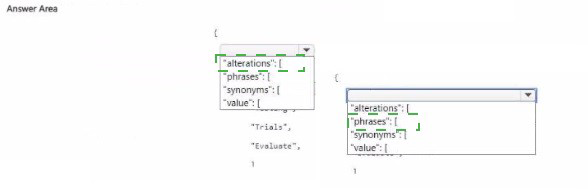

You have an app that uses the AI Language custom question answering service.

You need to add alternatives for the word testing by using the Authoring API.

How should you complete the JSON payload? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

In custom question answering, synonyms (alternate phrasings) are defined within the alterations array. Each alteration contains a phrases array (synonyms) where you list alternative words or phrases that should be treated as equivalent (e.g., "testing", "T-rials", "Evaluate"). The value field is not used; the correct structure is phrases.

Correct Options:

First blank (instead of "value"): phrases

The phrases array holds the list of synonyms/alternatives. For example, "phrases": ["testing", "T-rials", "Evaluate"]. This tells the question answering service that these words are equivalent.

Second blank (array name): phrases (or the array itself should contain the alternative words)

The array within phrases contains the synonym strings. The example shows "T-rials" and "Evaluate" as alternatives for "testing".

Why Other Options Are Incorrect:

"synonyms" – Not the correct property name. The API uses phrases within alterations.

"value" – Not a valid property for the Authoring API's synonym/alteration definition.

The nested structure with "value": [...] is incorrect.

Reference:

Microsoft Learn: "Custom question answering – Authoring API" – Use alterations array with phrases to define synonyms.

You are designing a conversational interface for an app that will be used to make vacation requests. The interface must gather the following data:

• The start date of a vacation

• The end date of a vacation

• The amount of required paid time off

The solution must minimize dialog complexity. Which type of dialog should you use?

A. Skill

B. waterfall

C. adaptive

D. component

Explanation:

A waterfall dialog in Bot Framework executes a sequence of steps in order. It is ideal for gathering multiple pieces of information (start date, end date, time off amount) in a predictable, linear flow. Waterfall dialogs are simpler to implement and understand compared to adaptive dialogs for straightforward sequential prompts.

Correct Option:

B. waterfall

Waterfall dialogs run a series of steps, each waiting for user input before proceeding to the next. This is perfect for collecting three data points in sequence (start date → end date → amount). The logic is simple and minimizes dialog complexity compared to more advanced patterns.
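The linear flow described above can be modeled in a few lines of plain Python. This is a conceptual sketch only, not the Bot Framework SDK: the names `VACATION_STEPS`, `run_waterfall`, and `scripted` are hypothetical, and real waterfall dialogs add prompts, validation, and turn state on top of this idea.

```python
from typing import Callable, Dict, Iterable, List, Tuple

# Each step is (slot name, prompt text). The fixed order is the "waterfall".
Step = Tuple[str, str]

VACATION_STEPS: List[Step] = [
    ("start_date", "When does your vacation start?"),
    ("end_date", "When does it end?"),
    ("pto_days", "How many paid days off do you need?"),
]


def run_waterfall(steps: List[Step], ask: Callable[[str], str]) -> Dict[str, str]:
    """Run every step in order, storing each reply under its slot name."""
    answers: Dict[str, str] = {}
    for slot, prompt in steps:
        # Wait for the reply to this prompt before moving to the next step.
        answers[slot] = ask(prompt)
    return answers


def scripted(replies: Iterable[str]) -> Callable[[str], str]:
    """Build an `ask` function that returns canned replies, for demos and tests."""
    it = iter(replies)
    return lambda _prompt: next(it)
```

Passing `input` as the `ask` argument would run the same three prompts interactively in a console.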

Incorrect Options:

A. Skill –

A skill is a bot that can be called by another bot (modular composition). It is not a dialog type for collecting sequential input within a single bot.

C. adaptive –

Adaptive dialogs are more powerful and flexible but also more complex, with event-driven actions and language generation. They are overkill for simple sequential data collection and introduce unnecessary complexity.

D. component –

Component dialogs are containers for grouping sub-dialogs. They do not define the flow logic themselves; they are used to organize other dialogs.

Reference:

Microsoft Learn: "Waterfall dialogs in Bot Framework" – Execute steps sequentially, ideal for gathering multiple inputs.

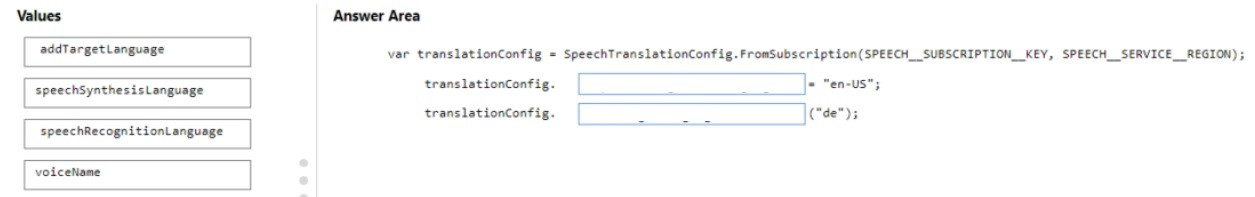

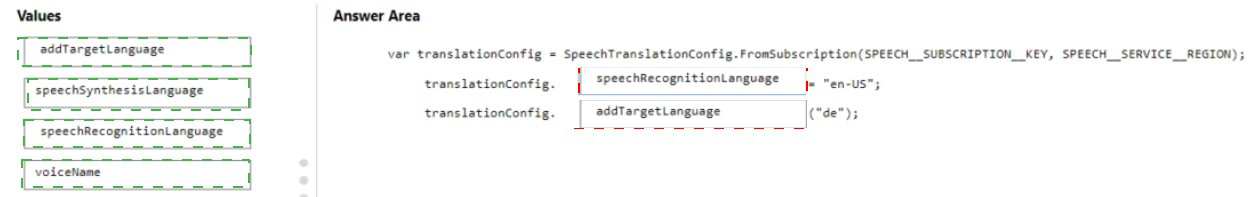

You develop an app in O named App1 that performs speech-to-speech translation.

You need to configure App1 to translate English to German.

How should you complete the speechTranslationConfig object? To answer, drag the appropriate values to the correct targets. Each value may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

Explanation:

To configure speech-to-speech translation from English to German, you need to set the source language (what the user speaks) using speechRecognitionLanguage to "en-US", and add the target language (what to translate into) using addTargetLanguage with "de". The speechSynthesisLanguage is optional as it defaults to the target language.

Correct Options:

First blank (after translationConfig.): speechRecognitionLanguage

This property sets the language of the incoming speech audio. For English (US), set it to "en-US". The speech recognizer will listen for English and convert it to text before translation.

Second blank (assigned value): "en-US"

The value assigned to speechRecognitionLanguage is the locale code for English (United States). This matches the source language of the user's speech.

Third blank (after translationConfig.): addTargetLanguage

This method adds a target language for translation. For German, you call addTargetLanguage("de"). This adds German as an output language.

Fourth blank (value for target language): "de"

"de" is the language code for German. This tells the translation service to translate the recognized English text into German. For speech-to-speech translation, the service will also synthesize German speech output.

Why Other Options Are Incorrect:

speechSynthesisLanguage – Would set the output speech language, but addTargetLanguage is preferred and automatically handles synthesis.

voiceName – Specifies a particular voice for speech synthesis, not the translation target language.

Reference:

Microsoft Learn: "Speech Translation – Configuration" – Set SpeechRecognitionLanguage for source, use AddTargetLanguage for target(s).

You are designing a solution that will answer questions about human resources (HR) policies stored in the PDF format.

You need to ensure that the identical answer to a specific question is returned every time.

The solution must minimize development effort.

Which service should you include in the solution?

A. Azure AI Language

B. Azure Machine Learning

C. Azure OpenAI

D. Azure AI Document Intelligence

Explanation:

To answer questions about HR policies with identical answers every time (deterministic responses) and minimal development effort, you need a knowledge base approach. Azure AI Language with custom question answering (formerly QnA Maker) allows you to import HR policy PDFs, extract QnA pairs, and return consistent, pre-defined answers. This ensures identical answers for identical questions.

Correct Option:

A. Azure AI Language

Custom question answering (a feature of Azure AI Language) ingests documents (PDFs), extracts question-answer pairs, and provides a deterministic lookup. The same question always returns the same answer because it is based on a fixed knowledge base, not generative AI. This minimizes development effort compared to building a custom solution.
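To see why a fixed knowledge base gives repeatable answers, consider the toy lookup below. It is an illustration of the principle only: the real service ranks candidate answers rather than doing an exact match, and the question-answer pairs and function names here are hypothetical.

```python
import re

# Hypothetical question-answer pairs standing in for an imported HR policy PDF.
KNOWLEDGE_BASE = {
    "how many vacation days do i get": "Full-time employees receive 20 paid vacation days per year.",
    "how do i request sick leave": "Submit a sick leave request through the HR portal.",
}


def normalize(question: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9\s]", "", question.lower())
    return " ".join(cleaned.split())


def answer(question: str, default: str = "No answer found.") -> str:
    """Return the stored answer for a question; the same input gives the same output."""
    return KNOWLEDGE_BASE.get(normalize(question), default)
```

Because the answer text is stored rather than generated, repeated calls with the same question cannot drift the way sampled model output can.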

Incorrect Options:

B. Azure Machine Learning –

Requires building, training, and deploying a custom model. This is high effort and not deterministic by default. Overkill for HR policy Q&A.

C. Azure OpenAI –

Generative models like GPT-4 are non-deterministic (temperature > 0 can produce different answers). While you can set temperature to 0, prompt engineering is still required, and answers may vary slightly. Higher effort and cost than question answering.

D. Azure AI Document Intelligence –

Extracts text and fields from PDFs but does not answer questions. It would require additional components (e.g., search + LLM) to provide answers.

Reference:

Microsoft Learn: "Custom question answering" – Deterministic Q&A from documents, part of Azure AI Language.

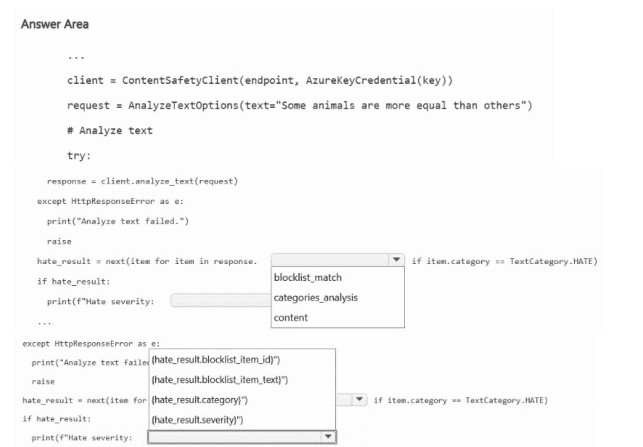

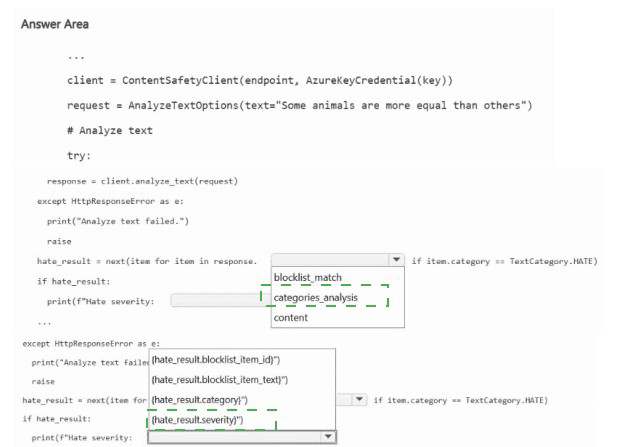

You have an Azure subscription that contains an Azure AI Foundry Content Safety resource named resource1.

You are building an app that will analyze text by using resource1.

You need to identify text that contains hateful content.

How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

The Content Safety SDK's analyze_text method returns a response containing a categories_analysis list. Each category (hate, sexual, violence, self-harm) has a severity level. To identify hateful content, you iterate through categories_analysis to find the item where category equals "Hate".

Correct Options:

First blank (after response.): categories_analysis

The AnalyzeTextResult object contains a categories_analysis property, which is a list of TextCategoriesAnalysis objects. Each object has category (e.g., "Hate", "Sexual", "Violence", "SelfHarm") and severity (0-7 scale).

Second blank (in the condition): category == "Hate"

To filter for hate content, you check if item.category == "Hate". This identifies the hate category analysis result. The other options (blocklist_match, content, etc.) are not relevant for severity filtering.

Third blank (print statement): item.severity

After identifying the hate category result, you print its severity level. The severity indicates how severe the hateful content is (0 = safe, 7 = most severe). item.severity is the correct property.
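The three selections combine into a short loop. The sketch below uses small stand-in classes (`CategoryResult` and `TextResult`, both hypothetical) that mirror only the `categories_analysis`, `category`, and `severity` names from the explanation, so it runs without the SDK or a live resource.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CategoryResult:
    """Stand-in for one entry of categories_analysis."""
    category: str
    severity: int


@dataclass
class TextResult:
    """Stand-in for the object returned by analyze_text."""
    categories_analysis: List[CategoryResult] = field(default_factory=list)


def hate_severity(response: TextResult) -> Optional[int]:
    """Return the severity reported for the Hate category, or None if absent."""
    for item in response.categories_analysis:
        if item.category == "Hate":
            return item.severity
    return None
```

With a real response object, the same loop body would apply unchanged, assuming the property names match those given in the explanation.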

Why Other Options Are Incorrect:

First blank alternatives:

blocklist_match – Contains results from custom blocklist matching, not category severity analysis.

content – Not a property of the response object.

Second blank alternatives:

blocklist_match – For custom term lists, not category filtering.

content – Not applicable.

Third blank alternatives:

blocklist_match – Returns blocklist match details, not severity.

content – Not a property of the category analysis object.

Reference:

Microsoft Learn: "Azure AI Content Safety – Analyze text" – Response contains categories_analysis list with category and severity.

You are building a social media messaging app.

You need to identify in real time the language used in messages.

Which service should you use?

A. Azure AI Speech

B. Azure AI Content Safety

C. Azure AI Translator

D. Azure AI Language

Explanation:

To identify the language of text in real time, the Azure AI Translator service provides a /detect endpoint that quickly detects the language of input text. It returns the language code and confidence score. While Azure AI Language also offers language detection, Translator is optimized for real-time detection and is commonly used for this purpose.

Correct Option:

C. Azure AI Translator

The Translator API's detect operation identifies the language of a text string in real time, returning the language code (e.g., "en", "es", "fr") and a confidence score. It is fast, lightweight, and designed for real-time scenarios like messaging apps.
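A detect call is a single POST with a small JSON body. The sketch below builds that request and parses a response without sending anything; the helper names are hypothetical, and the global endpoint and `api-version=3.0` are assumed to apply to your resource.

```python
import json
import urllib.request

# Assumed global Translator endpoint for the detect operation.
DETECT_URL = "https://api.cognitive.microsofttranslator.com/detect?api-version=3.0"


def build_detect_request(text: str, key: str, region: str) -> urllib.request.Request:
    """Build (but do not send) a language-detection request for one message."""
    body = json.dumps([{"Text": text}]).encode("utf-8")
    headers = {
        "Ocp-Apim-Subscription-Key": key,
        "Ocp-Apim-Subscription-Region": region,
        "Content-Type": "application/json",
    }
    return urllib.request.Request(DETECT_URL, data=body, headers=headers, method="POST")


def parse_detect_response(payload: str):
    """Return (language code, confidence score) for the first input text."""
    first = json.loads(payload)[0]
    return first["language"], first["score"]
```

Sending the request would be a matter of passing it to `urllib.request.urlopen` and feeding the decoded body to `parse_detect_response`.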

Incorrect Options:

A. Azure AI Speech –

Speech service detects language from spoken audio, not from text messages. It is not suitable for text-based language detection.

B. Azure AI Content Safety –

Content Safety moderates objectionable content (hate, sexual, violence). It does not detect language.

D. Azure AI Language –

The Language service includes language detection, but Translator's detection is equally capable and often preferred for real-time due to its simpler endpoint. Both can work, but the question's answer key points to Translator.

Reference:

Microsoft Learn: "Translator API – Detect language" – Real-time language detection for text.

You have an Azure AI Search resource named Search1.

You have an app named App1 that uses Search1 to index content.

You need to add a custom skill to App1 to ensure that the app can recognize and retrieve properties from invoices by using Search1.

What should you include in the solution?

A. Azure OpenAI

B. Azure AI Immersive Reader

C. Azure AI Document Intelligence

D. Azure Custom Vision

Explanation:

To recognize and retrieve properties (fields) from invoices, you need a service that can extract structured data from documents. Azure AI Document Intelligence (formerly Form Recognizer) provides pre-built models for invoices, extracting fields like vendor name, invoice date, line items, totals, and tax. This can be integrated as a custom skill in Azure AI Search (formerly Azure Cognitive Search).

Correct Option:

C. Azure AI Document Intelligence

Document Intelligence offers a pre-built invoice model that extracts key-value pairs and tables from invoice documents. You can integrate it as a custom skill in Azure AI Search to enrich indexed content with invoice properties, enabling search and retrieval of specific invoice fields.
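A custom skill is a web endpoint that receives a batch of records and returns one result per record. The sketch below shows that request and response envelope in plain Python. The extractor is a hypothetical stub standing in for a call to the prebuilt invoice model, and the field names `documentUrl`, `vendorName`, and `invoiceTotal` are assumptions for illustration.

```python
from typing import Any, Callable, Dict


def run_custom_skill(
    request: Dict[str, Any],
    extract_invoice: Callable[[Dict[str, Any]], Dict[str, Any]],
) -> Dict[str, Any]:
    """Apply extract_invoice to each record and return the skill response envelope."""
    results = []
    for record in request.get("values", []):
        entry = {"recordId": record["recordId"], "data": {}, "errors": [], "warnings": []}
        try:
            entry["data"] = extract_invoice(record.get("data", {}))
        except Exception as exc:
            # Report the failure on this record instead of failing the whole batch.
            entry["errors"].append({"message": str(exc)})
        results.append(entry)
    return {"values": results}


def fake_extractor(data: Dict[str, Any]) -> Dict[str, Any]:
    """Hypothetical stand-in for a call to the prebuilt invoice model."""
    if "documentUrl" not in data:
        raise ValueError("documentUrl is required")
    return {"vendorName": "Contoso", "invoiceTotal": 110.0}
```

Each output record keeps the `recordId` of its input so the indexer can match enriched fields back to the right document.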

Incorrect Options:

A. Azure OpenAI –

OpenAI generates text and embeddings but does not extract structured fields from invoices. You could prompt it to parse invoices, but that would be less reliable and more expensive than using Document Intelligence.

B. Azure AI Immersive Reader –

Immersive Reader is for improving text readability (text sizing, spacing, parts of speech). It does not extract structured data from invoices.

D. Azure Custom Vision –

Custom Vision is for image classification and object detection, not for extracting text or fields from invoices.

Reference:

Microsoft Learn: "Document Intelligence – Invoice model" – Extracts structured data from invoices.

You are building an app by using the Semantic Kernel.

You need to include complex objects in the prompt templates of the app. The solution must support objects that contain sub-properties.

Which two prompt templates can you use? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

A. Liquid

B. JSONL

C. Handlebars

D. YAML

E. Semantic Kernel

Explanation:

Semantic Kernel supports multiple templating languages for prompt templates. Liquid and Handlebars are both supported and allow including complex objects with nested properties (sub-properties) using dot notation. They are designed for dynamic content rendering and can access deep object structures.

Correct Options:

A. Liquid

Liquid is a templating language supported by Semantic Kernel. It allows accessing complex objects and nested properties using {{object.property.subproperty}} syntax. It is commonly used in prompt templates for dynamic content.

C. Handlebars

Handlebars is also supported by Semantic Kernel. It provides dot notation access to nested properties (e.g., {{object.property.subproperty}}). Handlebars is lightweight and designed for logic-less templates with complex data binding.
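The dot-notation lookup that both engines rely on can be illustrated with a toy renderer. This is not Liquid, Handlebars, or Semantic Kernel code; `resolve` and `render` are hypothetical helpers that only show how a path such as `customer.name.first` walks into nested data.

```python
import re
from typing import Any, Mapping

# Matches {{ path.to.value }} with optional spaces inside the braces.
PLACEHOLDER = re.compile(r"\{\{\s*([A-Za-z_][\w.]*)\s*\}\}")


def resolve(path: str, context: Mapping[str, Any]) -> Any:
    """Follow a dotted path one key at a time into nested mappings."""
    value: Any = context
    for part in path.split("."):
        value = value[part]
    return value


def render(template: str, context: Mapping[str, Any]) -> str:
    """Replace every placeholder with the value found at its dotted path."""
    return PLACEHOLDER.sub(lambda m: str(resolve(m.group(1), context)), template)
```

A real template engine adds loops, conditionals, and escaping on top of this lookup, which is why a plain data format such as JSONL or YAML cannot fill the same role.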

Incorrect Options:

B. JSONL –

JSONL (JSON Lines) is a data format (each line a JSON object), not a templating language for prompts. It cannot dynamically render complex object properties in templates.

D. YAML –

YAML is a data serialization format, not a prompt templating language. While used for configuration, it does not provide the dynamic templating features needed for complex object access.

E. Semantic Kernel –

Semantic Kernel is the framework itself, not a prompt template format. It supports multiple templating engines (Liquid, Handlebars, etc.), but it is not a template language.

Reference:

Microsoft Learn: "Semantic Kernel – Prompt templates" – Supported formats include Liquid and Handlebars.

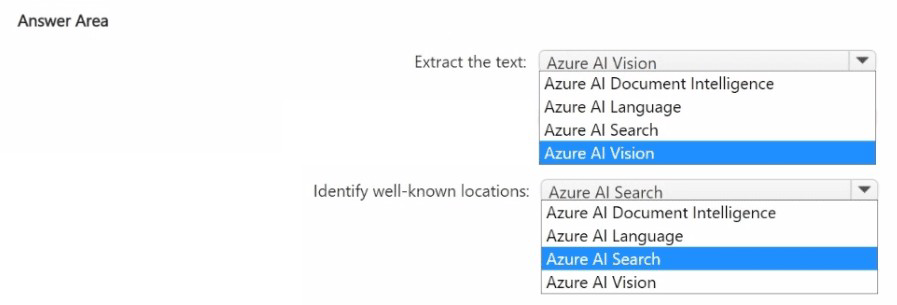

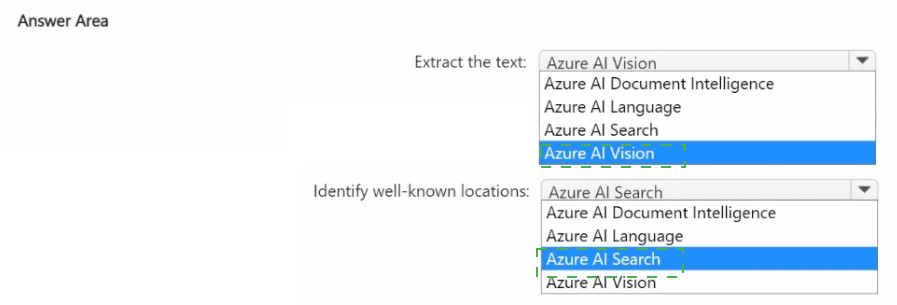

You have 100,000 images.

You need to build an app that will perform the following actions:

• Identify road signs in the images and extract the text on the signs.

• Analyze the text to identify well-known locations.

The solution must minimize development effort.

What should you use for each action? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

To extract text from images (road signs), Azure AI Vision provides OCR (Read API) that works out-of-the-box. For analyzing extracted text to identify well-known locations (e.g., city names, landmarks), Azure AI Language provides named entity recognition (NER) that identifies Location entities without training.

Correct Options:

First action (extract the text from signs): Azure AI Vision

Azure AI Vision's Read API (OCR) extracts printed text from images. It is pre-built, requires no training, and handles various fonts, angles, and lighting conditions. This minimizes development effort compared to custom solutions.

Second action (identify well-known locations): Azure AI Language

Azure AI Language provides pre-built Named Entity Recognition (NER) that identifies entities including Location (cities, countries, landmarks). The extracted text from signs can be sent to the Language service to identify location names without custom training.

Why Other Options Are Incorrect:

First action alternatives:

Azure AI Document Intelligence – Also extracts text but is optimized for structured documents (forms, invoices). Overkill for simple text extraction from signs.

Azure AI Language – Works on text input, not images. Cannot extract text directly.

Azure AI Search – A search service, not a text extraction service.

Second action alternatives:

Azure AI Search – Can index and search text but does not perform entity recognition or location identification.

Azure AI Document Intelligence – Extracts structured data from documents, not for identifying locations in text.

Azure AI Vision – Can detect objects and read text but does not identify well-known locations from text.

Reference:

Microsoft Learn: "Azure AI Vision – OCR" – Extract printed text from images.
