Topic 3: Misc. Questions

You have a product support manual.

You need to build a product support chatbot based on the manual. The solution must minimize development effort and costs.

What should you use?

A. Azure AI Phi-3-medium with fine-tuning

B. Azure AI Language custom question answering

C. Azure OpenAI GPT-4 with grounding data that uses Azure AI Search

D. Azure AI Document Intelligence

You are building a chatbot by using the Microsoft Bot Framework SDK. The bot will be used to accept food orders from customers and allow the customers to customize each food item.

You need to configure the bot to ask the user for additional input based on the type of item ordered. The solution must minimize development effort.

Which two types of dialogs should you use? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

A. adaptive

B. action

C. waterfall

D. prompt

E. input

Explanation

The scenario requires gathering multiple inputs (item type, then customizations), but the type of customizations depends on the item ordered. A waterfall dialog lets you execute sequential steps and pass data between them, so after you know the item you can decide which prompt dialogs (e.g., choice prompt, text prompt) to use for the relevant customizations. This keeps development straightforward and reusable.

Correct Options

C. waterfall

A waterfall dialog runs a series of steps one after another. In this bot, you can first ask for the food item, then based on that item, proceed to the next step where you ask for customizations. Waterfall dialogs make it easy to branch or change subsequent prompts dynamically.

D. prompt

Prompt dialogs (e.g., choice prompt, text prompt, confirm prompt) are designed to ask a question, validate the response, and reprompt if needed. After the bot knows the item type, you can call the appropriate prompt(s) to collect each customization (e.g., size, toppings, special instructions). Prompts reduce the amount of validation and re-prompting logic you must write manually.
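The waterfall-plus-prompt pattern described above can be sketched without the SDK. The following is a framework-free simulation, not Bot Framework code: the `CUSTOMIZATIONS` table, the `prompt` helper, and `order_waterfall` are hypothetical names introduced only to show how a later step can pick its prompts from an earlier answer, and how a prompt validates and re-asks.

```python
from typing import Callable, Dict, List

# Hypothetical per-item customization questions (not from the source).
CUSTOMIZATIONS: Dict[str, List[str]] = {
    "pizza": ["size", "toppings"],
    "coffee": ["size", "milk"],
}


def prompt(question: str, ask: Callable[[str], str],
           is_valid: Callable[[str], bool], max_attempts: int = 3) -> str:
    """Ask a question, validate the reply, and re-ask on invalid input."""
    for _ in range(max_attempts):
        reply = ask(question).strip().lower()
        if is_valid(reply):
            return reply
    raise ValueError(f"No valid reply for: {question}")


def order_waterfall(ask: Callable[[str], str]) -> Dict[str, str]:
    """Run the steps in order, passing collected values to later steps."""
    order: Dict[str, str] = {}
    # Step 1: ask for the item; only known items pass validation.
    order["item"] = prompt("What would you like to order?", ask,
                           lambda reply: reply in CUSTOMIZATIONS)
    # Step 2: choose the follow-up prompts based on the item from step 1.
    for field in CUSTOMIZATIONS[order["item"]]:
        order[field] = prompt(f"Which {field}?", ask,
                              lambda reply: bool(reply))
    return order
```

Passing `input` as the `ask` argument runs the flow interactively in a terminal. In the SDK itself, the re-prompt loop is what the built-in prompt dialogs supply, so it does not have to be written by hand.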

Incorrect Options

A. adaptive – Adaptive dialogs are more powerful but also more complex. They are overkill for this relatively linear ordering flow and would increase development effort compared to waterfall + prompts.

B. action – “Action” is not a standard dialog type in Bot Framework. Actions are part of adaptive dialogs or other frameworks, not a standalone dialog for simple sequential input.

E. input – “Input” is not a built‑in dialog type. Bot Framework provides prompt dialogs for collecting input, not a generic “input” dialog.

You have an Azure subscription that contains an Azure AI Document Intelligence resource named AIdoc1.

You have an app named App1 that uses AIdoc1. App1 analyzes business cards by calling business card model v2.1.

You need to update App1 to ensure that the app can interpret QR codes. The solution must minimize administrative effort.

What should you do first?

A. Deploy a custom model.

B. Implement the read model.

C. Upgrade the business card model to v3.0

D. Implement the contract model

Explanation:

QR code interpretation is a feature introduced in Document Intelligence's prebuilt-businessCard model version 3.0 and later. By upgrading from v2.1 to v3.0, the business card model gains the ability to read QR codes without deploying a custom model or changing to a different model type. This minimizes administrative effort.

Correct Option:

C. Upgrade the business card model to v3.0

Version 3.0 of the business card model (now often part of prebuilt-layout or prebuilt-document) includes enhanced extraction capabilities, including QR code reading. Upgrading the model version in App1's API call (changing the API version and model ID) is the simplest change to add QR code support.

Incorrect Options:

A. Deploy a custom model – Creating a custom model requires labeling training data and training. This is high administrative effort and unnecessary when the pre-built model already supports QR codes in newer versions.

B. Implement the read model – The read model extracts text but does not specifically interpret QR codes as structured data. It would treat a QR code as a text string, not as a QR code entity.

D. Implement the contract model – The contract model is for analyzing contracts (parties, dates, obligations). It does not interpret QR codes on business cards.

Reference:

Microsoft Learn: "Document Intelligence – Business card model v3.0" – Supports QR code extraction.

You have an Azure OpenAI model.

You have 500 prompt-completion pairs that will be used as training data to fine-tune the model.

You need to prepare the training data.

Which format should you use for the training data file?

A. XML

B. JSONL

C. CSV

D. TSV

Explanation:

Azure OpenAI fine-tuning requires training data in JSONL (JSON Lines) format, where each line is a separate JSON object containing prompt and completion fields (or messages for chat models). JSONL is efficient for streaming large datasets and is the standard format for fine-tuning with OpenAI.

Correct Option:

B. JSONL

JSON Lines (.jsonl) is the required format for Azure OpenAI fine-tuning. Each line is a valid JSON object. For completion models, each line contains {"prompt": "...", "completion": "..."}. For chat models, each line contains {"messages": [{"role": "user", "content": "..."}, {"role": "assistant", "content": "..."}]}.
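As a minimal sketch of preparing such a file, the snippet below converts prompt-completion pairs into chat-format JSONL using only the standard library. The helper names `to_chat_record` and `to_jsonl` and the sample pairs are illustrative assumptions; the record shape follows the chat-model layout quoted above.

```python
import json
from typing import Dict, Iterable, List, Tuple


def to_chat_record(prompt: str, completion: str) -> Dict[str, List[Dict[str, str]]]:
    """Wrap one prompt-completion pair in the chat 'messages' layout."""
    return {
        "messages": [
            {"role": "user", "content": prompt},
            {"role": "assistant", "content": completion},
        ]
    }


def to_jsonl(pairs: Iterable[Tuple[str, str]]) -> str:
    """Serialize each pair as one JSON object per line."""
    lines = [
        json.dumps(to_chat_record(prompt, completion), ensure_ascii=False)
        for prompt, completion in pairs
    ]
    return "".join(line + "\n" for line in lines)


if __name__ == "__main__":
    sample_pairs = [
        ("What is JSONL?", "A text format with one JSON object per line."),
        ("Can a value contain a newline?", "Yes, it is escaped as \\n."),
    ]
    # Write the training file; the filename is a placeholder.
    with open("training_data.jsonl", "w", encoding="utf-8") as handle:
        handle.write(to_jsonl(sample_pairs))
```

Because `json.dumps` escapes embedded newlines, each record stays on a single physical line, which is the property the format depends on.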

Incorrect Options:

A. XML – XML is not supported for Azure OpenAI fine-tuning training data. The API expects JSONL format.

C. CSV – CSV is not a supported format. While you could convert CSV to JSONL, the training API does not accept CSV directly.

D. TSV – TSV (Tab-Separated Values) is not supported. The fine-tuning API specifically requires JSONL.

Reference:

Microsoft Learn: "Azure OpenAI – Fine-tuning" – Training data must be in JSONL format.

You have an Azure subscription that contains an Azure OpenAI resource. Multiple different models are deployed to the resource.

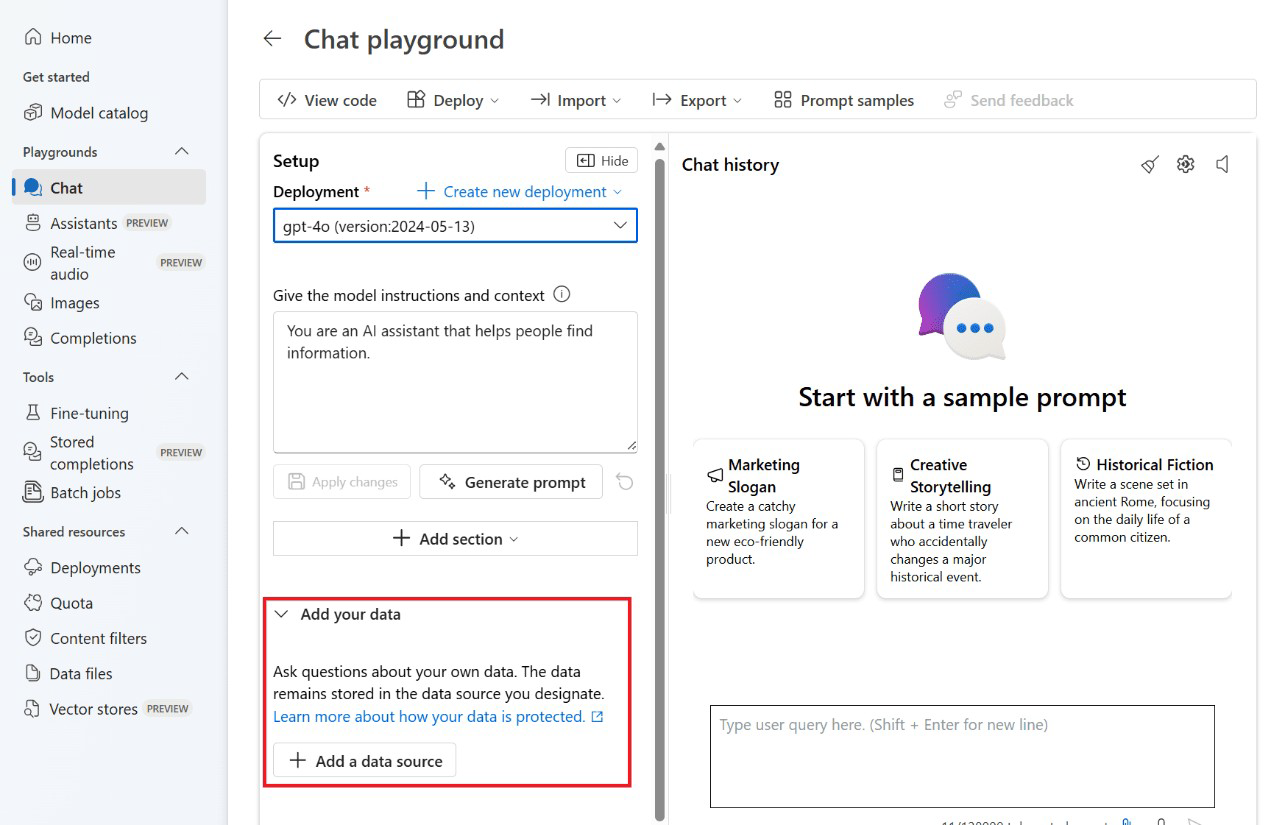

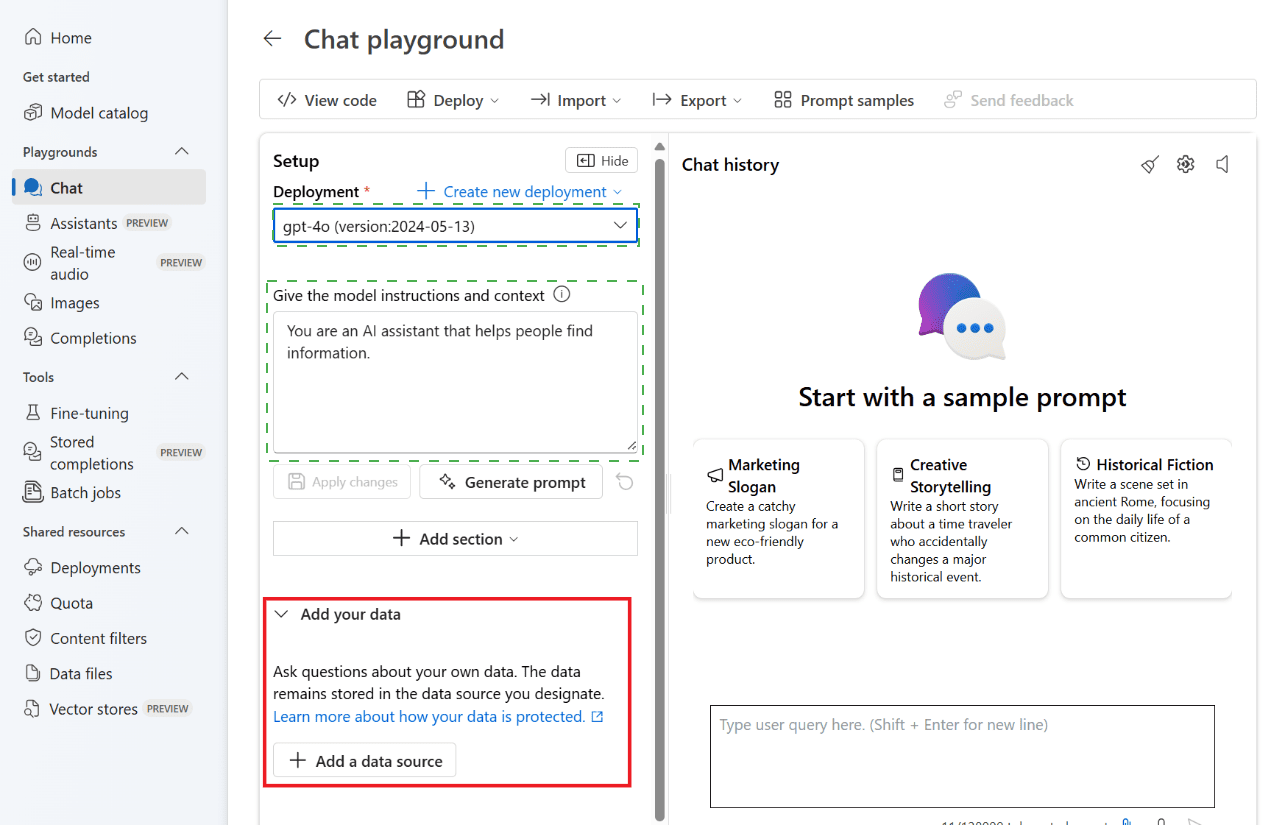

You are building a chatbot by using Chat playground in Azure AI Studio.

You need to ensure that the chatbot generates text in concise formal business language.

The solution must meet the following requirements:

• Reduce the cost of running the language model.

• Maintain the size of the chatbot history window.

Which two settings should you configure? To answer, select the appropriate settings in the answer area. NOTE: Each correct selection is worth one point.

Explanation:

To generate concise formal business language, you modify the system message to instruct the model accordingly. To reduce cost and maintain history window size, you reduce Max response tokens (limits output length) and adjust Temperature to a lower value (for more focused, less creative responses). Lower token usage reduces cost.

Correct Options (from typical Chat playground settings):

1. System message – Change "You are an AI assistant that helps people find information" to something like: "You are a business assistant that provides concise, formal responses in a professional tone. Keep answers brief and to the point."

2. Max response tokens – Reduce this value (e.g., from high default to 150-300 tokens). This limits the length of each response, reducing token usage (cost) and keeping output concise.

3. Temperature – Set to a lower value (e.g., 0.2-0.5). Lower temperature makes output more deterministic, focused, and less creative/rambling, which aligns with formal business language.

Why These Meet Requirements:

Concise formal language – Achieved via system message instruction and low temperature.

Reduce cost – Achieved by reducing max response tokens (fewer tokens generated = lower cost).

Maintain history window size – Leave the number of past messages included in each request unchanged. The cost saving comes from constraining output tokens, not from trimming conversation history.
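The same settings map onto the parameters of a chat completion request. The sketch below only assembles a request body as a plain dictionary and does not call any service; `build_chat_request` is a hypothetical helper, and the default values are assumptions picked from the ranges mentioned above.

```python
from typing import Any, Dict, List

# Illustrative system message adapted from the explanation above.
SYSTEM_MESSAGE = (
    "You are a business assistant that provides concise, formal responses "
    "in a professional tone. Keep answers brief and to the point."
)


def build_chat_request(
    history: List[Dict[str, str]],
    user_text: str,
    max_tokens: int = 200,      # assumed value inside the 150-300 range
    temperature: float = 0.3,   # assumed value inside the 0.2-0.5 range
) -> Dict[str, Any]:
    """Assemble a chat request body without trimming the history."""
    messages = [
        {"role": "system", "content": SYSTEM_MESSAGE},
        *history,  # the history window is passed through unchanged
        {"role": "user", "content": user_text},
    ]
    return {
        "messages": messages,
        "max_tokens": max_tokens,
        "temperature": temperature,
    }
```

Only the output cap and sampling temperature change here; the number of past messages sent with each request stays the same.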

Reference:

Microsoft Learn: "Azure OpenAI – System messages" – Guide model behavior and tone.

You need to measure the public perception of your brand on social media messages. Which Azure Cognitive Services service should you use?

A. Text Analytics

B. Content Moderator

C. Computer Vision

D. Form Recognizer

Explanation:

To measure public perception (positive, negative, neutral) of your brand from social media messages, you need sentiment analysis. Azure AI Language (formerly Text Analytics) provides sentiment analysis that returns confidence scores for positive, negative, neutral, and mixed sentiments. This is the correct service for this task.

Correct Option:

A. Text Analytics

Text Analytics (now part of Azure AI Language) includes sentiment analysis, which evaluates text and returns sentiment labels and confidence scores. It is specifically designed to measure opinions, attitudes, and emotions expressed in text, making it ideal for brand perception analysis from social media messages.
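Once each message has a sentiment label, brand perception is usually reported as the share of messages per label. The sketch below assumes the labels have already been obtained from the service and only aggregates them; `perception_summary` is a hypothetical helper, not part of any SDK.

```python
from collections import Counter
from typing import Dict, Iterable

# The four document-level labels named in the explanation above.
LABELS = ("positive", "neutral", "negative", "mixed")


def perception_summary(labels: Iterable[str]) -> Dict[str, float]:
    """Return the fraction of messages carrying each sentiment label."""
    counts = Counter(labels)
    total = sum(counts[label] for label in LABELS)
    if total == 0:
        return {label: 0.0 for label in LABELS}
    return {label: counts[label] / total for label in LABELS}
```

For example, `perception_summary(["positive", "positive", "negative", "mixed"])` gives a positive share of 0.5 and a negative share of 0.25.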

Incorrect Options:

B. Content Moderator – Detects profanity, offensive content, and adult/racy content. It does not measure sentiment or public perception (positive/negative).

C. Computer Vision – Analyzes images for tags, objects, faces, and adult content. It cannot process text sentiment from social media messages.

D. Form Recognizer – Extracts structured data from forms and documents (invoices, receipts). Not relevant for sentiment analysis.

Reference:

Microsoft Learn: "Text Analytics – Sentiment Analysis" – Determines positive, negative, neutral, and mixed sentiment in text.

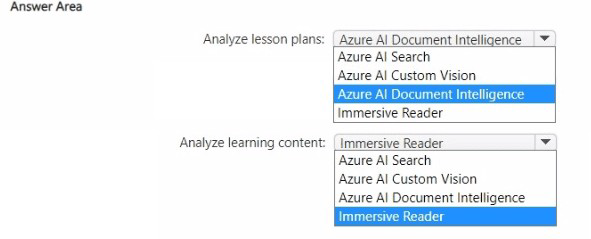

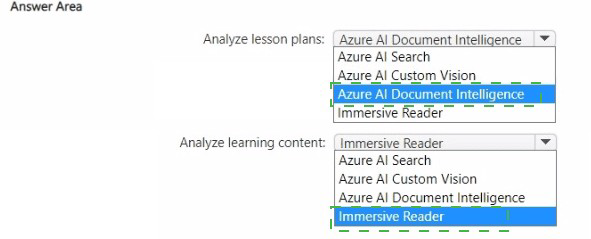

You are building a language learning solution.

You need to recommend which Azure services can be used to perform the following tasks:

• Analyze lesson plans submitted by teachers and extract key fields, such as lesson times and required texts.

• Analyze learning content and provide students with pictures that represent commonly used words or phrases in the text

The solution must minimize development effort.

Which Azure service should you recommend for each task? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

For extracting structured fields (lesson times, required texts) from lesson plans, Azure AI Document Intelligence provides prebuilt and custom models for forms and documents. For showing pictures that represent commonly used words in a text, Immersive Reader includes a picture dictionary that displays images for common words with no model training. Azure AI Custom Vision classifies images or detects objects in them; it does not return a picture for a given word, and it would require collecting and labeling training images.

Correct Options:

First task (Analyze lesson plans): Azure AI Document Intelligence

Document Intelligence extracts key-value pairs, tables, and structured fields from documents. For lesson plans, you can train a custom model or use the prebuilt layout model to extract lesson times and required texts. This minimizes development effort compared to building custom OCR and parsing.

Second task (Provide pictures for words/phrases): Immersive Reader

Immersive Reader is a reading-assistance service whose features include displaying pictures for commonly used words, alongside reading aloud and translation. Embedding it in the app gives students picture support directly, with no training data or custom model.

Why Other Options Are Incorrect:

First task alternatives:

Azure AI Search – Indexes and searches content but does not extract structured fields from documents.

Azure AI Custom Vision – For image classification, not document field extraction.

Immersive Reader – Improves text readability, does not extract fields.

Second task alternatives:

Azure AI Search – Can store and retrieve images but does not automatically associate words with pictures.

Azure AI Custom Vision – Assigns labels to images; it does not supply a picture for a given word, and training a model adds development effort.

Azure AI Document Intelligence – For document extraction, not image-word association.

Reference:

Microsoft Learn: "Document Intelligence – Custom models" – Extract key fields from documents.

Microsoft Learn: "Immersive Reader – Overview" – Features include displaying pictures for common words.

You have an Azure subscription that contains an Azure OpenAI resource named AI1 and an Azure AI Content Safety resource named CS1.

You build a chatbot that uses AI1 to provide generative answers to specific questions and CS1 to check input and output for objectionable content.

You need to optimize the content filter configurations by running tests on sample questions.

Solution: From Content Safety Studio, you use the Moderate text content feature to run the tests.

Does this meet the requirement?

A. Yes

B. No

Explanation:

Content Safety Studio's Moderate text content feature allows you to input sample text, test content moderation settings, and see results (hate, sexual, violence, self-harm categories with severity levels). This is the correct tool for running tests on sample questions to optimize content filter configurations before deploying to production.

Correct Option:

A. Yes

The "Moderate text content" feature in Content Safety Studio provides an interactive testing environment. You can input sample questions, see the moderation results, and adjust thresholds or blocklists. This allows you to optimize content filter configurations based on test outcomes, meeting the requirement.

Why This Is Correct:

Moderate text content – Designed for testing text against content safety categories.

Sample questions – You can paste any sample text and immediately see the analysis.

Optimize configurations – Test different thresholds, blocklists, and categories iteratively.
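The iterative tuning described above amounts to comparing per-category severity results against candidate thresholds. The sketch below works on already-returned severity values and does not call the service; the function name, the threshold numbers, and the sample severities are hypothetical.

```python
from typing import Dict, List, Optional

# Categories named in the explanation above; threshold values are hypothetical.
DEFAULT_THRESHOLDS: Dict[str, int] = {
    "hate": 2,
    "sexual": 2,
    "violence": 4,
    "self-harm": 2,
}


def flagged_categories(
    severities: Dict[str, int],
    thresholds: Optional[Dict[str, int]] = None,
) -> List[str]:
    """List the categories whose severity meets or exceeds its threshold."""
    active = DEFAULT_THRESHOLDS if thresholds is None else thresholds
    return sorted(
        category
        for category, limit in active.items()
        if severities.get(category, 0) >= limit
    )
```

Re-running the same sample results against a stricter or looser threshold table shows which sample questions would start or stop being blocked.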

Reference:

Microsoft Learn: "Content Safety Studio – Moderate text content" – Test text moderation interactively.

You are building an app that uses a Language Understanding model to analyze text files.

You need to ensure that the app can detect the following entities:

• Temperatures

• Currency values

• Email addresses

• Telephone numbers

The solution must minimize development effort.

Which model capability should you use?

A. list entities

B. learned entities

C. utterances

D. regular expression components

E. pre-built entity components

Explanation:

Temperatures, currency values, email addresses, and telephone numbers are common data types that follow predictable patterns. Azure AI Language provides pre-built entity components that recognize these entities out-of-the-box without training. Using pre-built entities minimizes development effort compared to custom regex or learned entities.

Correct Option:

E. pre-built entity components

Pre-built entities (e.g., Temperature, Currency, Email, PhoneNumber) are ready-to-use recognizers that detect common data types. You simply enable them in your Language Understanding model; no training or labeling is required. This minimizes development effort significantly.

Incorrect Options:

A. list entities – List entities require manually defining all possible values (e.g., ["hot", "warm", "cold"]). They are impractical for temperatures, currency values, emails, or phone numbers because these have infinite or pattern-based variations.

B. learned entities – Learned (machine-learned) entities require labeling examples in training data. This increases development effort and is unnecessary when pre-built entities exist.

C. utterances – Utterances are example phrases for training intents, not for entity detection. They are not a model capability for entity recognition.

D. regular expression components – Regex entities can detect patterns (e.g., email regex), but you must write and maintain the regex patterns for each entity type. Pre-built entities are easier and more robust.
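To make the maintenance cost of option D concrete, here is a rough sketch of hand-written patterns for two of the four entity types. Both patterns are deliberately simplistic assumptions that miss many real-world formats, which is the effort pre-built entity components avoid.

```python
import re
from typing import Dict, List

# Simplified illustrative patterns; real inputs vary far more than this.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
TEMPERATURE = re.compile(r"-?\d+(?:\.\d+)?\s?°\s?[CF]\b")


def find_entities(text: str) -> Dict[str, List[str]]:
    """Return every email address and temperature the patterns match."""
    return {
        "email": EMAIL.findall(text),
        "temperature": TEMPERATURE.findall(text),
    }
```

Currency values and telephone numbers would each need further patterns covering symbols, separators, and regional formats.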

Reference:

Microsoft Learn: "Pre-built entities in Language Understanding" – Includes Temperature, Currency, Email, PhoneNumber, etc.

You are building an app that will provide users with definitions of common AI terms.

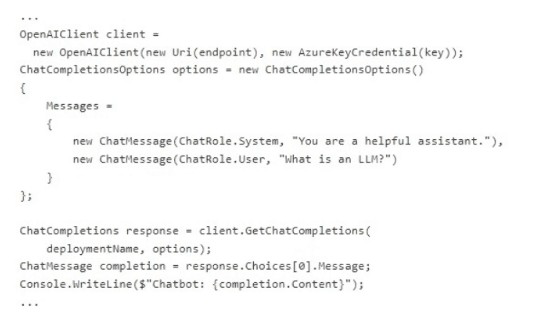

You create the following C# code.

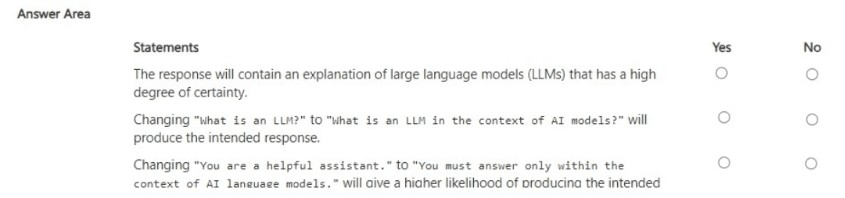

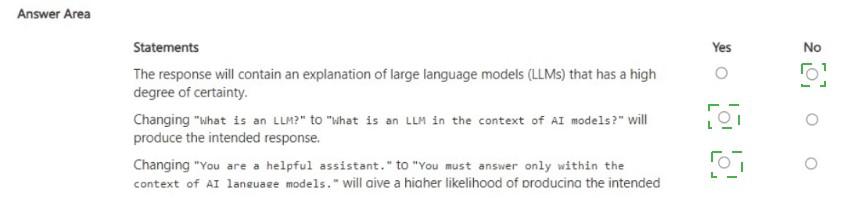

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Explanation:

The code uses Azure OpenAI with a system message "You are a helpful assistant." and a user prompt "What is an LLM?". The model will likely return a definition of LLM (Large Language Model). However, there is no "high degree of certainty" guarantee. Changing the prompt to be more specific ("in the context of AI models") helps. Changing the system message to restrict context ("only within AI language models") also increases likelihood of relevant responses.

Correct Answers:

Statement 1: The response will contain an explanation of large language models (LLMs) that has a high degree of certainty.

No – The model will likely provide a definition of LLMs, but there is no guarantee of "high degree of certainty." Generative models can produce varying responses, and certainty is not a measurable output. The statement overstates reliability.

Statement 2: Changing "what is an LLM?" to "what is an LLM in the context of AI models?" will produce the intended response.

Yes – Making the prompt more specific (adding "in the context of AI models") reduces ambiguity and increases the likelihood that the model provides the intended definition within the AI domain. This is a good prompt engineering practice.

Statement 3: Changing "You are a helpful assistant." to "You must answer only within the context of AI language models." will give a higher likelihood of producing the intended response.

Yes – Constraining the system message to limit the response context to "AI language models" focuses the model on the relevant domain, reducing the chance of off-topic or overly general answers. This improves the likelihood of the intended response.

Reference:

Microsoft Learn: "Azure OpenAI – Prompt engineering" – Specific prompts and constrained system messages improve response relevance.
