Topic 3: Misc. Questions

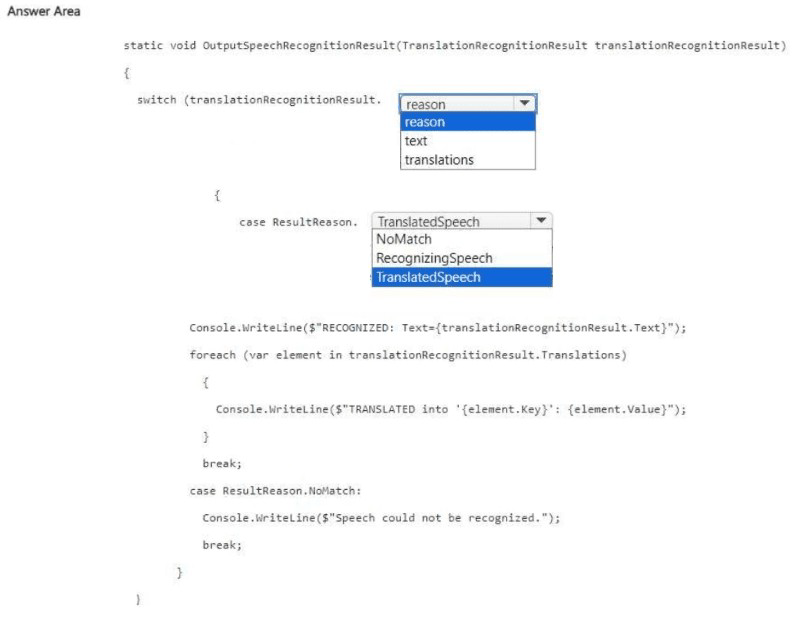

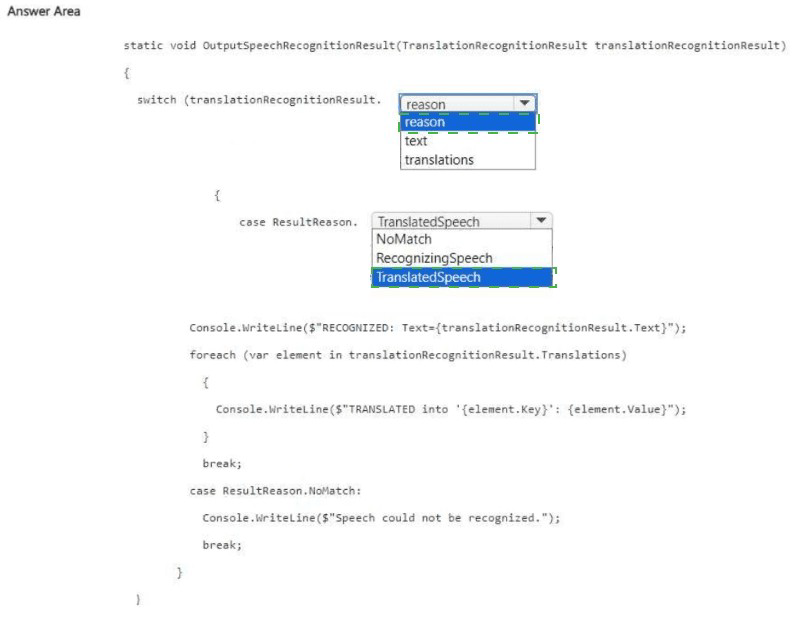

You need to build an app that will use the Azure AI Speech service to translate audio files.

How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation

The code processes the result of a speech translation recognition. The TranslationRecognitionResult object has a Reason property that indicates the outcome; for a successful translation, Reason is ResultReason.TranslatedSpeech. The Text property holds the recognized speech in the source language, and the Translations dictionary maps each target language to its translated text.

Correct Options

First blank (after translationRecognitionResult.): Reason

The Reason property (of type ResultReason) indicates whether the recognizer produced a final translation (TranslatedSpeech), could not recognize any speech (NoMatch), or returned an interim result (RecognizingSpeech). This is the correct property to switch on.

Second blank (case): TranslatedSpeech

When the recognition successfully translates the speech, ResultReason.TranslatedSpeech is returned. This case block handles the successful translation.
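A minimal Python sketch of the same switch logic follows. It does not call the Speech service: the ResultReason enum below is a local stand-in that copies only the member names used here (the Python Speech SDK, azure-cognitiveservices-speech, exposes these members on its own ResultReason, and a translation result there carries reason, text, and translations attributes). handle_translation is a hypothetical helper.

```python
from enum import Enum, auto


class ResultReason(Enum):
    """Local stand-in for the SDK's ResultReason (only the members used here)."""
    TranslatingSpeech = auto()
    TranslatedSpeech = auto()
    NoMatch = auto()
    Canceled = auto()


def handle_translation(reason, text, translations):
    """Branch on the result reason, mirroring the switch in the exam code."""
    if reason is ResultReason.TranslatedSpeech:
        # text holds the recognized source-language speech;
        # translations maps each target language code to its translated text.
        lines = [f"Recognized: {text}"]
        for language, translated in translations.items():
            lines.append(f"{language}: {translated}")
        return lines
    if reason is ResultReason.NoMatch:
        return ["Speech could not be recognized."]
    # Interim (TranslatingSpeech) or Canceled results are not final translations.
    return [f"No final translation (reason: {reason.name})."]


print(handle_translation(ResultReason.TranslatedSpeech, "Hello", {"fr": "Bonjour"}))
# ['Recognized: Hello', 'fr: Bonjour']
```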

Why Other Options Are Incorrect

text – Not a property that indicates the result reason.

translations – Contains the translation outputs but does not indicate success/failure.

RecognizingSpeech – Indicates an interim result (partial recognition), not the final result.

NoMatch – Used in the fallback case, not the success case.

Reference

Microsoft Learn: Speech Translation – Recognition results – TranslationRecognitionResult.Reason with ResultReason.TranslatedSpeech for success.

You have a library that contains 1,000 video files.

You need to perform sentiment analysis on the videos by using an Azure AI Content Understanding project. The solution must minimize development effort.

Which type of template should you use for the project?

A. Video shot analysis

B. Media asset management

C. Advertising

Explanation

Content Understanding projects use templates to define the extraction schema. For analyzing sentiment in video files, the Media asset management template is designed to process video assets and extract metadata including sentiment, key moments, and descriptive attributes. This minimizes development effort compared to building a custom schema from scratch.

Correct Option

B. Media asset management

This template is built for processing video files (e.g., libraries, archives, media assets). It includes pre-configured capabilities for sentiment analysis, scene detection, and content summarization, reducing the need for custom configuration.

Why Other Options Are Incorrect

A. Video shot analysis –

Focuses on technical shot detection (cuts, transitions, camera movement), not sentiment analysis.

C. Advertising –

Optimized for ad creative analysis (product placement, brand logos, call-to-action), not general video sentiment.

Reference

Microsoft Learn: Azure AI Content Understanding – Templates – Media asset management template supports sentiment analysis for video libraries.

You are building an app that will use Azure AI Language to extract meaning from text messages.

You need to provide additional context by adding references to supporting articles in Wikipedia.

What should you use?

A. entity linking

B. custom named entity recognition (NER)

C. Azure AI Content Safety

D. key phrase extraction

Explanation

Entity linking identifies named entities in text and links them to a knowledge base (Wikipedia). This provides additional context by returning a Wikipedia URL for each linked entity. It is the correct feature for adding references to supporting Wikipedia articles.

Correct Option

A. entity linking

Entity linking disambiguates entities (e.g., "Paris" as city vs person) and returns a url property pointing to a Wikipedia article. This gives users direct access to supporting articles, fulfilling the requirement.
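To show where the Wikipedia references come from, the sketch below parses a trimmed entity linking response and collects each linked entity's url. The JSON is a hypothetical, shortened example (real responses also carry fields such as id, language, and matches), and wikipedia_links is an illustrative helper, not part of any SDK.

```python
import json

# Hypothetical, trimmed entity linking response (values are illustrative).
SAMPLE_RESPONSE = """
{
  "documents": [
    {
      "id": "1",
      "entities": [
        {"name": "Paris", "dataSource": "Wikipedia",
         "url": "https://en.wikipedia.org/wiki/Paris"},
        {"name": "Eiffel Tower", "dataSource": "Wikipedia",
         "url": "https://en.wikipedia.org/wiki/Eiffel_Tower"}
      ]
    }
  ]
}
"""


def wikipedia_links(response_text):
    """Return (entity name, URL) pairs for entities linked to Wikipedia."""
    response = json.loads(response_text)
    links = []
    for document in response["documents"]:
        for entity in document["entities"]:
            if entity.get("dataSource") == "Wikipedia":
                links.append((entity["name"], entity["url"]))
    return links


for name, url in wikipedia_links(SAMPLE_RESPONSE):
    print(f"{name}: {url}")
```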

Why Other Options Are Incorrect

B. custom NER – Extracts custom entities you define but does not link to Wikipedia. Requires training and does not provide external references.

C. Azure AI Content Safety – Detects objectionable content; does not link to Wikipedia.

D. key phrase extraction – Returns important topics but does not link to external articles.

Reference

Microsoft Learn: Entity linking in Azure AI Language – Returns Wikipedia URLs for recognized entities.

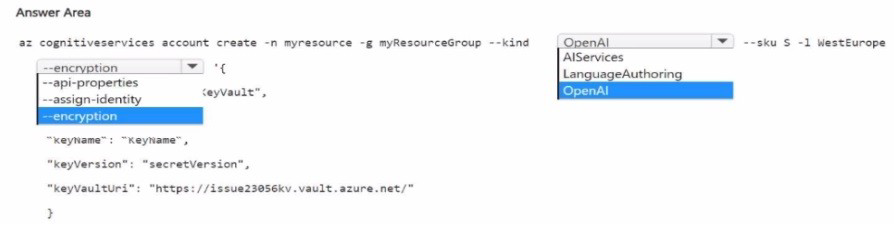

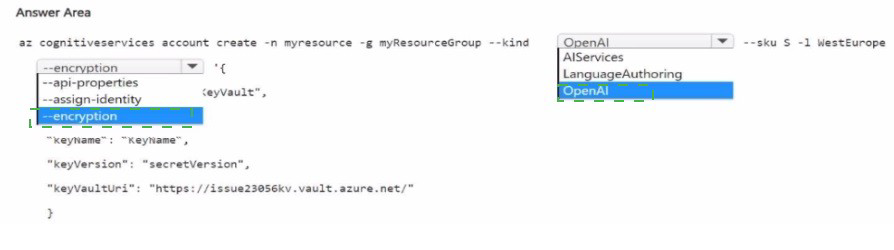

You have an Azure subscription.

You need to create a new resource that will generate fictional stories in response to user prompts. The solution must ensure that the resource uses a customer-managed key to protect data.

How should you complete the script? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation

To generate fictional stories, you need an Azure OpenAI resource. To use a customer-managed key (CMK), you supply the key vault URI, key name, and key version as a JSON object in the --encryption parameter, and you enable a managed identity with --assign-identity so that the resource can read the key from Azure Key Vault.

Correct Options

First blank (--kind): OpenAI

The resource kind for Azure OpenAI is OpenAI. This creates a resource capable of generating text responses (fictional stories).

Second blank: --assign-identity

Required to assign a system-assigned managed identity to the resource. The identity is used to authenticate to Azure Key Vault for accessing the customer-managed key.

Third blank (key properties):

--encryption, with keySource set to Microsoft.KeyVault and the key vault URI, key name, and key version in keyVaultProperties.

The completed parameter looks like this:

--encryption '{"keySource": "Microsoft.KeyVault", "keyVaultProperties": {"keyName": "KeyName", "keyVersion": "secretVersion", "keyVaultUri": "https://issue23056kv.vault.azure.net/"}}'

Why Other Options Are Incorrect

Omission of --assign-identity – Without a managed identity, the resource cannot access Key Vault.

--api-properties – Sets API-specific properties required by certain resource kinds; it does not configure encryption.

Incorrect key properties format – Missing keyName or keyVersion would cause CMK setup to fail.

Using --keyVaultUri alone – The full syntax requires keyVaultUri, keyName, and keyVersion.

Reference

Microsoft Learn: az cognitiveservices account create – Use --assign-identity and --encryption with keySource Microsoft.KeyVault and keyVaultProperties (keyVaultUri, keyName, keyVersion).
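As a sketch, the Python below assembles the az cognitiveservices account create arguments for an Azure OpenAI resource protected by a customer-managed key, following the --encryption JSON shape shown in the Azure CLI reference. It only builds the argument list and does not run the command; the resource name story-gen, the resource group rg1, the region eastus, and the S0 SKU are assumptions for illustration. In practice, the resource's managed identity also needs permission to use the key in Key Vault.

```python
import json
import shlex


def build_create_command(name, resource_group, location,
                         key_vault_uri, key_name, key_version):
    """Build (but do not run) an az CLI call for an Azure OpenAI resource
    encrypted with a customer-managed key."""
    encryption = {
        "keySource": "Microsoft.KeyVault",
        "keyVaultProperties": {
            "keyVaultUri": key_vault_uri,
            "keyName": key_name,
            "keyVersion": key_version,
        },
    }
    return [
        "az", "cognitiveservices", "account", "create",
        "--name", name,
        "--resource-group", resource_group,
        "--kind", "OpenAI",        # Azure OpenAI resource kind
        "--sku", "S0",             # assumed SKU for this sketch
        "--location", location,
        "--assign-identity",       # system-assigned identity used to read the key
        "--encryption", json.dumps(encryption),
    ]


cmd = build_create_command("story-gen", "rg1", "eastus",
                           "https://issue23056kv.vault.azure.net/",
                           "KeyName", "secretVersion")
print(shlex.join(cmd))
```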


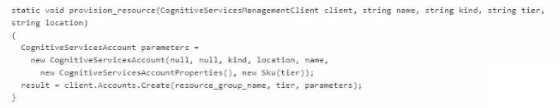

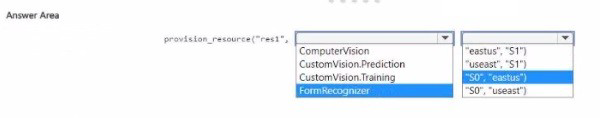

Explanation

The function provision_resource creates a Cognitive Services resource. The arguments must be passed in the order that the function signature in the exhibit defines; the selected answer implies the order (name, kind, location, tier). To create a Computer Vision resource named res1 in East US with the S1 pricing tier, the kind is "ComputerVision", the location is "eastus", and the tier is "S1".

Correct Option

Kind: "ComputerVision"

Location: "eastus"

Tier: "S1"

From the answer area, the correct selection is:

provision_resource("res1", "ComputerVision", "eastus", "S1")
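The exhibit that defines provision_resource is not reproduced here, so the sketch below is a hypothetical stand-in that assumes the parameter order (name, kind, location, tier) used by the call above. Instead of calling Azure, it validates the arguments against small lookup tables (also assumptions, covering only this question) and returns a description of the resource.

```python
# Hypothetical lookup tables covering only this question (assumptions).
ALLOWED_TIERS = {"ComputerVision": {"F0", "S1"}}
KNOWN_REGIONS = {"eastus", "westus", "westeurope"}


def provision_resource(name, kind, location, tier):
    """Hypothetical stand-in: validate the arguments and describe the resource
    that a real implementation would ask Azure Resource Manager to create."""
    if kind not in ALLOWED_TIERS:
        raise ValueError(f"unsupported kind: {kind}")
    if location not in KNOWN_REGIONS:
        raise ValueError(f"unknown region: {location}")
    if tier not in ALLOWED_TIERS[kind]:
        raise ValueError(f"tier {tier} is not offered for {kind}")
    return {"name": name, "kind": kind, "location": location, "sku": {"name": tier}}


print(provision_resource("res1", "ComputerVision", "eastus", "S1"))
```

Swapping in "useast" or "S0" raises ValueError, mirroring the distractors listed below.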

Why Other Options Are Incorrect

CustomVision.Prediction / CustomVision.Training – Not Computer Vision; these are for Custom Vision.

FormRecognizer – Different service.

"useast" – Invalid region (should be "eastus").

"S0" – Not a pricing tier for Computer Vision, which offers F0 (free) and S1 (standard); the free tier is F0, not S0.

Reference

Microsoft Learn: Cognitive Services SKUs – Computer Vision uses "ComputerVision" kind, "S1" tier.

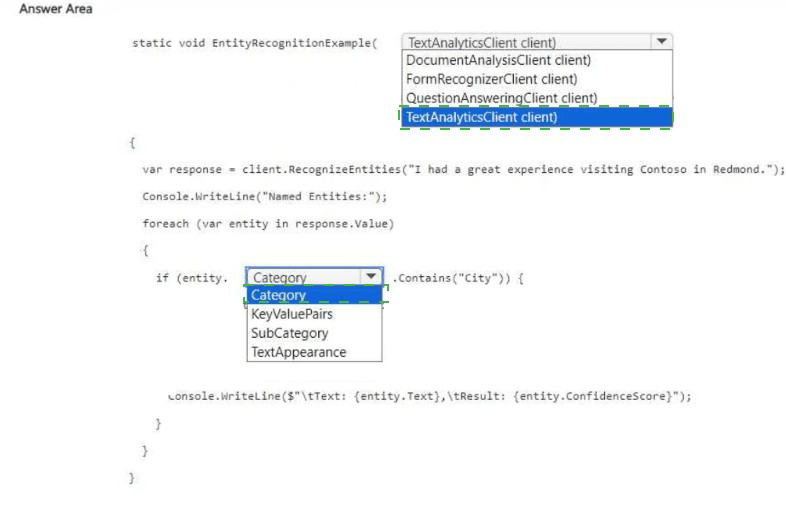

You have 100,000 documents.

You are building an app that will identify city names in each document by using Azure AI Language.

You need to test the detection client.

How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

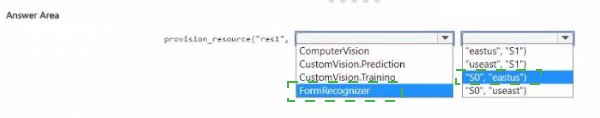

Explanation

The task is to identify city names using Azure AI Language. The correct client for named entity recognition (NER) is TextAnalyticsClient. The response contains entities with a Category property; for city names, you check if the Category equals "City" or "Location" (depending on the model version). The code should filter on Category.

Correct Options

First blank (client declaration): TextAnalyticsClient client

Named entity recognition (NER) for cities is part of the Text Analytics (Azure AI Language) client library. The other clients (DocumentAnalysisClient, FormRecognizerClient, QuestionAnsweringClient) are for different services.

Second blank (after entity.): Category

The RecognizeEntities method returns a collection of CategorizedEntity objects. Each entity has a Category property (e.g., "Person", "Location", "City"). You compare entity.Category to "City" to filter city names.
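The filtering step can be sketched in plain Python over NER results that have already been returned. With the Python client library (azure-ai-textanalytics), client.recognize_entities(documents) returns one result per document, and each entity exposes category, subcategory, and text; the dictionaries below are a hypothetical stand-in for those results so the sketch runs offline. Whether a city arrives as "City" or as "Location" depends on the model version, so the accepted categories are a parameter of the hypothetical city_candidates helper.

```python
# Hypothetical, trimmed NER results: one list of entities per document.
SAMPLE_RESULTS = [
    [
        {"text": "Seattle", "category": "Location", "subcategory": "GPE"},
        {"text": "Maria", "category": "Person"},
    ],
    [
        {"text": "Paris", "category": "City"},
        {"text": "Contoso", "category": "Organization"},
    ],
]

CITY_CATEGORIES = {"City", "Location"}


def city_candidates(results, categories=CITY_CATEGORIES):
    """Collect entity texts whose category marks them as a city or location."""
    found = []
    for entities in results:
        for entity in entities:
            if entity["category"] in categories:
                found.append(entity["text"])
    return found


print(city_candidates(SAMPLE_RESULTS))  # ['Seattle', 'Paris']
```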

Why Other Options Are Incorrect

DocumentAnalysisClient / FormRecognizerClient – For Document Intelligence (form extraction), not text entity recognition.

QuestionAnsweringClient – For QnA Maker / custom question answering.

KeyValuePairs – Not a property of CategorizedEntity.

SubCategory – Available for some entities (e.g., "USState" under "Location"), but not the primary category.

TextAppearance – For handwriting detection, not category filtering.

Reference

Microsoft Learn: Text Analytics – Entity Recognition – Use TextAnalyticsClient.RecognizeEntities.

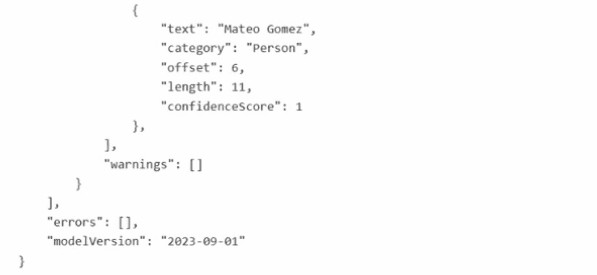

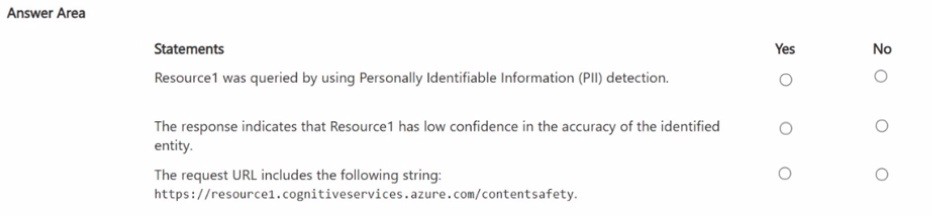

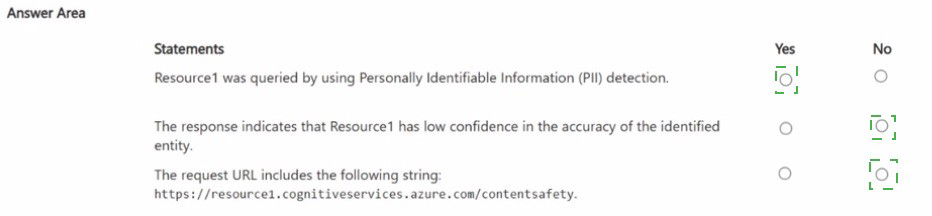

You have an Azure subscription that contains an Azure AI Language service resource named Resource1. You query Resource1 by running a cURL command and receive the following response.

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Explanation

The response shows a "category": "Person" entity with "confidenceScore": 1. This is treated as Named Entity Recognition (NER) output rather than PII detection, which would typically add categories like "PhoneNumber", "Email", or "SSN" and a redactedText field. A confidence score of 1 (100%) indicates high confidence, not low confidence. The /contentsafety path in Statement 3 belongs to Azure AI Content Safety, not to Language service NER.
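The three checks can be sketched in Python against a hypothetical response body (the exhibit's exact JSON is not reproduced here). summarize is an illustrative helper; the 0.5 cut-off for "low" confidence is an arbitrary assumption, since the service returns only the score and leaves thresholds to the caller. The presence of a redactedText field is used to tell PII output from NER output.

```python
import json

# Hypothetical NER-style response with a single Person entity.
SAMPLE_BODY = """
{
  "documents": [
    {"id": "1",
     "entities": [
       {"text": "John", "category": "Person", "offset": 0, "length": 4,
        "confidenceScore": 1.0}
     ],
     "warnings": []}
  ],
  "errors": []
}
"""


def summarize(body, low_confidence=0.5):
    """Return (operation, category, confidence level) for each entity."""
    response = json.loads(body)
    summary = []
    for doc in response["documents"]:
        # PII detection adds a redactedText field to each document; NER does not.
        operation = "PII" if "redactedText" in doc else "NER"
        for entity in doc["entities"]:
            level = "low" if entity["confidenceScore"] < low_confidence else "high"
            summary.append((operation, entity["category"], level))
    return summary


print(summarize(SAMPLE_BODY))  # [('NER', 'Person', 'high')]
```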

Correct Answers

Statement 1: Resource1 was queried by using Personally Identifiable Information (PII) detection.

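No – The answer treats the response as standard NER output: it lacks PII-specific categories such as "PhoneNumber", "Email", or "SSN". PII detection can also return a Person category, so the category alone is not decisive; a PII response would also include a redactedText field for each document.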

Statement 2: The response indicates that Resource1 has low confidence in the accuracy of the identified entity.

No – "confidenceScore": 1 means 100% confidence, which is high confidence, not low.

Statement 3: The request URL includes the following string: https://resource1.cognitiveservices.azure.com/contentsafety.

No – The /contentsafety endpoint is for Azure AI Content Safety, not for Language service NER. The correct endpoint for NER is /language/.../entities/recognition/general or similar.

Reference

Microsoft Learn: Language service NER – Returns categories like Person, Location, Organization with confidence scores.

You have an Azure OpenAI model named AI1.

You are building a web app named App1 by using the Azure OpenAI SDK. You need to configure App1 to connect to AI1. What information must you provide?

A. the endpoint, key, and model name

B. the deployment name, endpoint, and key

C. the endpoint, key, and model type

D. the deployment name, key, and model name

Explanation

To connect to an Azure OpenAI model using the Azure OpenAI SDK, you must provide the endpoint (resource URL), the API key (subscription key for authentication), and the deployment name (the name you gave when deploying the model, e.g., "gpt-35-turbo-deployment"). The model name alone is insufficient because multiple deployments of the same model can exist.

Correct Option

B. the deployment name, endpoint, and key

Endpoint – The resource URL (e.g., https://your-resource.openai.azure.com/).

Key – The API key for authentication (passed in the api-key header).

Deployment name – Identifies which deployed model instance to call (e.g., gpt-35-turbo or gpt-4 deployment).
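The three values map directly onto the REST request the SDK sends. The sketch below builds, without sending, a chat completions request using only the standard library; the endpoint, key, deployment name, and api-version value are placeholders to replace with your own. With the openai Python package, the same values go to AzureOpenAI(azure_endpoint=..., api_key=..., api_version=...), and the deployment name is passed as the model argument.

```python
import json
import urllib.request


def build_chat_request(endpoint, key, deployment, messages,
                       api_version="2024-02-01"):
    """Build (but do not send) a chat completions request for one deployment."""
    # The deployment name, not the model name, is part of the URL path.
    url = (f"{endpoint.rstrip('/')}/openai/deployments/{deployment}"
           f"/chat/completions?api-version={api_version}")
    body = json.dumps({"messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"api-key": key, "Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request(
    "https://your-resource.openai.azure.com/",
    "<your-key>",
    "gpt-35-turbo-deployment",
    [{"role": "user", "content": "Hello"}],
)
print(req.full_url)
```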

Why Other Options Are Incorrect

A. the endpoint, key, and model name – The model name (e.g., "gpt-35-turbo") is not enough; you need the deployment name, which can be different from the model name.

C. the endpoint, key, and model type – "Model type" is vague and not a required parameter in the SDK.

D. the deployment name, key, and model name – Missing the endpoint; the SDK requires the endpoint to locate the resource.

Reference

Microsoft Learn: Azure OpenAI SDK – Authentication – Requires endpoint, key, and deployment name.

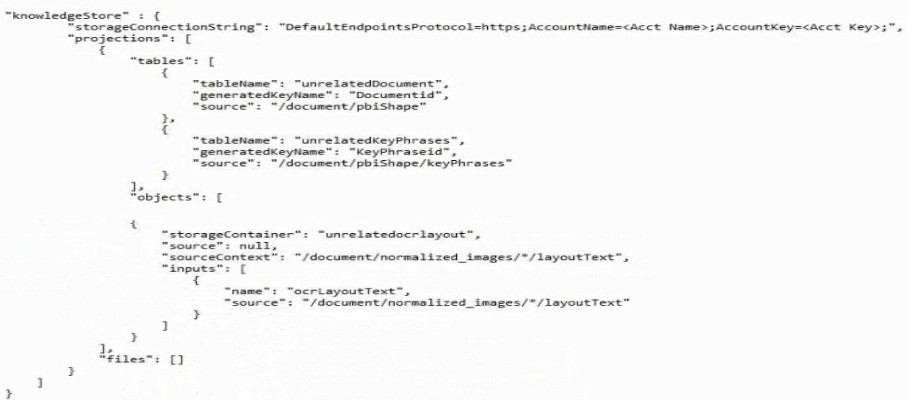

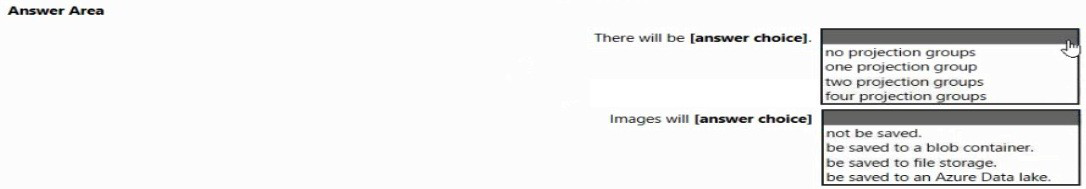

You create a knowledge store for Azure Cognitive Search by using the following JSON.

Use the drop-down menus to select the answer choice that completes each statement based on the information presented in the graphic. NOTE: Each correct selection is worth one point.

Explanation

The JSON defines a "projections" array containing one projection group (the group that includes tables, objects, and files). The "files": [] array is empty, but the "objects" section specifies a "storageContainer" for saving layout text to blob storage. Images are not saved because "files": [] is empty and no projection explicitly saves images.

Correct Answers

First blank: one projection group

The "projections" array has a single object (the group containing tables, objects, and files). That counts as one projection group.

Second blank: not be saved

The "files" array is empty ("files": []), meaning no file projections are defined. The "objects" projection saves text (/document/normalized_images/*/layoutText) but not the actual images. Therefore, images are not saved.
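Both answers can be checked mechanically. The sketch below parses a hypothetical knowledge store definition with the structure described above (the container name and connection string are placeholders, and the table entries are omitted), counts the projection groups, and reports whether any group defines a file projection, which is what would save the images. describe_projections is an illustrative helper.

```python
import json

# Hypothetical definition mirroring the structure described above.
KNOWLEDGE_STORE = """
{
  "storageConnectionString": "<connection-string>",
  "projections": [
    {
      "tables": [],
      "objects": [
        {"storageContainer": "layout-text",
         "source": "/document/normalized_images/*/layoutText"}
      ],
      "files": []
    }
  ]
}
"""


def describe_projections(definition):
    """Return (number of projection groups, whether any images are saved)."""
    store = json.loads(definition)
    groups = store["projections"]
    # Images are saved only through file projections; an empty list saves none.
    saves_images = any(group.get("files") for group in groups)
    return len(groups), saves_images


print(describe_projections(KNOWLEDGE_STORE))  # (1, False)
```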

Why Other Options Are Incorrect

Two / four projection groups – Only one group is present.

be saved to a blob container / file storage / Azure Data Lake – No file projection is configured, and objects save only text, not images.

Reference

Microsoft Learn: Knowledge store projections – A projection group can contain tables, objects, and files. Empty "files": [] means no file projections.

You have the following files:

• File1.pdf

• File2.jpg

• File3.docx

• File4.webp

• File5.png

Which files can you analyze by using Azure AI Content Understanding?

A. File1.pdf and File3.docx only

B. File1.pdf, File2.jpg, and File5.png only

C. File1.pdf, File2.jpg, and File3.docx only

D. File1.pdf, File2.jpg, File3.docx, and File5.png only

E. File1.pdf, File2.jpg, File3.docx, File4.webp, and File5.png

Explanation

Azure AI Content Understanding supports common document and image formats. Based on typical supported formats for document analysis services (e.g., Document Intelligence, Content Understanding), PDF, JPG, DOCX, and PNG are supported. WEBP may not be universally supported in all preview or general availability versions, so it is excluded from the correct answer.

Correct Option

D. File1.pdf, File2.jpg, File3.docx, and File5.png only

PDF (File1) – Standard document format, supported.

JPG (File2) – Common image format, supported.

DOCX (File3) – Microsoft Word format, supported.

PNG (File5) – Common image format, supported.

WEBP (File4) – Not universally supported in all Azure AI Content Understanding implementations, so it is excluded.
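The selection rule used in this answer can be expressed as an extension filter. The set of supported extensions below is taken from this explanation, not from an authoritative service list, so check the current Content Understanding documentation before relying on it; analyzable is a hypothetical helper.

```python
from pathlib import PurePath

# Extensions treated as supported in this answer (an assumption, not the
# service's official list).
SUPPORTED = {".pdf", ".jpg", ".jpeg", ".docx", ".png"}


def analyzable(filenames, supported=SUPPORTED):
    """Keep only the files whose extension is in the supported set."""
    return [name for name in filenames
            if PurePath(name).suffix.lower() in supported]


files = ["File1.pdf", "File2.jpg", "File3.docx", "File4.webp", "File5.png"]
print(analyzable(files))  # ['File1.pdf', 'File2.jpg', 'File3.docx', 'File5.png']
```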

Why Other Options Are Incorrect

A – Missing JPG and PNG, which are supported.

B – Missing DOCX, which is supported.

C – Missing PNG, which is supported.

E – Includes WEBP, which is typically not supported.

Reference

Microsoft Learn: Azure AI Content Understanding – Supported formats – PDF, JPG, PNG, DOCX, and others; WEBP may not be listed.
