Topic 3: Misc. Questions

You have a product knowledgebase that contains multiple PDF documents.

You need to build a chatbot that will provide responses based on data in the knowledgebase. The solution must minimize development effort and costs. What should you include in the solution?

A. Azure Language in Foundry Tools custom question answering

B. Azure Language in Foundry Tools conversational language understanding (CLU)

C. Azure AI language detection

D. Azure OpenAI

Explanation:

For a product knowledgebase with multiple PDF documents, the goal is to build a chatbot that answers questions based on that data while minimizing development effort and cost. Custom question answering (part of Azure AI Language) is specifically designed to extract question-answer pairs from existing documents (including PDFs) and serve responses without training a full conversational model.

Correct Option:

A. Azure Language in Foundry Tools custom question answering

Custom question answering (formerly QnA Maker) can ingest PDFs and extract Q&A pairs directly.

It requires no complex model training or large infrastructure; minimal code and low operational cost.

Provides built-in ranking and confidence scoring for responses from static knowledgebases.

Incorrect Options:

B. Conversational language understanding (CLU) –

Designed for intent recognition and entity extraction in dialogue, not for retrieving answers from document content. Overkill and requires labeled training data.

C. Azure AI language detection –

Only identifies the language of input text; has no capability to answer questions or retrieve content from PDFs.

D. Azure OpenAI –

Powerful but requires prompt engineering, chunking, embedding, and vector search setup (more development effort and higher cost). Not minimal effort/cost compared to custom QA.

Reference:

Azure AI Language – Custom question answering overview – Highlights PDF ingestion, low-code/no-code options, and minimal development effort for document-based chatbots.
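
To make the "minimal code" point concrete, the following is a rough sketch of what a chatbot backend might send to a deployed custom question answering project and how it could pick a reply. The endpoint path, `api-version` value, and response field names (`answers`, `confidenceScore`) follow the REST query API as commonly documented, but treat them as assumptions to verify; the project and deployment names are hypothetical.

```python
import json
from urllib.parse import urlencode


def build_qna_request(endpoint, project_name, deployment_name, question,
                      top=3, threshold=0.5):
    """Return (url, body) for a custom question answering query.

    The path and api-version are assumptions based on the public REST API;
    check them against the current documentation before use.
    """
    query = urlencode({
        "projectName": project_name,
        "deploymentName": deployment_name,
        "api-version": "2021-10-01",
    })
    url = f"{endpoint.rstrip('/')}/language/:query-knowledgebases?{query}"
    body = json.dumps({
        "question": question,
        "top": top,
        "confidenceScoreThreshold": threshold,
    })
    return url, body


def best_answer(response, fallback="Sorry, I could not find an answer."):
    """Pick the highest-confidence answer from a parsed JSON response."""
    answers = response.get("answers", [])
    if not answers:
        return fallback
    top = max(answers, key=lambda a: a.get("confidenceScore", 0.0))
    return top.get("answer", fallback)
```

The request would be sent as an HTTP POST with the resource key in the `Ocp-Apim-Subscription-Key` header. No model training step appears anywhere in the flow, which is the property the question is testing.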

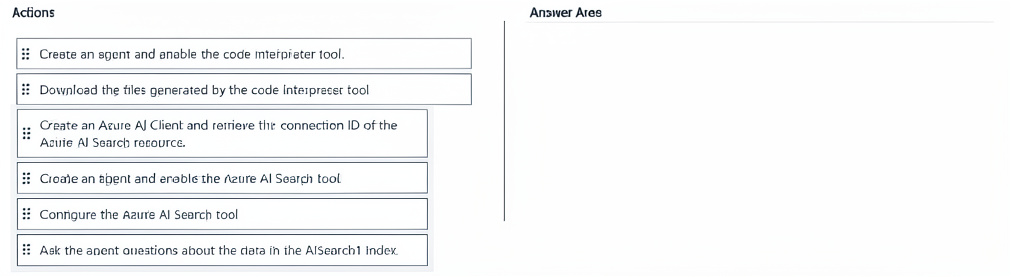

You have an Azure subscription that contains an Azure AI Search instance named AISearch1. AISearch1 contains an index that includes a vector.

You need to perform the following actions:

• Deploy a new agent by using the Azure AI Agent Service.

• Connect the AISearch1 index to the new agent.

• Validate the integration of the index and the agent.

Which four actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Explanation:

To connect an Azure AI Agent Service agent to an AI Search index, you first establish a client and get the search resource’s connection ID. Then you create the agent with the search tool enabled, configure that tool with the specific index, and finally query the agent to validate the integration.

Correct Sequence Details:

Create an Azure AI Client and retrieve the connection ID – Required to authenticate and identify the specific AI Search resource before any agent can use it.

Create an agent and enable the Azure AI Search tool – The agent must be provisioned with the “Azure AI Search” tool capability to access external vector indexes.

Configure the Azure AI Search tool – This step binds the agent to AISearch1’s index by passing the connection ID and index name.

Ask the agent questions about the data in the AISearch1 index – Sending queries tests whether the agent correctly retrieves and uses vector search results, validating integration.

Incorrect/Misplaced Actions (not used in this sequence):

Create an agent and enable the code interpreter tool – Not relevant; code interpreter is for executing Python code, not connecting to AI Search.

Download the files generated by the code interpreter tool – This pertains to file output, not search index integration.

Reference:

Azure AI Agent Service – Use Azure AI Search Tool – The documented workflow: create client → get connection ID → create agent with search tool → configure tool with index → query agent.

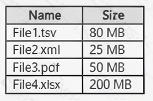

You have a custom Azure OpenAI model.

You have the files shown in the following table.

You need to prepare training data for the model by using the OpenAI CLI data preparation tool. Which files can you upload to the tool?

A. File1.tsv only

B. File2.xml only

C. File3.pdf only

D. File4.xlsx only

E. File1.tsv and File4.xlsx only

F. File1.tsv, File2.xml, and File4.xlsx only

G. File1.tsv, File2.xml, File3.pdf, and File4.xlsx

Explanation:

The OpenAI CLI data preparation tool for fine‑tuning expects structured, text‑based training data in JSONL format (or convertible formats like .tsv or .csv). Among the listed files, only .tsv (Tab‑Separated Values) can be easily transformed into the required prompt‑completion pairs. XML, PDF, and XLSX are not natively supported without complex preprocessing.

Correct Option:

A. File1.tsv only

The tool supports .tsv because it’s a plain‑text tabular format that can be converted to JSONL.

Each row typically represents a prompt‑response example.

File size (80 MB) is within reasonable limits for fine‑tuning data preparation.

Incorrect Options:

B. File2.xml only –

XML is hierarchical and not a flat prompt‑completion structure; the tool does not parse XML directly.

C. File3.pdf only –

PDFs contain binary data, images, and complex layouts; the tool requires raw text, not binary formats.

D. File4.xlsx only –

XLSX is a binary Excel format; the tool cannot read it without conversion to CSV/TSV.

E, F, G –

These combinations include unsupported formats (XML, PDF, XLSX), so they cannot be uploaded directly.

Reference:

OpenAI Fine‑tuning Data Preparation – Supported formats – Only .jsonl, .csv, and .tsv are mentioned as directly usable. Binary or markup formats like .pdf, .xml, .xlsx require prior conversion.
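
The conversion from tabular rows to prompt-completion pairs described above can be illustrated with a short sketch. This is not the CLI tool itself, only a stand-in showing the shape of the transformation; the column names `prompt` and `completion` are assumptions about how the TSV is laid out.

```python
import csv
import io
import json


def tsv_to_jsonl(tsv_text, prompt_col="prompt", completion_col="completion"):
    """Convert TSV text with prompt/completion columns into JSONL text.

    Column names are assumed for illustration; rows missing either
    value are skipped rather than written as incomplete examples.
    """
    reader = csv.DictReader(io.StringIO(tsv_text), delimiter="\t")
    lines = []
    for row in reader:
        prompt = (row.get(prompt_col) or "").strip()
        completion = (row.get(completion_col) or "").strip()
        if not prompt or not completion:
            continue
        lines.append(json.dumps({"prompt": prompt, "completion": completion}))
    return "\n".join(lines)


sample = "prompt\tcompletion\nWhat is the warranty?\tTwo years.\n"
print(tsv_to_jsonl(sample))
# prints {"prompt": "What is the warranty?", "completion": "Two years."}
```

Each output line is one standalone JSON object, which is what makes JSONL convenient for streaming large training sets.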

You have an Azure subscription that contains an Azure OpenAI resource named AI1 and an Azure AI Content Safety resource named CS1.

You build a chatbot that uses AI1 to provide generative answers to specific questions and CS1 to check input and output for objectionable content.

You need to optimize the content filter configurations by running tests on sample questions.

Solution: From Content Safety Studio, you use the Protected material detection feature to run the tests.

Does this meet the requirement?

A. Yes

B. No

Explanation:

The requirement is to optimize content filter configurations by testing sample questions for objectionable content. Azure AI Content Safety offers several detection features. "Protected material detection" specifically identifies copyrighted text or code, not general objectionable content such as hate speech, violence, self-harm, or sexual content. Therefore, using this feature does not meet the stated requirement.

Correct Option:

B. No –

Protected material detection is designed to identify text that may be subject to copyright or protected intellectual property (e.g., song lyrics, code snippets, long-form text excerpts). It does not evaluate input/output for "objectionable content" (hate, violence, etc.). To test objectionable content filters, use the "Moderate text content" feature in Content Safety Studio, which returns severity levels for the hate, sexual, violence, and self-harm categories and lets you adjust the threshold for each one.

Incorrect Option:

A. Yes –

This is incorrect because protected material detection serves a different purpose: identifying potential copyright infringement. It does not assess whether content is offensive, harmful, or inappropriate. Using it to optimize objectionable content filters would test the wrong dimension entirely, leading to invalid results. The requirement explicitly mentions "objectionable content," which falls under hate, violence, and sexual content analysis.

Reference:

Microsoft Azure AI Content Safety Documentation – "Protected Material Detection" (https://learn.microsoft.com/en-us/azure/ai-services/content-safety/concepts/protected-material). Microsoft states: "Protected material detection is designed to detect text that may be subject to copyright or other protection." For objectionable content, use "Hate, Sexual, Violence, Self-harm" severity analysis. Content Safety Studio offers separate tabs for each feature.
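
As a rough illustration of what "optimizing filter configurations on sample questions" means in practice, the sketch below applies per-category severity thresholds to a text analysis result. The response shape (`categoriesAnalysis` with `category` and `severity`) is assumed from the text analysis API; the threshold values and the sample result are hypothetical.

```python
def blocked_categories(analysis, thresholds):
    """Return the categories whose severity meets or exceeds its threshold.

    `analysis` is a parsed text-analysis response (shape assumed);
    `thresholds` maps category name to the lowest severity to block.
    """
    blocked = []
    for item in analysis.get("categoriesAnalysis", []):
        category = item.get("category")
        severity = item.get("severity", 0)
        limit = thresholds.get(category)
        if limit is not None and severity >= limit:
            blocked.append(category)
    return sorted(blocked)


# Hypothetical result for one sample question.
sample_response = {
    "categoriesAnalysis": [
        {"category": "Hate", "severity": 2},
        {"category": "SelfHarm", "severity": 0},
        {"category": "Sexual", "severity": 0},
        {"category": "Violence", "severity": 4},
    ]
}

# Hypothetical filter configuration under test.
thresholds = {"Hate": 4, "SelfHarm": 2, "Sexual": 4, "Violence": 4}

print(blocked_categories(sample_response, thresholds))
# prints ['Violence']
```

Running the same set of sample questions against several threshold configurations shows which setting blocks the intended content without over-blocking. Protected material detection produces no severity per harm category, so it cannot drive this kind of tuning.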

You are designing a content management system.

You need to ensure that the reading experience is optimized for users who have reduced comprehension and learning differences, such as dyslexia.

Which Azure service should you include in the solution?

A. Azure AI Translator

B. Azure AI Document Intelligence

C. Azure AI Immersive Reader

D. Azure AI Language

Explanation

Azure AI Immersive Reader is specifically designed to improve reading comprehension for users with learning differences (e.g., dyslexia) and reduced comprehension. It provides features like text sizing, spacing, syllable breakdown, part‑of‑speech highlighting, line focus, and picture dictionary, making it the correct choice for this scenario.

Correct Option

C. Azure AI Immersive Reader

Immersive Reader is an Azure Cognitive Service that helps users of all ages and abilities read and comprehend text more easily. It includes customizable display options, grammar support, and read‑aloud functionality, directly addressing the requirement.

Why Other Options Are Incorrect

A. Azure AI Translator –

Translates text between languages; does not provide reading optimization for comprehension or dyslexia.

B. Azure AI Document Intelligence –

Extracts structured data from documents; not for reading experience optimization.

D. Azure AI Language –

Performs text analytics (sentiment, key phrases, NER); does not offer immersive reading features.

Reference

Microsoft Learn: What is Azure AI Immersive Reader? – Designed for users with dyslexia and other learning differences.

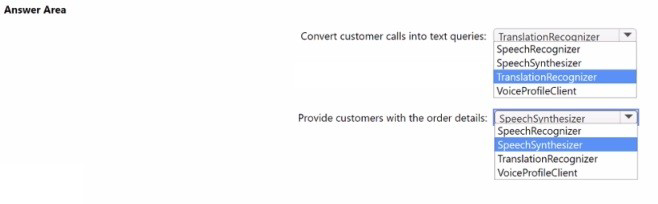

You are building an app that will answer customer calls about the status of an order. The app will query a database for the order details and provide the customers with a spoken response.

You need to identify which Azure AI service APIs to use. The solution must minimize development effort.

Which object should you use for each requirement? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation

To convert customer calls (spoken audio) into text queries, you need speech recognition. The SpeechRecognizer class (from Azure AI Speech) converts speech to text. To provide a spoken response (text‑to‑speech), you need SpeechSynthesizer. These are the standard, low‑effort APIs for this scenario.

Correct Options

First requirement (convert customer calls into text queries): SpeechRecognizer

SpeechRecognizer performs speech‑to‑text conversion, turning the customer's spoken words into text that can be used to query the database.

Second requirement (provide customers with the order details as spoken response): SpeechSynthesizer

SpeechSynthesizer performs text‑to‑speech conversion, generating a spoken response from the order details retrieved from the database.

Why Other Options Are Incorrect

TranslationRecognizer – Used for speech translation, not simple speech‑to‑text or text‑to‑speech.

VoiceProfileClient – Used for speaker recognition/identification, not for converting speech or synthesizing responses.

Reference

Microsoft Learn: Azure AI Speech – SpeechRecognizer – Converts audio to text.
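
The two classes fit together roughly as sketched below, using the Speech SDK for Python (`azure-cognitiveservices-speech`). This is a simplified assumption-laden outline: it listens on the default microphone rather than a telephony stream, `lookup_order` is a hypothetical caller-supplied function standing in for the database query, and the order fields `id` and `status` are invented for illustration.

```python
def format_order_status(order):
    """Build the sentence to speak from an order record (hypothetical schema)."""
    return f"Order {order['id']} is {order['status']}."


def handle_call(key, region, lookup_order):
    """Recognize one utterance, look up the order, and speak the result."""
    # Imported lazily so format_order_status works without the SDK installed.
    import azure.cognitiveservices.speech as speechsdk

    speech_config = speechsdk.SpeechConfig(subscription=key, region=region)

    # Speech to text: capture a single utterance from the default microphone.
    recognizer = speechsdk.SpeechRecognizer(speech_config=speech_config)
    heard = recognizer.recognize_once_async().get()

    # lookup_order is hypothetical: it maps the recognized text to an order record.
    order = lookup_order(heard.text)

    # Text to speech: play the response on the default speaker.
    synthesizer = speechsdk.SpeechSynthesizer(speech_config=speech_config)
    synthesizer.speak_text_async(format_order_status(order)).get()
```

Neither step involves translation or speaker identification, which is why TranslationRecognizer and VoiceProfileClient add nothing here.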

You have an Azure subscription that contains an Azure OpenAI resource named OpenAI1 and a user named User1.

You need to ensure that User1 can upload datasets to OpenAI1 and fine-tune the existing models. The solution must follow the principle of least privilege.

Which role should you assign to User1?

A. Cognitive Services Contributor

B. Contributor

C. Cognitive Services OpenAI User

D. Cognitive Services OpenAI Contributor

Explanation

To upload datasets and fine‑tune models in Azure OpenAI, User1 needs permissions beyond just calling the API (which is the Cognitive Services OpenAI User role). The Cognitive Services OpenAI Contributor role allows creating, training, and deploying models, as well as uploading data, while following least privilege.

Correct Option

D. Cognitive Services OpenAI Contributor

This built‑in role grants permissions to manage Azure OpenAI resources, including fine‑tuning models and uploading datasets. It is more permissive than Cognitive Services OpenAI User (which only allows inference) but less permissive than full Contributor, adhering to least privilege.

Why Other Options Are Incorrect

A. Cognitive Services Contributor –

Grants access to all Cognitive Services resources (not just OpenAI) and is broader than necessary.

B. Contributor –

Full management access to all Azure resources; violates least privilege.

C. Cognitive Services OpenAI User –

Allows only inference (calling deployed models), not fine‑tuning or dataset upload.

Reference

Microsoft Learn: Azure OpenAI RBAC roles – Cognitive Services OpenAI Contributor allows fine‑tuning and model management.

You are building an image sharing app that will use Azure AI to prevent users from sharing sexually explicit images.

You need to ensure that inappropriate images are identified correctly. The solution must minimize development effort.

What should you use?

A. Visual Studio

B. Vision Studio in Azure AI Vision

C. Azure AI Content Safety Studio

D. Azure AI Studio

Explanation

To detect sexually explicit images with minimal development effort, you should use Azure AI Content Safety Studio, which provides pre‑built image moderation for hate, sexual, violence, and self‑harm content. You can test and integrate the API without training custom models.

Correct Option

C. Azure AI Content Safety Studio

Content Safety Studio offers a dedicated image moderation feature that analyzes images for sexual content and returns severity levels. It is designed specifically for this use case, requires no training, and minimizes development effort.

Why Other Options Are Incorrect

A. Visual Studio –

An IDE for coding, not a service for content moderation.

B. Vision Studio in Azure AI Vision –

Vision Studio includes adult/racy detection but is part of Computer Vision. Content Safety is the dedicated service for this scenario.

D. Azure AI Studio –

A general platform for building AI solutions; not a specific tool for image moderation.

Reference

Microsoft Learn: Azure AI Content Safety – Image moderation – Detects sexual content with severity levels.

You have an Azure DevOps pipeline named Pipeline1 that is used to deploy an app.

Pipeline1 includes a step that will create an Azure AI services account.

You need to add a step to Pipeline1 that will identify the created Azure AI services account.

The solution must minimize development effort.

Which Azure Command-Line Interface (CLI) command should you run?

A. az resource link

B. az account list

C. az cognitiveservices account network-rule

D. az cognitiveservices account show

Explanation

To identify an Azure AI services account after creation, you need to retrieve its details (resource ID, endpoint, location, and so on). The az cognitiveservices account show command returns the account information for a named account in a resource group, including the resource ID, endpoint, SKU, and other properties. It does not return the access keys; those come from az cognitiveservices account keys list. This is the correct command with minimal effort.

Correct Option

D. az cognitiveservices account show

This command displays details of a specific Cognitive Services account, such as its endpoint, location, SKU, and provisioning state. It is the standard CLI command to identify and verify an account after creation.

Why Other Options Are Incorrect

A. az resource link – Used for creating links between resources, not for identifying an account.

B. az account list – Lists Azure subscriptions, not Cognitive Services accounts.

C. az cognitiveservices account network-rule – Manages network access rules (firewall, virtual networks), not for identifying the account.

Reference

Microsoft Learn: az cognitiveservices account show – Retrieves account properties including resource ID, endpoint, location, and SKU.
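
A pipeline step could wrap the command along the lines of the sketch below, which builds the argument list and extracts the identifying fields from the JSON output. The account and resource group names are placeholders, and the JSON field layout (`id`, `location`, `properties.endpoint`) is an assumption about the command's output to confirm against a real run.

```python
import json
import subprocess


def show_command(name, resource_group):
    """Build the argument list for `az cognitiveservices account show`."""
    return [
        "az", "cognitiveservices", "account", "show",
        "--name", name,
        "--resource-group", resource_group,
        "--output", "json",
    ]


def summarize_account(raw_json):
    """Extract identifying fields from the command's JSON output (layout assumed)."""
    account = json.loads(raw_json)
    return {
        "id": account.get("id"),
        "location": account.get("location"),
        "endpoint": account.get("properties", {}).get("endpoint"),
    }


def identify_account(name, resource_group):
    """Run the CLI (must be installed and logged in) and summarize the result."""
    result = subprocess.run(
        show_command(name, resource_group),
        capture_output=True, text=True, check=True,
    )
    return summarize_account(result.stdout)
```

In a pipeline, `identify_account` would typically run right after the creation step, with the returned endpoint passed to later deployment stages.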

You have 1,000 scanned images of hand-written survey responses. The surveys do NOT have a consistent layout.

You have an Azure subscription that contains an Azure AI Document Intelligence resource named AIdoc1.

You open Document Intelligence Studio and create a new project.

You need to extract data from the survey responses. The solution must minimize development effort.

To where should you upload the images, and which type of model should you use? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation

For handwritten survey responses with inconsistent layouts, a custom model (template or neural) is required because pre‑built models expect consistent form structures. In Document Intelligence Studio, when creating a project, you must first upload the training images to Azure Blob Storage (or a similar Azure Storage container), then point the project to that container.

Correct Options

Upload images to: Azure Blob Storage

Document Intelligence projects require training data to be stored in an Azure Blob Storage container. You upload the scanned images to a blob container, then connect the container to the project.

Type of model: Custom model (or Custom neural model)

Since the surveys have no consistent layout, a custom neural model is the best choice. Neural models handle unstructured, varied layouts and handwritten text better than template models. Pre‑built models (e.g., prebuilt-layout, prebuilt-document) are not designed for extracting custom data fields without training.

Why Other Options Are Incorrect

Azure File Share / OneDrive / Local upload – Document Intelligence Studio does not directly accept local uploads for training; it requires Azure Blob Storage.

Pre‑built model – Pre‑built models (e.g., prebuilt-invoice, prebuilt-receipt) are for specific document types with consistent layouts, not for varied, hand‑written surveys.

Template model – Works for documents with fixed layouts, but neural models are better for inconsistent layouts.

Reference

Microsoft Learn: Document Intelligence – Custom models – Neural models handle unstructured, handwritten documents.
