Topic 3: Misc. Questions

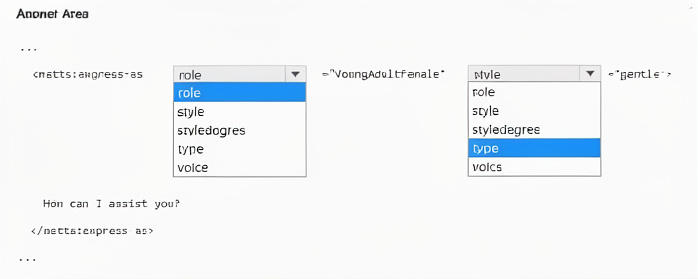

You are building a text-to-speech app that will use a custom neural voice.

You need to create an SSML file for the app. The solution must ensure that the voice profile meets the following requirements:

• Expresses a calm tone

• Imitates the voice of a young adult female

How should you complete the code? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

Explanation:

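The mstts:express-as element sets both the speaking style (tone) and the role (the age and gender the voice imitates) for voices that support them, so both requirements are met by attributes on that one element.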

Correct Option Details:

role="YoungAdultFemale" –

The role attribute specifies the demographic characteristic of the voice (e.g., YoungAdultFemale, YoungAdultMale, SeniorFemale). This imitates a young adult female voice.

style="gentle" –

The style attribute defines the emotional or speaking style. gentle produces a soft, calm tone suitable for soothing or polite interactions. Other styles include cheerful, sad, angry, whispering, etc.
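To make the markup concrete, here is a minimal Python sketch that builds SSML in which a single mstts:express-as element carries both attributes, then parses the result to confirm it is well-formed. The voice name MyCustomNeuralVoice is a hypothetical placeholder for your deployed custom neural voice. Whether a particular voice honors a given style or role depends on that voice, so treat this as an illustration of the SSML structure only.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape, quoteattr

# Namespaces used in Azure AI Speech SSML documents
SSML_NS = "http://www.w3.org/2001/10/synthesis"
MSTTS_NS = "https://www.w3.org/2001/mstts"


def build_ssml(voice_name: str, text: str,
               style: str = "gentle", role: str = "YoungAdultFemale") -> str:
    """Wrap text in mstts:express-as so one element sets tone (style) and demographic (role)."""
    return (
        f'<speak version="1.0" xmlns="{SSML_NS}" xmlns:mstts="{MSTTS_NS}" xml:lang="en-US">'
        f"<voice name={quoteattr(voice_name)}>"
        f"<mstts:express-as style={quoteattr(style)} role={quoteattr(role)}>"
        f"{escape(text)}"  # escape &, <, > so the document stays well-formed
        "</mstts:express-as></voice></speak>"
    )


if __name__ == "__main__":
    # "MyCustomNeuralVoice" is a hypothetical custom neural voice name
    ssml = build_ssml("MyCustomNeuralVoice", "Take a deep breath & relax.")
    # Parsing locally confirms the markup is well-formed before it is sent anywhere
    root = ET.fromstring(ssml)
    express_as = root.find(f".//{{{MSTTS_NS}}}express-as")
    print(express_as.get("style"), express_as.get("role"))  # prints: gentle YoungAdultFemale
```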

Incorrect Options (why they don’t fit):

style="youngAdultFemale" – style does not accept demographic values; youngAdultFemale is not a valid style. Styles describe emotional delivery, not age/gender.

type="YoungAdultFemale" – type is not a valid attribute of the mstts:express-as element.

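voice="YoungAdultFemale" – voice belongs to the <voice> element, whose name attribute selects which voice speaks. It is not an attribute of mstts:express-as and cannot set a demographic role.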

Reference:

SSML with custom neural voice – mstts:express-as – Explains style (e.g., gentle) for tone and role (e.g., YoungAdultFemale) for demographic imitation.

List of supported styles and roles – Shows gentle for calm delivery and YoungAdultFemale as a valid role.

You have an Azure subscription that contains a Microsoft Foundry resource.

You need to build an app that will suggest product names from a given product description.

Which Foundry model should you use?

A. embeddings

B. DALL-E

C. GPT-4

D. Whisper

Explanation:

The task is to suggest product names based on a product description. This requires a generative language model that understands the description and produces creative, contextually appropriate names. GPT-4 excels at natural language understanding and generation for such creative text tasks, while the other options serve different purposes like vectorization, image generation, or audio transcription.

Correct Option:

C. GPT-4

GPT-4 is a large language model (LLM) designed for text generation, including creative tasks like naming, summarization, and content creation.

It can take a product description as input and generate multiple relevant name suggestions.

Available in Microsoft Foundry as a model deployment through the Azure OpenAI service.
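For illustration, a minimal Python sketch of the request body an app might send to a GPT-4 deployment's chat completions endpoint to get name suggestions from a description. The prompt wording and the temperature and max_tokens values are assumptions, and the HTTP call and authentication are omitted.

```python
import json


def build_product_name_request(description: str, count: int = 5) -> dict:
    """Build a chat-completions request body asking a GPT-4 deployment for product names."""
    return {
        "messages": [
            {
                "role": "system",
                "content": "You are a marketing assistant. Suggest short, memorable product names.",
            },
            {
                "role": "user",
                "content": f"Suggest {count} product names, one per line, for this product:\n{description}",
            },
        ],
        # A moderately high temperature favors more varied, creative suggestions
        "temperature": 0.8,
        "max_tokens": 150,
    }


if __name__ == "__main__":
    body = build_product_name_request(
        "A stainless steel water bottle that keeps drinks cold for 24 hours.")
    print(json.dumps(body, indent=2))
```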

Incorrect Options:

A. embeddings –

Embedding models convert text into numerical vectors for similarity search or clustering. They cannot generate new product names; they only represent existing text.

B. DALL-E –

Generates images from text descriptions, not text-based suggestions. Irrelevant for product name generation.

D. Whisper –

An audio transcription model that converts speech to text. Has no capability for text generation or creative naming.

Reference:

Azure OpenAI Service – GPT-4 model – Explains use cases including creative text generation, content creation, and naming suggestions.

Azure AI Foundry – Model catalog – Lists GPT-4 under conversational and generative language models.

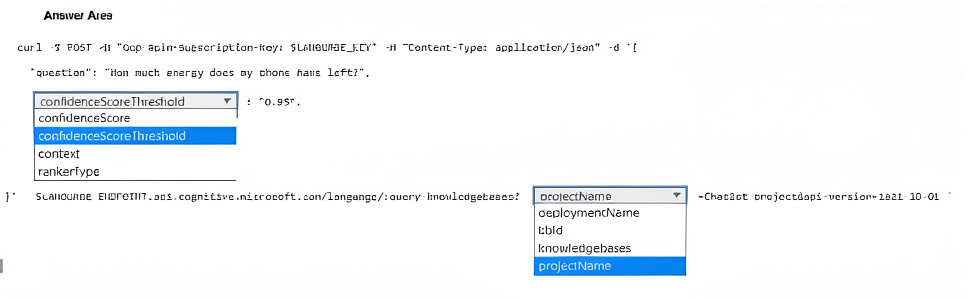

You have a chatbot that uses the Azure AI Language custom question answering service.

You need to test the chatbot. The solution must ensure that the chatbot responds only when an answer has a confidence score of at least 95 percent.

How should you complete the cURL statement? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

The custom question answering endpoint for querying a knowledge base uses /knowledgebases in the URL. The requirement that the chatbot responds only when confidence ≥ 95% is achieved by setting confidenceScoreThreshold: 0.95. The confidenceScore parameter is an output, not an input filter.

Correct Option Details:

knowledgebases – The REST endpoint for querying a custom question answering project is:

{endpoint}/language/:query-knowledgebases?projectName={name}&deploymentName={name}&api-version=2021-10-01

The path segment is :query-knowledgebases.

confidenceScoreThreshold – This input parameter filters answers; only those with confidence ≥ the specified value (here 0.95) are returned. Matches "responds only when an answer has a confidence score of at least 95 percent."
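A minimal Python sketch that assembles the same request the cURL statement sends: the :query-knowledgebases URL plus a JSON body with confidenceScoreThreshold set to 0.95. The endpoint, key, project name (FAQ), and deployment name (production) are placeholders, and the request is built but not sent.

```python
import json
from urllib.parse import urlencode


def build_query_request(endpoint: str, key: str, project: str, deployment: str,
                        question: str, threshold: float = 0.95):
    """Return (url, headers, body) for a custom question answering query."""
    params = urlencode({
        "projectName": project,
        "deploymentName": deployment,
        "api-version": "2021-10-01",
    })
    url = f"{endpoint.rstrip('/')}/language/:query-knowledgebases?{params}"
    headers = {
        "Ocp-Apim-Subscription-Key": key,
        "Content-Type": "application/json",
    }
    body = {
        "question": question,
        "top": 3,
        # Input filter: only answers scoring at least this value are returned
        "confidenceScoreThreshold": threshold,
    }
    return url, headers, json.dumps(body)


if __name__ == "__main__":
    url, headers, body = build_query_request(
        "https://contoso-lang.cognitiveservices.azure.com/",  # hypothetical endpoint
        "<your-key>", "FAQ", "production", "How do I reset my password?")
    print(url)
    print(body)
```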

Incorrect Options (why they don’t fit):

projectName / deploymentName – These are query-string parameters that follow the ? in the URL, not the path segment, so they cannot fill the path position in the dropdown.

kbd – Not a valid custom question answering endpoint path.

confidenceScore – This is an output field in each answer, not an input filter. Setting it in the request body has no effect.

context / rankertype – These are optional input parameters but do not control the confidence threshold for filtering responses.

Reference:

Custom question answering REST API – Query knowledge base – Shows confidenceScoreThreshold as the request parameter to filter by confidence.

Confidence score documentation – Explains setting threshold to ensure only high-confidence answers are returned.

You are building an app that will use Azure AI to monitor workspaces for safety regulation compliance.

You need to recommend a service that meets the following requirements:

• Generates alerts when employees enter high-risk areas

• Monitors video feeds in real time

• Minimizes development effort

What should you recommend?

A. Azure AI Video Indexer

B. Object detection in Azure Custom Vision

C. Azure Vision in Foundry Tools Spatial Analysis

D. Azure Vision in Foundry Tools Image Analysis

Explanation:

The requirement involves real‑time monitoring of video feeds to detect when employees enter high‑risk areas. Spatial analysis specifically tracks people’s presence and movement in physical spaces, generating alerts for zone entry events. It minimizes development effort by providing pre‑built operations (e.g., person crossing line, person entering zone) without training custom models.

Correct Option:

C. Azure Vision in Foundry Tools Spatial Analysis

Designed for real‑time video analysis of people’s location, movement, and zone entry.

Can trigger alerts when a person enters a configured high‑risk zone.

Minimizes effort by offering ready‑to‑use operations that run in a prebuilt container, with no model training required.

Tracks multiple people within each camera feed without custom ML training.
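Spatial Analysis performs zone-entry detection for you (for example, through its cognitiveservices.vision.spatialanalysis-personcrossingpolygon operation). To make the underlying idea concrete, here is a hypothetical Python sketch, not the Spatial Analysis API, that reports the frames at which a tracked person's position moves from outside to inside a polygonal high-risk zone, using a standard ray-casting point-in-polygon test. The zone and track coordinates are made up.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def point_in_polygon(p: Point, polygon: List[Point]) -> bool:
    """Ray casting: toggle 'inside' each time a horizontal ray from p crosses an edge."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through p can be crossed
        if (y1 > y) != (y2 > y):
            # x-coordinate where the edge meets that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def zone_entry_events(track: List[Point], zone: List[Point]) -> List[int]:
    """Return frame indices where the person goes from outside to inside the zone."""
    events = []
    was_inside = False
    for frame, position in enumerate(track):
        now_inside = point_in_polygon(position, zone)
        if now_inside and not was_inside:
            events.append(frame)  # an alert would be raised here
        was_inside = now_inside
    return events


if __name__ == "__main__":
    # Normalized image coordinates (0..1); hypothetical high-risk zone
    high_risk_zone = [(0.6, 0.6), (0.9, 0.6), (0.9, 0.9), (0.6, 0.9)]
    person_track = [(0.2, 0.2), (0.5, 0.5), (0.7, 0.7), (0.8, 0.8), (0.4, 0.4), (0.65, 0.75)]
    print(zone_entry_events(person_track, high_risk_zone))  # prints: [2, 5]
```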

Incorrect Options:

A. Azure AI Video Indexer –

Extracts insights like faces, labels, and transcriptions from pre‑recorded videos. Not built for real‑time alerting on zone entry.

B. Object detection in Azure Custom Vision –

Detects and classifies objects in still images or frame‑by‑frame. Requires custom training for “person,” lacks built‑in zone‑entry logic and real‑time streaming support.

D. Azure Vision in Foundry Tools Image Analysis –

Analyzes static images for tags, captions, OCR, etc. No real‑time video tracking or spatial event detection.

Reference:

Azure Spatial Analysis – People counting and zone entry – Pre‑built operations for monitoring occupancy and generating alerts when people enter specific zones in real time.

You are developing an app that will use the text-to-speech capability of the Azure AI Speech service. The app will be used in motor vehicles.

You need to optimize the quality of the synthesized voice output.

Which Speech Synthesis Markup Language (SSML) attribute should you configure?

A. the style attribute of the mstts:express-as element

B. the level attribute of the emphasis element

C. the pitch attribute of the prosody element

D. the effect attribute of the voice element

Explanation:

The requirement is to optimize the quality of the synthesized output for a specific playback environment, a motor vehicle. Azure AI Speech provides the effect attribute of the voice element for exactly this purpose: it applies an audio effect processor that optimizes the synthesized speech output for a scenario on a device. Setting effect="eq_car" targets cars, buses, and other enclosed automobiles. The other attributes change how the voice speaks (emotion, word stress, pitch) rather than optimizing the output for the listening environment.

Correct Option:

D. the effect attribute of the voice element

The effect attribute selects an audio effect processor that is applied to the synthesized speech output.

The eq_car value optimizes the auditory experience for high-fidelity speech in cars, buses, and other enclosed automobiles.

It applies to the whole voice element, so no per-phrase tuning is needed. Another supported value, eq_telecomhp8k, targets telephony scenarios.

Incorrect Options:

A. the style attribute of the mstts:express‑as element –

Controls emotional speaking style (e.g., "cheerful", "sad"). It changes the delivery, not the output quality for a playback environment.

B. the level attribute of the emphasis element –

Adds stress to specific words or phrases (e.g., "strong", "moderate"). It does not optimize overall output quality.

C. the pitch attribute of the prosody element –

Raises or lowers the baseline pitch of the voice. Adjusting pitch manually changes how the voice sounds, but it is not an environment-specific quality optimization; the effect attribute is the built-in mechanism for in-vehicle playback.

Reference:

Customize voice and sound with SSML – Azure AI Speech – Describes the effect attribute of the voice element (eq_car, eq_telecomhp8k) and the prosody element (pitch, rate, volume).
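A minimal Python sketch of SSML that applies the in-vehicle effect processor through the effect attribute of the voice element. The voice name en-US-JennyNeural is only an example prebuilt voice; the markup is parsed locally to check that it is well-formed and is not sent to the service.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape, quoteattr

SSML_NS = "http://www.w3.org/2001/10/synthesis"


def build_in_car_ssml(voice_name: str, text: str) -> str:
    """Set effect="eq_car" on the voice element to optimize output for in-vehicle playback."""
    return (
        f'<speak version="1.0" xmlns="{SSML_NS}" xml:lang="en-US">'
        f'<voice name={quoteattr(voice_name)} effect="eq_car">'
        f"{escape(text)}"  # escape &, <, > in the spoken text
        "</voice></speak>"
    )


if __name__ == "__main__":
    ssml = build_in_car_ssml("en-US-JennyNeural", "In 500 meters, turn right.")
    voice = ET.fromstring(ssml).find(f"{{{SSML_NS}}}voice")
    print(voice.get("effect"))  # prints: eq_car
```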

You have a training dataset that contains 10,000 PDF documents. The documents contain scanned books, comics, and magazines.

You are building a solution that will use Azure AI and a custom model.

You need to train the model by using Language Studio. The solution must meet the following requirements:

• Tag each item as a book, comic, or magazine.

• Minimize development effort.

What should you use?

A. a custom extraction model

B. a multi label classification project

C. a custom named entity recognition (NER) project

D. a multi label image classification model

Explanation:

Language Studio trains custom Azure AI Language models, which work on document text rather than images. Tagging each document as a book, comic, or magazine is a document classification task, so a custom text classification project fits. A multi-label classification project lets you define the three classes, label the training documents in Language Studio, and train and deploy the model without writing model code, which minimizes development effort. Because the PDFs are scanned, their text must be available to the model (for example, extracted by OCR).

Correct Option:

B. a multi label classification project

Custom text classification in Language Studio supports single-label and multi-label classification projects.

You define the classes (book, comic, magazine), label documents in the Language Studio UI, and train, evaluate, and deploy without custom code.

A multi-label project can assign one or more classes to each document, so it also covers items that fit more than one category.

Incorrect Options:

A. a custom extraction model –

Extraction models pull specific fields from documents (e.g., dates, names). They are not designed for document-level type tagging.

C. a custom named entity recognition (NER) project –

NER extracts entities (e.g., person, location) from spans of text. It does not assign a category to a whole document.

D. a multi label image classification model –

Image classification is a computer vision capability (for example, Azure AI Custom Vision), not a Language Studio project type, so it cannot be trained in Language Studio as the requirement states.

Reference:

Azure AI Language – Custom text classification overview – Describes single-label and multi-label classification projects that are labeled, trained, and deployed in Language Studio.

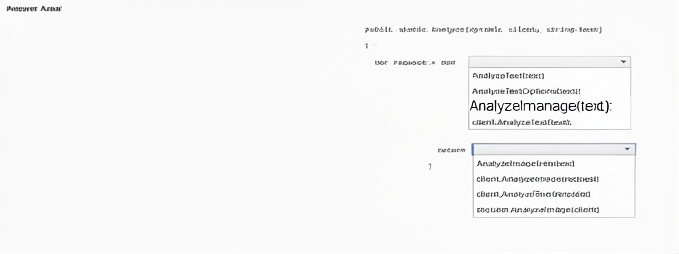

You have an Azure subscription that contains a Content Safety in Foundry Control Plane resource.

You are building a social media messaging app.

You need to build a solution that will analyze messages and flag messages that contain explicit content.

How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.
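The answer-area code for this question is only in the image, so the following is not the exam answer. It is a minimal Python sketch of the same idea using the Azure AI Content Safety REST text:analyze operation (for example, POST {endpoint}/contentsafety/text:analyze?api-version=2023-10-01): explicit content corresponds to the Sexual harm category, and the response's categoriesAnalysis array reports a severity for each category. The flagging threshold of 2 is an assumed policy, the sample response is made up, and the HTTP call is omitted.

```python
import json

# Hypothetical policy: treat a Sexual severity of 2 or higher as explicit
EXPLICIT_THRESHOLD = 2


def build_analyze_text_body(message: str) -> str:
    """Request body for text:analyze, limited to the Sexual harm category."""
    return json.dumps({
        "text": message,
        "categories": ["Sexual"],
        "outputType": "FourSeverityLevels",
    })


def is_explicit(analysis: dict, threshold: int = EXPLICIT_THRESHOLD) -> bool:
    """Flag the message if the Sexual category severity meets or exceeds the threshold."""
    for item in analysis.get("categoriesAnalysis", []):
        if item.get("category") == "Sexual" and item.get("severity", 0) >= threshold:
            return True
    return False


if __name__ == "__main__":
    print(build_analyze_text_body("example message"))
    # Made-up response shaped like a text:analyze result
    sample = {"blocklistsMatch": [], "categoriesAnalysis": [{"category": "Sexual", "severity": 4}]}
    print(is_explicit(sample))  # prints: True
```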


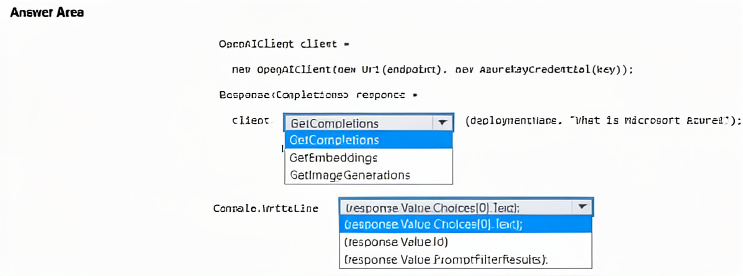

You have an Azure subscription that contains an Azure OpenAI resource named AI1.

You plan to develop a console app that will answer user questions.

You need to call AI1 and output the results to the console.

How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

The code uses a deployment name (completionName) and a user question. For non-chat text generation (legacy completion models such as text-davinci-003), GetCompletions is correct. The response contains a Choices collection; index [0] holds the primary generated text. Embeddings and image generation do not answer text questions.

Correct Option Details:

GetCompletions – This method calls a completion deployment (e.g., text-davinci-003) to generate text from a prompt. It matches the pattern GetCompletions(deploymentName, prompt).

response.Value.Choices[0].Text – The Completions object returns a list of Choice objects. Choices[0].Text contains the generated answer string, suitable for console output.
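Seen from the REST side, a legacy completions response carries a choices array in which each entry has a text field. A minimal Python sketch, using a made-up response with the default of one choice, that reads the equivalent of Choices[0].Text and shows why index 1 does not exist:

```python
def first_completion_text(response: dict) -> str:
    """Return the first choice's generated text (REST equivalent of Choices[0].Text)."""
    choices = response.get("choices", [])
    if not choices:
        raise ValueError("response contains no choices")
    return choices[0]["text"].strip()


if __name__ == "__main__":
    # Made-up response shaped like a legacy completions result with the default n = 1
    sample = {
        "id": "cmpl-example",
        "choices": [
            {"text": "\nMicrosoft Azure is a cloud computing platform.", "index": 0,
             "finish_reason": "stop", "logprobs": None},
        ],
    }
    print(first_completion_text(sample))
    try:
        sample["choices"][1]
    except IndexError:
        print("No second choice when n = 1")
```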

Incorrect Options:

GetEmbeddings – Returns vector representations of input text, not natural language answers. Cannot answer "what is Microsoft Azure?" in readable form.

GetImageGenerations – Generates images from prompts, not text answers. Irrelevant for console Q&A.

response.Value.Choices[1].Text – Index [1] would hold a second completion only if n > 1 were set in the request. With the default n = 1, only Choices[0] exists, so accessing Choices[1] throws an out-of-range exception at run time.

response.Value.Id / response.Value.PromptFilterResults – These return metadata (request ID, content safety results), not the generated answer text.

Reference:

Azure OpenAI .NET SDK – GetCompletions method – Official documentation for text completions.

Completions class – Choices property – Shows Choices[0].Text as the primary generated output.

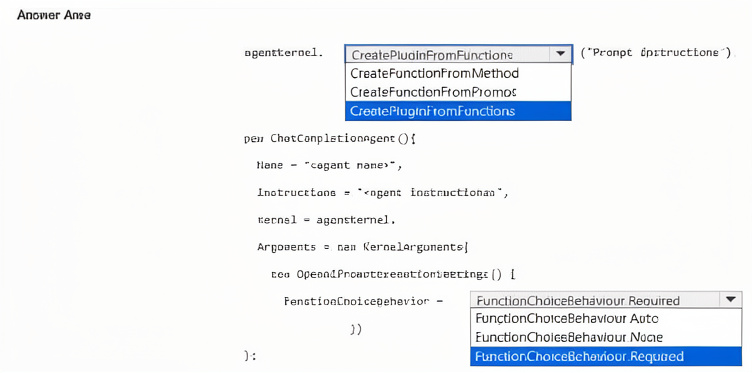

You are building an agent by using Semantic Kernel. The agent will use a custom plugin. You need to ensure that the agent meets the following requirements:

• The agent must use function calling.

• All functions that match the instructions must be triggered.

• All required parameters in the function must be requested by the agent if the user fails to provide them.

How should you complete the code? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

Explanation:

The agent must use function calling, trigger all matching functions, and request missing required parameters. FunctionChoiceBehavior Required forces the model to call functions when the instruction matches, and Semantic Kernel automatically handles parameter prompting. CreatePluginFromFunctions is the correct method to build a plugin from multiple functions.

Correct Option Details:

CreatePluginFromFunctions –

This method creates a plugin by aggregating one or more functions from methods or delegates, allowing the agent to expose all custom functions collectively.

FunctionChoiceBehavior Required –

This setting ensures the agent must call functions that match the user’s intent, and if required parameters are missing, the agent will ask the user for them (auto-prompting).
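Semantic Kernel advertises plugin functions to the model as function-calling schemas built from each function's metadata. The following hypothetical Python sketch does not use Semantic Kernel; it only shows, for a made-up get_weather function, how required parameters are declared in an OpenAI-style JSON Schema tool definition and how a host could detect that a proposed call is missing one, so the user can be asked for it.

```python
# Hypothetical tool definition in the JSON Schema format used by OpenAI-style function calling
GET_WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the weather forecast for a city on a date.",
        "parameters": {
            "type": "object",
            "properties": {
                "city": {"type": "string", "description": "City name"},
                "date": {"type": "string", "description": "Date in YYYY-MM-DD format"},
                "units": {"type": "string", "enum": ["metric", "imperial"]},
            },
            "required": ["city", "date"],  # "units" is optional
        },
    },
}


def missing_required(tool: dict, arguments: dict) -> list:
    """List the required parameters that are absent from the proposed call arguments."""
    required = tool["function"]["parameters"].get("required", [])
    return [name for name in required if name not in arguments]


if __name__ == "__main__":
    proposed = {"city": "Seattle"}
    missing = missing_required(GET_WEATHER_TOOL, proposed)
    if missing:
        # Instead of invoking the function, ask the user for the missing values
        print("Please provide: " + ", ".join(missing))  # prints: Please provide: date
```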

Incorrect Options (why they don’t fit):

CreatePluginFromFunction – Not a standard method; singular form is incorrect. The SDK uses CreatePluginFromFunctions (plural) or ImportPluginFromFunctions.

CreateFunctionFromMethod / CreateFunctionFromPrompt – These create individual functions, not the plugin itself. The code needs a plugin container first.

FunctionChoiceBehavior Auto – Would allow the model to decide whether to call functions or respond directly, violating “must use function calling.”

FunctionChoiceBehavior None – Disables function calling entirely.

FunctionChoiceBehavior Required – Appears twice in the answer area; both entries are the same correct choice.

Reference:

Microsoft Semantic Kernel – Function Calling – Explains FunctionChoiceBehavior.Required forces function invocation and parameter requests.

Semantic Kernel – KernelPluginFactory.CreateFromFunctions – Official method for creating a plugin from multiple functions.

You have a Microsoft Foundry project named Project1.

You plan to create an app named App1 that will connect to Project1 and chat by using a generative AI model.

You need to connect App1 to Project1 by using the Microsoft Foundry SDK. The solution must minimize development effort.

What should you configure in App1?

A. a connection string

B. a project scope key

C. an AIProjectClient object

D. a SASCredentials object

Explanation:

To connect an app to a Microsoft Foundry project (Azure AI Foundry) and chat using a generative AI model via the Foundry SDK, you need an AIProjectClient object. It provides a high-level, unified interface for project interactions, including model connections, and minimizes development effort compared to low-level credential handling.

Correct Option:

C. an AIProjectClient object

The AIProjectClient is the primary entry point in the Azure AI Foundry SDK for connecting to a specific project.

It abstracts authentication, endpoint management, and model invocation, reducing boilerplate code.

Designed specifically to minimize development effort when building chat applications on Foundry projects.

Incorrect Options:

A. a connection string –

Connection strings are more common for databases or storage; the Foundry SDK does not primarily use a simple connection string for project chat access.

B. a project scope key –

The Foundry SDK has no project scope key. AIProjectClient authenticates with the project endpoint and a Microsoft Entra ID credential (for example, DefaultAzureCredential), so a separate key object is not what App1 needs to configure.

D. a SASCredentials object –

SAS credentials are for Azure Storage access (blobs, queues), not for connecting to a Foundry project’s AI model chat capabilities.

Reference:

Azure AI Foundry SDK – AIProjectClient class – Shows that AIProjectClient is the recommended way to connect to a project, authenticate, and access models with minimal code.
