Topic 3: Misc. Questions

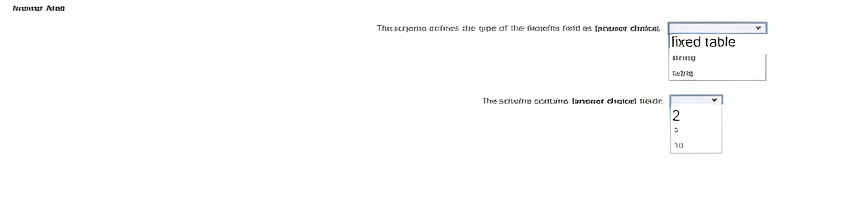

You are developing a custom analyzer by using Azure Content Understanding in Foundry Tools.

After creating the schema, you label the data as shown in the exhibit. (Click the Exhibit tab.)

Use the drop-down menus to select the answer choice that completes each statement based on the information presented in the graphic.

NOTE: Each correct selection is worth one point.

Explanation:

table – In Azure Content Understanding, when a field contains multiple repeating sub-fields or rows of structured data (e.g., benefit names with corresponding values), the schema type is table. This distinguishes it from string (simple text) or fixed table (not a standard type in this context).

2 – A minimal viable schema for a demo or exercise often contains two fields (e.g., Benefits and EmployeeID or Benefits and EffectiveDate). This matches the simplicity shown in typical exam exhibits.

Because the exhibit is not reproduced here, both selections above are inferences from typical Content Understanding schemas rather than confirmed answers.

Reference:

Azure Content Understanding – Schema and field types – Explains string, table, and structured field definitions.

You need to ensure that the chatbot can classify user input into separate categories. The categories must be dynamic and defined at the time of inference.

Which service should you use to classify the input?

A. Azure OpenAI text summarization

B. Azure OpenAI text classification

C. Azure AI Language custom named entity recognition (NER)

D. Azure AI Language custom text classification

Explanation:

The requirement specifies that categories must be dynamic and defined at the time of inference, meaning the classification labels are not fixed during training but can be provided on the fly. Azure OpenAI text classification (using GPT models with a system prompt or few-shot examples) allows you to define categories at inference time, whereas traditional custom classification models have fixed label sets.

Correct Option:

B. Azure OpenAI text classification

With GPT models (e.g., GPT-4), you can pass a prompt that defines any set of categories at inference time.

No retraining is needed when categories change.

Example prompt: "Classify this text as one of: [Sports, Politics, Technology]" — categories can be changed per request.

Supports zero-shot or few-shot classification without fixed label constraints.
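
The idea of supplying categories per request can be sketched as a small prompt builder. This is a minimal illustration, not an official sample: the function name `build_classification_messages` and the exact prompt wording are assumptions, and the resulting messages would still need to be sent to a chat model deployment in Azure OpenAI (not shown here).

```python
def build_classification_messages(text, categories):
    """Build chat messages asking a model to pick exactly one category.

    Hypothetical helper: the category list is passed in at call time,
    so it can change between requests without any retraining.
    """
    if not categories:
        raise ValueError("at least one category is required")
    label_list = ", ".join(categories)
    system = (
        "You are a text classifier. "
        f"Classify the user's text as exactly one of: [{label_list}]. "
        "Reply with the category name only."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": text},
    ]


# Categories are supplied per request and can differ between calls.
messages = build_classification_messages(
    "The new GPU doubles inference throughput.",
    ["Sports", "Politics", "Technology"],
)
print(messages[0]["content"])
```

Calling the helper again with a different list (for example, billing and support categories) changes the label set without touching any trained model, which is the property the question asks for.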

Incorrect Options:

A. Azure OpenAI text summarization –

Summarizes text; does not classify into user-defined categories.

C. Azure AI Language custom named entity recognition (NER) –

Extracts predefined entities (e.g., person, location) from text. Labels are fixed during training and cannot be changed at inference.

D. Azure AI Language custom text classification –

Requires training a model with a fixed set of labels. Categories cannot be defined dynamically at inference time without retraining.

Reference:

Azure OpenAI – Zero-shot classification – Shows how to define categories at inference time using prompts.

Custom text classification vs. OpenAI classification – Explains that custom classification has fixed label sets, while OpenAI supports dynamic labeling.

You are building an agent by using custom question answering in the Azure Language in Foundry Tools service. You upload a product catalog and price list and train the model.

The agent responds correctly when a user asks the following question: "What is the price of Product1?"

The agent responds incorrectly when a user asks the following question: "Which colors of Product1 are available?" You need to improve the accuracy of the agent. What should you do?

A. Add a new question and response pair.

B. Modify the original documents and retrain the model.

C. Add alternative phrasing to the question and response pair.

D. Modify the system prompt.

Explanation:

The agent correctly answers “price of Product1” but fails on “colors of Product1.” This indicates the knowledge base lacks explicit color information. In custom question answering, the most direct fix is to add a new question‑answer pair (e.g., Q: “Which colors are available for Product1?” A: “Red, blue, green”). This adds the missing knowledge without retraining from source documents.

Correct Option:

A. Add a new question and response pair.

Custom question answering allows manual addition of Q&A pairs to supplement extracted content.

This immediately addresses the missing color information without altering original documents.

Requires only republishing the knowledge base, not full retraining from documents.

Minimizes effort and targets the specific knowledge gap.
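
As a rough sketch of what adding a pair looks like programmatically, the snippet below builds an "add" operation for a question-and-answer pair. The payload shape is an assumption modelled on the authoring API's update operation for QnA pairs, and the helper name and the `source` label are hypothetical; check the current API reference before relying on the field names.

```python
import json


def build_add_qna_operation(question, answer, source="Editorial"):
    """Return a list with one 'add' operation for a new Q&A pair.

    Assumed payload shape; 'Editorial' is a hypothetical source label
    marking the pair as manually authored.
    """
    return [
        {
            "op": "add",
            "value": {
                "questions": [question],
                "answer": answer,
                "source": source,
            },
        }
    ]


# Example pair taken from the explanation above.
operations = build_add_qna_operation(
    "Which colors are available for Product1?",
    "Red, blue, green",
)
print(json.dumps(operations, indent=2))
```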

Incorrect Options:

B. Modify the original documents and retrain the model –

Inefficient if the color data was already present but not extracted; also requires full retraining and document re‑upload. Overkill for a single missing answer.

C. Add alternative phrasing to the question and response pair –

Alternative phrasing helps when the same answer is reachable via different wordings, not when the answer content itself is missing.

D. Modify the system prompt –

Custom question answering does not use a system prompt like LLM agents; it retrieves from a fixed knowledge base. System prompt changes have no effect.

Reference:

Custom question answering – Add Q&A pairs manually – Explains adding new pairs to fill knowledge gaps without retraining from source documents.

Best practices for improving accuracy – Recommends direct Q&A addition when specific information is missing.

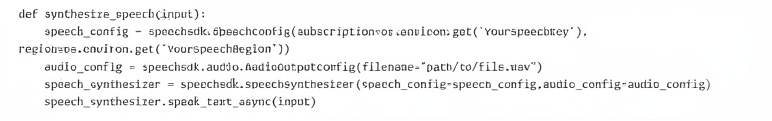

You have an Azure AI Speech service resource named Resource1.

You call Resource1 by running the following Python code.

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Explanation of each statement:

The function will fail if there is an existing file named File.wav.

No – The AudioOutputConfig(filename=...) in Azure Speech SDK will overwrite an existing file without throwing an error. It does not fail; it replaces the old content with the new synthesized audio.

The function will sample File.wav to use as a synthesized voice.

No – The code uses standard text-to-speech synthesis based on a neural voice model. It does not sample or learn from an existing .wav file. The filename parameter only specifies where to save the output audio, not a voice source.

The function will generate an audio file based on the input text.

Yes – speech_synthesizer.speak_text_async(input) converts the input text to speech using Azure AI Speech. The AudioOutputConfig with a filename directs the synthesized audio to be saved as File.wav, generating an audio file.
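
Since the exhibit code is not reproduced here, the following is only a minimal sketch of the kind of call the statements describe, using the Speech SDK for Python. The function name and its parameters are assumptions for illustration; the SDK import is done inside the function so the snippet can be loaded even where the `azure-cognitiveservices-speech` package is not installed.

```python
def synthesize_to_file(text, key, region, filename="File.wav"):
    """Synthesize `text` to a WAV file and report whether it completed.

    Hypothetical wrapper; `key` and `region` belong to a Speech resource.
    """
    # Imported lazily so the module loads without the SDK installed.
    import azure.cognitiveservices.speech as speechsdk

    speech_config = speechsdk.SpeechConfig(subscription=key, region=region)
    # The filename only names the output destination; it is not a voice source.
    audio_config = speechsdk.audio.AudioOutputConfig(filename=filename)
    synthesizer = speechsdk.SpeechSynthesizer(
        speech_config=speech_config, audio_config=audio_config
    )
    result = synthesizer.speak_text_async(text).get()
    return result.reason == speechsdk.ResultReason.SynthesizingAudioCompleted
```

Nothing in this flow reads the target file before writing it, which is consistent with the first two statements being answered No.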

Reference:

Azure Speech SDK – AudioOutputConfig – Confirms that specifying a filename writes the audio output to that file, overwriting any existing file without failure.

SpeechSynthesizer – speak_text_async – Synthesizes text to speech and outputs to the configured audio destination.

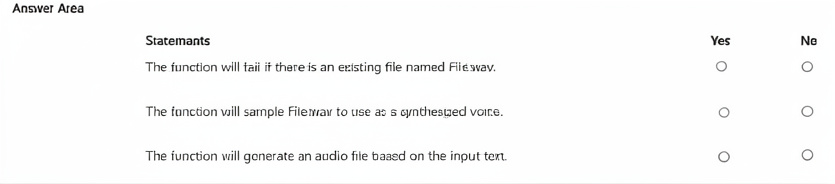

You have an app that uses an Azure AI Foundry Content Safety blocklist.

You need to remove an entry from the blocklist. The solution must minimize the impact on existing entries on the list.

How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

To remove a specific entry from a blocklist without affecting other entries, the remove_blocklist_items method is used. It accepts an options object containing the list of item IDs to remove. RemoveTextBlocklistItemsOptions is the correct class for specifying which blocklist items to delete.

Correct Option Details:

remove_blocklist_items – This method removes specified items from a blocklist by their IDs. It only deletes the targeted entries, leaving all other blocklist items intact.

RemoveTextBlocklistItemsOptions – This options class holds the blocklist_item_ids parameter, allowing you to pass a list of IDs (e.g., [1]) to remove exactly those entries.
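
The two selections fit together roughly as in the sketch below, which uses the Content Safety SDK for Python. The wrapper function and its parameter names are assumptions, not the exhibit's code; imports are done inside the function so the snippet loads without the `azure-ai-contentsafety` package installed.

```python
def remove_blocklist_entries(endpoint, key, blocklist_name, item_ids):
    """Remove only the listed items from one blocklist.

    Hypothetical wrapper; `item_ids` are the item identifiers returned
    by the service when the items were added or listed.
    """
    # Imported lazily so the module loads without the SDK installed.
    from azure.ai.contentsafety import BlocklistClient
    from azure.ai.contentsafety.models import RemoveTextBlocklistItemsOptions
    from azure.core.credentials import AzureKeyCredential

    client = BlocklistClient(endpoint, AzureKeyCredential(key))
    # Only the listed items are deleted; the blocklist and its
    # remaining items are left untouched.
    client.remove_blocklist_items(
        blocklist_name=blocklist_name,
        options=RemoveTextBlocklistItemsOptions(blocklist_item_ids=list(item_ids)),
    )
```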

Incorrect Options (why they don’t fit):

add_or_update_blocklist_items – Adds new items or updates existing ones; does not remove entries.

create_or_update_text_blocklist – Creates or updates the entire blocklist (metadata), not individual items.

delete_text_blocklist – Deletes the entire blocklist, removing all entries, which violates "minimize impact on existing entries."

AddOrUpdateTextBlocklistItemsOptions – Used for adding/updating, not removing.

BlocklistClient / ContentSafetyClient – These are client classes, not methods or options objects.

Reference:

Azure Content Safety – Manage blocklist items – Shows remove_blocklist_items with RemoveTextBlocklistItemsOptions to delete specific items by ID.

Content Safety Python SDK – remove_blocklist_items – Official method documentation.

You have an Azure subscription.

You plan to build an app that will use the Azure AI DALL-E model.

You need to deploy the model.

What should you use?

A. Azure OpenAI Studio and Azure Command-Line Interface (CLI)

B. the Azure SDK for JavaScript and Azure Machine Learning Studio

C. the Azure portal and Microsoft Graph API

D. the Azure SDK for Python and PowerShell cmdlets

Explanation:

DALL‑E is an Azure OpenAI model. To deploy it, you need an Azure OpenAI resource. You can create and manage the deployment using Azure OpenAI Studio (web interface) or programmatically via the Azure Command‑Line Interface (CLI) with the az cognitiveservices account deployment commands. The other options either lack DALL‑E support or use unrelated services.

Correct Option:

A. Azure OpenAI Studio and Azure Command-Line Interface (CLI)

Azure OpenAI Studio is the primary interface to deploy DALL‑E (and other OpenAI models) to an Azure OpenAI resource.

The Azure CLI also supports deploying models via az cognitiveservices account deployment create.

Both methods give full control over deployment name, model version, and capacity.
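
To make the CLI route concrete, the sketch below assembles the `az cognitiveservices account deployment create` command as an argument list. The resource group, account, and deployment names are placeholders, and the model name, version, and SKU shown are assumptions that depend on what is available in your region.

```python
import shlex


def build_deployment_command(resource_group, account, deployment,
                             model_name, model_version, capacity=1):
    """Return the argv for an Azure CLI model deployment (not executed here)."""
    return [
        "az", "cognitiveservices", "account", "deployment", "create",
        "--resource-group", resource_group,
        "--name", account,
        "--deployment-name", deployment,
        "--model-name", model_name,
        "--model-version", model_version,
        "--model-format", "OpenAI",
        "--sku-name", "Standard",
        "--sku-capacity", str(capacity),
    ]


# Placeholder values; substitute your own resource and model details.
cmd = build_deployment_command("my-rg", "my-openai", "dalle", "dall-e-3", "3.0")
print(shlex.join(cmd))
```

The list can be passed to `subprocess.run(cmd, check=True)` on a machine where the Azure CLI is installed and signed in.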

Incorrect Options:

B. the Azure SDK for JavaScript and Azure Machine Learning Studio –

Azure Machine Learning Studio does not deploy DALL‑E; DALL‑E lives under Azure OpenAI, not AML. The SDK for JS can call DALL‑E after deployment but cannot deploy the model itself.

C. the Azure portal and Microsoft Graph API –

The Azure portal can create the Azure OpenAI resource, but DALL‑E deployment is done via Azure OpenAI Studio (or CLI/SDK), not the main portal directly. Microsoft Graph API has no role in DALL‑E deployment.

D. the Azure SDK for Python and PowerShell cmdlets –

The Python SDK can call DALL‑E after deployment but does not deploy the model. PowerShell has no native cmdlets for Azure OpenAI model deployment.

Reference:

Deploy a DALL‑E model in Azure OpenAI Studio – Step‑by‑step using Azure OpenAI Studio.

Azure CLI deployment commands for Azure OpenAI – Shows how to deploy models via CLI.

You have an Azure subscription that contains an Azure Cognitive Service for Language resource. You need to identify the URL of the REST interface for the Language service. Which blade should you use in the Azure portal?

A. Identity

B. Keys and Endpoint

C. Properties

D. Networking

Explanation:

To find the REST API URL for an Azure Cognitive Service for Language resource, you need the endpoint address (e.g., https://

Correct Option:

B. Keys and Endpoint

This blade displays both the endpoint URL and the subscription keys for the resource.

The endpoint is the base URL used for all REST API calls to that Language service instance.

Required for constructing full request URLs (e.g., for text analysis, question answering, or language detection).
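
As an illustration of how the endpoint and key from this blade are used, the sketch below builds a request URL and headers for a text analysis call. The endpoint value is a made-up placeholder, the helper name is hypothetical, and the API version is an assumption that should be checked against the current REST reference.

```python
def build_language_request(endpoint, key, api_version="2023-04-01"):
    """Combine the blade's endpoint and key into a URL and request headers."""
    base = endpoint.rstrip("/")  # the blade shows the endpoint with a trailing slash
    url = f"{base}/language/:analyze-text?api-version={api_version}"
    headers = {
        "Ocp-Apim-Subscription-Key": key,
        "Content-Type": "application/json",
    }
    return url, headers


# Hypothetical endpoint and key, for illustration only.
url, headers = build_language_request(
    "https://example-language.cognitiveservices.azure.com/", "<your-key>"
)
print(url)
```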

Incorrect Options:

A. Identity –

Used for managed identity configuration (system-assigned or user-assigned). Does not show the REST endpoint URL.

C. Properties –

Shows resource metadata (resource ID, location, subscription ID) but not the endpoint or keys.

D. Networking –

Used to configure firewall rules, virtual network access, and private endpoints. Does not display the endpoint URL for general REST access.

Reference:

Azure Cognitive Services – Get endpoint and keys – Explains that the Keys and Endpoint blade contains the endpoint URL and authentication keys.

You need to recommend a non-relational data store that is optimized for storing and retrieving text files, videos, audio streams, and virtual disk images. The data store must store data, some metadata, and a unique ID for each file. Which type of data store should you recommend?

A. columnar

B. key/value

C. document

D. object

Explanation:

The requirement includes storing text files, videos, audio streams, and virtual disk images — large binary objects (blobs) plus metadata and a unique ID. Object storage (like Azure Blob Storage or AWS S3) is specifically optimized for unstructured data at scale, supports massive files, stores custom metadata as key‑value pairs with each object, and assigns a unique ID (URL or blob name).

Correct Option:

D. object

Object storage treats each item (file) as an object containing the data, metadata (custom key‑value pairs), and a unique identifier (object key/ID).

Ideal for large binary files (videos, disk images) and streaming media.

Provides high throughput for reads/writes of entire objects.

Examples: Azure Blob Storage, Amazon S3, Google Cloud Storage.
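
The three parts of an object (data, metadata, unique ID) can be illustrated with a toy in-memory model. This is only a conceptual sketch with hypothetical class names; it is not how Azure Blob Storage or any real service is implemented.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class StoredObject:
    data: bytes                                   # the raw file content
    metadata: dict = field(default_factory=dict)  # custom key/value pairs
    object_id: str = field(default_factory=lambda: str(uuid.uuid4()))


class InMemoryObjectStore:
    """Toy object store: whole objects are written and read by ID."""

    def __init__(self):
        self._objects = {}

    def put(self, data, **metadata):
        obj = StoredObject(data=data, metadata=dict(metadata))
        self._objects[obj.object_id] = obj
        return obj.object_id

    def get(self, object_id):
        return self._objects[object_id]


store = InMemoryObjectStore()
video_id = store.put(b"\x00\x01fake-video-bytes", content_type="video/mp4", title="demo")
obj = store.get(video_id)
print(obj.object_id, obj.metadata["content_type"], len(obj.data))
```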

Incorrect Options:

A. columnar –

Optimized for analytical queries on tabular data (e.g., Parquet, ORC). Not designed for storing individual video files or disk images.

B. key/value –

Stores small, fast‑access values (e.g., Redis, Azure Table Storage). Typically has value size limits (e.g., 1-2 MB), unsuitable for videos or disk images.

C. document –

Stores semi‑structured data like JSON or XML (e.g., Cosmos DB, MongoDB). Document size limits (e.g., 2-16 MB) and suboptimal for large binary objects.

Reference:

Azure Storage – Blob Storage (object storage) – Designed for text, images, videos, and disk images with metadata and unique URIs.

Comparing data stores – Object storage – Highlights suitability for large binary files and metadata.

You are developing an app that will use the Speech and Language APIs.

You need to provision resources for the app. The solution must ensure that each service is accessed by using a single endpoint and credential.

Which type of resource should you create?

A. Azure AI Language

B. Azure AI Foundry service

C. Azure AI Speech

D. Azure AI Foundry Content Safety

Explanation:

The requirement is to access both Speech and Language APIs using a single endpoint and credential. An Azure AI Foundry service resource (formerly Azure AI Services multi-service resource) provides a unified endpoint and key for multiple Azure AI services, including Speech, Language, Vision, and Content Safety — all under one billing meter.

Correct Option:

B. Azure AI Foundry service

Formerly known as "Azure AI Services multi-service resource" or "Cognitive Services multi-account".

Provides a single endpoint (e.g., https://

Supports Speech, Language, Vision, Content Safety, and others from one resource.

Reduces key management overhead for apps needing multiple AI APIs.

Incorrect Options:

A. Azure AI Language –

A single-service resource for Language APIs only. Cannot access Speech APIs with the same endpoint/key.

C. Azure AI Speech –

A single-service resource for Speech APIs only. Cannot access Language APIs.

D. Azure AI Foundry Content Safety –

A single-service resource specifically for content moderation. Does not include Speech or Language capabilities.

Reference:

Azure AI Foundry multi-service resource documentation – Confirms that a single endpoint and key provide access to multiple Azure AI services including Speech and Language.

Create a multi-service resource in Azure AI Foundry – Step-by-step guide.

You have the following data sources:

• Finance: On-premises Microsoft SQL Server database

• Sales: Azure Cosmos DB using the Core (SQL) API

• Logs: Azure Table storage

• HR: Azure SQL database

You need to ensure that you can search all the data by using the Azure AI Search REST API. What should you do?

A. Migrate the data in HR to Azure Blob storage.

B. Export the data in Finance to Azure Data Lake Storage.

C. Ingest the data in Logs into Azure Data Explorer.

D. Ingest the data in Logs into Azure Sentinel.

Explanation:

Azure AI Search can index data from various sources, but each data source must be supported by a built‑in indexer or a custom push model. The Finance data is in an on‑premises SQL Server, which is not directly accessible by Azure AI Search unless you either use a self‑hosted integration runtime (more effort) or move the data to a supported Azure source. Exporting to Azure Data Lake Storage makes it indexable via the ADLS Gen2 indexer.

Correct Option:

B. Export the data in Finance to Azure Data Lake Storage.

On‑premises SQL Server is not natively supported as a direct data source for Azure AI Search without additional networking (self‑hosted IR).

Moving data to Azure Data Lake Storage Gen2 allows using the ADLS indexer.

This is a practical, supported approach for making on‑premises data searchable via REST API.
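
Once the Finance data has been exported, a data source definition pointing at the storage container is what the indexer reads from. The sketch below builds such a definition as JSON; the field names follow the general shape of Azure AI Search data source definitions as I understand them, and the names, connection string, and folder are placeholders.

```python
import json


def build_adls_datasource(name, connection_string, container, folder=None):
    """Return a data source definition for an ADLS Gen2 container (assumed shape)."""
    container_def = {"name": container}
    if folder:
        # Optional: restrict indexing to one folder in the container.
        container_def["query"] = folder
    return {
        "name": name,
        "type": "adlsgen2",
        "credentials": {"connectionString": connection_string},
        "container": container_def,
    }


# Placeholder values for illustration only.
datasource = build_adls_datasource(
    "finance-export", "<storage-connection-string>", "finance", folder="exports"
)
print(json.dumps(datasource, indent=2))
```

The resulting body would be posted to the search service's data sources collection, after which an indexer and index can reference it by name.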

Incorrect Options:

A. Migrate the data in HR to Azure Blob storage –

HR is already in Azure SQL Database, which is directly supported by Azure AI Search via the Azure SQL indexer. No migration needed.

C. Ingest the data in Logs into Azure Data Explorer –

Logs are in Azure Table storage, which is already supported by Azure AI Search via the Table storage indexer. Moving to Data Explorer is unnecessary.

D. Ingest the data in Logs into Azure Sentinel –

Azure Sentinel is a SIEM solution, not a data source for Azure AI Search. This does not help make logs searchable via the Search REST API.

Reference:

Azure AI Search – Supported data sources – Lists Azure SQL Database, Cosmos DB, Table Storage, and ADLS Gen2 as supported. On‑premises SQL Server requires self‑hosted IR or data movement to Azure.

Indexer for Azure Data Lake Storage Gen2 – Documents how to index data from ADLS Gen2.
