Topic 3: Misc. Questions

You have a 20-GB video file named File1.avi that is stored on a local drive.

You need to index File1.avi by using the Azure AI Video Indexer website.

What should you do first?

A. Upload File1.avi to the Azure AI Video Indexer website.

B. Upload File1.avi to the www.youtube.com webpage.

C. Upload File1.avi to Microsoft OneDrive.

D. Upload File1.avi to an Azure Storage queue.

Explanation:

The Azure AI Video Indexer website can index a video from a URL, but a direct upload from a local drive is limited to files of about 2 GB, so a 20-GB file cannot be uploaded through the browser. You must first place the video at a URL that Video Indexer can reach. Microsoft OneDrive provides a shareable link that Video Indexer can ingest, while the other options are either unsupported or not the required first step.

Correct Option:

C. Upload File1.avi to Microsoft OneDrive

Video Indexer accepts video URLs from public or authenticated sources like OneDrive (with shared link).

The website provides an option to enter a video URL rather than uploading large files directly.

This bypasses browser upload limits and timeouts.

Incorrect Options:

A. Upload File1.avi to the Azure AI Video Indexer website –

The website does not support direct upload of a 20-GB local file; a file of this size must be supplied as a URL instead.

B. Upload File1.avi to the www.youtube.com webpage –

YouTube is not a supported source for Video Indexer website ingestion.

D. Upload File1.avi to an Azure Storage queue –

Storage queues are for messaging, not for storing or serving video files for indexing.

Reference:

Azure AI Video Indexer – Upload and index videos – States you can provide a video URL from OneDrive or other accessible sources.

Video Indexer website limitations – Confirms large files require URL upload, not direct upload.
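
The size reasoning behind this answer can be sketched in a few lines of Python. The limits used here (roughly 2 GB for a direct upload and roughly 30 GB for ingestion from a URL) are assumptions based on the Video Indexer upload documentation and should be checked against the current docs; the function name is hypothetical.

```python
GB = 1024 ** 3

# Assumed limits from the Video Indexer upload documentation;
# verify them before relying on these numbers.
DIRECT_UPLOAD_LIMIT = 2 * GB
URL_UPLOAD_LIMIT = 30 * GB


def choose_upload_path(size_bytes: int) -> str:
    """Return how a file of the given size can reach Video Indexer."""
    if size_bytes <= DIRECT_UPLOAD_LIMIT:
        return "direct upload"
    if size_bytes <= URL_UPLOAD_LIMIT:
        return "upload elsewhere, then index from URL"
    return "too large: split or re-encode first"


# File1.avi from the question is 20 GB.
print(choose_upload_path(20 * GB))
```

Under these assumed limits, a 20-GB file falls into the middle branch, which is why hosting it on OneDrive comes first.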

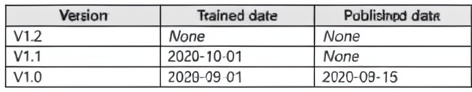

You plan to use a Conversational Language Understanding application named app1 that is deployed to a container. App1 was developed by using a Conversational Language Understanding authoring resource named lu1. App1 has the versions shown in the following table.

You need to create a container that uses the latest deployable version of app1.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Explanation:

The table shows v1.2 has no trained date (not trained), v1.1 is trained but not published, and v1.0 is trained and published. The latest deployable version is the most recent trained version — v1.1 (trained 2020-10-01), even though it is not published. Export for containers requires a trained model. You export it in GZIP format, then run a container with the mounted model file.

Why these actions in this order:

Select v1.1 of app1 –

v1.2 has no training date (cannot be exported). v1.1 is the latest trained version. "Deployable" in container context means trained, not necessarily published via the cloud endpoint.

Export the model by using the Export for containers (GZIP) option –

Containers require the model exported in GZIP format. The "Export as JSON" option is for other purposes.

Run a container and mount the model file –

The exported GZIP file must be mounted to the container at runtime so the container can load the specific model version.

Actions not used (and why):

Export the model by using the Export as JSON option –

JSON export is for local testing or analysis, not for container deployment.

Select v1.2 of app1 –

v1.2 is not trained, so it cannot be exported.

Run a container that has version set as an environment variable –

For LUIS/CLU containers, the model is mounted as a file, not specified by environment variable alone.

Select v1.0 of app1 –

Not the latest deployable version.

Reference:

Export a Conversational Language Understanding model for container deployment – States you must select a trained version, export as GZIP, and mount the file when running the container.

Container deployment requirements – Confirms that only trained versions (published or not) can be exported for containers.
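
The selection rule in this explanation (take the newest version that has been trained, whether or not it is published) can be sketched as follows. The table is reconstructed from the explanation text; the trained date for v1.0 is not given there, so the value below is a hypothetical placeholder, and the function name is also hypothetical.

```python
from typing import Optional

# Version table as described in the explanation. The v1.0 trained date
# is a hypothetical placeholder; only its "trained" status matters here.
versions = [
    {"version": "1.2", "trained": None, "published": False},
    {"version": "1.1", "trained": "2020-10-01", "published": False},
    {"version": "1.0", "trained": "2020-09-01", "published": True},
]


def latest_exportable(rows) -> Optional[str]:
    """Return the highest version number among the trained versions."""
    trained = [r for r in rows if r["trained"] is not None]
    if not trained:
        return None
    newest = max(
        trained,
        key=lambda r: tuple(int(part) for part in r["version"].split(".")),
    )
    return newest["version"]


print(latest_exportable(versions))
```

Publication status is deliberately ignored by the filter, which mirrors the point that an unpublished but trained version can still be exported for a container.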

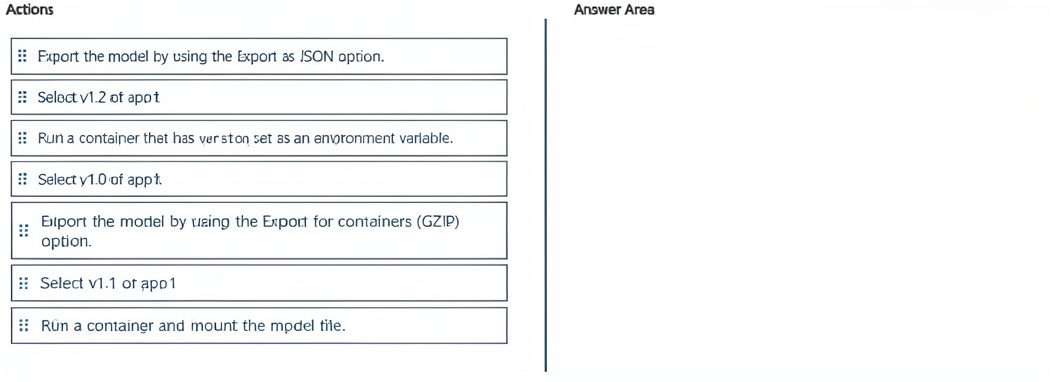

You have a chatbot that uses the Azure AI Language custom question answering service. The model used by the service was trained by using an internal support FAQ document.

You discover that the chatbot fails to provide correct answers to common questions.

You need to increase the accuracy of the responses provided by the chatbot. The solution must minimize development effort.

Which three actions should you perform in sequence from Language Studio? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Explanation:

Active learning automatically suggests alternative phrasings based on user queries that the model answered with low confidence. Reviewing and accepting these suggestions improves the model's ability to recognize varied questions. Finally, retraining and republishing applies the improvements, all with minimal manual effort.

Why these actions in this order:

Enable active learning – Turns on the feature that collects user queries and generates suggested alternative phrasings. This is a one‑time configuration and minimizes manual effort by automating suggestion generation.

Review and accept the alternative phrases – After active learning runs, Language Studio shows suggested alternate questions. Accepting them teaches the model to recognize common rewordings of existing answers.

Retrain and republish the model – Once new alternative phrasings are accepted, the model must be retrained to incorporate them, then republished so the chatbot uses the updated knowledge base.

Actions not used (and why):

Update the question and answer pairs – This is manual and higher effort than accepting active learning suggestions.

Open the Edit knowledge base pane / Open the Review suggestions pane – These are intermediate steps, not primary actions in the minimal‑effort sequence (the "Review and accept" action implicitly covers opening the pane).

Modify the FAQ document, and then reload it – High effort (editing source documents) and not the minimal‑effort approach.

Reference:

Custom question answering – Active learning – Explains enabling active learning, reviewing suggested alternatives, and retraining to improve accuracy with minimal effort.

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are building a chatbot that will use question answering in Azure Cognitive Service for Language.

You have a PDF named Doc1.pdf that contains a product catalogue and a price list. You upload Doc1.pdf and train the model.

During testing, users report that the chatbot responds correctly to the following question: What is the price of < product > ?

The chatbot fails to respond to the following question: How much does < product > cost?

You need to ensure that the chatbot responds correctly to both questions.

Solution: From Language Studio, you add alternative phrasing to the question and answer pair, and then retrain and republish the model.

Does this meet the goal?

A. Yes

B. No

Explanation:

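The chatbot already knows the answer for “What is the price of < product >?” but has no phrasing that matches “How much does < product > cost?”. Adding the second wording as an alternative phrasing of the same question and answer pair lets both questions return the same answer.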

Correct Answer:

A. Yes

Why this solution meets the goal:

Alternative phrasing allows you to map multiple user utterances to the same Q&A pair without duplicating answers.

After adding “How much does < product > cost?” as an alternative phrasing, the model maps both wordings to the same answer.

Retraining and republishing make the change live for the chatbot.

Reference:

Custom question answering – Add alternate questions – Explicitly states that adding alternate phrasing resolves issues where users ask the same question in different words.
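
The idea of alternative phrasing can be illustrated with a toy lookup. This is not how the service ranks answers (it uses a trained ranker, not exact matching); the data structure, the field names, and the answer text are all hypothetical and exist only to show several phrasings pointing at one answer.

```python
import re
from typing import Optional


def normalize(text: str) -> str:
    """Lowercase and drop punctuation so trivial differences do not matter."""
    return re.sub(r"[^a-z0-9 ]", "", text.lower()).strip()


# One question and answer pair with one alternative phrasing (hypothetical).
qna_pair = {
    "question": "What is the price of the product?",
    "alternate_questions": ["How much does the product cost?"],
    "answer": "See the price list in Doc1.pdf.",
}


def lookup(user_question: str, pairs) -> Optional[str]:
    """Return the answer whose question or alternates match the input."""
    wanted = normalize(user_question)
    for pair in pairs:
        phrasings = [pair["question"], *pair["alternate_questions"]]
        if wanted in (normalize(p) for p in phrasings):
            return pair["answer"]
    return None


print(lookup("how much does the product cost", [qna_pair]))
```

Removing the entry from `alternate_questions` makes the second wording return `None`, which is the failure described in the scenario.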

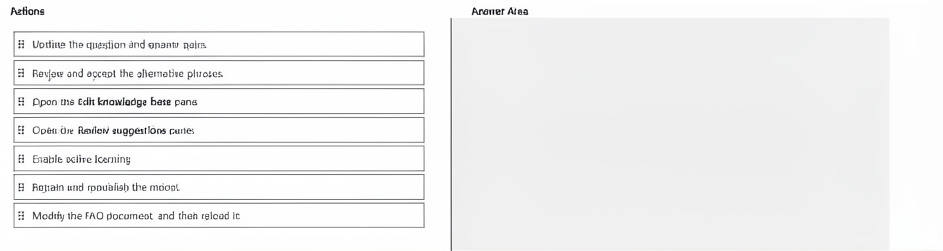

You have a flow that you plan to deploy to Azure Machine Learning managed online endpoints for real-time inference.

You need to modify the YAML configuration file for the deployment to ensure that trace data and system metrics are collected and sent to an Application Insights resource named my-app-insights.

How should you modify the file? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

For Azure Machine Learning managed online endpoints, enabling Application Insights logging is done by setting app_insights_enabled: true in the deployment YAML. This sends system metrics and trace data to the default Application Insights resource linked to the workspace. You do not specify the resource name directly in the endpoint YAML; the workspace’s associated Application Insights is used.

Correct Option Details:

app_insights_enabled –

This boolean property, when set to true, enables collection of trace data and system metrics from the deployment and sends them to the workspace’s Application Insights resource.

true –

Enables the integration. The resource name my-app-insights is already associated with the Azure Machine Learning workspace, so no further naming is required in the deployment YAML.

Incorrect Options (why they don’t fit):

app_insights / application_insights / azureml / environment_variables –

These are not valid property names for enabling Application Insights in an online endpoint deployment YAML. The correct property is app_insights_enabled.

my-app-insights / The name of the deployment / The instrumentation key / The fully qualified resource ID –

These are not valid values for app_insights_enabled (which expects a boolean). The Application Insights resource is configured at the workspace level, not per-deployment by name or key in this YAML.

Reference:

Azure Machine Learning – Managed online endpoint YAML reference – Shows app_insights_enabled: true to send metrics and traces to Application Insights.

Monitor online endpoints with Application Insights – Confirms that enabling this property automatically uses the workspace’s linked Application Insights resource.
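
As a rough illustration, the snippet below holds a minimal deployment YAML fragment with the property discussed above and checks it with a few lines of Python. The deployment and endpoint names are hypothetical, the fragment omits the other fields a real deployment needs, and the line-based check is only a sketch, not a YAML parser.

```python
# Minimal fragment of a managed online deployment YAML.
# "blue" and "my-endpoint" are hypothetical names.
DEPLOYMENT_YAML = """\
name: blue
endpoint_name: my-endpoint
app_insights_enabled: true
"""


def app_insights_enabled(yaml_text: str) -> bool:
    """Return True if the fragment sets app_insights_enabled to true."""
    for line in yaml_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "app_insights_enabled":
            # Ignore any trailing comment on the same line.
            return value.split("#")[0].strip().lower() == "true"
    return False


print(app_insights_enabled(DEPLOYMENT_YAML))
```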

You have an Azure subscription that contains an Azure OpenAI resource named AI1 and an Azure AI Content Safety resource named CS1.

You build a chatbot that uses AI1 to provide generative answers to specific questions and CS1 to check input and output for objectionable content.

You need to optimize the content filter configurations by running tests on sample questions.

Solution: From Content Safety Studio, you use the Safety metaprompt feature to run the tests.

Does this meet the requirement?

A. Yes

B. No

Explanation:

The requirement is to optimize content filter configurations for both input and output by running tests on sample questions. Content Safety Studio's Safety metaprompt feature is used to create and test metaprompts for grounding and safety instruction injection — it does not directly test or optimize the content filter configurations (e.g., severity thresholds for hate, sexual, violence, self-harm). Filter configuration is tested via the moderation APIs or Safety evaluations, not the metaprompt feature.

Correct Answer:

No

Why the solution does not meet the requirement:

The Safety metaprompt feature helps craft system messages that guide model behavior, not configure or test the content filter thresholds.

Optimizing content filters (e.g., adjusting severity levels for objectionable content) requires using the Content Safety moderation API or Safety evaluations in Azure AI Studio, not the metaprompt tool.

The metaprompt feature does not run tests on sample questions to measure filter performance; it assists in prompt engineering.

Reference:

Azure AI Content Safety – Safety metaprompt – Clarifies that metaprompts inject safety instructions into system messages, not configure or test moderation filters.

Content filter configuration – Testing filters requires APIs or evaluation tools, not Safety metaprompt.
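
To make "testing filter configurations on sample questions" concrete, the sketch below applies two candidate threshold sets to severity scores for two sample inputs. The scores are hypothetical stand-ins for what a text moderation call would return per category, and the threshold values and function name are illustrative assumptions, not recommended settings.

```python
# Hypothetical per-category severity scores for two sample questions,
# shaped like the category/severity pairs a moderation call returns.
samples = {
    "How do I reset my password?": {
        "Hate": 0, "SelfHarm": 0, "Sexual": 0, "Violence": 0,
    },
    "Describe the fight scene in detail.": {
        "Hate": 0, "SelfHarm": 0, "Sexual": 0, "Violence": 4,
    },
}


def blocked_categories(severities: dict, thresholds: dict) -> list:
    """Return the categories whose severity meets or exceeds its threshold."""
    return sorted(c for c, s in severities.items() if s >= thresholds[c])


# Two candidate filter configurations to compare (illustrative values).
strict = {"Hate": 2, "SelfHarm": 2, "Sexual": 2, "Violence": 2}
lenient = {"Hate": 4, "SelfHarm": 4, "Sexual": 4, "Violence": 6}

for text, severities in samples.items():
    print(text)
    print("  strict :", blocked_categories(severities, strict))
    print("  lenient:", blocked_categories(severities, lenient))
```

Comparing which samples each configuration blocks is the kind of test the requirement describes; a metaprompt does not produce this information.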

You are building an app that will analyze documents by using the Azure AI Language service.

You need to identify industry-specific technical terms in the documents. The solution must minimize development effort.

What should you use?

A. key phrase extraction

B. custom named entity recognition (NER)

C. conversational language understanding (CLU)

D. language detection

Explanation:

The requirement is to identify industry-specific technical terms in documents. General key phrase extraction or language detection cannot recognize custom, domain-specific terms. Custom named entity recognition (NER) allows you to train a model to label industry-specific terminology (e.g., medical, legal, or engineering terms) with minimal development effort using Language Studio's labeling interface.

Correct Option:

B. custom named entity recognition (NER)

Designed to extract and label domain-specific entities that are not covered by prebuilt models.

You provide labeled examples (e.g., “Hematoxylin” → StainName), and the model learns to identify similar terms in new documents.

Minimizes effort via Language Studio’s drag-and-drop labeling and automatic model training.

Ideal for technical or industry jargon.

Incorrect Options:

A. key phrase extraction –

Identifies important concepts or topics in general text, but cannot be trained to recognize specific, custom industry terms. Outputs are generic key phrases, not labeled categories.

C. conversational language understanding (CLU) –

Designed for intent and entity detection in conversational utterances (chatbots), not for extracting technical terms from documents.

D. language detection –

Only identifies the language (e.g., English, French) of the input text; has no ability to extract domain-specific terms.

Reference:

Azure AI Language – Custom NER overview – Explains how to train models for industry-specific entities with minimal coding.

Custom NER vs. key phrase extraction – Highlights that key phrase extraction is not customizable for domain-specific terms.
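
A labeled example for custom NER is essentially a span of text tagged with a category. The sketch below builds one such record for the “Hematoxylin” → StainName example from the explanation. The record layout is a simplified, hypothetical shape (the labels file used by the service has more fields), and the sample sentence is invented for illustration.

```python
def make_label(text: str, term: str, category: str) -> dict:
    """Build a simplified span label: category plus character offset and length."""
    offset = text.find(term)
    if offset < 0:
        raise ValueError(f"{term!r} not found in text")
    return {"category": category, "offset": offset, "length": len(term)}


# Invented sample sentence containing the term from the explanation.
sentence = "Sections were stained with Hematoxylin before imaging."
label = make_label(sentence, "Hematoxylin", "StainName")

# The labeled span can be recovered from the offset and length.
start, end = label["offset"], label["offset"] + label["length"]
print(label, sentence[start:end])
```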

You plan to use Microsoft Foundry to implement a Retrieval Augmented Generation (RAG) pattern.

You have grounding data stored in an Azure Files share.

You need to configure a Microsoft Foundry project to ensure that a model can use the grounding data.

Which actions should you perform in sequence? To answer, drag the appropriate actions to the correct order.

Each action may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

Explanation:

To implement RAG, you first create a Foundry project. Next, you need to connect the Azure Files share (grounding data) to the project — this is done by adding a data connection. Finally, you add a model deployment (e.g., GPT-4) so that the model can retrieve and ground responses on the connected data.

Why these actions in this order:

Create a project – The parent container for all resources and configurations in Foundry.

Add a data connection – Establishes the link to the Azure Files share, making the grounding data accessible for indexing and retrieval. Without this, the model cannot access the files.

Add a deployment – Deploys a generative AI model (e.g., GPT-4) that will perform RAG inference using the connected data.

Actions not used (and why):

Add a model – Models are deployed via deployments; "add a model" is not a direct action in this sequence. You add a deployment of a model.

Add a playbook – Playbooks are automation workflows, not required for basic RAG configuration on grounding data.

Reference:

Retrieval Augmented Generation (RAG) in Azure AI Foundry – Explains steps: create project → add data source (connection) → deploy model for RAG.

Add data to a Foundry project – Shows how to add an Azure Files connection as grounding data.

You have an Azure subscription that contains a Microsoft Foundry hub named Hub1, an Azure OpenAI resource named resource1, and a user named User1.

You need to ensure that User1 can create a new Azure Content Understanding in Foundry Tools project in Hub1. The solution must follow the principle of least privilege.

Which role should you assign to User1?

A. Cognitive Services OpenAI Contributor

B. Cognitive Services OpenAI User

C. Azure AI Administrator

D. Azure AI Developer

Explanation:

To create an Azure Content Understanding project in a Microsoft Foundry hub, a user needs access to the Azure OpenAI resource (since Content Understanding uses OpenAI models) and the hub. The principle of least privilege requires the minimal role. Cognitive Services OpenAI User allows a user to use existing OpenAI deployments (including creating projects that consume them) but not manage the resource itself, which is sufficient and less privileged than Contributor.

Correct Option:

B. Cognitive Services OpenAI User

Grants permission to call existing Azure OpenAI deployments (e.g., for Content Understanding inference or project creation).

Does not allow modifying the OpenAI resource (e.g., deleting deployments), following least privilege.

Paired with a Foundry hub role (e.g., Azure AI Developer at hub level) for full project creation, but among the options, this is the correct OpenAI‑specific role.

Incorrect Options:

A. Cognitive Services OpenAI Contributor –

Too broad for least privilege; allows full management of the OpenAI resource, including creating and deleting deployments.

C. Azure AI Administrator –

Very broad role; can manage the entire Foundry hub, OpenAI resource, and all projects. Violates least privilege.

D. Azure AI Developer –

A Foundry hub role, not specific to OpenAI. While needed in the hub, this role alone does not grant access to use the OpenAI deployment for Content Understanding.

Reference:

Azure OpenAI – Cognitive Services OpenAI User role – Provides inference access without resource management.

Azure Content Understanding prerequisites – Requires access to an Azure OpenAI deployment.

You are building an app that will process scanned expense claims and extract and label the following data:

• Merchant information

• Time of transaction

• Date of transaction

• Taxes paid

• Total cost

You need to recommend an Azure AI Document Intelligence model for the app. The solution must minimize development effort.

What should you use?

A. the prebuilt Read model

B. the prebuilt receipt model

C. a custom template model

D. a custom neural model

Explanation:

The required fields — merchant information, transaction time, date, taxes paid, and total cost — exactly match the data extracted from receipts. The prebuilt receipt model in Azure AI Document Intelligence (formerly Form Recognizer) is specifically trained to extract these fields from scanned receipts or expense claims, requiring no custom training and thus minimizing development effort.

Correct Option:

B. the prebuilt receipt model

Extracts merchant name, transaction date, transaction time, taxes, tip, total, and line items from receipts.

Works on scanned images or PDFs of receipts without any custom labeling or training.

Minimizes development effort — just call the model with your document.

Ideal for expense claim automation.

Incorrect Options:

A. the prebuilt Read model –

Extracts raw text, handwritten text, and layout (words, lines, paragraphs), but does not label fields like taxes paid or total cost. You would need post‑processing to identify those values.

C. a custom template model –

Requires manual labeling of training documents. Higher development effort; unnecessary when a prebuilt model already covers the fields.

D. a custom neural model –

Also requires custom training and labeling. Overkill for standard receipt extraction.

Reference:

Azure AI Document Intelligence – Prebuilt receipt model – Lists extracted fields: merchant name, date, time, taxes, total, etc.

Choose a Document Intelligence model – Recommends the receipt model for expense claim automation.
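
The match between the required data and the prebuilt receipt model can be shown as a simple mapping. The field names on the right are the receipt model's field names as best recalled from its schema and should be confirmed against the documentation; the sample values and the flat dictionary shape are hypothetical simplifications of a real analysis result.

```python
# Required data from the question mapped to prebuilt receipt field names
# (assumed from the receipt model schema; confirm before use).
WANTED_FIELDS = {
    "Merchant information": "MerchantName",
    "Time of transaction": "TransactionTime",
    "Date of transaction": "TransactionDate",
    "Taxes paid": "TotalTax",
    "Total cost": "Total",
}


def label_receipt(fields: dict) -> dict:
    """Pick the wanted fields out of a simplified receipt result."""
    return {label: fields.get(name) for label, name in WANTED_FIELDS.items()}


# Hypothetical, already-simplified field values for one receipt.
sample = {
    "MerchantName": "Contoso",
    "TransactionDate": "2024-03-01",
    "TransactionTime": "13:45:00",
    "TotalTax": 1.20,
    "Total": 13.20,
    "Tip": 0.0,
}

for label, value in label_receipt(sample).items():
    print(f"{label}: {value}")
```

Every required item maps to a field the model already returns, so no labeling or training step is needed.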
