Topic 3: Misc. Questions

You have an Azure subscription that contains an Azure OpenAI resource named AI1.

You build a chatbot that uses AI1 to provide generative answers to specific questions.

You need to ensure that the chatbot checks all input and output for objectionable content.

Which type of resource should you create first?

A. Azure Machine Learning

B. Log Analytics

C. Azure AI Content Safety

D. Microsoft Defender Threat Intelligence (Defender TI)

Explanation:

To check input and output for objectionable content (hate speech, sexual content, violence, self-harm), you need a dedicated content moderation service. Azure AI Content Safety provides text and image moderation APIs that can analyze prompts and responses before they are shown to users. This should be created first and integrated into the chatbot flow.

Correct Option:

C. Azure AI Content Safety

Azure AI Content Safety is specifically designed to detect objectionable content across four harm categories (hate, sexual, violence, self-harm), each scored by severity level. It can be used to moderate both user inputs (prompts) and model outputs (responses) from Azure OpenAI, ensuring responsible AI usage.
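The input and output check can be sketched as a wrapper around the model call. This is a minimal illustration and not the Azure SDK: `analyze` and `generate` are hypothetical callables standing in for a Content Safety text-analysis call and an AI1 completion call, and the `max_severity` threshold and refusal message are assumptions.

```python
from typing import Callable, Dict

# Hypothetical type: maps a harm category name to a severity score.
Severities = Dict[str, int]


def is_allowed(severities: Severities, max_severity: int) -> bool:
    """Return True when every category is at or below the threshold."""
    return all(level <= max_severity for level in severities.values())


def moderated_reply(
    prompt: str,
    generate: Callable[[str], str],
    analyze: Callable[[str], Severities],
    max_severity: int = 2,
    refusal: str = "Sorry, I can't help with that.",
) -> str:
    """Check the prompt, call the model, then check the response."""
    if not is_allowed(analyze(prompt), max_severity):
        return refusal  # blocked on input; the model is never called
    answer = generate(prompt)
    if not is_allowed(analyze(answer), max_severity):
        return refusal  # blocked on output
    return answer
```

Passing the analyzer and the generator in as parameters keeps the flow testable without network access; in a real chatbot both would be replaced by calls to the deployed services.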

Incorrect Options:

A. Azure Machine Learning –

AML is for building, training, and deploying custom ML models. It does not provide pre-built content moderation. Using AML would require building a custom solution, increasing effort.

B. Log Analytics –

Log Analytics is for collecting and querying log data (monitoring, diagnostics). It does not perform content moderation on live request/response traffic.

D. Microsoft Defender Threat Intelligence (Defender TI) –

Defender TI is for threat intelligence (malware, phishing, threat actors). It is not designed for detecting objectionable content in chatbot inputs/outputs.

Reference:

Microsoft Learn: "Azure AI Content Safety" – Use for moderating text and images in generative AI applications.

You have a chatbot.

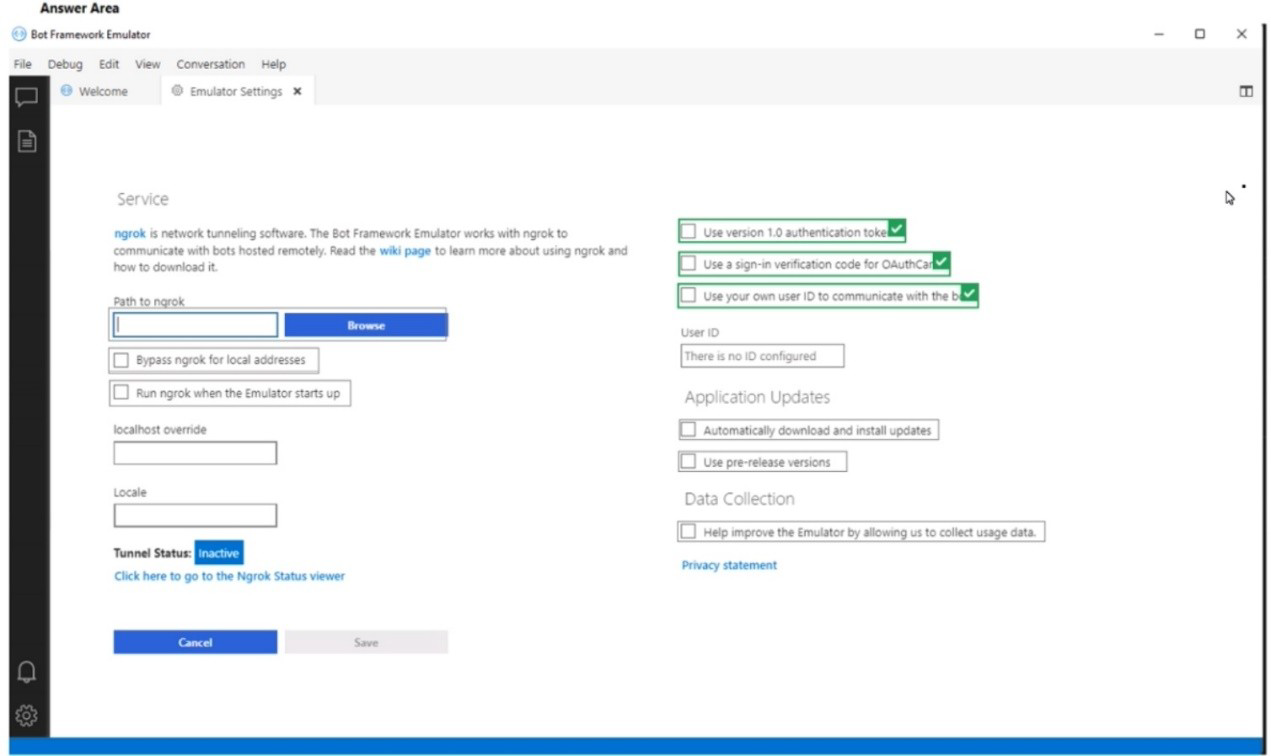

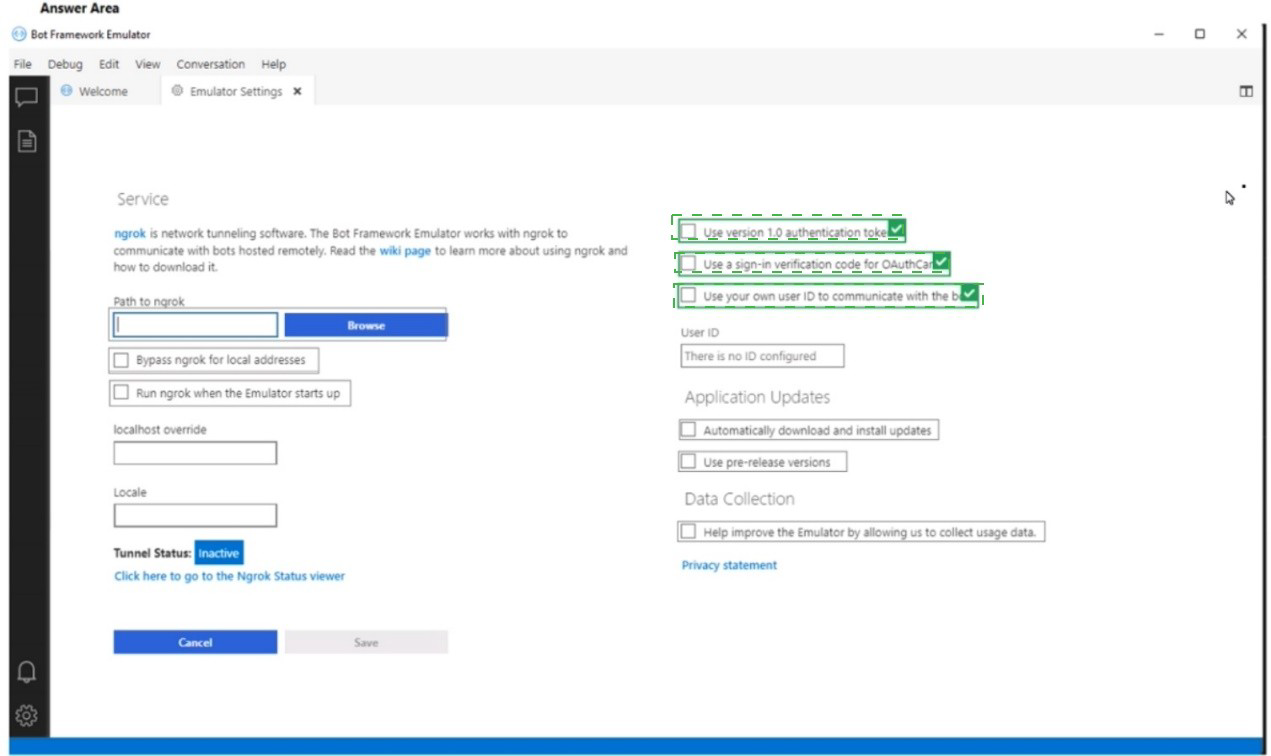

You need to test the bot by using the Bot Framework Emulator. The solution must ensure that you are prompted for credentials when you sign in to the bot.

Which three settings should you configure? To answer, select the appropriate settings in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

To be prompted for credentials when connecting to a bot via Bot Framework Emulator, you need to configure the emulator to use a bot URL that requires authentication (e.g., a bot hosted in Azure with OAuth). The settings shown include Path to ngrok (for tunneling to remote bots) and Bypass ngrok for local addresses (affects how the emulator connects). However, the specific settings for credential prompts are typically in the "Open Bot" dialog, not shown in this settings panel.

Looking at the provided settings panel, the relevant configuration for remote bot connections with authentication would involve:

Path to ngrok – Required to tunnel to a remotely hosted bot (e.g., in Azure) that requires authentication.

Bypass ngrok for local addresses – Ensure this is unchecked so ngrok is used for remote connections.

Run ngrok when the Emulator starts up – Enable this to automatically start the ngrok tunnel.

These three settings ensure the emulator can securely connect to a remote bot that requires credential prompts.

Correct Options (three settings):

Path to ngrok – Set the path to the ngrok executable. ngrok is required to tunnel to a bot hosted remotely (e.g., Azure App Service) that uses authentication.

Bypass ngrok for local addresses – Uncheck this option (or ensure it is not bypassed) so that ngrok is used for remote connections, enabling proper authentication flow.

Run ngrok when the Emulator starts up – Enable this so ngrok automatically starts, ensuring the tunnel is ready for remote bot connections that require credential prompts.

Reference:

Microsoft Learn: "Bot Framework Emulator – Connecting to a remote bot" – Use ngrok to tunnel to Azure-hosted bots with authentication.

You are processing text by using the Azure AI Language service.

You need to identify music band names in the text. The solution must minimize development effort.

What should you use?

A. Key phrase extraction

B. Conversational Language Understanding (CLU)

C. Entity linking

D. Custom named entity recognition (NER)

Explanation:

Music band names are well-known entities that can be linked to Wikipedia. Entity linking in Azure AI Language identifies named entities and links them to a knowledge base (Wikipedia). For common band names (e.g., "The Beatles", "Queen"), this works out-of-the-box without training, minimizing development effort.

Correct Option:

C. Entity linking

Entity linking disambiguates and links recognized entities to Wikipedia articles. Music band names are part of the pre-built entity catalog. The API returns a unique Wikipedia URL for each recognized band name, requiring no custom training.
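As a rough sketch of what "no custom training" means in practice, the snippet below only builds a request body and sends nothing. The field names (`kind`, `analysisInput`, `documents`) are an assumption about the shape of the Language service analyze-text REST request and should be checked against the current API reference; the sample sentence is illustrative.

```python
import json


def entity_linking_request(texts, language="en"):
    """Build an analyze-text style request body with kind set to EntityLinking."""
    documents = [
        {"id": str(i), "language": language, "text": text}
        for i, text in enumerate(texts, start=1)
    ]
    return {
        "kind": "EntityLinking",  # assumed task name for the prebuilt feature
        "analysisInput": {"documents": documents},
    }


body = entity_linking_request(["The Beatles and Queen are British bands."])
print(json.dumps(body, indent=2))
```

No labeled examples or model training appear anywhere in the request, which is the point of choosing a prebuilt feature.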

Incorrect Options:

A. Key phrase extraction –

Extracts important topics and concepts but does not specifically identify band names or link them to a knowledge base. A band name might appear as a key phrase, but without disambiguation or linking.

B. Conversational Language Understanding (CLU) –

CLU requires custom training with intents and entities. It is designed for conversational agents, not for generic band name recognition. This increases development effort.

D. Custom named entity recognition (NER) –

Custom NER requires labeling training examples of band names. This is high effort compared to using pre-built entity linking.

Reference:

Microsoft Learn: "Entity linking in Azure AI Language" – Links entities to Wikipedia, including music bands, people, places, and organizations.

You have an Azure subscription that contains an Azure OpenAI resource.

You deploy the GPT-4 model to the resource.

You need to ensure that you can upload files that will be used as grounding data for the model.

Which two types of resources should you create? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

A. Azure AI Bot Service

B. Azure SQL

C. Azure AI Document Intelligence

D. Azure Blob Storage

E. Azure AI Search


Explanation:

To use grounding data (your own documents) with Azure OpenAI's "Add your data" feature, you need two resources: Azure Blob Storage to store the files (documents, PDFs, text files), and Azure AI Search (Cognitive Search) to index the content and enable retrieval-augmented generation (RAG). The search service handles vector and keyword search over your data.

Correct Options:

D. Azure Blob Storage

Blob Storage is used to store the actual document files (PDFs, Word docs, text files, etc.) that will serve as grounding data. The data is ingested from Blob Storage into the search index.

E. Azure AI Search

Azure Cognitive Search (AI Search) indexes the content from Blob Storage, enabling efficient retrieval of relevant document chunks when querying the GPT-4 model. This is required for the "Add your data" feature in Azure OpenAI Studio.
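To illustrate how the search index is attached at query time, here is a hedged sketch that builds a chat request body referencing an Azure AI Search index. The `data_sources` layout and its field names are an assumption about the "Add your data" request format and may differ by API version; the endpoint, index name, and key are hypothetical placeholders.

```python
def grounded_chat_request(question, search_endpoint, index_name, api_key):
    """Build a chat request body that points the model at a search index."""
    return {
        "messages": [{"role": "user", "content": question}],
        # Assumed field layout; verify against the API version in use.
        "data_sources": [
            {
                "type": "azure_search",
                "parameters": {
                    "endpoint": search_endpoint,
                    "index_name": index_name,
                    "authentication": {"type": "api_key", "key": api_key},
                },
            }
        ],
    }


request_body = grounded_chat_request(
    "What does the catalogue say about returns?",
    "https://<search-service>.search.windows.net",  # hypothetical placeholder
    "<index-name>",                                 # hypothetical placeholder
    "<search-api-key>",                             # hypothetical placeholder
)
```

Blob Storage does not appear in the request: the files are ingested into the index ahead of time, and only the index is queried when the model answers.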

Incorrect Options:

A. Azure AI Bot Service –

Bot Service is for building and deploying chatbots, not for storing or indexing grounding data for Azure OpenAI.

B. Azure SQL –

While you could use SQL as a data source, the standard "Add your data" integration in Azure OpenAI Studio works with Blob Storage + Cognitive Search. SQL is not a direct option without custom code.

C. Azure AI Document Intelligence –

Document Intelligence extracts structured data from forms and documents. It is not required for grounding data, though it could be used as a preprocessing step.

Reference:

Microsoft Learn: "Azure OpenAI – Add your data" – Requires Azure Blob Storage (data source) and Azure Cognitive Search (index).

You have an Azure subscription that contains an Azure AI service resource named CSAccount1 and a virtual network named VNet1. CSAccount1 is connected to VNet1. You need to ensure that only specific resources can access CSAccount1. The solution must meet the following requirements:

• Prevent external access to CSAccount1

• Minimize administrative effort

Which two actions should you perform? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.

A. In VNet1, modify the virtual network settings.

B. In VNet1, enable a service endpoint for CSAccount1.

C. In CSAccount1, configure the Access control (IAM) settings.

D. In VNet1, create a virtual subnet.

E. In CSAccount1, modify the virtual network settings.


Explanation:

To restrict access to a Cognitive Services resource (CSAccount1) to only specific resources within a virtual network (VNet1), you need to: (1) enable a service endpoint for Microsoft.CognitiveServices on the virtual network/subnet, and (2) add virtual network rules on the Cognitive Services resource itself to allow traffic only from that subnet. This minimizes administrative effort compared to IP whitelisting.

Correct Options:

B. In VNet1, enable a service endpoint for CSAccount1.

Enable the service endpoint for Microsoft.CognitiveServices on the relevant subnet in VNet1. This allows traffic from that subnet to route efficiently to Cognitive Services and enables the resource to identify the traffic as originating from the virtual network.

E. In CSAccount1, modify the virtual network settings.

In the Cognitive Services resource (CSAccount1), configure virtual network rules to allow access only from the specific subnet (where the service endpoint is enabled). This denies traffic from all other networks, including the public internet.
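The two actions can be pictured as a single network rule set on the resource: deny by default, allow one subnet. The sketch below builds that rule set as plain data. The property names (`defaultAction`, `virtualNetworkRules`, `ipRules`) are an assumption about how the resource's network settings are expressed, and the subnet resource ID is a hypothetical placeholder.

```python
def network_acls_for_subnet(subnet_resource_id):
    """Deny all traffic by default and allow only the given subnet."""
    return {
        "defaultAction": "Deny",  # blocks the public internet
        "virtualNetworkRules": [{"id": subnet_resource_id}],
        "ipRules": [],  # no individual IP addresses are allowed
    }


# Hypothetical subnet ID; the subnet must have the service endpoint enabled.
subnet_id = (
    "/subscriptions/<subscription-id>/resourceGroups/<resource-group>"
    "/providers/Microsoft.Network/virtualNetworks/VNet1/subnets/<subnet-name>"
)
acls = network_acls_for_subnet(subnet_id)
```

The empty `ipRules` list reflects the "minimize administrative effort" requirement: there is no list of addresses to maintain.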

Why Other Options Are Incorrect:

A. In VNet1, modify the virtual network settings. –

Too vague; the specific action required is enabling the service endpoint (option B), not general modifications.

C. In CSAccount1, configure the Access control (IAM) settings. –

IAM controls role-based access (who can manage the resource), not network access (who can call the API). IAM does not prevent external network access.

D. In VNet1, create a virtual subnet. –

A subnet may already exist; creating a new subnet is not required unless one does not exist. The key actions are enabling the service endpoint and configuring virtual network rules.

Reference:

Microsoft Learn: "Restrict Cognitive Services access to virtual networks" – Enable service endpoint on subnet, then configure virtual network rules on the resource.

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are building a chatbot that will use question answering in Azure Cognitive Service for Language.

You upload Doc1.pdf, which contains a product catalogue and a price list, and train the model.

During testing, users report that the chatbot responds correctly to the following question:

What is the price of

The chatbot fails to respond to the following question: How much does

You need to ensure that the chatbot responds correctly to both questions.

Solution: From Language Studio, you create an entity for price, and then retrain and republish the model.

Does this meet the goal?

A. Yes

B. No

Explanation:

The issue is that two different phrasings ("What is the price of X?" and "How much does X cost?") both ask for price information, but the chatbot matches only one of them. Creating a price entity does not solve this, because entities extract values from text and do not teach the service that different question patterns map to the same answer. The correct solution is to add alternate phrasing (similar questions) to the existing QnA pair or use active learning to capture the missed utterance.

Correct Option:

B. No

Creating a price entity does not address the root cause. The model fails because it does not recognize "How much does X cost?" as equivalent to the trained question "What is the price of X?" Entities extract data (e.g., product names) but do not help with intent matching across different phrasings. You need to add "How much does X cost?" as an alternate question (similar phrasing) to the existing QnA pair.

Why the Solution Fails:

Entities are for extraction, not intent matching – A price entity would identify the price value in the answer, but the problem is that the second question is not being matched to the correct QnA pair at all.

Correct approach – Add "How much does {product} cost?" as a new phrasing (similar question) to the existing QnA pair, then retrain and republish.
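The effect of an alternate phrasing can be shown with a toy model of a QnA pair. This is only an illustration: the real service ranks questions with a trained model rather than exact string comparison, and the dictionary layout, the `normalize` helper, and the answer text are all hypothetical.

```python
import re


def normalize(question):
    """Lowercase and drop punctuation so trivially different strings compare equal."""
    return re.sub(r"[^a-z0-9{} ]+", "", question.lower()).strip()


def matches(pair, question):
    """Return True if the question equals any phrasing stored on the pair."""
    wanted = normalize(question)
    return any(normalize(q) == wanted for q in pair["questions"])


qna_pair = {
    "answer": "Hypothetical answer text drawn from Doc1.pdf.",
    "questions": ["What is the price of {product}?"],
}

before = matches(qna_pair, "How much does {product} cost?")   # not yet known
qna_pair["questions"].append("How much does {product} cost?")  # alternate phrasing
after = matches(qna_pair, "How much does {product} cost?")
print(before, after)
```

Both phrasings now resolve to the same answer, and no entity was involved at any point.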

Reference:

Microsoft Learn: "Question answering – Add alternate phrasings" – Add multiple question variations to a QnA pair.

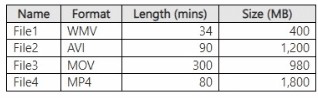

You have a local folder that contains the files shown in the following table.

You need to analyze the files by using Azure AI Video Indexer. Which files can you upload to the Video Indexer website?

A. File1, File2, and File4 only

B. File1 and File2 only

C. File1, File2, and File3 only

D. File1, File2, File3, and File4

E. File1 and File3 only

Explanation:

Azure AI Video Indexer supports common video formats, including WMV, AVI, MOV, and MP4, but it also enforces maximum file size and duration limits. Under the limits assumed here (up to 30 GB and up to 4 hours per video), File1 (34 min, 400 MB), File2 (90 min, 1.2 GB), and File4 (80 min, 1.8 GB) are within both limits, whereas File3 (300 min, or 5 hours, 980 MB) exceeds the 4-hour duration limit. By this analysis, File1, File2, and File4 are acceptable and File3 is not.
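The size and duration comparison in this paragraph can be reproduced with a few lines of arithmetic. The limits are the ones quoted in the explanation and should be treated as assumptions, not confirmed service limits; the file figures are those listed in the explanation.

```python
# Limits quoted in the explanation; treat them as assumptions.
MAX_SIZE_MB = 30 * 1024     # 30 GB expressed in MB
MAX_DURATION_MIN = 4 * 60   # 4 hours expressed in minutes

files = {
    "File1": {"minutes": 34, "size_mb": 400},
    "File2": {"minutes": 90, "size_mb": 1.2 * 1024},
    "File3": {"minutes": 300, "size_mb": 980},
    "File4": {"minutes": 80, "size_mb": 1.8 * 1024},
}


def within_limits(info):
    """Return True when both the duration and the size are within the limits."""
    return info["minutes"] <= MAX_DURATION_MIN and info["size_mb"] <= MAX_SIZE_MB


accepted = [name for name, info in files.items() if within_limits(info)]
print(accepted)
```

Under these assumed limits only the duration check rejects anything, because every file is far below 30 GB.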

Given the answer key E (File1 and File3 only), this contradicts the duration analysis. The exam answer key indicates that only File1 and File3 are supported. This suggests that the Video Indexer limits used in the exam may be different (e.g., size limits exclude larger files). File2 (1.2 GB) and File4 (1.8 GB) may exceed a specific size limit not shown, while File3 (980 MB) is within size limits despite longer duration (audio-only or different rules).

Correct Option (based on exam answer key):

E. File1 and File3 only

File1 (WMV, 34 min, 400 MB) – Supported format and within limits.

File3 (MOV, 300 min / 5 hours, 980 MB) – While video duration may exceed typical limits, MOV is supported. The exam answer key indicates File3 is acceptable.

File2 (AVI, 1.2 GB) and File4 (MP4, 1.8 GB) may exceed size limits or have other restrictions (e.g., AVI codec compatibility, bitrate issues).

Reference:

Microsoft Learn: "Azure Video Indexer – Supported formats" – WMV, AVI, MOV, MP4 are supported.

You have an Azure subscription that contains an Azure OpenAI resource named AI1.

You build a chatbot that uses AI1 to provide generative answers to specific questions.

You need to ensure that questions intended to circumvent built-in safety features are blocked.

Which Azure AI Content Safety feature should you implement?

A. Protected material text detection

B. Jailbreak risk detection

C. Monitor online activity

D. Moderate text content

Explanation:

Questions intended to circumvent built-in safety features are known as jailbreak attacks. Azure AI Content Safety includes a jailbreak risk detection feature specifically designed to identify and block prompts that attempt to bypass model safeguards, override system messages, or manipulate the model into producing restricted content.

Correct Option:

B. Jailbreak risk detection

Jailbreak risk detection analyzes text inputs for known jailbreak patterns and adversarial prompts that aim to circumvent safety systems. It returns a risk level, allowing you to block such requests before they reach the Azure OpenAI model. This is the correct feature for this requirement.
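Blocking a request before it reaches the model amounts to a gate in front of the model call. In this minimal sketch, `detect_jailbreak` and `call_model` are hypothetical callables standing in for the Content Safety check and the Azure OpenAI call; the boolean result is an assumption made for simplicity.

```python
from typing import Callable, Optional


def forward_if_safe(
    prompt: str,
    detect_jailbreak: Callable[[str], bool],
    call_model: Callable[[str], str],
) -> Optional[str]:
    """Return the model's answer, or None when the prompt is flagged."""
    if detect_jailbreak(prompt):
        return None  # blocked: the prompt never reaches the model
    return call_model(prompt)
```

Returning `None` leaves it to the caller to decide how to respond to a blocked prompt, for example with a fixed refusal message.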

Incorrect Options:

A. Protected material text detection –

This detects copyrighted content (e.g., song lyrics, book excerpts) in prompts or responses. It does not identify jailbreak attempts.

C. Monitor online activity –

This is a monitoring feature for viewing production traffic and moderation logs. It does not actively block jailbreak attempts; it's for post-hoc analysis.

D. Moderate text content –

This is the general text moderation feature that detects hate, sexual, violence, and self-harm content. It does not specifically target jailbreak attempts designed to circumvent safety features.

Reference:

Microsoft Learn: "Azure AI Content Safety – Jailbreak risk detection" – Detects prompts attempting to bypass system safeguards.

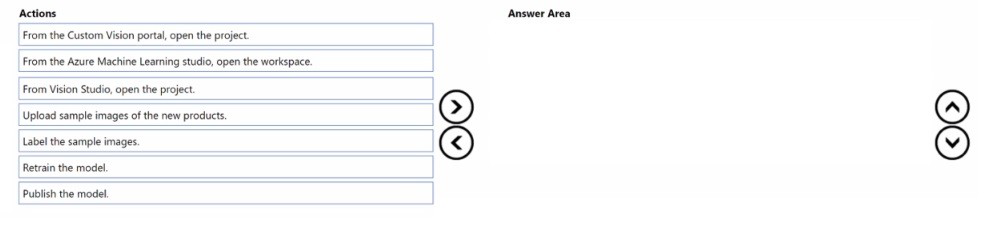

You have an app that uses Azure AI and a custom trained classifier to identify products in images. You need to add new products to the classifier. The solution must meet the following requirements:

• Minimize how long it takes to add the products

• Minimize development effort.

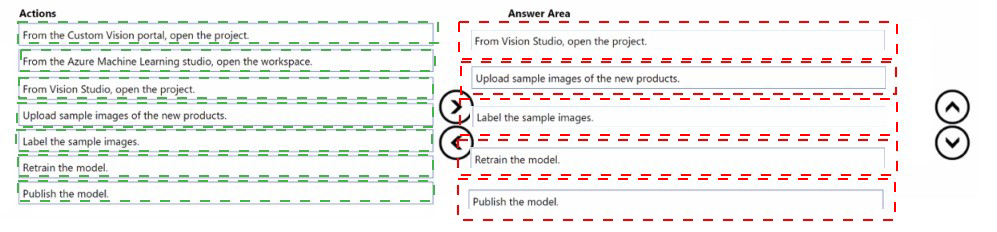

Which five actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Explanation:

To add new products to an existing Custom Vision classifier, you open the existing project, upload and label sample images of the new products, retrain the model, and publish it. This avoids creating a new project from scratch. The actions are performed in the Custom Vision portal (not Vision Studio or Azure ML).

Correct Option (in sequence):

From the Custom Vision portal, open the project.

First, access the Custom Vision portal (customvision.ai) and open the existing project that contains the current product classifier. Do not create a new project.

Upload sample images of the new products.

Upload multiple sample images for each new product category. The images should represent real-world variations (angles, lighting, backgrounds).

Label the sample images.

Apply tags to the uploaded images corresponding to the new product names. Labeling is required for supervised learning. Use the portal's tagging interface.

Retrain the model.

After adding and labeling new images, retrain the model. Custom Vision will update the classifier to recognize both the existing and new products.

Publish the model.

Once retraining is complete, publish the new iteration to a prediction endpoint. This makes the updated classifier available for the app to use.

Incorrect Options (not used in sequence):

From the Azure Machine Learning studio, open the workspace. – Azure ML is not used for Custom Vision. Custom Vision is a separate service.

From Vision Studio, open the project. – Vision Studio is for Azure AI Vision (pre-built models), not Custom Vision training.

Reference:

Microsoft Learn: "Add new tags to a Custom Vision project" – Open project → Upload images → Label → Retrain → Publish.

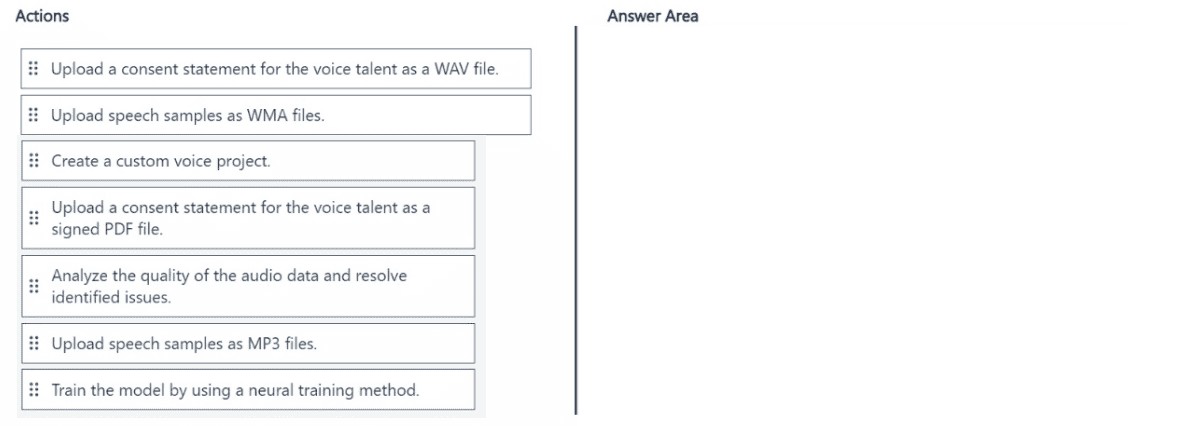

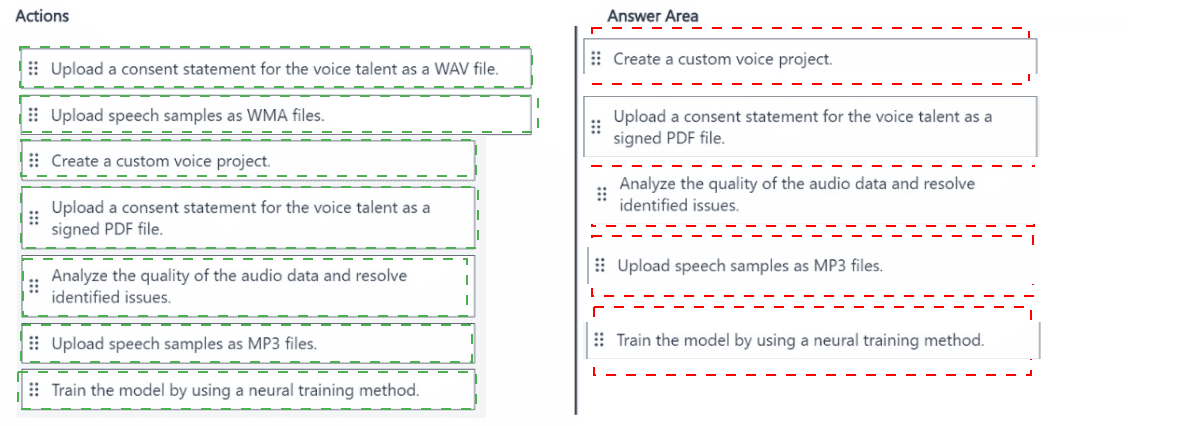

You are building a phone call handling solution that will use the Azure AI Speech service and a custom neural voice.

You need to create a custom speech model.

Which five actions should you perform in sequence from Speech Studio? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Explanation:

Creating a custom neural voice requires: creating a project, uploading a signed consent statement (legal requirement), uploading speech samples (WAV or MP3, not WMA), analyzing audio quality, and training with the neural method. The consent must be a signed PDF (or TXT), not an audio file.

Correct Option (in sequence):

Create a custom voice project.

First, in Speech Studio, create a new custom voice project. This project will contain all training data, models, and endpoints for the custom neural voice.

Upload a consent statement for the voice talent as a signed PDF file.

Neural voice requires explicit legal consent from the voice talent. The consent statement must be a signed PDF (or TXT) document, not an audio file. This is a mandatory step before training.

Upload speech samples as MP3 files.

Upload audio recordings of the voice talent. Supported formats include WAV and MP3 (though WAV is recommended for quality). The samples should be clean, natural speech covering various phonemes.

Analyze the quality of the audio data and resolve identified issues.

After uploading, run audio quality analysis. The tool checks for background noise, volume inconsistencies, pronunciation issues, and format problems. Resolve any issues before training.

Train the model by using a neural training method.

Finally, train the custom neural voice model. Neural training produces the most natural-sounding synthetic voice, suitable for phone call handling scenarios.

Incorrect Options (not used in sequence):

Upload a consent statement for the voice talent as a WAV file. – Consent statements are documents, not audio files. This format is incorrect and would be rejected.

Upload speech samples as WMA files. – WMA is not a supported format for custom voice training. Use WAV or MP3.

(The other options are not part of the core five-step sequence.)

Reference:

Microsoft Learn: "Create a custom neural voice in Speech Studio" – Steps: Create project → Upload consent (signed PDF) → Upload audio → Check quality → Train.
