Topic 2, Adventure Works

Case study

This is a case study. Case studies are not timed separately. You can use as much

exam time as you would like to complete each case. However, there may be additional

case studies and sections on this exam. You must manage your time to ensure that you

are able to complete all questions included on this exam in the time provided.

To answer the questions included in a case study, you will need to reference information

that is provided in the case study. Case studies might contain exhibits and other resources

that provide more information about the scenario that is described in the case study. Each

question is independent of the other questions in this case study.

At the end of this case study, a review screen will appear. This screen allows you to review

your answers and to make changes before you move to the next section of the exam. After

you begin a new section, you cannot return to this section.

Background

Current environment

Adventure Works Cycles wants to replace their paper-based bicycle manufacturing

business with an efficient paperless solution. The company has one manufacturing plant in

Seattle that produces bicycle parts, assembles bicycles, and distributes finished bicycles to

the Pacific Northwest.

Adventure Works Cycles has a retail location that performs bicycle repair and warranty

repair work. The company has six maintenance vans that repair bicycles at various events

and residences.

Adventure Works Cycles recently deployed Dynamics 365 Finance and Dynamics 365

Manufacturing in a Microsoft-hosted environment for financials and manufacturing. The

company plans to leverage the Microsoft Power Platform to migrate all of their distribution

and retail workloads to Dynamics 365 Unified Operations.

The customer uses Dynamics 365 Sales, Dynamics 365 Customer Service, and Dynamics

365 Field Service.

Retail store information

Adventure Works Cycles has one legal entity, four warehouses, and six field service

technicians.

Warehouse counting is performed manually by using a counting journal. All

warehouse boxes and items are barcoded.

The Adventure Works Cycles retail location performs bicycle inspections and

performance tune-ups.

Technicians use paper forms to document the bicycle inspection performed before

a tune-up and any additional work performed on the bicycle.

Adventure Works Cycles uses a Power Apps app for local bike fairs to attract new

customers.

A canvas app is being developed to capture customer information when customers

check in at the retail location. The app has the following features:

Technology

Requirements

A plug-in for Dynamics 365 Sales automatically calculates the total billed time from

all activities on a particular customer account, including sales representative visits,

phone calls, email correspondence, and repair time compared with hours spent.

A shipping API displays shipping rates and tracking information on sales orders.

The contract allows for 3,000 calls per month.

Ecommerce orders are processed in batch daily by using a manual import of sales

orders in Dynamics 365 Finance.

Microsoft Teams is used for all collaboration.

All testing and problem diagnostics are performed in a copy of the production

environment.

Customer satisfaction surveys are recorded with Microsoft Forms Pro. Survey

replies from customers are sent to a generic mailbox.

Automation

A text message must be automatically sent to a customer to confirm an

appointment and to notify when a technician is en route that includes their location.

Ecommerce sales orders must be integrated into Dynamics 365 Finance and then

exported to Azure every night.

A text alert must be sent to employees scheduled to assist in the repair area of the

retail store if the number of repair check-ins exceeds eight.

Submitted customer surveys must generate an email to the correct department.

Approval and follow-up must occur within a week.

Reporting

The warehouse manager’s dashboard must contain warehouse counting variance

information.

A warehouse manager needs to quickly view warehouse KPIs by using a mobile

device.

Power BI must be used for reporting across the organization.

User experience

Warehouse counting must be performed by using a mobile app that scans

barcodes on boxes.

All customer repairs must be tracked in the system no matter where they occur.

Qualified leads must be collected from local bike fairs.

Issues

Internal

User1 reports receiving an intermittent plug-in error when viewing the total billed

customer time.

User2 reports that Azure consumption for API calls has increased significantly to

100 calls per minute in the last month.

User2 reports that sales orders have increased.

User5 receives the error message: ‘Endpoint unavailable’ during a test of the

technician dispatch ISV solution.

The parts department manager who is the approver for the department is currently

on sabbatical.

External

CustomerB reports that the check-in app returned only one search result for their

last name, which is not the correct name.

Nine customers arrive in the repair area of the retail store, but no texts were sent

to scheduled employees.

Customers report that the response time from the information email listed on the

Adventure Works Cycles website is greater than five days.

CustomerC requested additional information from the parts department through

the customer survey and has not received a response one week later.

A company has a Common Data Service (CDS) environment.

All accounts in the system with a relationship type of Customer set must have an account

number. A plug-in has been developed.

When a Customer is updated with a relationship type, the plug-in sets the account number

if not provided by the user.

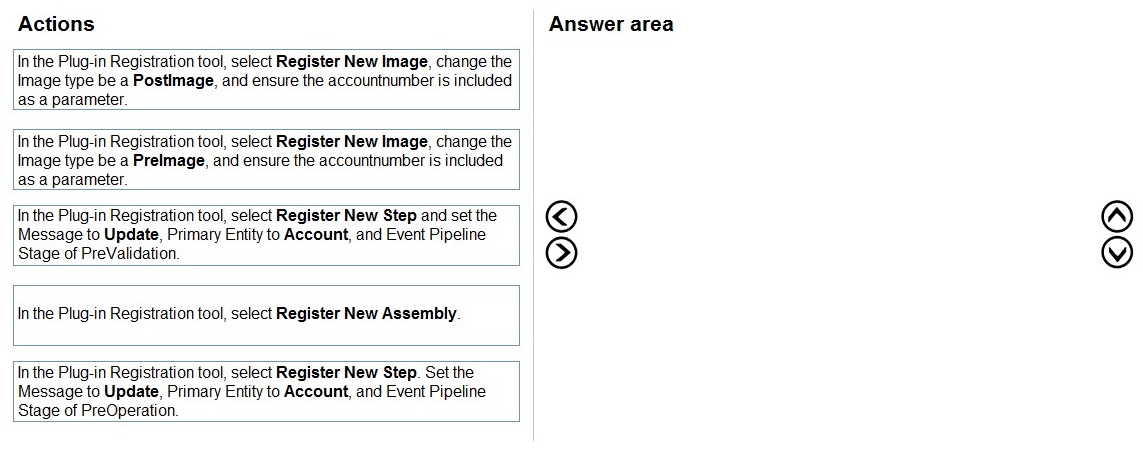

You need to register the plug-in.

Which three actions should you perform in sequence? To answer, move the appropriate

actions from the list of actions to the answer area and arrange them in the correct order.

Explanation:

This question assesses your knowledge of plug-in registration in Dataverse. The scenario requires a plug-in that sets an account number automatically when a Customer (Account) is updated with a specific relationship type, but only if the user did not provide one. To accomplish this, the plug-in needs to execute before the update operation saves to the database (PreOperation) and must have access to the current values to determine if an account number was provided.

Correct Sequence:

In the Plug-in Registration tool, select Register New Assembly.

In the Plug-in Registration tool, select Register New Step. Set the Message to Update, Primary Entity to Account, and Event Pipeline Stage of PreOperation.

In the Plug-in Registration tool, select Register New Image, change the Image type to be a PreImage, and ensure the accountnumber is included as a parameter.

Explanation of Sequence:

Register New Assembly: The first step is always to register the compiled plug-in assembly (.dll) that contains your custom code. This makes the plug-in available in the Dataverse environment.

Register New Step with PreOperation: Registering a step defines when and for which event your plug-in executes. The PreOperation stage runs before the main system operation and inside the database transaction, so it is the right place for the plug-in to set the account number before the record is saved.

Register New Image as PreImage: A PreImage captures the entity's state before the update occurs. This is essential because the plug-in needs to check whether the accountnumber field originally had a value to determine if the user provided one during this update.

Why this sequence works:

Assembly registration must happen before you can register steps

PreOperation stage ensures the plug-in runs before the data is committed, allowing modifications

PreImage provides access to the original values to compare with the incoming update

Why other options are incorrect:

PostImage: A post-image is a snapshot taken after the main operation, so it is not available to a PreOperation step and comes too late for the plug-in to modify the data

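PreValidation: Runs before the main operation and outside the database transaction; it is meant for logic that can cancel the operation, whereas changes to the values being saved belong in PreOperation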

Register New Image before step registration: You must register the step first, then add images to that specific step
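
The decision the plug-in makes in the PreOperation step can be sketched as follows. This is a Python simulation, not plug-in code (a real plug-in is a .NET class). The function name `set_account_number`, the dictionaries standing in for the Target and the PreImage, the `next_number` callback, and the option value 3 for the Customer relationship type are all assumptions made for illustration.

```python
from typing import Any, Callable, Dict

CUSTOMER = 3  # assumed option value for the "Customer" relationship type


def set_account_number(
    target: Dict[str, Any],
    pre_image: Dict[str, Any],
    next_number: Callable[[], str],
) -> Dict[str, Any]:
    """Simulate a PreOperation step registered on Update of account.

    target    -- only the attributes submitted in this update
    pre_image -- the record's values before the update
    """
    # Submitted values win; anything not submitted falls back to the pre-image.
    effective = {**pre_image, **target}

    if effective.get("customertypecode") != CUSTOMER:
        return target  # not a Customer account, nothing to do

    if not effective.get("accountnumber"):
        # Changing the target before the main operation means the new value
        # is saved as part of the same update.
        target["accountnumber"] = next_number()
    return target


if __name__ == "__main__":
    updated = set_account_number(
        {"customertypecode": CUSTOMER},
        {"name": "Contoso", "accountnumber": None},
        lambda: "ACC-0001",
    )
    print(updated)
```

The pre-image matters because an update carries only the changed columns: without it, the step could not tell an account that already has a number from one that never had one.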

Reference:

Register a plug-in, Event execution pipeline, PreOperation stage, PreImage and PostImage - Microsoft Learn

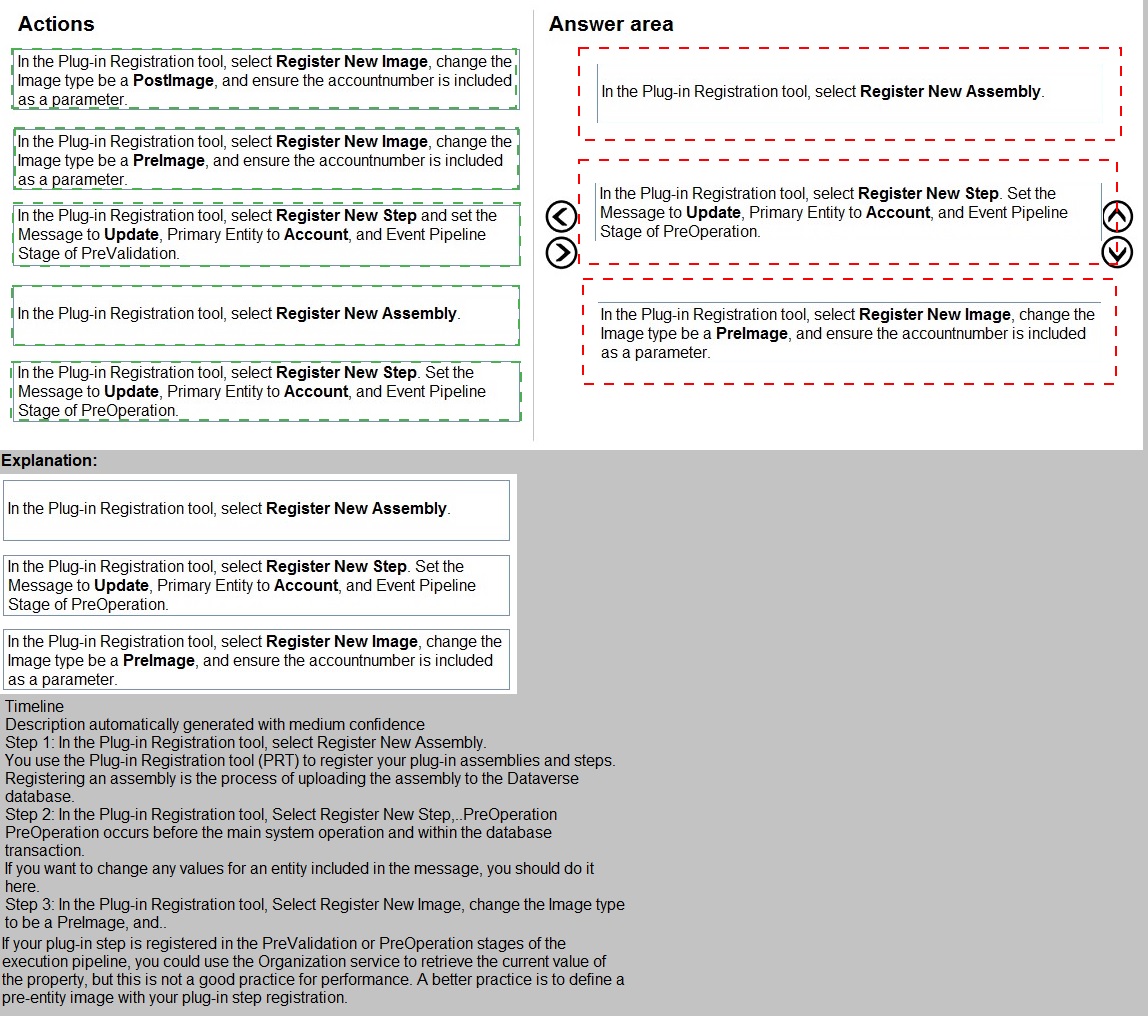

Teachers in a school district use Azure skill bots to teach specific classes. Students sign

into an online portal to submit completed homework to their teacher for review. Students

use a Power Virtual Agents chatbot to request help from teachers.

You need to incorporate the skill bot for each class into the homework bot.

Which three actions should you perform in sequence? To answer, move the appropriate

actions from the list of actions to the answer area and arrange them in the correct order.

Explanation:

This question tests your understanding of integrating skill bots with a Power Virtual Agents chatbot. In this scenario, multiple Azure skill bots (for specific classes) need to be incorporated into a main homework bot (Power Virtual Agents chatbot). The process requires proper registration and configuration to enable the skill bot to be called from within the Power Virtual Agents bot.

Correct Sequence:

Register the skill bot in Azure Active Directory.

Create a manifest for the skill bot.

Register the skill bot in Power Virtual Agents.

Explanation of Sequence:

Register the skill bot in Azure Active Directory: The skill bot must first be registered as an application in Azure AD. This creates a service principal and generates the necessary App ID and client credentials that will be used for authentication between bots.

Create a manifest for the skill bot: A skill manifest is a JSON file that describes the skill bot's capabilities, endpoints, and available actions. This manifest is what the Power Virtual Agents bot will consume to understand how to interact with the skill.

Register the skill bot in Power Virtual Agents: Finally, you add the skill to the Power Virtual Agents homework bot using the manifest and Azure AD registration details. This makes the skill bot's capabilities available to be called from within the homework bot's conversations.

Why this sequence is correct:

Azure AD registration must happen first to establish identity and security credentials

The manifest creation depends on having the bot registered and knowing its endpoints and App ID

Registering in Power Virtual Agents requires both the manifest and the Azure AD registration information

Why other options are incorrect:

Register the homework bot in Azure Active Directory: The homework bot (Power Virtual Agents) already has its own identity; this step is not required for skill integration

Create a manifest for the homework bot: Manifests are for skill bots being exposed, not for the consuming bot

Register the homework bot in Power Virtual Agents: The homework bot is already the Power Virtual Agents bot; this step is meaningless

Reference:

Use skill bots in Power Virtual Agents, Register a bot as a skill, Create a skill manifest - Microsoft Learn

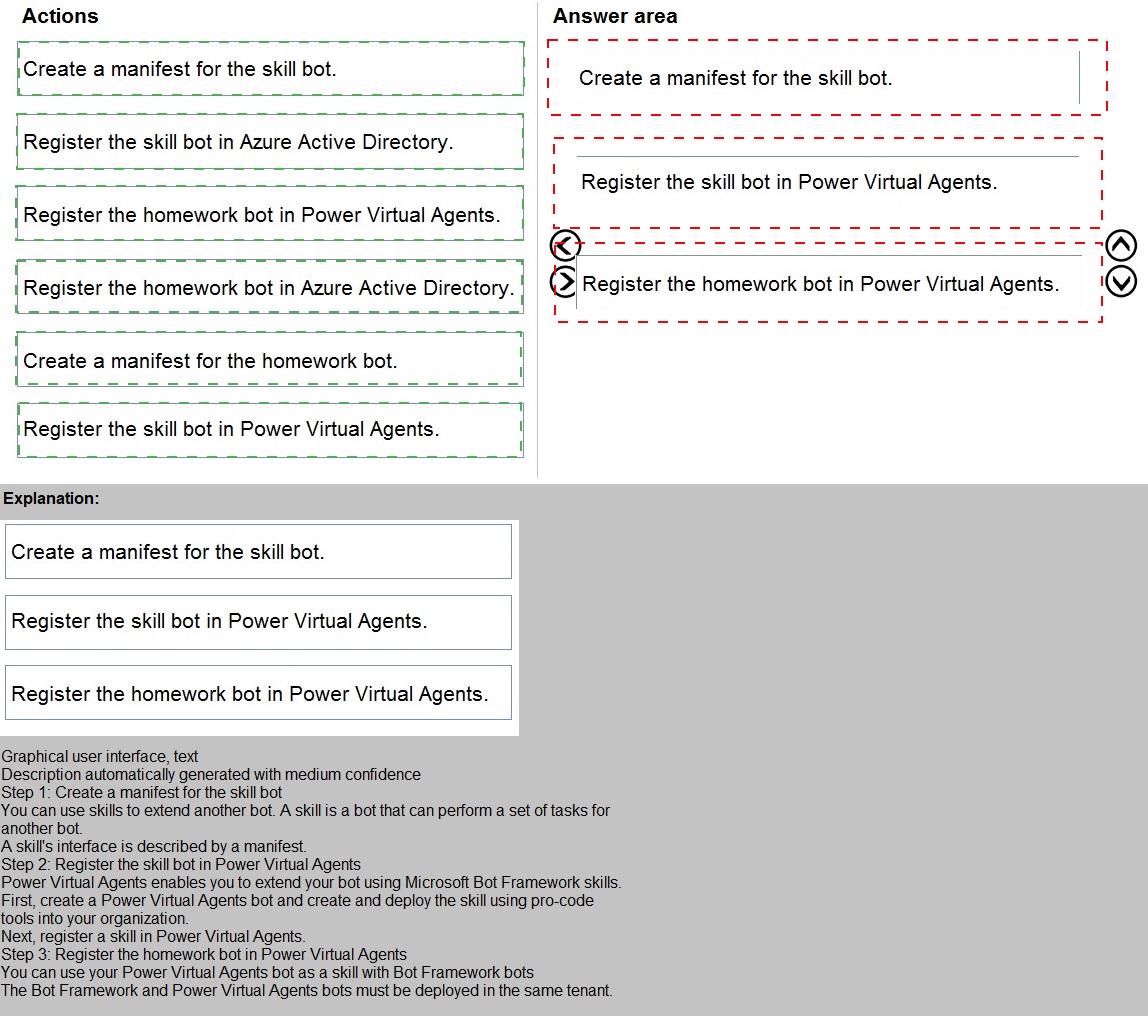

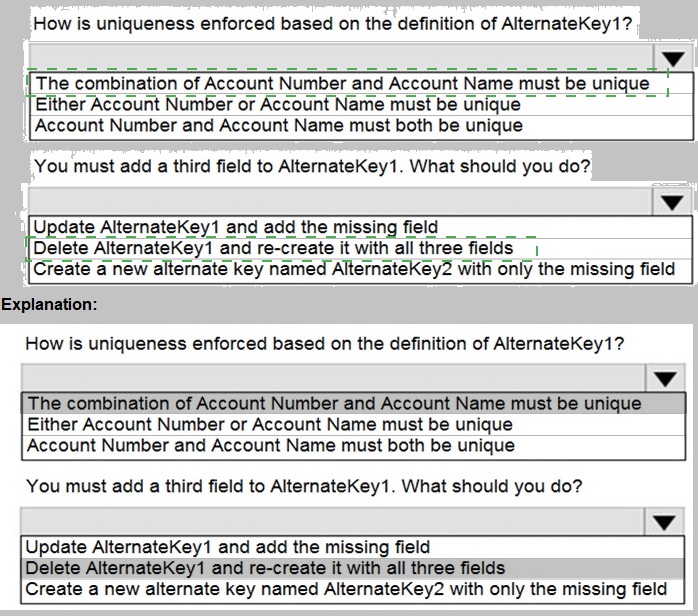

You create an alternate key named AlternateKey1 on the Account entity. The definition for AlternateKey1 is shown in the following exhibit:

Explanation:

This question tests your understanding of alternate keys in Dataverse. Alternate keys provide a way to uniquely identify records using natural keys instead of the system GUID. The exhibit shows that AlternateKey1 is defined with two fields, and you need to understand how uniqueness is enforced with multiple fields, as well as how to modify an existing alternate key when additional fields need to be included.

Answers:

How is uniqueness enforced based on the definition of AlternateKey1?

The combination of Account Number and Account Name must be unique

You must add a third field to AlternateKey1. What should you do?

Delete AlternateKey1 and re-create it with all three fields

Explanation of Correct Options:

For question 1: The combination of Account Number and Account Name must be unique

When an alternate key contains multiple fields, uniqueness is enforced on the combination of values across all fields in the key. This means that no two records can have the same values for both Account Number AND Account Name simultaneously. However, individual records could have duplicate Account Numbers if their Account Names differ, and vice versa. This composite key approach allows for natural business rules where uniqueness requires multiple identifying attributes.

For question 2: Delete AlternateKey1 and re-create it with all three fields

Once an alternate key is created and activated in Dataverse, it cannot be modified directly. This is because the system generates database indexes and enforces uniqueness constraints based on the key definition. To change the fields included in an alternate key, you must delete the existing key and create a new one with the desired fields. Attempting to update the existing key is not supported by the platform.

Why other options are incorrect for question 1:

Either Account Number or Account Name must be unique: This describes an OR condition, which is not how composite keys work. The system does not require either field to be individually unique.

Account Number and Account Name must both be unique: This describes two separate unique constraints on individual fields, which is different from a composite key enforcing uniqueness on the combination.

Why other options are incorrect for question 2:

Update AlternateKey1 and add the missing field: Alternate keys cannot be modified after creation; they must be deleted and recreated

Create a new alternate key named AlternateKey2 with only the missing field: This would create a separate key on just the third field, not a composite key combining all three fields as required
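
The composite-key behavior described for question 1 can be illustrated with a small simulation. This is a sketch in plain Python, not Dataverse code; the `AccountTable` class and its sample values are hypothetical.

```python
from typing import Dict, Tuple


class AccountTable:
    """Toy table that enforces one composite key, like a two-column alternate key."""

    def __init__(self) -> None:
        self._by_key: Dict[Tuple[str, str], dict] = {}

    def insert(self, account_number: str, account_name: str) -> None:
        key = (account_number, account_name)  # the combination is the key
        if key in self._by_key:
            raise ValueError(f"duplicate key {key}")
        self._by_key[key] = {"accountnumber": account_number, "name": account_name}

    def __len__(self) -> int:
        return len(self._by_key)


if __name__ == "__main__":
    table = AccountTable()
    table.insert("A-100", "Contoso")
    table.insert("A-100", "Fabrikam")  # same number, different name: allowed
    table.insert("A-200", "Contoso")   # same name, different number: allowed
    try:
        table.insert("A-100", "Contoso")  # same pair: rejected
    except ValueError as err:
        print("rejected:", err)
```

Only the fourth insert fails, because it repeats both values of an existing row.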

Reference:

Define alternate keys to reference records, Manage alternate keys - Microsoft Learn

A manufacturing company uses a Common Data Service (CDS) environment to manage their parts inventory across two warehouses modeled as business units and named WH1 and WH2.

Data from the two warehouses is processed separately for each part that has its inventory quantities updated.

The company must automate this process, pushing inventory updates from orders submitted to the warehouses.

You need to build the automation using Power Automate flows against the CDS database.

You must achieve this goal by using the least amount of administrative effort.

Which flow or flows should you recommend?

A. Two automated flows with scope Business Unit, with triggers on Create/Update/Delete on orders.

B. Two automated flows with scope Business Unit, with triggers on Create/Update/Delete and each flow filtering updates from each business unit.

C. Two scheduled flows, each querying and updating the parts included in orders from each business unit.

D. One scheduled flow, querying the parts included in orders in both business units.

E. One automated flow, querying the orders in both business units.

F. Two scheduled flows, each querying the orders from each business unit.

G. Two automated flows with scope Organization, with triggers on Create/Update/Delete and filters on WH1 and WH2.

H. Two automated flows with scope Business Unit, with triggers on Create/Update/Delete on orders and filters on WH1 and WH2.

Explanation:

This question assesses your ability to design efficient Power Automate solutions in Dataverse with consideration for security boundaries (business units) and minimal administrative effort. The scenario involves two warehouses modeled as business units with separate data processing requirements. The key is to trigger automation when orders are created or updated, but only process updates relevant to each specific warehouse.

Correct Option:

H. Two automated flows with scope Business Unit, with triggers on Create/Update/Delete on orders and filters on WH1 and WH2.

This solution meets all requirements with the least administrative effort because:

Automated flows trigger instantly when orders are created/updated, eliminating the latency of scheduled flows

Business Unit scope limits each flow's trigger to rows owned by users in the same business unit as the flow owner, which keeps each warehouse's data separate

Filters on WH1 and WH2 ensure each flow only processes orders belonging to its respective warehouse

Two flows (one per warehouse) keep the processing logic separate and maintainable

Why other options are incorrect:

A. Two automated flows with scope Business Unit, with triggers on Create/Update/Delete on orders:

Missing the crucial filters on WH1 and WH2, so each flow would trigger for all orders regardless of warehouse, causing duplicate processing and potential security issues.

B. Two automated flows with scope Business Unit, with triggers on Create/Update/Delete and each flow filtering updates from each business unit:

This is essentially option H but vaguely worded; H is the precise answer with proper terminology.

C. Two scheduled flows, each querying and updating the parts included in orders from each business unit:

Scheduled flows introduce latency and inefficiency; they query periodically rather than responding immediately to changes.

D. One scheduled flow, querying the parts included in orders in both business units:

Single flow violates business unit isolation, runs on schedule (not real-time), and would need complex logic to separate warehouse processing.

E. One automated flow, querying the orders in both business units:

Single automated flow with Organization scope would have access to all orders but would require complex conditional logic and might violate business unit security boundaries.

F. Two scheduled flows, each querying the orders from each business unit:

Scheduled flows introduce latency and are less efficient than event-driven automated flows.

G. Two automated flows with scope Organization, with triggers on Create/Update/Delete and filters on WH1 and WH2:

Organization scope gives flows access to data across all business units, which violates the principle of least privilege and could create security concerns.
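
The effect of pairing one flow with one warehouse filter can be sketched in plain Python. This is only a simulation of the routing idea, not Power Automate itself; `make_flow`, `dispatch`, and the `business_unit` and `part` fields are hypothetical names.

```python
from typing import Callable, Dict, List

Order = Dict[str, str]


def make_flow(warehouse: str, log: List[str]) -> Callable[[Order], None]:
    """Build a stand-in for one automated flow filtered to a single warehouse."""

    def on_order_change(order: Order) -> None:
        # Trigger filter: ignore events that belong to the other warehouse.
        if order["business_unit"] != warehouse:
            return
        log.append(f"{warehouse}: update inventory for {order['part']}")

    return on_order_change


def dispatch(order: Order, flows: List[Callable[[Order], None]]) -> None:
    """Offer the event to every flow; each flow's filter decides whether it runs."""
    for flow in flows:
        flow(order)


if __name__ == "__main__":
    log: List[str] = []
    flows = [make_flow("WH1", log), make_flow("WH2", log)]
    dispatch({"business_unit": "WH1", "part": "chain"}, flows)
    dispatch({"business_unit": "WH2", "part": "saddle"}, flows)
    print(log)
```

Each order is processed exactly once, by the flow whose filter matches its warehouse.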

Reference:

Business units in Dataverse, Scope of flows, Use triggers and actions in Power Automate - Microsoft Learn

You create a model-driven app. You run Solution checker. The tool displays the following

error:

Solution checker fails to export solutions with model-driven app components.

You need to resolve the issue.

What should you do?

A. Manually export the solution before running Solution checker

B. Assign the Environment Maker security role to the Power Apps Checker application user

C. Assign the System Administrator security role to your user ID

D. Disable the Power Apps Checker application user

E. Assign the Environment Maker security role to your user ID

Explanation:

This question tests your understanding of Solution Checker permissions and application users in Power Platform. Solution Checker is a tool that analyzes solutions against best practices. The error indicates a failure when exporting solutions with model-driven app components. This typically relates to permission issues with the Power Apps Checker application user, which performs the analysis.

Correct Option:

B. Assign the Environment Maker security role to the Power Apps Checker application user

The Power Apps Checker application user is a special system application principal that needs appropriate permissions to access and analyze solution components. When Solution Checker runs, it operates as this application user, not as your user account. Assigning the Environment Maker security role to this application user grants it sufficient privileges to export and analyze solutions containing model-driven app components.

Why this solution works:

The Power Apps Checker application user must have read access to solution components

Environment Maker role provides the necessary permissions to access and export solutions

This is a common resolution for Solution Checker permission-related errors

The application user exists in the environment specifically for the checker service

Why other options are incorrect:

A. Manually export the solution before running Solution checker:

Solution Checker needs to export the solution automatically during analysis. Manual export bypasses the checker's integration and defeats the purpose of automated analysis. This would not resolve the underlying permission issue.

C. Assign the System Administrator security role to your user ID:

Your user permissions are not the issue because Solution Checker runs as the application user, not as you. Elevating your privileges does not affect the application user's permissions.

D. Disable the Power Apps Checker application user:

Disabling the application user would completely break Solution Checker functionality, as there would be no identity under which the checker service can run and access resources.

E. Assign the Environment Maker security role to your user ID:

Similar to option C, this addresses your user permissions rather than the application user that actually performs the solution analysis. The checker service runs independently of your user context.

Reference:

Use Solution Checker to validate your solutions, Solution Checker application user - Microsoft Learn

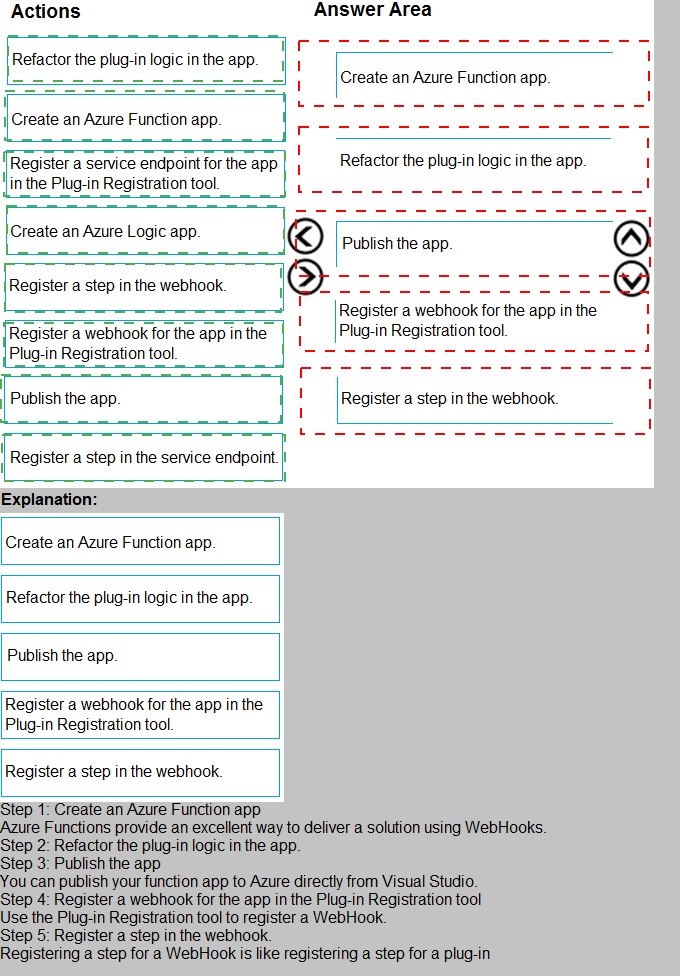

An organization uses a plug-in to retrieve specific information from legacy data stores each

time a new order is submitted.

You review the Common Data Service analytics page. The average plug-in execution time

is increasing.

You need to replace the plug-in with another component, reusing as much of the current

plug-in code as possible.

Which five actions should you perform in sequence? To answer, move the appropriate

actions from the list of actions to the answer area and arrange them in the correct order.

Explanation:

This question tests your knowledge of migrating long-running plug-in logic to more suitable Azure-based integrations. Rising plug-in execution time hurts Dataverse performance because synchronous plug-ins run inside the platform's transaction and block the operation until they finish. The scenario requires replacing the plug-in with an alternative component while reusing existing code. Azure Functions fit because they can host the existing C# logic and run outside Dataverse.

Correct Sequence:

Create an Azure Function app.

Publish the app.

Register a webhook for the app in the Plug-in Registration tool.

Register a step in the webhook.

Refactor the plug-in logic in the app.

Explanation of Sequence:

Create an Azure Function app: First, you need to create the Azure Function that will host your refactored code. Azure Functions support C# and allow you to reuse most of your existing plug-in logic with minimal changes.

Publish the app: After developing the function, publish it to Azure so it becomes accessible via an HTTPS endpoint that Dataverse can call.

Register a webhook for the app in the Plug-in Registration tool: Webhooks are the recommended way to call external services from Dataverse. Registering a webhook creates a record in Dataverse that points to your Azure Function's endpoint.

Register a step in the webhook: This step configures when the webhook should be triggered (e.g., on Create of Order). This is analogous to registering a step for a plug-in.

Refactor the plug-in logic in the app: Finally, you migrate the existing plug-in code into the Azure Function. Notice that refactoring happens last because you need the function and webhook infrastructure in place first.

Why this order is correct:

The Azure Function must exist and be published before you can register it as a webhook

Webhook registration must precede step registration because the step references the webhook

Refactoring code comes after infrastructure is set up, though in practice development might be iterative

Why other options are incorrect:

Create an Azure Logic App: Logic Apps are no-code/low-code tools and would not allow reusing existing plug-in C# code

Register a service endpoint: Service endpoints are for Azure Service Bus, not for Azure Functions

Register a step in the service endpoint: This applies only if you used a service endpoint instead of a webhook

Any sequence placing code refactoring before infrastructure setup would be impractical
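
Once the step is registered on the webhook, the function receives the execution context as a JSON body. The sketch below shows how a handler might read it. It is written in Python for illustration only (the refactored plug-in logic itself would stay in C#), and it assumes the body is a serialized execution context in which `InputParameters` and `Attributes` are lists of key/value pairs. The function names, the `salesorder` entity, and the sample attribute are hypothetical.

```python
import json
from typing import Any, Dict, List


def pairs_to_dict(pairs: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Turn a list of {"key": ..., "value": ...} items into a plain dict."""
    return {item["key"]: item["value"] for item in pairs}


def handle_order_created(body: str) -> Dict[str, Any]:
    """Stand-in for the function's HTTP handler."""
    context = json.loads(body)
    if context.get("MessageName") != "Create":
        return {}  # the step is assumed to be registered for Create only

    target = pairs_to_dict(context["InputParameters"])["Target"]
    attributes = pairs_to_dict(target["Attributes"])

    # The logic moved out of the plug-in (the legacy data lookup) would run here.
    return {"entity": context["PrimaryEntityName"], "attributes": attributes}


if __name__ == "__main__":
    sample = json.dumps({
        "MessageName": "Create",
        "PrimaryEntityName": "salesorder",
        "InputParameters": [
            {"key": "Target", "value": {
                "LogicalName": "salesorder",
                "Attributes": [{"key": "name", "value": "Order 42"}],
            }},
        ],
    })
    print(handle_order_created(sample))
```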

Reference:

Use webhooks with Dataverse, Azure Functions integration, Register a webhook - Microsoft Learn

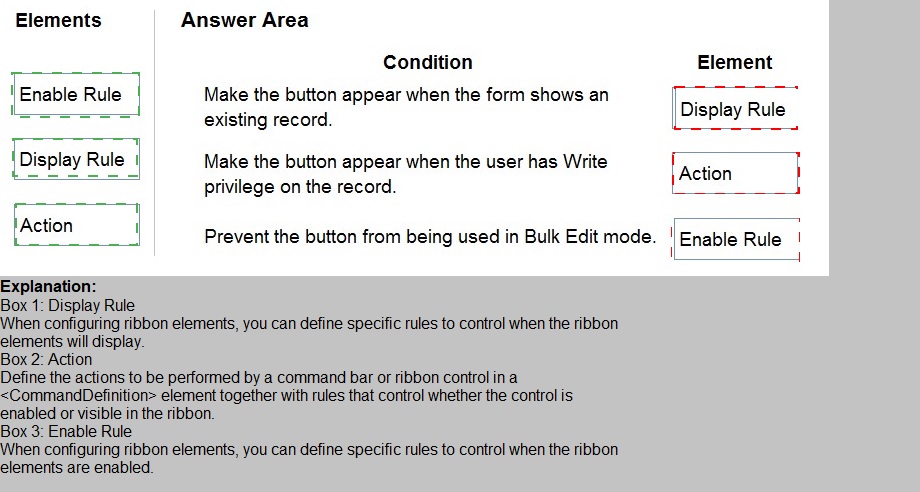

A company has a model-driven app.

A form that validates the date entered requires a custom button. The button must be

available only under certain conditions.

You need to define the CommandDefinition in the RibbonDiffXML to meet the conditions for

the button.

Which elements should you use? To answer, drag the appropriate elements to the correct

conditions. Each element may be used once, more than once, or not at all. You may need

to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

Explanation:

This question tests your knowledge of ribbon customization in model-driven apps using RibbonDiffXML. The CommandDefinition element contains rules that control when and how a button appears or behaves. Three types of rules are commonly used: Enable Rules (control whether the button is enabled/disabled), Display Rules (control whether the button is visible/hidden), and Actions (define what happens when clicked). The conditions described relate to visibility and enablement states.

Explanation of Correct Options:

Display Rule for "Make the button appear when the form shows an existing record":

Display rules control the visibility of a button on the ribbon. The condition "when the form shows an existing record" determines whether the button should be visible at all. This is a visibility condition, not an enablement condition. If the form is showing a new record, the button should be hidden entirely using a Display Rule with a FormState rule checking for "Existing."

Enable Rule for "Make the button appear when the user has Write privilege on the record":

Despite the wording "appear," this condition depends on the user's privilege on the specific record, which is evaluated by an Enable Rule. Enable rules determine whether the button can be clicked. If the user lacks Write privilege, the button is disabled. This is implemented with an Enable Rule containing a RecordPrivilegeRule that checks for the Write privilege.

Enable Rule for "Prevent the button from being used in Bulk Edit mode":

This condition explicitly controls whether the button can be used (clicked) in Bulk Edit mode. The button should be disabled when the form is in Bulk Edit mode, which is implemented using an Enable Rule with a FormStateRule that tests for the BulkEdit state and inverts the result. This ensures the button is non-functional in that mode.

Why other elements are not used:

Action is not used for any of these conditions because actions define what happens when the button is clicked, not when or how the button appears or behaves. Actions contain command code (JavaScript) that executes upon button click.
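
The split between display rules and enable rules can be summarized with a small simulation. This is a simplified model in Python, not ribbon XML; `evaluate_button` and its three boolean inputs are hypothetical and treat each condition from the question as independent.

```python
from dataclasses import dataclass


@dataclass
class ButtonState:
    visible: bool
    enabled: bool


def evaluate_button(
    is_existing_record: bool,
    has_write_privilege: bool,
    in_bulk_edit: bool,
) -> ButtonState:
    """Apply one display rule and two enable rules to a single button."""
    # Display rule: show the button only when the form shows an existing record.
    visible = is_existing_record

    # Enable rules: every one must pass for the button to be clickable.
    enable_rules_pass = has_write_privilege and not in_bulk_edit

    # A hidden button can never be clicked, so enablement also requires visibility.
    return ButtonState(visible=visible, enabled=visible and enable_rules_pass)


if __name__ == "__main__":
    print(evaluate_button(True, True, False))
    print(evaluate_button(True, False, False))
    print(evaluate_button(False, True, False))
```

The action plays no part in this evaluation: it runs only after the rules have allowed the click.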

Reference:

Define ribbon commands, Command elements and rules, EnableRule and DisplayRule - Microsoft Learn

You plan to create a canvas app to manage large sets of records. Users will filter and sort

the data.

You must implement delegation in the canvas app to mitigate potential performance issues.

You need to recommend data sources for the app.

Which two data sources should you recommend? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

A. SQL Server

B. Common Data Service

C. Azure Data Factory

D. Azure Table Storage

Explanation:

This question tests your understanding of delegation in Power Apps canvas apps. Delegation is the process where expression processing is offloaded to the data source rather than performed locally on the device. This is critical when working with large datasets because without delegation, Power Apps retrieves only the first 500 records by default (configurable up to 2,000) and performs filtering and sorting locally, causing performance issues and incomplete results.

Correct Options:

A. SQL Server:

SQL Server fully supports delegation for key functions like Filter, Sort, and LookUp. When connecting to SQL Server, Power Apps translates canvas app expressions into T-SQL queries that execute on the server, returning only the relevant results to the app. This ensures optimal performance even with millions of records. SQL Server supports delegation for most common data operations including string functions, arithmetic, and date comparisons.

B. Common Data Service (Dataverse):

Dataverse is the primary recommended data source for enterprise canvas apps with delegation support. It provides comprehensive delegation capabilities for Filter, Sort, and many other functions. Dataverse processes queries server-side, returning only the necessary records to the client. It supports delegation for most operators including contains, starts with, and comparison operations on supported data types.

Why these options are correct:

Both provide server-side query processing capabilities essential for delegation

Both maintain performance when working with large datasets

Both support the filtering and sorting operations mentioned in the requirements

Both are enterprise-grade data sources designed for scale

Incorrect Options:

C. Azure Data Factory:

Azure Data Factory is an ETL and data integration service, not a real-time data source for canvas apps. It cannot serve as a direct data source for delegation because it's designed for moving and transforming data between systems, not for powering interactive applications.

D. Azure Table Storage:

While Azure Table Storage can store large amounts of data, it has limited delegation support in Power Apps. It does not support many common filtering operations (like contains, starts with) and has restrictions on sorting and pagination that make it unsuitable for the scenario described.
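
The difference delegation makes to results can be shown with a small simulation. This is plain Python, not Power Apps: the two query functions are hypothetical stand-ins, and the 500-row limit is the default described in the explanation above.

```python
from typing import Callable, Dict, List

Row = Dict[str, object]
ROW_LIMIT = 500  # default number of rows fetched when a query is not delegated


def query_delegated(rows: List[Row], predicate: Callable[[Row], bool]) -> List[Row]:
    """The data source applies the filter and returns only the matching rows."""
    return [row for row in rows if predicate(row)]


def query_non_delegated(
    rows: List[Row], predicate: Callable[[Row], bool], limit: int = ROW_LIMIT
) -> List[Row]:
    """The app pulls at most `limit` rows, then filters that local copy."""
    local_copy = rows[:limit]
    return [row for row in local_copy if predicate(row)]


def make_rows(count: int) -> List[Row]:
    """Build sample rows in which every 1,000th row is flagged."""
    return [{"id": i, "flagged": i % 1000 == 0} for i in range(count)]


if __name__ == "__main__":
    data = make_rows(10_000)
    is_flagged = lambda row: bool(row["flagged"])
    print(len(query_delegated(data, is_flagged)))
    print(len(query_non_delegated(data, is_flagged)))
```

With 10,000 sample rows the delegated query finds all 10 flagged rows, while the non-delegated query finds only the one that falls within the first 500 rows.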

Reference:

Delegation overview in Power Apps, Delegation for supported data sources, SQL Server delegation, Common Data Service delegation - Microsoft Learn

As part of the month-end financial closing process, a company uses a batch job to copy all

orders into a staging database.

The staging database is used to calculate any outstanding amounts owed by clients, and

must process all historical data.

You need to ensure that only the data affected during the month is included in the

integration process.

What are two possible ways to achieve this goal? Each correct answer presents a

complete solution.

NOTE: Each correct selection is worth one point.

A. Use change tracking on the orders and run the integration to retrieve new orders and the orders that have the total amount changed in the last month.

B. Create a system view with the orders that have the Modified On field in the last month and run the integration on this subset.

C. Use change tracking on the order lines and run the integration every week and retrieve only the order lines that have been created or deleted in the last month.

D. Create a system view with the order lines that have the Modified On field in the last month and run the integration on this subset.

Explanation:

This question assesses your ability to design efficient data integration strategies in Dataverse for month-end financial processes. A batch job copies orders into a staging database that holds all historical data, but the integration itself must include only the data affected during the month. You need to identify two approaches that each restrict the integration to records created or modified in the last month, minimizing processing time and resource usage.

Correct Options:

C. Use change tracking on the order lines and run the integration every week and retrieve only the order lines that have been created or deleted in the last month.

Change tracking is a native Dataverse feature that efficiently identifies records that have been created, updated, or deleted since a previous synchronization. By enabling change tracking on order lines and running weekly integrations, you can retrieve only the incremental changes from the last month. This approach is efficient because it only returns modified records rather than scanning entire tables, and it properly handles deletions, which views cannot detect.
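As a rough sketch of how an integration might consume change tracking through the Dataverse Web API, the function below describes the two kinds of request involved: an initial request carrying the `Prefer: odata.track-changes` header, and later requests that reuse the delta link returned by the previous response. The environment URL, the entity set name, and the selected columns are assumptions for illustration, and the function only builds a request description; it does not call any service.

```python
from typing import Optional

# Hypothetical environment URL and entity set name; substitute your own.
BASE_URL = "https://example.crm.dynamics.com/api/data/v9.2"
ENTITY_SET = "salesorderdetails"


def build_change_tracking_request(delta_link: Optional[str] = None) -> dict:
    """Describe the GET request for an initial or an incremental sync."""
    if delta_link is None:
        # First sync: ask the server to start tracking changes for this query.
        return {
            "method": "GET",
            "url": f"{BASE_URL}/{ENTITY_SET}?$select=quantity,priceperunit",
            "headers": {"Prefer": "odata.track-changes"},
        }
    # Later syncs: the delta link saved from the previous response returns
    # only the rows that changed since that response was issued.
    return {"method": "GET", "url": delta_link, "headers": {}}


first = build_change_tracking_request()
print(first["headers"])
```

The integration would store the `@odata.deltaLink` value from each response and pass it to the next call, so each run retrieves only the changes since the previous one.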

D. Create a system view with the order lines that have the Modified On field in the last month and run the integration on this subset.

System views provide a filtered subset of data based on specified criteria. Creating a view that filters order lines where Modified On falls within the last month gives you a dynamic subset that automatically updates as records are modified. This approach is simple to implement and works well when you need to process records based on date ranges, though it cannot detect deleted records like change tracking can.
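The view itself would be defined in Dataverse, but the same "Modified On in the last month" criterion can be sketched as an OData `$filter` string that an integration might send. The function names and the use of UTC month boundaries are assumptions made for this example.

```python
from datetime import date, timedelta
from typing import Tuple


def last_month_range(today: date) -> Tuple[date, date]:
    """Return (first day of previous month, first day of current month)."""
    first_of_this_month = today.replace(day=1)
    last_of_prev_month = first_of_this_month - timedelta(days=1)
    return last_of_prev_month.replace(day=1), first_of_this_month


def modified_last_month_filter(today: date) -> str:
    """Build an OData filter selecting rows modified during the previous month."""
    start, end = last_month_range(today)
    return (
        f"modifiedon ge {start.isoformat()}T00:00:00Z "
        f"and modifiedon lt {end.isoformat()}T00:00:00Z"
    )


print(modified_last_month_filter(date(2024, 3, 15)))
# prints: modifiedon ge 2024-02-01T00:00:00Z and modifiedon lt 2024-03-01T00:00:00Z
```

Using a half-open range (`ge` start, `lt` end) avoids having to work out the last day of the month, including in leap years.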

Why these options are correct:

Both options filter data to include only records affected in the last month

Both work with order lines, which is appropriate because outstanding amounts are calculated from order line details

Both provide mechanisms (change tracking or date-filtered views) to identify relevant records

Incorrect Options:

A. Use change tracking on the orders and run the integration to retrieve new orders and the orders that have the total amount changed in the last month:

Change tracking on orders would only detect changes at the order header level. If an order line changes (quantity, price, etc.), the order header's Modified On field might update, but you would need to retrieve all order lines for that order to recalculate amounts, potentially pulling unnecessary data. Additionally, change tracking detects all changes, not specifically total amount changes.

B. Create a system view with the orders that have the Modified On field in the last month and run the integration on this subset:

Similar to option A, this operates at the order header level. If an order line changes, the order header's Modified On updates, but you would need to retrieve all associated order lines to recalculate correctly, potentially including many unchanged line items. This is less efficient than working directly with order lines.

Reference:

Change tracking for tables, Create and edit views, Manage data synchronization - Microsoft Learn

A company uses Common Data Service rollup fields to calculate insurance exposure and risk profiles for customers.

Users report that the system does not update values for the rollup fields when new insurance policies are written.

You need to recalculate the value of the rollup fields immediately after a policy is created.

What should you do?

A. Create a plug-in that uses the update method for the rollup field. Configure a step on the Create event for the policy entity for this plug-in.

B. Update the Mass Calculate Rollup Field job to trigger when a new policy record is created.

C. Change the frequency of the Calculate Rollup Field recurring job from every hour to every five minutes.

D. Create new fields on the customer entity for insurance exposure and risk. Write a plug-in that is triggered whenever a new policy record is created.

Explanation:

This question tests your understanding of how rollup fields work in Dataverse. Rollup fields are calculated values that aggregate data from related records, but they do not update instantly by default. Instead, they are processed by the Calculate Rollup Field recurring system job, which runs asynchronously on a schedule. The users' report that values do not update right after a policy is created indicates that the default schedule is too slow.

Correct Option:

C. Change the frequency of the Calculate Rollup Field recurring job from every hour to every five minutes.

The Calculate Rollup Field job is a system job that processes all rollup field calculations in the environment. By default, it runs hourly. Changing its frequency to every five minutes shortens the delay between policy creation and the rollup field update. This is the simplest solution when near-immediate updates are needed, because it relies on the existing rollup infrastructure and requires no custom development.
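The effect of the job interval on how long a new policy waits can be sketched with simple arithmetic. This is a simplified model, not a description of the actual scheduler: it assumes runs fire at fixed multiples of the interval, takes the five-minute setting from the answer above at face value, and uses a hypothetical function name.

```python
def minutes_until_next_run(created_at_minute: int, interval_minutes: int) -> int:
    """Minutes a new record waits for the next scheduled recalculation.

    Assumes runs fire at minute 0, interval, 2 * interval, and so on, and
    that a record created exactly at a run waits for the following run.
    """
    return interval_minutes - (created_at_minute % interval_minutes)


# A policy created one minute after a run has just started.
print(minutes_until_next_run(61, 60))  # prints 59
print(minutes_until_next_run(61, 5))   # prints 4
```

Under this model the worst-case wait equals the interval itself, so a shorter interval reduces the delay but does not eliminate it.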

Why this solution works:

Rollup fields depend on the system job for recalculation

Changing job frequency affects all rollup fields, not just insurance-related ones

No custom code or complex configuration required

Five-minute intervals provide near-real-time updates for most business scenarios

Why other options are incorrect:

A. Create a plug-in that uses the update method for the rollup field:

Rollup fields are calculated by the system and cannot be directly updated via plug-ins. Attempting to update a rollup field programmatically will fail because they are read-only calculated values. This approach misunderstands how rollup fields work.

B. Update the Mass Calculate Rollup Field job to trigger when a new policy record is created:

The Mass Calculate Rollup Field job is for manually recalculating rollup fields for specific records, not for continuous automatic updates. It cannot be configured to trigger on record creation events. This job is typically used for one-time bulk recalculations.

D. Create new fields on the customer entity for insurance exposure and risk:

This abandons the rollup field approach entirely. While a plug-in could calculate values and store them in standard fields, this solution requires custom development and duplicates functionality that rollup fields already provide. It also loses the benefits of rollup fields, such as automatic maintenance and query capabilities.

Reference:

Define rollup fields, Rollup fields FAQ, System jobs for rollup fields - Microsoft Learn
