Topic 5, Misc. Questions

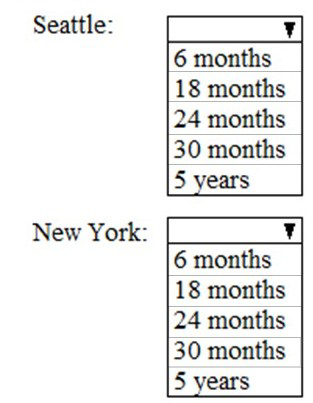

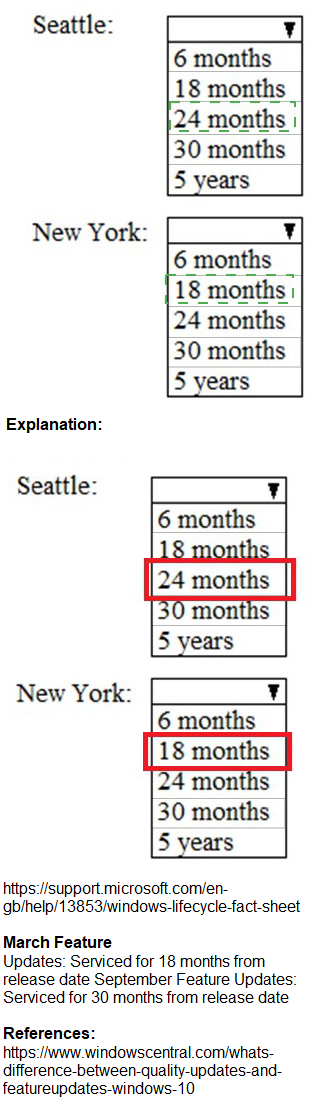

As of March, how long will the computers in each office remain supported by Microsoft? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

This MS-102 hotspot question is based on a Contoso case study with offices in Seattle and New York. All computers run Windows 10 Enterprise. The planned changes state that every September the latest feature updates are applied to all Windows computers, and every March the latest feature updates are applied to the New York computers only. The question asks how long each office's computers remain supported as of March, that is, after New York's March update and before the next September update. Under the Windows 10 semi-annual servicing model, Enterprise edition feature updates released in the second half of the year are serviced for 30 months from release, and those released in the first half for 18 months.

Correct Option:

Seattle: 30 months

Seattle computers receive feature updates only in September, so in March they are still running the previous September's (second-half) release. Windows 10 Enterprise second-half releases are serviced for 30 months, counted from the release date rather than from the date of the question. The listed answer reflects that 30-month window; measured from March, roughly six months of it have already elapsed.

New York: 18 months

New York applies the latest feature update every March in addition to September, so as of March its computers have just moved to the spring (first-half) release. Under the Windows 10 semi-annual servicing model, first-half releases are serviced for 18 months from release on Enterprise and Education editions, compared with 30 months for second-half releases. New York's newer version therefore carries the shorter 18-month window.

Incorrect Option:

6 months / 5 years / 24 months: None of these is a Windows 10 Enterprise servicing window. Under the semi-annual model, each feature update is serviced for 18 months (first-half release) or 30 months (second-half release). Five years is closer to an LTSC lifecycle, and 6 months is the interval between feature updates, not a support period. The question tests how different update schedules lead to different support durations.

Other combinations, such as the same value for both offices: Incorrect because the offices have different update schedules, so their support periods differ depending on which feature update each office last received.
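Because servicing is counted from a release date, the length of the window and the time left at a later date are separate quantities. The following is a minimal sketch of that arithmetic, assuming 18 months for first-half releases and 30 months for second-half releases and, as a simplification, treating the month an update is applied as its release month. The names `SERVICING_MONTHS`, `FeatureUpdate`, and `months_remaining` are illustrative and not part of any Microsoft tool.

```python
from dataclasses import dataclass

# Assumption: Enterprise/Education servicing windows under the
# semi-annual model (first-half release: 18 months, second-half: 30).
SERVICING_MONTHS = {"H1": 18, "H2": 30}


@dataclass(frozen=True)
class FeatureUpdate:
    half: str           # "H1" (spring) or "H2" (autumn)
    release_month: int  # months counted from an arbitrary origin


def months_remaining(update: FeatureUpdate, as_of_month: int) -> int:
    """Months of servicing left at as_of_month (never below zero)."""
    elapsed = as_of_month - update.release_month
    if elapsed < 0:
        raise ValueError("update has not been released yet")
    return max(SERVICING_MONTHS[update.half] - elapsed, 0)


# Timeline in months: September = 0, the following March = 6.
seattle = FeatureUpdate(half="H2", release_month=0)   # updated in September
new_york = FeatureUpdate(half="H1", release_month=6)  # updated in March

for office, update in (("Seattle", seattle), ("New York", new_york)):
    window = SERVICING_MONTHS[update.half]
    left = months_remaining(update, as_of_month=6)
    print(f"{office}: window {window} months, {left} months left in March")
```

Under these assumptions the Seattle release has a 30-month window with 24 months left in March, while the New York release has an 18-month window with all 18 months left.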

Reference:

MS-102 exam case study discussions (Contoso with Seattle/New York update cadence) commonly reference ExamTopics, Cert Empire, and Microsoft Learn MS-102 path on Windows client management in Microsoft 365 environments.

On which server should you install the Defender for Identity sensor?

A. Server1

B. Server2

C. Server3

D. Server4

E. Server5

Explanation:

The Defender for Identity sensor is installed on Active Directory Domain Controllers (DCs) and Active Directory Federation Services (AD FS) servers. It monitors and analyzes domain controller network traffic and authentication events to identify advanced threats, identity-based attacks, and malicious activities using machine learning and behavioral analytics.

Correct Option:

A. Server1:

This server is the correct choice if and only if it is identified in the question scenario as a Domain Controller or an AD FS server. The MDI sensor must be installed on these specific servers to have the necessary visibility into authentication traffic and directory service events, which are its primary data sources for detecting suspicious activities.

Incorrect Options:

B. Server2, C. Server3, D. Server4, E. Server5:

These servers would be incorrect if they are not Domain Controllers or AD FS servers. Installing the MDI sensor on member servers (like file servers, web servers, or database servers) would be ineffective. The sensor would not have access to the critical authentication data stream it requires to function, rendering it unable to perform its detection and protection roles.
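The selection rule described here can be sketched as a simple role filter. This is an illustration only: the inventory below is hypothetical (the real roles of Server1 through Server5 come from the case study exhibit), and `SENSOR_ROLES` and `sensor_targets` are made-up names.

```python
# Roles that can host the sensor, per the explanation above.
SENSOR_ROLES = {"domain controller", "ad fs"}


def sensor_targets(inventory):
    """Return, sorted by name, the servers that hold a sensor-eligible role."""
    return sorted(
        name
        for name, roles in inventory.items()
        if SENSOR_ROLES & {role.lower() for role in roles}
    )


# Hypothetical inventory, not taken from the exam exhibit.
inventory = {
    "ServerA": ["Domain controller"],
    "ServerB": ["File server"],
    "ServerC": ["Web server", "AD FS"],
}

print(sensor_targets(inventory))  # ['ServerA', 'ServerC']
```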

Reference:

Microsoft Learn, "Defender for Identity architecture": The documentation states that the Defender for Identity sensor is deployed directly on your Active Directory Domain Controllers and/or AD FS servers.

You need to meet the technical requirement for large-volume document retrieval. What should you create?

A. a data loss prevention (DLP) policy from the Security & Compliance admin center

B. an alert policy from the Security & Compliance admin center

C. a file policy from Microsoft Cloud App Security

D. an activity policy from Microsoft Cloud App Security

Explanation:

This requirement involves programmatically searching for and exporting a large number of documents that match specific criteria (e.g., for an eDiscovery investigation or compliance audit). This is a core function of Content Search in the Microsoft Purview compliance portal. You need a method to automate and manage such large-scale export jobs, which is done by creating and running a specialized search and export process.

Correct Option:

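D. an activity policy from Microsoft Cloud App Security:

This is the listed answer, and it fits if the case study's requirement is to detect or alert on a user retrieving a large volume of documents. Activity policies in Microsoft Defender for Cloud Apps (formerly Microsoft Cloud App Security) monitor user activities across cloud apps and can trigger on repeated activity, such as many downloads by one user within a set time window. An activity policy does not itself search for or export content from SharePoint, OneDrive, or Exchange, so it does not fit a bulk search-and-export reading of the requirement.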
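Monitoring user activity for large-volume retrieval usually comes down to counting one user's events inside a time window. The sketch below shows that idea with a sliding window. It is not how Defender for Cloud Apps is implemented, and the function name, event shape, and thresholds are assumptions chosen for illustration.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def users_over_threshold(events, threshold, window):
    """Return users with at least `threshold` events inside any span of `window`.

    `events` is an iterable of (user, timestamp) pairs sorted by timestamp.
    """
    recent = defaultdict(deque)  # user -> timestamps still inside the window
    flagged = set()
    for user, ts in events:
        queue = recent[user]
        queue.append(ts)
        # Drop events that are older than the window relative to this one.
        while ts - queue[0] > window:
            queue.popleft()
        if len(queue) >= threshold:
            flagged.add(user)
    return flagged


start = datetime(2024, 1, 1, 9, 0)
events = sorted(
    [("alice", start + timedelta(minutes=5 * i)) for i in range(3)]
    + [("bob", start + timedelta(hours=2 * i)) for i in range(3)],
    key=lambda event: event[1],
)

# Hypothetical rule: three or more downloads within one hour.
print(users_over_threshold(events, threshold=3, window=timedelta(hours=1)))  # {'alice'}
```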

Incorrect Options:

A. a DLP policy:

Prevents data leakage by blocking or restricting actions, not for retrieving documents.

B. an alert policy:

Triggers notifications based on events but does not perform content retrieval or export.

C. a file policy:

In Defender for Cloud Apps, this governs actions like sharing or encrypting files in cloud apps, not for bulk export from M365.

If the requirement is read as bulk search and export rather than monitoring, none of the four options performs it. That task is handled by a Content Search and export job in the Microsoft Purview compliance portal, which locates and exports large volumes of content across Microsoft 365 workloads for legal and compliance needs.

Reference:

Microsoft Learn, "Search for content in Microsoft Purview" and "Export search results". These articles detail using Content Search for large-volume retrieval.

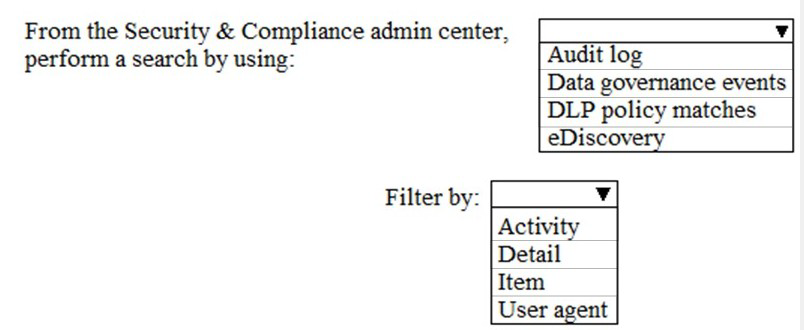

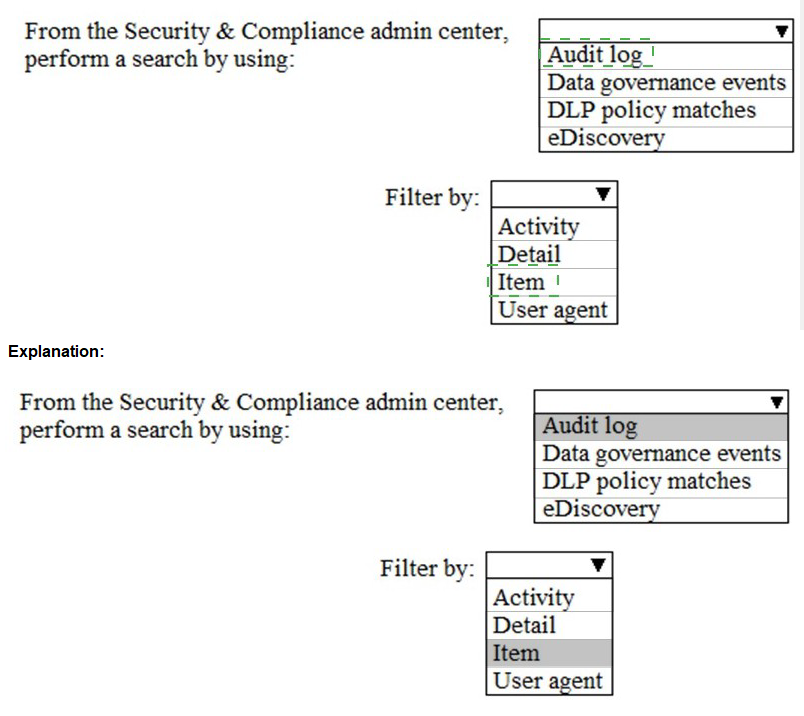

You need to meet the technical requirement for the SharePoint administrator. What should you do? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

Explanation:

The technical requirement for a SharePoint administrator typically involves investigating specific user activities or access events within SharePoint Online. To meet this, you need to search the unified audit log, which records user and admin activities across Microsoft 365 services. The correct selections define what to search for and how to filter the results to pinpoint the relevant events.

Correct Options:

Search by using: Audit log

The Audit log in the Security & Compliance admin center is the correct source. It logs all audited activities across SharePoint, Exchange, Azure AD, and other services. This is the primary tool for forensic investigation and compliance reporting, allowing the admin to search for events like "FileAccessed" or "SharingSet."

Filter by: Activity

Filtering by Activity (e.g., "FileDownloaded," "SiteCollectionCreated") is the most direct way for an admin to find logs related to a specific action or operation performed in SharePoint. This directly addresses a requirement to investigate "what happened."

Incorrect Options:

Search by using: Data governance events, DLP policy matches, eDiscovery

Data governance events relate to retention label activities. DLP policy matches show only where a DLP rule was triggered. eDiscovery is for legal hold and content search, not for auditing user actions. None are the general audit trail for admin investigations.

Filter by: Detail, Item, User agent

Detail and User agent are too granular for initial discovery. Item (like a specific file URL) can be useful but is often secondary to finding all instances of an Activity type first. The primary filter for identifying the type of event is Activity.
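Filtering by activity amounts to matching each audit record's operation name against the activities of interest. The sketch below applies that filter to a few made-up records. The field names (`UserId`, `Workload`, `Operation`, `ObjectId`) are assumed to resemble exported audit records, and the sample values are hypothetical.

```python
def filter_by_activity(records, activities):
    """Keep only the records whose Operation is one of the given activities."""
    wanted = {activity.lower() for activity in activities}
    return [record for record in records if record["Operation"].lower() in wanted]


# Hypothetical sample records, not real audit data.
records = [
    {"UserId": "user1@contoso.example", "Workload": "SharePoint",
     "Operation": "FileDownloaded", "ObjectId": "doc1.docx"},
    {"UserId": "user2@contoso.example", "Workload": "SharePoint",
     "Operation": "FileAccessed", "ObjectId": "doc2.docx"},
    {"UserId": "user1@contoso.example", "Workload": "Exchange",
     "Operation": "MailItemsAccessed", "ObjectId": "msg1"},
]

hits = filter_by_activity(records, ["FileDownloaded"])
print([record["ObjectId"] for record in hits])  # ['doc1.docx']
```

Filtering on the activity first narrows the result set to one kind of event; item or user filters can then be applied to what remains.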

Reference:

Microsoft Learn, "Search the audit log in the compliance portal." The guide specifies using the audit log search and filtering by activities to investigate user and admin events.

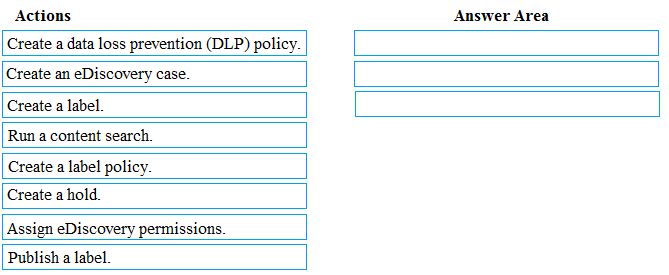

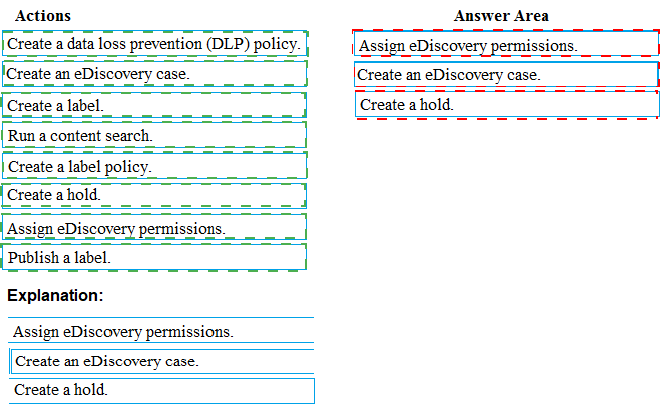

You need to meet the requirement for the legal department.

Which three actions should you perform in sequence from the Security & Compliance admin center? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Explanation:

This is a standard eDiscovery process to preserve and collect electronically stored information (ESI) for a legal matter. The sequence must follow the logical workflow: first, set up the legal framework and permissions; second, ensure relevant data is preserved from deletion; and third, search and collect the data for review.

Correct Options in Sequence:

Assign eDiscovery permissions.

The first step is to grant the necessary permissions (e.g., to a legal team member) to manage eDiscovery cases. This is done by adding users to the eDiscovery Manager role group in the Security & Compliance admin center before they can perform subsequent steps.

Create an eDiscovery case.

Next, create a new eDiscovery (Premium) case to act as the container for the entire legal investigation. This organizes all related holds, searches, and exports under a single matter for management and access control.

Create a hold.

Within the created case, place a hold on the relevant custodians' mailboxes, SharePoint sites, or OneDrive accounts. This is the critical step to meet the "preserve content" requirement, as it prevents the deletion of potentially relevant data during the investigation.

Incorrect Options / Out of Sequence:

Run a content search:

This is performed after a case is created and a hold is placed, to find and export specific data from the preserved content.

Create a label / Publish a label / Create a label policy:

These are retention and sensitivity label actions for information governance, not the immediate legal preservation (hold) and collection workflow.

Create a DLP policy:

This is for preventing data leakage, not for legal eDiscovery.
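The ordering argument can be expressed as a small dependency graph: each step lists the steps that must already be complete. This sketch uses Python's standard `graphlib` module (Python 3.9 or later); the step names come from the question, while the `prerequisites` mapping is an illustrative model of the reasoning above.

```python
from graphlib import TopologicalSorter

# step -> steps that must be completed first
prerequisites = {
    "Assign eDiscovery permissions": set(),
    "Create an eDiscovery case": {"Assign eDiscovery permissions"},
    "Create a hold": {"Create an eDiscovery case"},
    "Run a content search": {"Create a hold"},
}

order = list(TopologicalSorter(prerequisites).static_order())
for position, step in enumerate(order, start=1):
    print(position, step)
```

The first three steps in the resulting order match the answer sequence; the content search follows once the hold is in place.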

Reference:

Microsoft Learn, "Get started with eDiscovery (Premium)" and "Create and manage eDiscovery holds". The documented workflow starts with permissions, then creating a case, and then placing holds to preserve data.

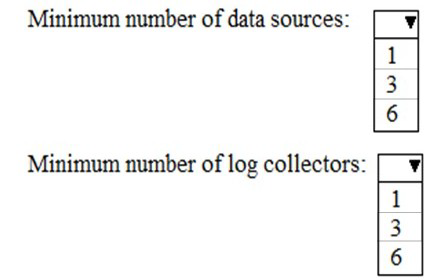

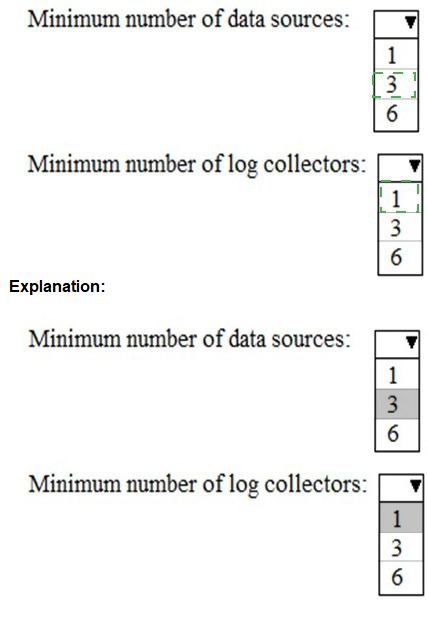

You need to meet the technical requirement for log analysis.

What is the minimum number of data sources and log collectors you should create from Microsoft Cloud App Security? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Explanation:

This question pertains to configuring Microsoft Defender for Cloud Apps (formerly MCAS) for log collection from on-premises or cloud firewalls and proxies. The requirement is to analyze traffic logs to discover Shadow IT apps. The minimum configuration is defined by Microsoft's architecture for log ingestion and processing.

Correct Options:

Minimum number of data sources: 1

You must create at least one data source. This represents the point where you upload your network appliance logs (e.g., from a firewall like Palo Alto or Cisco). A single, properly configured data source can receive and forward logs from multiple network devices.

Minimum number of log collectors: 1

You must deploy at least one log collector. This is a lightweight virtual appliance (or Docker container) deployed in your network that automatically receives and forwards the logs from your configured data source to the Defender for Cloud Apps cloud service for analysis.

Incorrect Options:

3 or 6 data sources / log collectors:

These numbers are not the minimum. While you can configure multiple data sources and deploy multiple log collectors for high availability, geographic distribution, or scale, the functional minimum to begin log analysis is one of each. Starting with one is valid and meets the technical requirement.

Reference:

Microsoft Learn, "Configure log collection for Defender for Cloud Apps." The deployment steps show that you create a data source in the portal and then deploy at least one log collector machine to receive the logs from your network.

You need to meet the technical requirement for the EU PII data.

What should you create?

A. a retention policy from the Security & Compliance admin center

B. a retention policy from the Exchange admin center

C. a data loss prevention (DLP) policy from the Exchange admin center

D. a data loss prevention (DLP) policy from the Security & Compliance admin center

Explanation:

The requirement is to manage EU Personally Identifiable Information (PII) data. In a compliance context, this typically involves ensuring data is retained for a mandatory period or deleted when no longer needed to meet regulations like GDPR. This is a data lifecycle governance task, not primarily about preventing real-time leakage.

Correct Option:

A. a retention policy from the Security & Compliance admin center

A retention policy is the correct tool. It ensures that EU PII data is either preserved for a specified duration (to meet legal hold or regulatory retention requirements) or automatically deleted after that period expires (to comply with data minimization principles). Policies are created in the Microsoft Purview compliance portal (Security & Compliance admin center) and can be applied to Exchange, SharePoint, OneDrive, and Teams locations.

Incorrect Options:

B. a retention policy from the Exchange admin center:

The Exchange admin center offers only legacy retention policies, and they apply to Exchange Online alone. The unified approach for managing the data lifecycle across all Microsoft 365 workloads (including EU PII data that may reside in SharePoint or Teams) is through the compliance portal.

C. a DLP policy from the Exchange admin center:

DLP in the Exchange admin center is limited to Exchange data only. It is designed to detect and prevent the inappropriate sharing of PII, not to manage its retention or deletion lifecycle.

D. a DLP policy from the Security & Compliance admin center:

While a DLP policy here can identify and protect EU PII across M365, its primary function is to prevent accidental sharing or leakage (e.g., blocking emails with PII). It does not retain or delete data based on age, which is the core function of a retention policy for compliance.
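The difference from DLP is that a retention decision depends on the age of the content rather than on a sharing action. The sketch below shows that age-based logic, assuming a single retention period measured from the creation date. The function name, parameters, and the 365-day period are hypothetical and do not come from the case study.

```python
from datetime import date, timedelta


def retention_action(created, today, retain_days, delete_when_expired):
    """Return 'retain' inside the retention period, else 'delete' or 'no action'."""
    expires = created + timedelta(days=retain_days)
    if today < expires:
        return "retain"
    return "delete" if delete_when_expired else "no action"


created = date(2020, 1, 1)
print(retention_action(created, date(2020, 6, 1), 365, True))   # retain
print(retention_action(created, date(2021, 6, 1), 365, True))   # delete
print(retention_action(created, date(2021, 6, 1), 365, False))  # no action
```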

Reference:

Microsoft Learn, "Learn about retention policies and retention labels." This details how retention policies are used to retain or delete content to meet regulatory and compliance requirements.

You need to recommend a solution for the security administrator. The solution must meet the technical requirements.

What should you include in the recommendation?

A. Microsoft Azure Active Directory (Azure AD) Privileged Identity Management

B. Microsoft Azure Active Directory (Azure AD) Identity Protection

C. Microsoft Azure Active Directory (Azure AD) conditional access policies

D. Microsoft Azure Active Directory (Azure AD) authentication methods

Explanation:

This question concerns a security administrator needing to meet technical requirements focused on identifying and remediating identity-based risks. The solution must be proactive in detecting compromised identities and suspicious sign-ins, and provide tools for investigation and response.

Correct Option:

B. Microsoft Azure Active Directory (Azure AD) Identity Protection

Azure AD Identity Protection is specifically designed to meet this need. It uses machine learning to detect suspicious sign-in activities and user risk (like leaked credentials or anomalous travel). It provides security administrators with detailed risk reports, alerts, and automated remediation policies (like requiring password change or multi-factor authentication) to address compromised identities before they cause a breach.

Incorrect Options:

A. Azure AD Privileged Identity Management (PIM):

PIM is for managing, controlling, and monitoring access to privileged roles (just-in-time access). It's about least privilege administration, not broadly detecting identity compromises across all users.

C. Azure AD Conditional Access Policies:

This is an enforcement tool, not a primary detection system. Conditional Access uses signals (including risk signals from Identity Protection) to grant or block access. It requires policies to be configured based on known conditions but does not itself discover or report on risky identities.

D. Azure AD Authentication Methods:

This refers to the configuration of MFA, passwordless methods, etc. It's about how users authenticate, not a solution for detecting, investigating, and remediating compromised identities and risky sign-ins.

Reference:

Microsoft Learn, "What is Identity Protection?" It states that Identity Protection gives organizations the ability to detect potential vulnerabilities affecting their identities, configure automated responses, and investigate incidents.

Which report should the New York office auditors view?

A. DLP policy matches

B. DLP false positives and overrides

C. DLP incidents

D. Top Senders and Recipients

Explanation:

Auditors typically need a consolidated, incident-based view of policy violations for review and compliance reporting. They require a report that aggregates individual events into investigable cases, showing what happened, the severity, what data was involved, and the current status of the investigation.

Correct Option:

C. DLP incidents

The DLP incidents report is the correct choice for auditors. An incident aggregates multiple related DLP policy match events (e.g., repeated attempts to send sensitive data) into a single, manageable case for investigation. It provides context, severity, the content involved (with permissions), and the audit trail of actions taken, which is essential for compliance reviews and regulatory audits.

Incorrect Options:

A. DLP policy matches:

This report shows every individual event where a policy was triggered. It is too granular and voluminous for an efficient audit. Auditors need the curated, aggregated view provided by incidents.

B. DLP false positives and overrides:

This is a specialized report for tuning DLP policies by identifying allowed overrides or misclassified events. It is useful for policy administrators to improve accuracy, but not the primary tool for auditors assessing policy effectiveness and violations.

D. Top Senders and Recipients:

This is a statistical report showing users with the most DLP matches. While it can identify hotspots, it lacks the detailed case context, content details, and investigation status that the DLP incidents report provides for a proper audit.
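The relationship between policy matches and incidents can be pictured as a grouping step. The sketch below groups individual matches by user and policy. That grouping key is an assumption made for illustration, since the explanation does not describe the service's actual aggregation rules, and the sample data is made up.

```python
from collections import defaultdict


def group_into_incidents(matches):
    """Group individual policy matches into one incident per (user, policy)."""
    incidents = defaultdict(list)
    for match in matches:
        incidents[(match["user"], match["policy"])].append(match["item"])
    return dict(incidents)


# Hypothetical matches, not real report data.
matches = [
    {"user": "user1", "policy": "PII policy", "item": "mail-1"},
    {"user": "user1", "policy": "PII policy", "item": "mail-2"},
    {"user": "user2", "policy": "PII policy", "item": "file-7"},
]

incidents = group_into_incidents(matches)
print(len(matches), "matches ->", len(incidents), "incidents")  # 3 matches -> 2 incidents
```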

Reference:

Microsoft Learn, "View the reports for data loss prevention." The documentation specifies that the DLP incidents dashboard is where you can review, scope, and investigate policy violations that have been aggregated into incidents.

You need to protect the U.S. PII data to meet the technical requirements. What should you create?

A. a data loss prevention (DLP) policy that contains a domain exception

B. a Security & Compliance retention policy that detects content containing sensitive data

C. a Security & Compliance alert policy that contains an activity

D. a data loss prevention (DLP) policy that contains a user override

Explanation:

The requirement is to protect U.S. PII data, which is a classic Data Loss Prevention (DLP) use case. DLP policies are designed to detect sensitive information (like U.S. PII) and prevent its accidental or intentional sharing. The mention of a domain exception suggests the need to allow sharing within a trusted boundary (e.g., the company's own domain) while blocking external sharing.

Correct Option:

A. a data loss prevention (DLP) policy that contains a domain exception

This is the correct solution. You create a DLP policy in the Security & Compliance admin center that detects U.S. PII using built-in or custom sensitive information types. The domain exception is a key component that allows you to restrict the policy's protective actions (like blocking or encrypting) only when data is shared outside your specified trusted domains, meeting requirements for secure internal collaboration while preventing external leaks.

Incorrect Options:

B. a Security & Compliance retention policy:

Retention policies govern the lifecycle (retain/delete) of data based on its age and label, not its content. They do not actively monitor or prevent the sharing of sensitive data like PII in real-time.

C. a Security & Compliance alert policy:

Alert policies notify admins of specific user or admin activities (like a spike in file deletions). They are a reactive monitoring tool, not a proactive protection mechanism that prevents data exfiltration based on content inspection.

D. a data loss prevention (DLP) policy that contains a user override:

A user override allows an end-user to bypass a DLP block with a business justification. This reduces protection by introducing a manual exception, which contradicts the goal of enforcing protection to meet technical requirements. An administrator-configured domain exception is a controlled, rule-based allowance.
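The effect of a domain exception can be sketched as a check on recipient domains: content with PII is acted on only when at least one recipient falls outside the trusted domains. This illustrates the rule logic only and is not DLP policy syntax; the function name, addresses, and domains are hypothetical.

```python
def should_apply_protection(recipients, contains_pii, trusted_domains):
    """Return True when PII content is addressed to any untrusted domain."""
    if not contains_pii:
        return False
    trusted = {domain.lower() for domain in trusted_domains}
    return any(
        address.rsplit("@", 1)[-1].lower() not in trusted
        for address in recipients
    )


trusted = {"contoso.example"}
print(should_apply_protection(["a@contoso.example"], True, trusted))                       # False
print(should_apply_protection(["a@contoso.example", "b@partner.example"], True, trusted))  # True
print(should_apply_protection(["b@partner.example"], False, trusted))                      # False
```

Unlike a user override, the allowance here is decided entirely by the administrator's rule, with no per-message decision by the sender.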

Reference:

Microsoft Learn, "DLP policy reference" and "Configuring domain exceptions in DLP policies." Domain exceptions are a core part of scoping where a DLP policy's protections are applied.
