Topic 3, Mix Questions

You have an enterprise data warehouse in Azure Synapse Analytics.

You need to monitor the data warehouse to identify whether you must scale up to a higher service level to accommodate the current workloads.

Which is the best metric to monitor?

More than one answer choice may achieve the goal. Select the BEST answer.

A. Data IO percentage

B. CPU percentage

C. DWU used

D. DWU percentage

Answer: D. DWU percentage
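The following minimal Python sketch shows how the metrics relate. It assumes the relationships Microsoft documents for dedicated SQL pools:

- DWU percentage is the maximum of CPU percentage and Data IO percentage.
- DWU used is the DWU limit multiplied by DWU percentage.

This is why DWU percentage is the better single signal. It rises when either CPU or Data IO is the bottleneck, whereas CPU percentage or Data IO percentage alone can miss the other. DWU used is an absolute figure that still has to be compared against the limit. The 80 percent threshold, the 90 percent share of samples, the function names, and the sample values are hypothetical and chosen only for illustration.

```python
# Simplified model of dedicated SQL pool metrics (assumption for illustration):
#   DWU percentage = max(CPU percentage, Data IO percentage)
#   DWU used       = DWU limit * DWU percentage / 100


def dwu_percentage(cpu_pct: float, data_io_pct: float) -> float:
    """Return the DWU percentage for one sample: the larger of CPU and Data IO."""
    return max(cpu_pct, data_io_pct)


def dwu_used(dwu_limit: int, cpu_pct: float, data_io_pct: float) -> float:
    """Return DWU used for one sample, derived from the DWU percentage."""
    return dwu_limit * dwu_percentage(cpu_pct, data_io_pct) / 100


def needs_scale_up(samples, threshold_pct=80.0, min_fraction=0.9) -> bool:
    """Hypothetical rule: suggest scaling up when DWU percentage is at or above
    threshold_pct in at least min_fraction of the (cpu, data_io) samples."""
    if not samples:
        return False
    high = sum(1 for cpu, io in samples if dwu_percentage(cpu, io) >= threshold_pct)
    return high / len(samples) >= min_fraction


# Each sample is (CPU percentage, Data IO percentage); values are made up.
samples = [(45.0, 92.0), (88.0, 40.0), (95.0, 97.0), (85.0, 60.0)]
print([dwu_percentage(cpu, io) for cpu, io in samples])  # [92.0, 88.0, 97.0, 85.0]
print(dwu_used(1000, 45.0, 92.0))  # 920.0
print(needs_scale_up(samples))  # True
```

In the first sample, CPU percentage alone (45) would suggest plenty of headroom, while DWU percentage (92) exposes the Data IO pressure.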

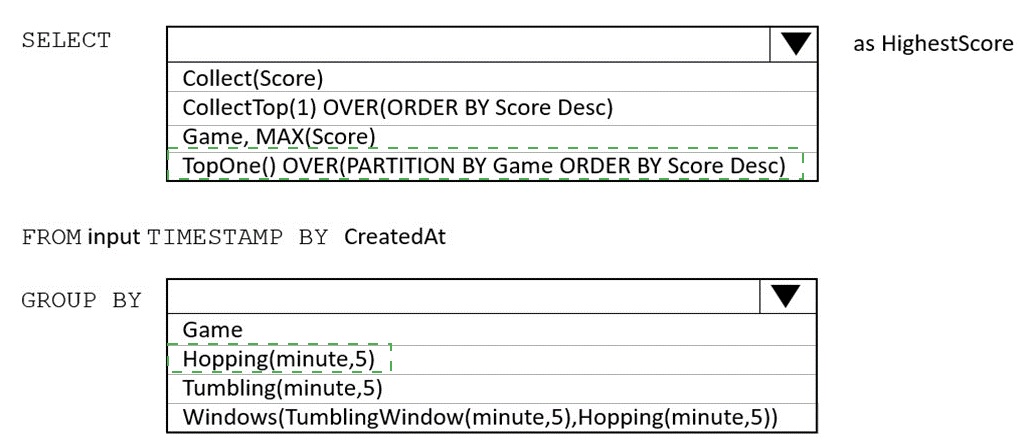

You are building an Azure Stream Analytics job to retrieve game data.

You need to ensure that the job returns the highest scoring record for each five-minute time interval of each game. How should you complete the Stream Analytics query? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.
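The question asks for the top record per game in each fixed, non-overlapping five-minute interval. That corresponds to a tumbling window partitioned by game; Stream Analytics provides the TumblingWindow function for this kind of grouping.

The Python sketch below only simulates that grouping logic outside Stream Analytics. It makes the following assumptions:

- The GameEvent fields and the sample events are hypothetical.
- Windows are treated as [start, start + 5 minutes). This may differ from how Stream Analytics assigns an event that falls exactly on a window boundary.

```python
from dataclasses import dataclass

WINDOW_SECONDS = 5 * 60  # five-minute tumbling window


@dataclass(frozen=True)
class GameEvent:
    game: str     # hypothetical field names, for illustration only
    player: str
    score: int
    ts: int       # event time in seconds since an arbitrary epoch


def top_score_per_window(events):
    """Return {(game, window_start): highest-scoring event} for
    non-overlapping five-minute windows, partitioned by game."""
    best = {}
    for e in events:
        window_start = e.ts - e.ts % WINDOW_SECONDS  # align to the window boundary
        key = (e.game, window_start)
        # Keep the first event seen on ties; replace only on a strictly higher score.
        if key not in best or e.score > best[key].score:
            best[key] = e
    return best


events = [
    GameEvent("g1", "ann", 10, 0),
    GameEvent("g1", "bob", 30, 120),
    GameEvent("g1", "cat", 20, 310),
    GameEvent("g2", "dan", 50, 200),
]
result = top_score_per_window(events)
for (game, start), e in sorted(result.items()):
    print(game, start, e.player, e.score)
# g1 0 bob 30
# g1 300 cat 20
# g2 0 dan 50
```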

You have an Azure data factory.

You need to examine the pipeline failures from the last 180 days.

What should you use?

A. the Activity log blade for the Data Factory resource

B. Azure Data Factory activity runs in Azure Monitor

C. Pipeline runs in the Azure Data Factory user experience

D. the Resource health blade for the Data Factory resource

Answer: B. Azure Data Factory activity runs in Azure Monitor

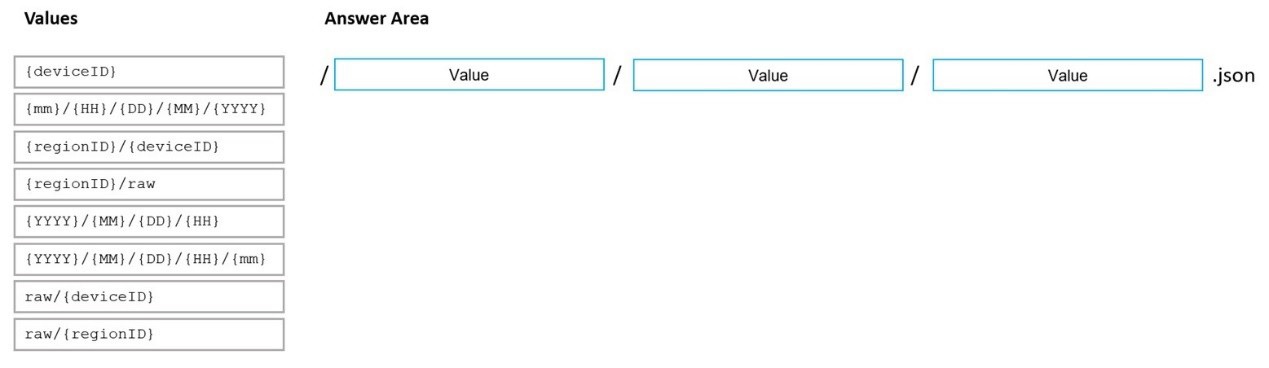

You are designing an Azure Data Lake Storage Gen2 structure for telemetry data from 25 million devices distributed across seven key geographical regions. Each minute, the devices will send a JSON payload of metrics to Azure Event Hubs. You need to recommend a folder structure for the data. The solution must meet the following requirements:

Data engineers from each region must be able to build their own pipelines for the data of their respective region only.

The data must be processed at least once every 15 minutes for inclusion in Azure Synapse Analytics serverless SQL pools.

How should you recommend completing the structure? To answer, drag the appropriate values to the correct targets. Each value may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.  NOTE: Each correct selection is worth one point.

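The Python sketch below shows one plausible way to reason about the layout. It illustrates the two requirements rather than the specific drag-and-drop values:

- **Per-region access.** Putting the region at the top of the path lets each region's engineers be granted ACLs on a single directory.
- **15-minute processing.** Year/month/day/hour/minute folders below the region let a job that runs every 15 minutes read only the folders written since its previous run.

The region name, the minute-level granularity, and the helper names are hypothetical. The file-naming level below the time folders is left out.

```python
from datetime import datetime, timedelta, timezone


def telemetry_folder(region: str, event_time: datetime) -> str:
    """Hypothetical layout: region first (for per-region ACLs), then UTC time
    folders down to the minute. Expects a timezone-aware datetime."""
    t = event_time.astimezone(timezone.utc)
    return f"{region}/{t:%Y}/{t:%m}/{t:%d}/{t:%H}/{t:%M}"


def folders_for_run(region: str, run_end: datetime, minutes: int = 15) -> list[str]:
    """List the minute folders a batch run covering [run_end - minutes, run_end)
    would read for one region (run_end is truncated to the minute)."""
    end = run_end.astimezone(timezone.utc).replace(second=0, microsecond=0)
    start = end - timedelta(minutes=minutes)
    return [telemetry_folder(region, start + timedelta(minutes=i)) for i in range(minutes)]


run_end = datetime(2024, 5, 1, 12, 15, tzinfo=timezone.utc)
print(telemetry_folder("westeurope", run_end))  # westeurope/2024/05/01/12/15
folders = folders_for_run("westeurope", run_end)
print(folders[0], folders[-1], len(folders))
# westeurope/2024/05/01/12/00 westeurope/2024/05/01/12/14 15
```

Because the region is the first path segment, a pipeline built by one region's engineers never needs permissions on another region's folders.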

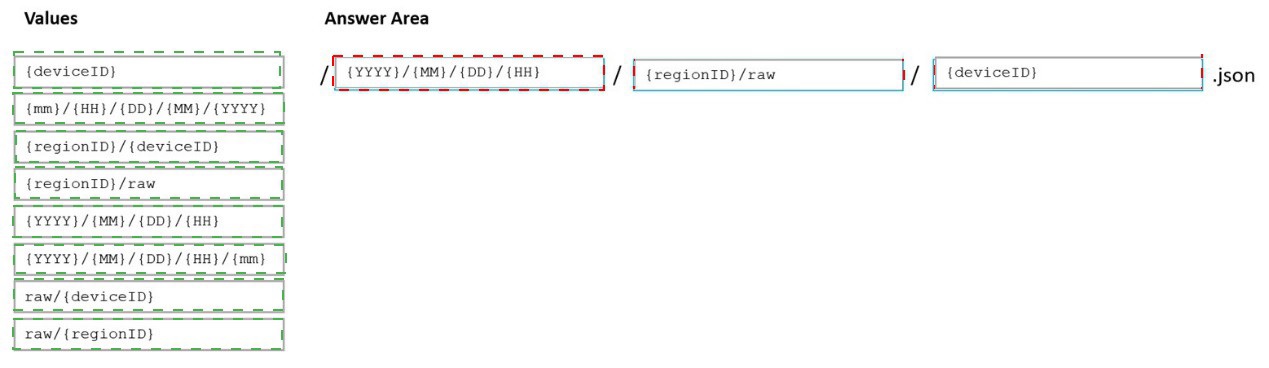

You have an Azure Data Factory pipeline that has the activities shown in the following exhibit.

You are designing an Azure Synapse solution that will provide a query interface for the data stored in an Azure Storage account. The storage account is only accessible from a virtual network.

You need to recommend an authentication mechanism to ensure that the solution can access the source data. What should you recommend?

A. a managed identity

B. anonymous public read access

C. a shared key

Answer: A. a managed identity

Explanation:

Managed identity authentication is required when your storage account is attached to a VNet.

Reference:

https://docs.microsoft.com/en-us/azure/synapse-analytics/sql-data-warehouse/quickstart-bulk-load-copy-tsql-examples

You have an Azure Synapse Analytics SQL pool named Pool1 on a logical Microsoft SQL server named Server1.

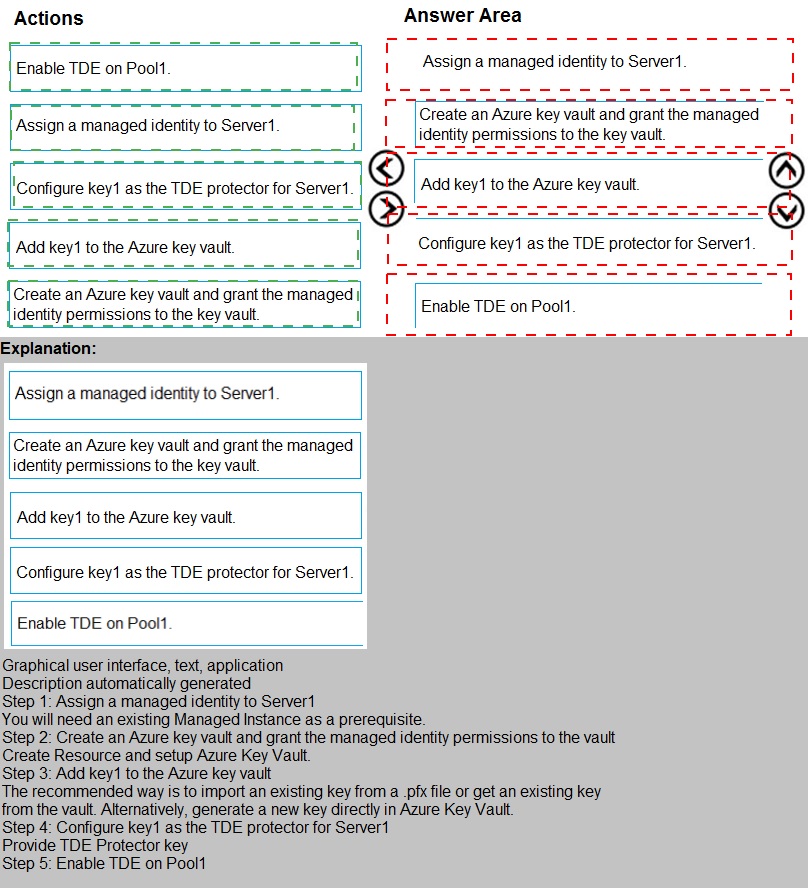

You need to implement Transparent Data Encryption (TDE) on Pool1 by using a custom key named key1. Which five actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

You have an Azure Synapse Analytics dedicated SQL pool that contains a table named dbo.Users. You need to prevent a group of users from reading user email addresses from dbo.Users. What should you use?

A. row-level security

B. column-level security

C. Dynamic data masking

D. Transparent Data Encryption (TDE)

Answer: B. column-level security

You have an Azure data solution that contains an enterprise data warehouse in Azure Synapse Analytics named DW1.

Several users execute ad hoc queries to DW1 concurrently.

You regularly perform automated data loads to DW1.

You need to ensure that the automated data loads have enough memory available to complete quickly and successfully when the ad hoc queries run.

What should you do?

A. Hash distribute the large fact tables in DW1 before performing the automated data loads.

B. Assign a smaller resource class to the automated data load queries.

C. Assign a larger resource class to the automated data load queries.

D. Create sampled statistics for every column in each table of DW1.

Answer: C. Assign a larger resource class to the automated data load queries.

Explanation:

The performance capacity of a query is determined by the user's resource class. Resource classes are pre-determined resource limits in Synapse SQL pool that govern compute resources and concurrency for query execution.

Resource classes can help you configure resources for your queries by setting limits on the number of queries that run concurrently and on the compute resources assigned to each query. There's a trade-off between memory and concurrency.

Smaller resource classes reduce the maximum memory per query, but increase concurrency.

Larger resource classes increase the maximum memory per query, but reduce concurrency.

Reference:

https://docs.microsoft.com/en-us/azure/synapse-analytics/sql-data-warehouse/resource-classes-for-workload-management
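The simplified Python model below makes the memory and concurrency trade-off concrete. It is not the service's actual admission logic, and it rests on these assumptions:

- Each query in a resource class is granted a fixed share of the pool's memory.
- The percentages approximate the dynamic resource classes (smallrc, mediumrc, largerc, xlargerc) at larger service levels. Real values vary by service level, so check the reference above.
- The 600 GB pool size is hypothetical.

The model also ignores the separate limit on concurrent queries that the service enforces.

```python
# Approximate per-query memory share for dynamic resource classes at larger
# service levels (assumption for illustration; real values vary by service level).
MEMORY_PCT = {"smallrc": 3, "mediumrc": 10, "largerc": 22, "xlargerc": 70}


def max_concurrency(resource_class: str) -> int:
    """Simplified model: how many queries of one class fit in 100% of memory.
    Ignores the separate cap on concurrent queries the service applies."""
    return 100 // MEMORY_PCT[resource_class]


def memory_gb_per_query(resource_class: str, pool_memory_gb: float) -> float:
    """Memory granted to a single query of the given class."""
    return pool_memory_gb * MEMORY_PCT[resource_class] / 100


pool_memory_gb = 600  # hypothetical pool size
for rc in MEMORY_PCT:
    print(rc, memory_gb_per_query(rc, pool_memory_gb), max_concurrency(rc))
# smallrc 18.0 33
# mediumrc 60.0 10
# largerc 132.0 4
# xlargerc 420.0 1
```

In this model, moving the load user from smallrc to largerc raises its memory grant roughly sevenfold while cutting how many such queries can run at once, which is the trade-off the answer relies on. In the service itself, a user is placed in a dynamic resource class by adding it to the corresponding database role, for example with sp_addrolemember.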

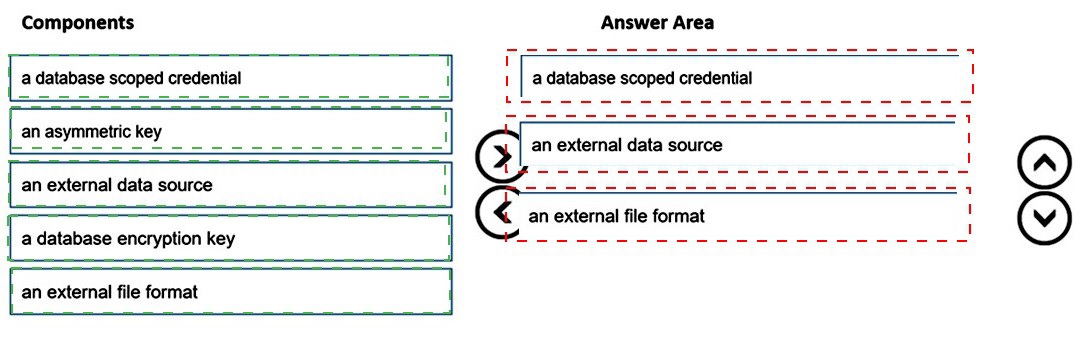

You are responsible for providing access to an Azure Data Lake Storage Gen2 account. Your user account has contributor access to the storage account, and you have the application ID and access key. You plan to use PolyBase to load data into an enterprise data warehouse in Azure Synapse Analytics. You need to configure PolyBase to connect the data warehouse to the storage account.

Which three components should you create in sequence? To answer, move the appropriate components from the list of components to the answer area and arrange them in the correct order.

| Page 6 out of 21 Pages |