Topic 3, Mix Questions

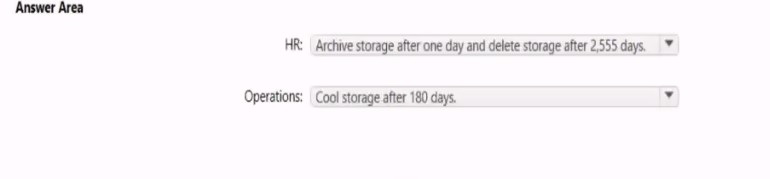

You are designing an Azure Data Lake Storage Gen2 container to store data for the human resources (HR) department and the operations department at your company. You have the following data access requirements:

• After initial processing, the HR department data will be retained for seven years.

• The operations department data will be accessed frequently for the first six months, and then accessed once per month.

You need to design a data retention solution to meet the access requirements. The solution must minimize storage costs.

Answer: See the answer in explanation.

Explanation:

Answer is below
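Tiering and retention rules of this kind are commonly expressed as an Azure Storage lifecycle management policy. The sketch below builds such a policy as a Python dictionary and prints it as JSON. The rule names, the `container1/hr` and `container1/operations` prefixes, and the day thresholds (archive after 1 day, delete after about seven years, cool after about six months) are assumptions chosen for illustration and should not be read as the graded answer.

```python
import json

DAYS_PER_YEAR = 365  # assumption: seven years approximated as 7 * 365 days


def build_lifecycle_policy(hr_prefix="container1/hr",
                           ops_prefix="container1/operations"):
    """Return a lifecycle policy dict; names and thresholds are hypothetical."""
    hr_rule = {
        "enabled": True,
        "name": "hr-archive-then-delete",
        "type": "Lifecycle",
        "definition": {
            "filters": {"blobTypes": ["blockBlob"], "prefixMatch": [hr_prefix]},
            "actions": {
                "baseBlob": {
                    # Rarely read after initial processing: move to archive early.
                    "tierToArchive": {"daysAfterModificationGreaterThan": 1},
                    # Remove the data once the retention period has passed.
                    "delete": {"daysAfterModificationGreaterThan": 7 * DAYS_PER_YEAR},
                }
            },
        },
    }
    ops_rule = {
        "enabled": True,
        "name": "operations-cool-after-six-months",
        "type": "Lifecycle",
        "definition": {
            "filters": {"blobTypes": ["blockBlob"], "prefixMatch": [ops_prefix]},
            "actions": {
                "baseBlob": {
                    # Accessed monthly after six months: cool tier stays online.
                    "tierToCool": {"daysAfterModificationGreaterThan": 180},
                }
            },
        },
    }
    return {"rules": [hr_rule, ops_rule]}


policy = build_lifecycle_policy()
print(json.dumps(policy, indent=2))
```

The cool tier is used for the operations data in this sketch because monthly reads still need online access, whereas archived blobs must be rehydrated before they can be read.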

You have an Azure Synapse Analytics workspace named WS1 that contains an Apache Spark pool named Pool1. You plan to create a database named DB1 in Pool1. You need to ensure that when tables are created in DB1, the tables are available automatically as external tables to the built-in serverless SQL pool.

Which format should you use for the tables in DB1?

A.

Parquet

B.

CSV

C.

ORC

D.

JSON

Answer: A. Parquet

Explanation:

Serverless SQL pool can automatically synchronize metadata from Apache Spark. A serverless SQL pool database will be created for each database existing in serverless Apache Spark pools.

For each Spark external table based on Parquet or CSV and located in Azure Storage, an external table is created in a serverless SQL pool database.

Reference:

https://docs.microsoft.com/en-us/azure/synapse-analytics/sql/develop-storage-files-spark-tables

You have an Azure Data Factory pipeline that is triggered hourly.

The pipeline has had 100% success for the past seven days.

The pipeline execution fails, and two retries that occur 15 minutes apart also fail. The third failure returns the following error. What is a possible cause of the error?

A.

From 06:00 to 07:00 on January 10, 2021, there was no data in w1/bikes/CARBON.

B.

The parameter used to generate year=2021/month=0/day=10/hour=06 was incorrect.

C.

From 06:00 to 07:00 on January 10, 2021, the file format of the data in wi/BiKES/CARBON was incorrect.

Answer: C. From 06:00 to 07:00 on January 10, 2021, the file format of the data in wi/BiKES/CARBON was incorrect.
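The answer choices refer to a folder path built from the hour being processed. As a minimal sketch of how such an hourly partition path might be generated, the Python below formats a timestamp into `year=/month=/day=/hour=` segments. The function name, the zero-padded segments, and the `raw` base folder are assumptions for illustration, not the pipeline's actual expression.

```python
from datetime import datetime


def hourly_partition_path(base: str, window_start: datetime) -> str:
    """Build a year=/month=/day=/hour= folder path for one hourly window."""
    return (
        f"{base}/year={window_start:%Y}/month={window_start:%m}"
        f"/day={window_start:%d}/hour={window_start:%H}"
    )


# Hypothetical hourly run covering 06:00 to 07:00 on January 10, 2021.
path = hourly_partition_path("raw", datetime(2021, 1, 10, 6, 0))
print(path)  # raw/year=2021/month=01/day=10/hour=06
```

If the parameter that feeds a path like this were wrong, every hourly run would be affected, which is hard to reconcile with a pipeline that succeeded for seven days before a single hour failed.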

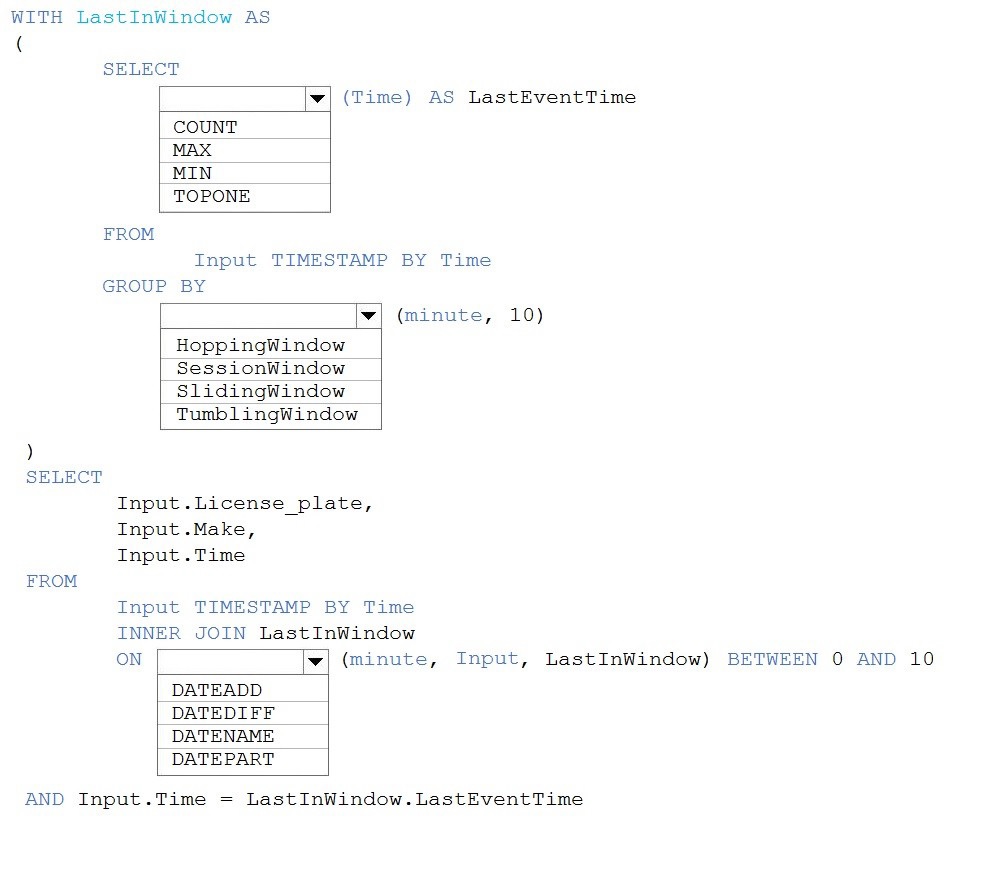

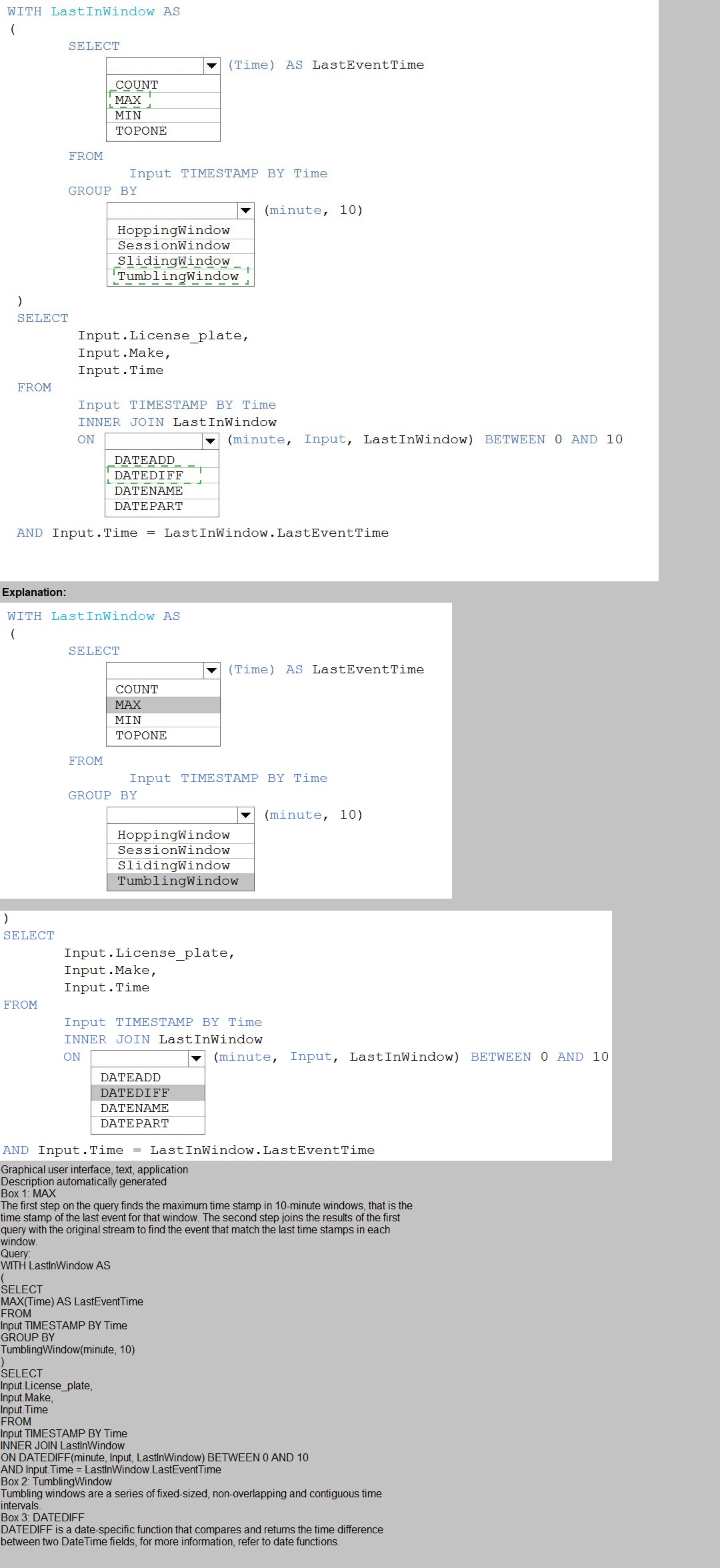

You are processing streaming data from vehicles that pass through a toll booth.

You need to use Azure Stream Analytics to return the license plate, vehicle make, and hour the last vehicle passed during each 10-minute window.

How should you complete the query? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

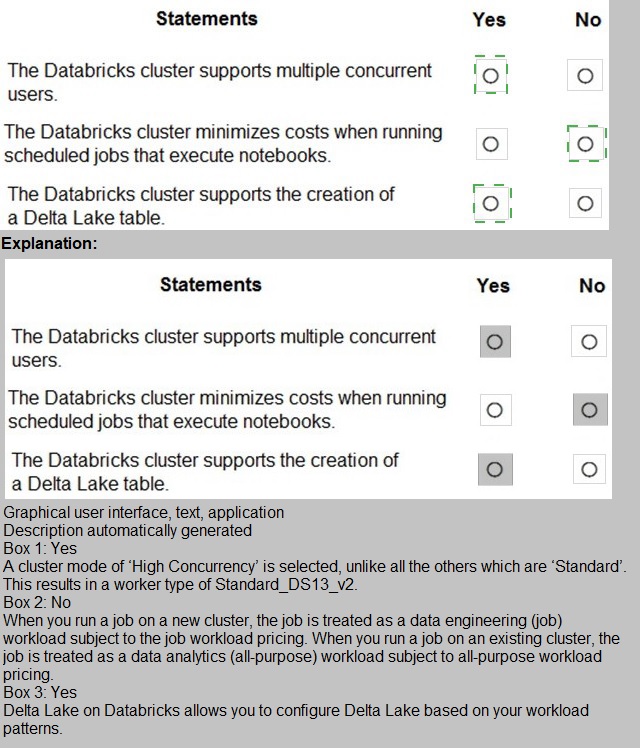
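The requirement (the last vehicle in each 10-minute window) can be illustrated outside Stream Analytics. The following is a plain Python simulation, not Stream Analytics query syntax: it assigns each event to a fixed 10-minute bucket and keeps the latest event per bucket. The field names and sample events are hypothetical, and the bucket boundaries used here (start inclusive, end exclusive) are a simplifying assumption.

```python
from datetime import datetime, timedelta

WINDOW_MINUTES = 10  # fixed, non-overlapping window length


def window_start(ts: datetime) -> datetime:
    """Floor a timestamp to the start of its 10-minute bucket."""
    return ts - timedelta(
        minutes=ts.minute % WINDOW_MINUTES,
        seconds=ts.second,
        microseconds=ts.microsecond,
    )


def last_vehicle_per_window(events):
    """Return plate, make, and hour of the latest event in each bucket."""
    latest = {}
    for event in events:
        key = window_start(event["time"])
        if key not in latest or event["time"] > latest[key]["time"]:
            latest[key] = event
    return [
        {
            "license_plate": latest[key]["license_plate"],
            "make": latest[key]["make"],
            "hour": latest[key]["time"].hour,
        }
        for key in sorted(latest)
    ]


# Hypothetical toll booth events.
events = [
    {"license_plate": "ABC123", "make": "Toyota", "time": datetime(2021, 1, 10, 6, 1)},
    {"license_plate": "XYZ789", "make": "Honda", "time": datetime(2021, 1, 10, 6, 8)},
    {"license_plate": "DEF456", "make": "Ford", "time": datetime(2021, 1, 10, 6, 14)},
    {"license_plate": "GHI000", "make": "Volvo", "time": datetime(2021, 1, 10, 7, 2)},
]
result = last_vehicle_per_window(events)
for row in result:
    print(row)
```

In a Stream Analytics query, the same idea is usually expressed by computing the maximum event time per tumbling window and joining it back to the input stream.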

The following code segment is used to create an Azure Databricks cluster.

You are planning a solution to aggregate streaming data that originates in Apache Kafka and is output to Azure Data Lake Storage Gen2. The developers who will implement the stream processing solution use Java. Which service should you recommend using to process the streaming data?

A.

Azure Data Factory

B.

Azure Stream Analytics

C.

Azure Databricks

D.

Azure Event Hubs

Answer: C. Azure Databricks

Explanation: https://docs.microsoft.com/en-us/azure/architecture/data-guide/technology-choices/stream-processing

You have an Azure Synapse Analytics Apache Spark pool named Pool1.

You plan to load JSON files from an Azure Data Lake Storage Gen2 container into the tables in Pool1. The structure and data types vary by file.

You need to load the files into the tables. The solution must maintain the source data types.

What should you do?

A.

Use a Get Metadata activity in Azure Data Factory.

B.

Use a Conditional Split transformation in an Azure Synapse data flow.

C.

Load the data by using the OPENROWSET Transact-SQL command in an Azure Synapse Analytics serverless SQL pool.

D.

Load the data by using PySpark

Answer: C. Load the data by using the OPENROWSET Transact-SQL command in an Azure Synapse Analytics serverless SQL pool.

You use Azure Data Lake Storage Gen2 to store data that data scientists and data engineers will query by using Azure Databricks interactive notebooks. Users will have access only to the Data Lake Storage folders that relate to the projects on which they work.

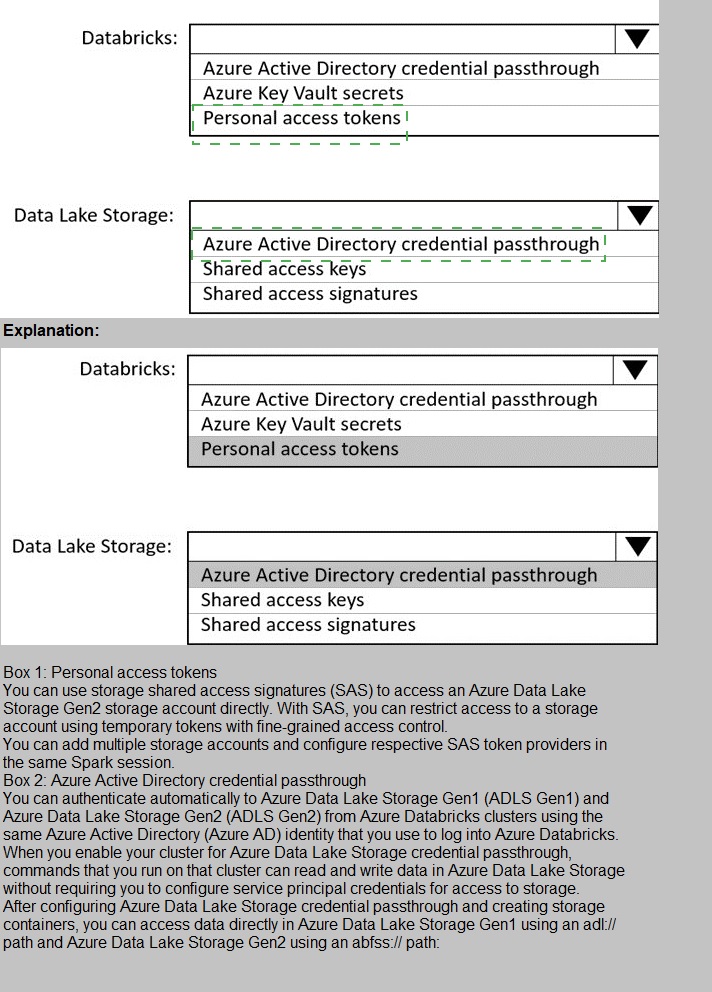

You need to recommend which authentication methods to use for Databricks and Data Lake Storage to provide the users with the appropriate access. The solution must minimize administrative effort and development effort.

Which authentication method should you recommend for each Azure service? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You build a data warehouse in an Azure Synapse Analytics dedicated SQL pool.

Analysts write a complex SELECT query that contains multiple JOIN and CASE statements to transform data for use in inventory reports. The inventory reports will use the data and additional WHERE parameters depending on the report. The reports will be produced once daily.

You need to implement a solution to make the dataset available for the reports. The solution must minimize query times.

What should you implement?

A.

a materialized view

B.

a replicated table

C.

an ordered clustered columnstore index

D.

result set caching

Answer: A. a materialized view

Explanation:

Materialized views for dedicated SQL pools in Azure Synapse provide a low maintenance method for complex analytical queries to get fast performance without any query change.

Note: When result set caching is enabled, dedicated SQL pool automatically caches query results in the user database for repetitive use. This allows subsequent query executions to get results directly from the persisted cache so recomputation is not needed. Result set caching improves query performance and reduces compute resource usage. In addition, queries that use the cached result set do not use any concurrency slots and thus do not count against existing concurrency limits.
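To see why reports with varying WHERE parameters favor a precomputed dataset over a result cache, consider the following conceptual Python analogy. It is not Synapse code: `expensive_transform`, the sample rows, and the dictionary-based cache are hypothetical stand-ins. The cache helps only when the same query text repeats, while the precomputed rows (standing in for a materialized view) are reused by every filter.

```python
calls = {"transform": 0}  # counts how often the costly transformation runs

INVENTORY = [
    {"item": "bolt", "warehouse": "east", "qty": 40},
    {"item": "nut", "warehouse": "west", "qty": 15},
    {"item": "gear", "warehouse": "east", "qty": 5},
]


def expensive_transform():
    """Stand-in for the complex SELECT with JOIN and CASE logic."""
    calls["transform"] += 1
    return [dict(row, status="low" if row["qty"] < 10 else "ok") for row in INVENTORY]


# Result-cache analogy: keyed by the full query text, so each new filter misses.
result_cache = {}


def cached_query(warehouse):
    key = f"SELECT ... WHERE warehouse = '{warehouse}'"
    if key not in result_cache:
        rows = expensive_transform()
        result_cache[key] = [r for r in rows if r["warehouse"] == warehouse]
    return result_cache[key]


cached_query("east")
cached_query("west")
cache_transform_runs = calls["transform"]  # one run per distinct filter

# Materialized-view analogy: transform once, then filter the stored rows.
calls["transform"] = 0
materialized = expensive_transform()


def view_query(warehouse):
    return [r for r in materialized if r["warehouse"] == warehouse]


view_query("east")
view_query("west")
view_transform_runs = calls["transform"]

print(cache_transform_runs, view_transform_runs)  # 2 1
```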

Reference:

https://docs.microsoft.com/en-us/azure/synapse-analytics/sql-data-warehouse/performance-tuning-materialized-views

https://docs.microsoft.com/en-us/azure/synapse-analytics/sql-data-warehouse/performance-tuning-result-set-caching

You have an Azure Data Factory version 2 (V2) resource named Df1. Df1 contains a linked service.

You have an Azure Key Vault named vault1 that contains an encryption key named key1.

You need to encrypt Df1 by using key1.

What should you do first?

A.

Add a private endpoint connection to vault1.

B.

Enable Azure role-based access control on vault1.

C.

Remove the linked service from Df1.

D.

Create a self-hosted integration runtime.

Answer: C. Remove the linked service from Df1.

Explanation:

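A customer-managed key can be configured only on an empty data factory, so existing resources such as linked services must be removed before key1 is applied. Linked services are much like connection strings: they define the connection information that Data Factory needs to connect to external resources.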

Reference:

https://docs.microsoft.com/en-us/azure/data-factory/enable-customer-managed-key

https://docs.microsoft.com/en-us/azure/data-factory/concepts-linked-services

https://docs.microsoft.com/en-us/azure/data-factory/create-self-hosted-integration-runtime
