Topic 3, Mix Questions

You are designing an Azure Synapse Analytics workspace.

You need to recommend a solution to provide double encryption of all the data at rest. Which two components should you include in the recommendation? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

A.

an X509 certificate

B.

an RSA key

C.

an Azure key vault that has purge protection enabled

D.

an Azure virtual network that has a network security group (NSG)

E.

an Azure Policy initiative

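Answer: B, C

Explanation:

Double encryption in an Azure Synapse workspace adds a second layer of encryption that uses a customer-managed key. That key must be an RSA key (RSA 2048 or 3072-bit, or RSA-HSM) stored in Azure Key Vault, and the key vault must have soft-delete and purge protection enabled.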

You have an Azure Data Lake Storage Gen2 account named adls2 that is protected by a virtual network. You are designing a SQL pool in Azure Synapse that will use adls2 as a source. What should you use to authenticate to adls2?

A.

a shared access signature (SAS)

B.

a managed identity

C.

a shared key

D.

an Azure Active Directory (Azure AD) user

Answer: B. a managed identity

Explanation:

Managed identity for Azure resources is a feature of Azure Active Directory. The feature provides Azure services with an automatically managed identity in Azure AD. You can use the managed identity capability to authenticate to any service that supports Azure AD authentication.

Managed identity authentication is required when your storage account is attached to a VNet.
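As a rough illustration of managed identity authentication from a dedicated SQL pool, the sketch below loads Parquet files from adls2 with the COPY statement. The table dbo.StagingSales, the container data, and the folder path are hypothetical. It also assumes the workspace managed identity has already been granted a Storage Blob Data role (for example, Storage Blob Data Contributor) on adls2 and that the storage firewall allows trusted Microsoft services.

```sql
-- Sketch: load Parquet files from adls2 using the workspace managed identity.
-- Assumptions: dbo.StagingSales already exists with columns matching the files,
-- and the container/path below are placeholders.
COPY INTO dbo.StagingSales
FROM 'https://adls2.dfs.core.windows.net/data/sales/*.parquet'
WITH (
    FILE_TYPE = 'PARQUET',
    -- No SAS token or account key: the pool authenticates as its managed identity
    CREDENTIAL = (IDENTITY = 'Managed Identity')
);
```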

Reference:

https://docs.microsoft.com/en-us/azure/synapse-analytics/sql-data-warehouse/quickstart-bulk-load-copy-tsql-examples

You have an Azure Data Lake Storage account that has a virtual network service endpoint configured. You plan to use Azure Data Factory to extract data from the Data Lake Storage account. The data will then be loaded to a data warehouse in Azure Synapse Analytics by using PolyBase. Which authentication method should you use to access Data Lake Storage?

A.

shared access key authentication

B.

managed identity authentication

C.

account key authentication

D.

service principal authentication

Answer: B. managed identity authentication

Reference:

https://docs.microsoft.com/en-us/azure/data-factory/connector-azure-sql-data-warehouse#use-polybase-to-load-data-into-azure-sql-data-warehouse
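For comparison, this is roughly what managed identity authentication looks like on the PolyBase side if the external objects are created by hand in a dedicated SQL pool. It is a sketch only: the storage account mydatalake, the container raw, the object and column names, and the password placeholder are all hypothetical, and the managed identity is assumed to have already been granted access to the storage account.

```sql
-- A database master key is required before creating a database scoped credential.
CREATE MASTER KEY ENCRYPTION BY PASSWORD = '<strong password>';

-- Tells PolyBase to authenticate with the managed identity
-- instead of a storage account key.
CREATE DATABASE SCOPED CREDENTIAL MsiCredential
WITH IDENTITY = 'Managed Service Identity';

-- Hypothetical account "mydatalake" and container "raw".
CREATE EXTERNAL DATA SOURCE AdlsMsiSource
WITH (
    TYPE = HADOOP,
    LOCATION = 'abfss://raw@mydatalake.dfs.core.windows.net',
    CREDENTIAL = MsiCredential
);

CREATE EXTERNAL FILE FORMAT ParquetFormat
WITH (FORMAT_TYPE = PARQUET);

-- External table over the /orders/ folder (hypothetical columns).
CREATE EXTERNAL TABLE dbo.ExtOrders
(
    OrderId   BIGINT,
    OrderDate DATE,
    Amount    DECIMAL(18, 2)
)
WITH (
    LOCATION = '/orders/',
    DATA_SOURCE = AdlsMsiSource,
    FILE_FORMAT = ParquetFormat
);

-- Load into the warehouse with CREATE TABLE AS SELECT.
CREATE TABLE dbo.Orders
WITH (DISTRIBUTION = ROUND_ROBIN, CLUSTERED COLUMNSTORE INDEX)
AS SELECT * FROM dbo.ExtOrders;
```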

You are designing a star schema for a dataset that contains records of online orders. Each record includes an order date, an order due date, and an order ship date.

You need to ensure that the design provides the fastest query times of the records when querying for arbitrary date ranges and aggregating by fiscal calendar attributes.

Which two actions should you perform? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

A.

Create a date dimension table that has a DateTime key.

B.

Use built-in SQL functions to extract date attributes.

C.

Create a date dimension table that has an integer key in the format of yyyymmdd.

D.

In the fact table, use integer columns for the date fields.

E.

Use DateTime columns for the date fields.

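Answer: C, D

Explanation:

A date dimension keyed by an integer in yyyymmdd format can store precomputed fiscal calendar attributes, and integer date keys in the fact table make range filters and joins to that dimension cheap. Built-in SQL date functions only understand the standard calendar and are evaluated per row at query time, so they cannot supply fiscal attributes efficiently.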
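A minimal T-SQL sketch of the integer-key design described in options C and D. Table and column names, including the fiscal columns, are hypothetical; how fiscal periods are derived depends on the organization's fiscal calendar, so here they are simply stored as precomputed columns.

```sql
-- Date dimension keyed by an integer in yyyymmdd format (e.g. 20240315).
CREATE TABLE dbo.DimDate
(
    DateKey       INT      NOT NULL,  -- yyyymmdd
    [Date]        DATE     NOT NULL,
    FiscalYear    SMALLINT NOT NULL,  -- precomputed from the fiscal calendar
    FiscalQuarter TINYINT  NOT NULL,
    FiscalMonth   TINYINT  NOT NULL
);

-- Fact table stores each of the three order dates as an integer key.
CREATE TABLE dbo.FactOrders
(
    OrderId      BIGINT         NOT NULL,
    OrderDateKey INT            NOT NULL,
    DueDateKey   INT            NOT NULL,
    ShipDateKey  INT            NOT NULL,
    Amount       DECIMAL(18, 2) NOT NULL
);

-- Converting a DATE to its integer key: style 112 formats as yyyymmdd.
SELECT CONVERT(INT, CONVERT(CHAR(8), CAST('2024-03-15' AS DATE), 112)) AS DateKey;  -- 20240315

-- Arbitrary date range filtered on the integer key, aggregated by fiscal attributes.
SELECT d.FiscalYear, d.FiscalQuarter, SUM(f.Amount) AS TotalAmount
FROM dbo.FactOrders AS f
JOIN dbo.DimDate AS d
    ON f.OrderDateKey = d.DateKey
WHERE f.OrderDateKey BETWEEN 20240101 AND 20240630
GROUP BY d.FiscalYear, d.FiscalQuarter;
```

Because a yyyymmdd integer sorts in calendar order, a range predicate on the key selects the same rows as the equivalent range on the underlying dates.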

You have a SQL pool in Azure Synapse.

You discover that some queries fail or take a long time to complete.

You need to monitor for transactions that have rolled back.

Which dynamic management view should you query?

A.

sys.dm_pdw_request_steps

B.

sys.dm_pdw_nodes_tran_database_transactions

C.

sys.dm_pdw_waits

D.

sys.dm_pdw_exec_sessions

Answer: B. sys.dm_pdw_nodes_tran_database_transactions

Explanation:

You can use Dynamic Management Views (DMVs) to monitor your workload, including investigating query execution in a SQL pool.

If your queries are failing or taking a long time to complete, you can check whether any transactions are rolling back.

Example:

```sql
-- Monitor rollback: per node, count transactions that have a next undo LSN
SELECT
    SUM(CASE WHEN t.database_transaction_next_undo_lsn IS NOT NULL THEN 1 ELSE 0 END) AS rollback_transaction_count,
    t.pdw_node_id,
    nod.[type]
FROM sys.dm_pdw_nodes_tran_database_transactions AS t
JOIN sys.dm_pdw_nodes AS nod
    ON t.pdw_node_id = nod.pdw_node_id
GROUP BY t.pdw_node_id, nod.[type];
```

Reference:

https://docs.microsoft.com/en-us/azure/synapse-analytics/sql-data-warehouse/sql-data-warehouse-manage-monitor#monitor-transaction-log-rollback

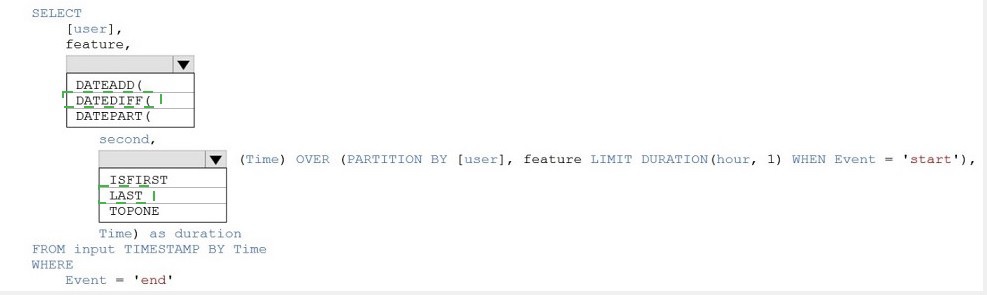

You are building an Azure Stream Analytics job to identify how much time a user spends interacting with a feature on a webpage.

The job receives events based on user actions on the webpage. Each row of data represents an event. Each event has a type of either 'start' or 'end'.

You need to calculate the duration between start and end events.

How should you complete the query? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

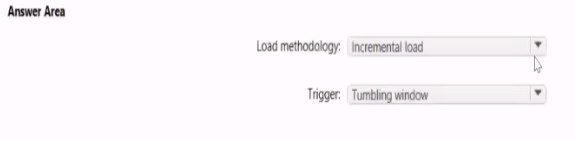
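One common Stream Analytics pattern for this calculation pairs each 'end' event with the most recent 'start' event for the same user and feature, using LAST ... WHEN inside DATEDIFF. The sketch below is written in the Stream Analytics query language (not T-SQL) and assumes an input alias named input with fields named user, feature, Event, and Time; adjust these to the actual schema.

```sql
-- Duration, in seconds, between a 'start' event and the following 'end' event
-- for the same user and feature. Assumed input fields: user, feature, Event, Time.
SELECT
    [user],
    feature,
    DATEDIFF(
        second,
        -- Most recent 'start' for this user/feature, looking back at most one hour
        LAST(Time) OVER (PARTITION BY [user], feature LIMIT DURATION(hour, 1) WHEN Event = 'start'),
        Time
    ) AS duration
FROM input TIMESTAMP BY Time   -- use event time rather than arrival time
WHERE Event = 'end';
```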

You have an Azure Storage account that generates 200,000 new files daily. The file names have a format of {YYYY}/{MM}/{DD}/{HH}/{CustomerID}.csv.

You need to design an Azure Data Factory solution that will load new data from the storage account to an Azure Data Lake once hourly. The solution must minimize load times and costs.

How should you configure the solution? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Answer: See the answer below in explanation.

Explanation:

The storage account container view is shown in the Refdata exhibit. (Click the Refdata tab.)

You need to configure the Stream Analytics job to pick up the new reference data. What should you configure? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Answer: See the answer below in explanation.

Explanation:

Answer as below

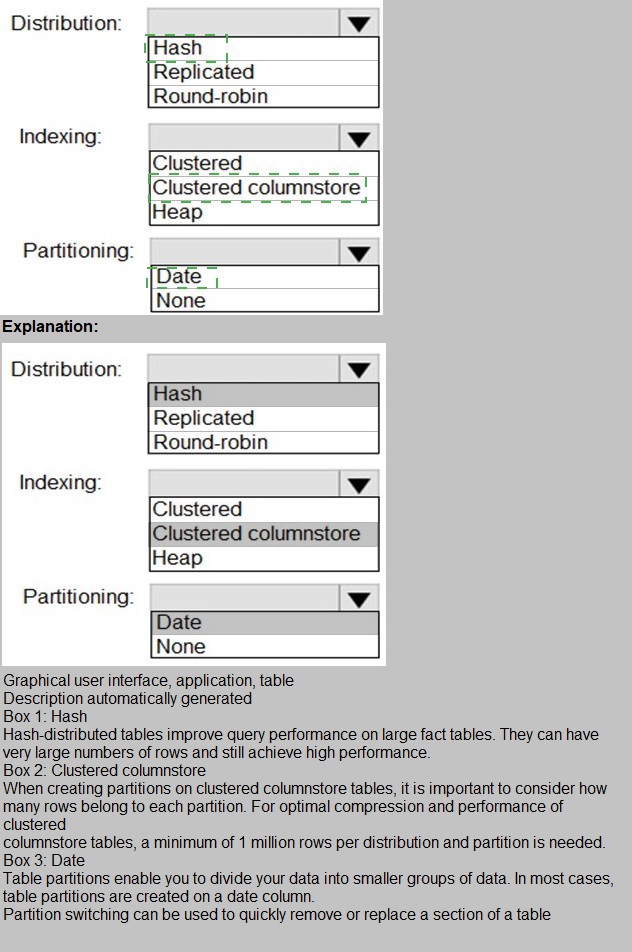

You have a SQL pool in Azure Synapse.

You plan to load data from Azure Blob storage to a staging table. Approximately 1 million rows of data will be loaded daily. The table will be truncated before each daily load.

You need to create the staging table. The solution must minimize how long it takes to load the data to the staging table.

How should you configure the table? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

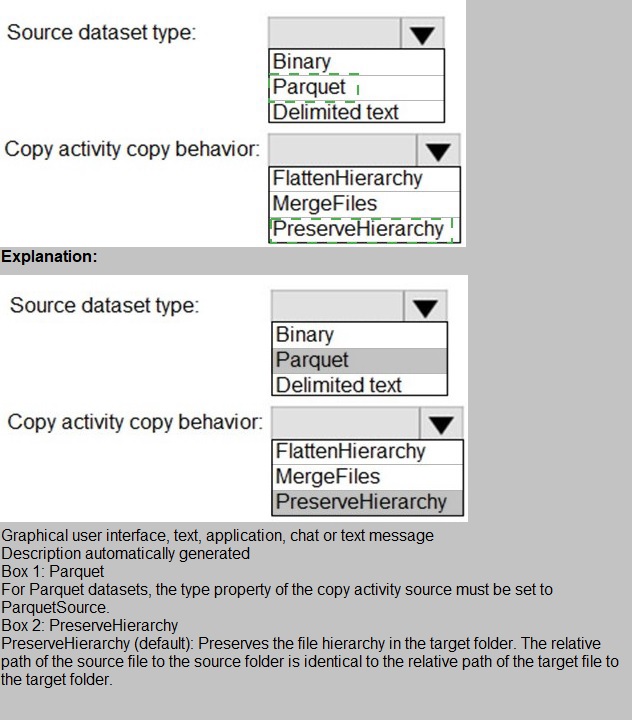
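A sketch of the table shape commonly recommended for a truncate-and-reload staging table in a dedicated SQL pool: round-robin distribution spreads rows evenly without computing a hash column during the load, and a heap skips building a clustered columnstore index. The table, columns, storage URL, header-row setting, and managed identity credential are all hypothetical choices for illustration.

```sql
-- Staging table tuned for fast loads (hypothetical columns).
CREATE TABLE dbo.StageOrders
(
    OrderId   BIGINT         NOT NULL,
    OrderDate DATE           NOT NULL,
    Amount    DECIMAL(18, 2) NULL
)
WITH
(
    DISTRIBUTION = ROUND_ROBIN,  -- rows spread evenly; no hash distribution key
    HEAP                         -- no columnstore index to build during the load
);

-- Daily load: empty the table, then bulk load the new file(s).
TRUNCATE TABLE dbo.StageOrders;

COPY INTO dbo.StageOrders
FROM 'https://mystorage.blob.core.windows.net/staging/orders/*.csv'
WITH (
    FILE_TYPE = 'CSV',
    FIRSTROW = 2,                                 -- assumes one header row
    CREDENTIAL = (IDENTITY = 'Managed Identity')  -- assumed authentication method
);
```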

You have two Azure Storage accounts named Storage1 and Storage2. Each account holds one container and has the hierarchical namespace enabled. The system has files that contain data stored in the Apache Parquet format.

You need to copy folders and files from Storage1 to Storage2 by using a Data Factory copy activity. The solution must meet the following requirements:

No transformations may be performed.
The original folder structure must be retained.
The time required to perform the copy activity must be minimized.

How should you configure the copy activity? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.
