Topic 3: City Power & Light

Case study

This is a case study. Case studies are not timed separately. You can use as much

exam time as you would like to complete each case. However, there may be additional

case studies and sections on this exam. You must manage your time to ensure that you

are able to complete all questions included on this exam in the time provided.

To answer the questions included in a case study, you will need to reference information

that is provided in the case study. Case studies might contain exhibits and other resources

that provide more information about the scenario that is described in the case study. Each

question is independent of the other questions in this case study.

At the end of this case study, a review screen will appear. This screen allows you to review

your answers and to make changes before you move to the next section of the exam. After

you begin a new section, you cannot return to this section.

To start the case study

To display the first question in this case study, click the Next button. Use the buttons in the

left pane to explore the content of the case study before you answer the questions. Clicking

these buttons displays information such as business requirements, existing environment,

and problem statements. When you are ready to answer a question, click the Question

button to return to the question.

Background

City Power & Light company provides electrical infrastructure monitoring solutions for

homes and businesses. The company is migrating solutions to Azure.

Current environment

Architecture overview

The company has a public website located at http://www.cpandl.com/. The site is a single-page

web application that runs in Azure App Service on Linux. The website uses files

stored in Azure Storage and cached in Azure Content Delivery Network (CDN) to serve

static content.

API Management and Azure Function App functions are used to process and store data in

Azure Database for PostgreSQL. API Management is used to broker communications to

the Azure Function app functions for Logic app integration. Logic apps are used to

orchestrate the data processing while Service Bus and Event Grid handle messaging and

events.

The solution uses Application Insights, Azure Monitor, and Azure Key Vault.

Architecture diagram

The company has several applications and services that support their business. The

company plans to implement serverless computing where possible. The overall architecture

is shown below.

User authentication

The following steps detail the user authentication process:

1. The user selects Sign in in the website.

2. The browser redirects the user to the Azure Active Directory (Azure AD) sign-in page.

3. The user signs in.

4. Azure AD redirects the user’s session back to the web application. The URL includes an access token.

5. The web application calls an API and includes the access token in the authentication header. The application ID is sent as the audience (‘aud’) claim in the access token.

6. The back-end API validates the access token.
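The audience check in the last two steps can be sketched as follows. This is a minimal illustration using only the Python standard library, and it deliberately does NOT verify the token signature, issuer, or expiry; a real back-end API would do all of that with a JWT library. The helper `make_unsigned_token` and the application ID used with it are hypothetical and exist only to produce demo input.

```python
import base64
import json


def decode_claims(token: str) -> dict:
    """Decode the payload segment of a JWT WITHOUT verifying its signature."""
    payload_b64 = token.split(".")[1]
    # JWT segments are base64url-encoded with padding stripped; restore it.
    payload_b64 += "=" * (-len(payload_b64) % 4)
    return json.loads(base64.urlsafe_b64decode(payload_b64))


def audience_matches(token: str, expected_audience: str) -> bool:
    """Return True when the token's 'aud' claim names this API."""
    aud = decode_claims(token).get("aud")
    # 'aud' may be a single string or a list of strings.
    audiences = aud if isinstance(aud, list) else [aud]
    return expected_audience in audiences


def make_unsigned_token(claims: dict) -> str:
    """Build a fake, unsigned token for demonstration only (hypothetical helper)."""
    def b64(obj: dict) -> str:
        raw = json.dumps(obj).encode()
        return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()

    return f"{b64({'alg': 'none'})}.{b64(claims)}."
```

A token whose 'aud' claim differs from the API's own application ID would be rejected by this check even if everything else about it were valid.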

Requirements

Corporate website

Communications and content must be secured by using SSL.

Communications must use HTTPS.

Data must be replicated to a secondary region and three availability zones.

Data storage costs must be minimized.

Azure Database for PostgreSQL

The database connection string is stored in Azure Key Vault with the following attributes:

Azure Key Vault name: cpandlkeyvault

Secret name: PostgreSQLConn

Id: 80df3e46ffcd4f1cb187f79905e9a1e8

The connection information is updated frequently. The application must always use the

latest information to connect to the database.
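One way to reason about the "always use the latest information" requirement is to compare a versioned and a versionless secret URI. The sketch below assumes that the Id listed above is the secret's version identifier and that the vault lives in the public Azure cloud (`vault.azure.net`); both are assumptions, and the function names are illustrative.

```python
from typing import Optional

VAULT_NAME = "cpandlkeyvault"
SECRET_NAME = "PostgreSQLConn"
SECRET_VERSION = "80df3e46ffcd4f1cb187f79905e9a1e8"  # assumed to be the version id


def secret_uri(vault: str, name: str, version: Optional[str] = None) -> str:
    """Build a Key Vault secret URI; omitting the version targets the current one."""
    base = f"https://{vault}.vault.azure.net/secrets/{name}"
    return f"{base}/{version}" if version else base


def key_vault_reference(uri: str) -> str:
    """Wrap a secret URI in the App Service / Functions app-setting reference syntax."""
    return f"@Microsoft.KeyVault(SecretUri={uri})"


pinned = key_vault_reference(secret_uri(VAULT_NAME, SECRET_NAME, SECRET_VERSION))
latest = key_vault_reference(secret_uri(VAULT_NAME, SECRET_NAME))
```

The `pinned` form keeps resolving to one specific version, so it would go stale as soon as the connection information is updated; the `latest` form leaves the version out so that the current version is resolved instead.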

Azure Service Bus and Azure Event Grid

Azure Event Grid must use Azure Service Bus for queue-based load leveling.

Events in Azure Event Grid must be routed directly to Service Bus queues for use

in buffering.

Events from Azure Service Bus and other Azure services must continue to be

routed to Azure Event Grid for processing.

Security

All SSL certificates and credentials must be stored in Azure Key Vault.

File access must restrict access by IP, protocol, and Azure AD rights.

All user accounts and processes must receive only those privileges which are

essential to perform their intended function.

Compliance

Auditing of the file updates and transfers must be enabled to comply with General Data

Protection Regulation (GDPR). The file updates must be read-only, stored in the order in

which they occurred, include only create, update, delete, and copy operations, and be

retained for compliance reasons.

Issues

Corporate website

While testing the site, the following error message displays:

CryptographicException: The system cannot find the file specified.

Function app

You perform local testing for the RequestUserApproval function. The following error

message displays:

'Timeout value of 00:10:00 exceeded by function: RequestUserApproval'

The same error message displays when you test the function in an Azure development

environment when you run the following Kusto query:

FunctionAppLogs

| where FunctionName == "RequestUserApproval"

Logic app

You test the Logic app in a development environment. The following error message

displays:

'400 Bad Request'

Troubleshooting of the error shows an HttpTrigger action to call the RequestUserApproval

function.

Code

Corporate website

Security.cs:

You are designing a small app that will receive web requests containing encoded

geographic coordinates. Calls to the app will occur infrequently.

Which compute solution should you recommend?

A. Azure Functions

B. Azure App Service

C. Azure Batch

D. Azure API Management

Explanation:

This question tests your knowledge of choosing the right Azure compute service based on workload characteristics. The requirements specify a small app that receives web requests with geographic coordinates and is called infrequently. Azure Functions is designed for event-driven, infrequent workloads with automatic scaling and consumption-based pricing — making it the optimal choice for this scenario.

Correct Option:

A. Azure Functions

Azure Functions is a serverless compute service well suited to infrequent, event-driven workloads. On the Consumption plan it scales automatically and charges only when a function executes. The app receives web requests containing geographic coordinates, which maps directly to an HTTP trigger, so Azure Functions minimizes cost and operational overhead for this low-usage scenario.

Incorrect Option:

B. Azure App Service

Azure App Service is a fully managed platform for hosting web applications. Its App Service plan is billed for as long as it is provisioned, even when the app is idle. For a small app that is called infrequently, that means unnecessary cost and over-provisioning compared to a serverless alternative.

C. Azure Batch

Azure Batch is designed for large-scale parallel and high-performance computing (HPC) workloads, such as rendering or financial modeling. It is complex to set up and not intended for handling lightweight, infrequent web requests. This is severe overkill for the described app.

D. Azure API Management

Azure API Management is not a compute hosting service. It is used to publish, secure, and analyze APIs. While it can frontend Azure Functions or App Service, it does not execute application logic itself and cannot replace a compute runtime.

Reference:

Azure Functions overview

Choose an Azure compute service

Azure Functions hosting options

You develop and add several functions to an Azure Function app that uses the latest runtime host. The functions contain several REST API endpoints secured by using SSL. The Azure Function app runs in a Consumption plan.

You must send an alert when any of the function endpoints are unavailable or responding too slowly. You need to monitor the availability and responsiveness of the functions. What should you do?

A. Create a URL ping test.

B. Create a timer triggered function that calls TrackAvailability() and send the results to Application Insights.

C. Create a timer triggered function that calls GetMetric("Request Size") and send the results to Application Insights.

D. Add a new diagnostic setting to the Azure Function app. Enable the FunctionAppLogs and Send to Log Analytics options.

Explanation:

This question tests your knowledge of monitoring availability and responsiveness of HTTP-triggered Azure Functions. Application Insights provides availability monitoring through URL ping tests and the TrackAvailability() API. Since the function endpoints are secured with SSL and the app runs on a Consumption plan, creating a timer-triggered function that calls TrackAvailability() is the most flexible and cost-effective solution for simulating and tracking end-to-end availability from within Azure.

Correct Option:

B. Create a timer triggered function that calls TrackAvailability() and send the results to Application Insights.

TrackAvailability() is the Application Insights API for custom availability tests. By creating a timer-triggered function that calls each REST API endpoint and tracks success/failure and response time, you can monitor availability and performance without relying on external ping test tools. This approach works securely within Azure and integrates directly with Application Insights availability blades and alerts.
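The probing half of such a timer-triggered function can be sketched in Python. This is an illustration only: the `probe` function, its parameters, and the result dictionary are hypothetical, and the final step of handing the result to TrackAvailability() in the Application Insights SDK is left out because its exact shape depends on the SDK and language used.

```python
import time
from typing import Callable
from urllib.request import urlopen


def http_status(url: str, timeout_s: float) -> int:
    """Default fetcher: issue a GET request and return the HTTP status code."""
    with urlopen(url, timeout=timeout_s) as response:
        return response.status


def probe(name: str, url: str, slow_after_s: float = 2.0, timeout_s: float = 10.0,
          fetch: Callable[[str, float], int] = http_status) -> dict:
    """Call one endpoint and report whether it was reachable and fast enough."""
    started = time.monotonic()
    try:
        status = fetch(url, timeout_s)
        reachable = 200 <= status < 300
        message = f"HTTP {status}"
    except Exception as exc:  # connection errors, timeouts, HTTP error statuses
        reachable = False
        message = f"{type(exc).__name__}: {exc}"
    duration = time.monotonic() - started
    return {
        "name": name,
        # An endpoint counts as available only if it answered AND was not too slow.
        "success": reachable and duration <= slow_after_s,
        "duration_s": duration,
        "message": message,
    }
```

Running `probe` once per endpoint on each timer tick covers both conditions in the requirement: an unreachable endpoint and a slow one both produce `success` set to `False`, which an alert rule can then act on.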

Incorrect Option:

A. Create a URL ping test.

URL ping tests are a feature of Application Insights availability monitoring. However, they require the function endpoints to be publicly accessible and are configured outside the function app. While valid, this option is less customizable than TrackAvailability() and not the programmatic approach implied by the requirement.

C. Create a timer triggered function that calls GetMetric("Request Size") and send the results to Application Insights.

GetMetric() is used for capturing custom metrics (e.g., request size, processing time), not availability or responsiveness. This does not monitor whether endpoints are unavailable or responding slowly — it captures dimensional metrics, not availability test results.

D. Add a new diagnostic setting to the Azure Function app. Enable the FunctionAppLogs and Send to Log Analytics options.

This setting streams function execution logs and diagnostic data to Log Analytics. While useful for troubleshooting and analyzing execution history, it does not proactively monitor endpoint availability or responsiveness. It is not an availability testing solution.

Reference:

Monitor Azure Functions with Application Insights

Custom availability tests with TrackAvailability

Application Insights availability monitoring

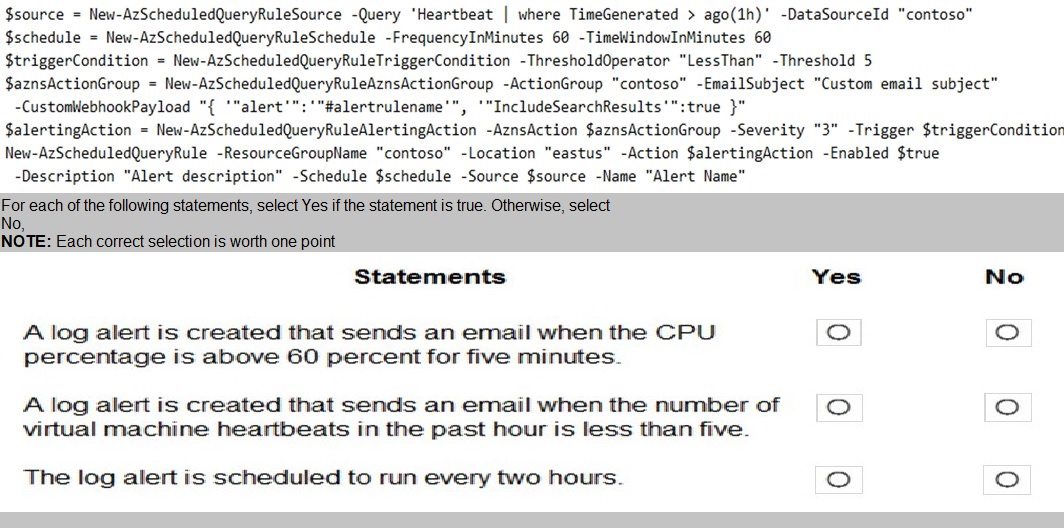

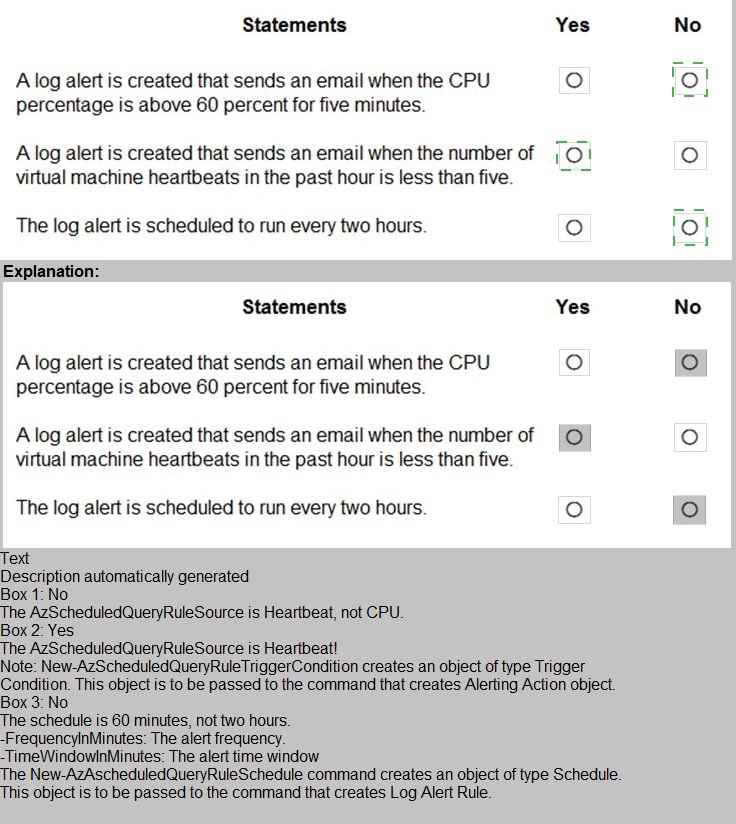

You create the following PowerShell script:

Explanation:

This question tests your ability to interpret Azure PowerShell commands for creating scheduled query rules (log alerts). The script uses specific parameters to define the query, frequency, threshold, and action. Each statement must be evaluated against the actual configuration in the script.

Statement 1:

A log alert is created that sends an email when the CPU percentage is above 60 percent for five minutes.

❌ No

The query in the script is:

Heartbeat | where TimeGenerated > ago(1h)

This queries heartbeat data from Azure Monitor, not CPU percentage. The alert is based on heartbeat count, not performance counters. Additionally, the threshold operator is LessThan with a threshold of 5, and the time window is 60 minutes, not 5 minutes. This statement is completely incorrect.

Statement 2:

A log alert is created that sends an email when the number of virtual machine heartbeats in the past hour is less than five.

✅ Yes

The script queries heartbeat records from the last hour (ago(1h)). The trigger condition uses -ThresholdOperator "LessThan" -Threshold 5, meaning the alert fires when fewer than 5 heartbeat records are returned. The action group includes an email subject, so an email is sent. This matches the statement exactly.

Statement 3:

The log alert is scheduled to run every two hours.

❌ No

The schedule is defined as:

-FrequencyInMinutes 60 -TimeWindowInMinutes 60

This means the alert runs every 60 minutes (1 hour), not every 2 hours. The query looks back 60 minutes, and the evaluation frequency is also 60 minutes. There is no configuration for a 2-hour interval.
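The evaluation logic implied by the parameters quoted above can be modeled in a few lines of Python. This is a simplified sketch of the rule's behavior, not the Azure Monitor implementation; the function names are illustrative, and the three constants are taken from the values cited in the explanation.

```python
from datetime import datetime, timedelta
from typing import List

FREQUENCY_MINUTES = 60    # -FrequencyInMinutes: how often the rule runs
TIME_WINDOW_MINUTES = 60  # -TimeWindowInMinutes: how far back each run looks
THRESHOLD = 5             # -ThresholdOperator "LessThan" -Threshold 5


def should_fire(heartbeats: List[datetime], now: datetime) -> bool:
    """Fire when fewer than THRESHOLD heartbeats fall inside the time window."""
    window_start = now - timedelta(minutes=TIME_WINDOW_MINUTES)
    in_window = [t for t in heartbeats if window_start < t <= now]
    return len(in_window) < THRESHOLD


def next_run(now: datetime) -> datetime:
    """The rule is evaluated again one frequency interval later."""
    return now + timedelta(minutes=FREQUENCY_MINUTES)
```

With three heartbeats in the last hour the rule fires, with six it does not, and the next evaluation is one hour away rather than two, which matches the three answers above.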

Reference:

New-AzScheduledQueryRule

ScheduledQueryRuleSource

Log alerts in Azure Monitor

Heartbeat table in Azure Monitor

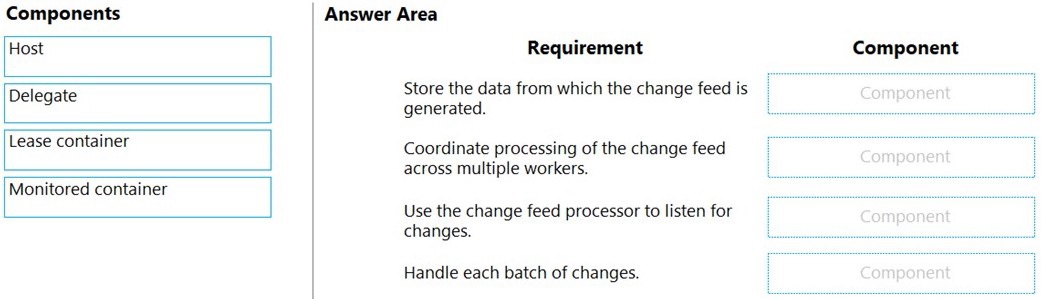

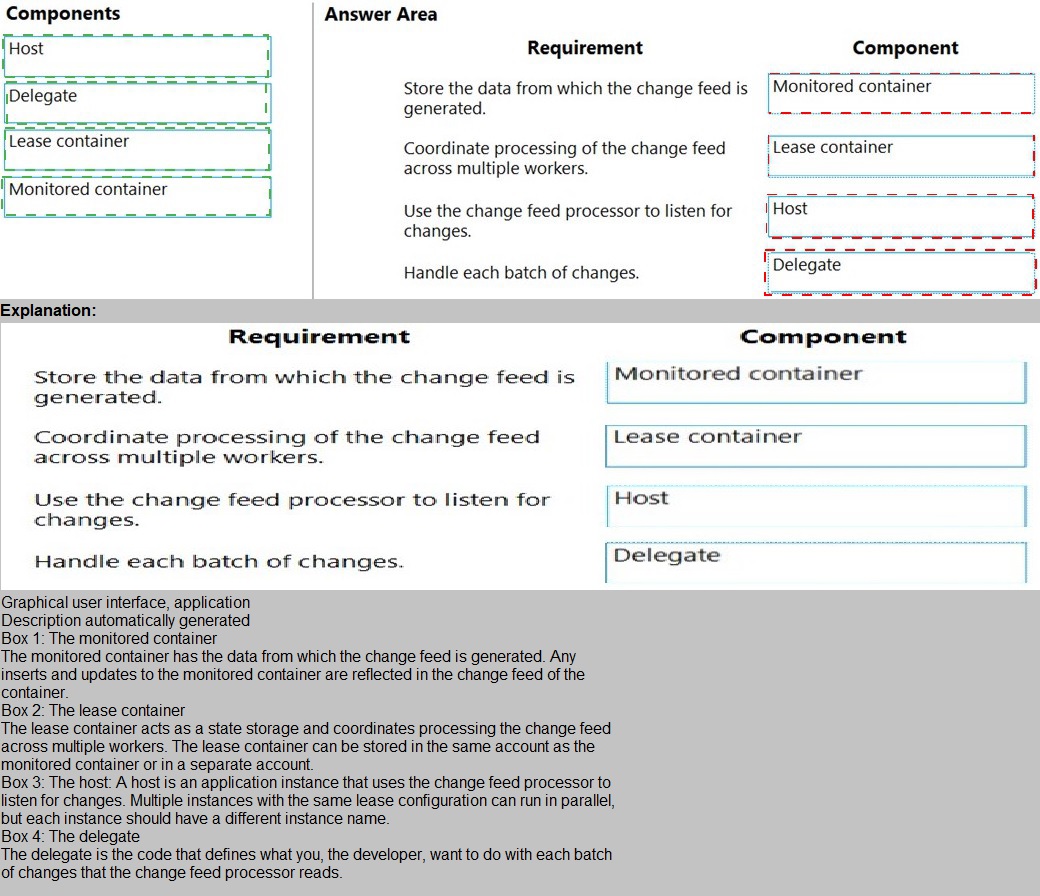

You develop an Azure solution that uses Cosmos DB.

The current Cosmos DB container must be replicated and must use a partition key that is

optimized for queries.

You need to implement a change feed processor solution.

Which change feed processor components should you use? To answer, drag the

appropriate components to the correct requirements. Each component may be used once,

more than once, or not at all. You may need to drag the split bar between panes or scroll to

view the content.

NOTE: Each correct selection is worth one point.

Explanation:

This question tests your knowledge of the Azure Cosmos DB Change Feed Processor architecture. The Change Feed Processor uses a lease container to manage state and distribution across multiple worker instances, a monitored container as the source of the change feed, and the host (or delegate/compute instance) to execute the change handling logic. Each component has a specific role in the processing pipeline.

Detailed Explanation:

1. Store the data from which the change feed is generated.

✅ Monitored container

The monitored container is the source container where your application data resides. The change feed listens for inserts and updates on this container. This is the container being replicated and optimized for queries as described in the scenario.

2. Coordinate processing of the change feed across multiple workers.

✅ Lease container

The lease container stores processing state, including leases per partition. It coordinates workload distribution across multiple change feed processor instances, ensuring each partition is processed by only one worker at a time and enabling checkpointing.

3. Use the change feed processor to listen for changes.

✅ Host

The host is an instance of the change feed processor library running in your compute environment (e.g., Azure Function, VM, App Service). It listens to the monitored container and orchestrates delegate execution.

4. Handle each batch of changes.

✅ Delegate

The delegate is your custom business logic (code) that processes each batch of changes received from the change feed. It is invoked by the host and contains the logic to handle the replicated data.

Incorrect Component Mappings:

Host is not the component that stores data or coordinates leases — it runs the processor.

Lease container is not the source of changes — it stores metadata.

Delegate is not the listener — it is the handler invoked by the host.

Monitored container does not coordinate workers — it is the data source.
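How the four components relate can be shown with a toy in-memory model. This is not the Cosmos DB SDK: the class names, the `max_leases` parameter, and the dictionary-based "containers" are all hypothetical stand-ins, and the real processor rebalances leases dynamically rather than using a fixed cap.

```python
from typing import Callable, Dict, List

Change = dict
Delegate = Callable[[List[Change]], None]


class LeaseContainer:
    """Tracks which host owns each partition and how far it has been read."""

    def __init__(self, partitions):
        self.owner: Dict[str, object] = {p: None for p in partitions}
        self.checkpoint: Dict[str, int] = {p: 0 for p in partitions}

    def try_acquire(self, partition: str, host: str, max_leases: int) -> bool:
        current = self.owner[partition]
        if current == host:
            return True
        owned = sum(1 for o in self.owner.values() if o == host)
        if current is None and owned < max_leases:
            self.owner[partition] = host
            return True
        return False


class Host:
    """A worker instance: listens for changes and invokes the delegate."""

    def __init__(self, name: str, monitored: Dict[str, List[Change]],
                 leases: LeaseContainer, delegate: Delegate, max_leases: int = 1):
        self.name, self.monitored = name, monitored
        self.leases, self.delegate, self.max_leases = leases, delegate, max_leases

    def poll(self) -> None:
        for partition, changes in self.monitored.items():
            if not self.leases.try_acquire(partition, self.name, self.max_leases):
                continue  # another host owns this partition
            start = self.leases.checkpoint[partition]
            batch = changes[start:]
            if batch:
                self.delegate(batch)  # business logic handles the batch
                self.leases.checkpoint[partition] = len(changes)  # checkpoint
```

In this model the monitored container is the dictionary of per-partition changes, the lease container decides which host may read which partition and remembers the checkpoint, the host does the listening, and the delegate is the callable that receives each batch.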

Reference:

Change Feed Processor in Azure Cosmos DB

Change Feed Processor components

Working with the change feed processor

You are developing an application that allows users to find musicians that are looking for

work. The application must store information about musicians, the instruments that they

play, and other related data.

The application must also allow users to determine which musicians have played together,

including groups of three or more musicians that have performed together at a specific

location.

Which Azure Cosmos DB API should you use for the application?

A. Core

B. MongoDB

C. Cassandra

D. Gremlin

Explanation:

The application requires storing information about musicians, instruments, and performance relationships, including the ability to query complex relationships like groups of three or more musicians who have performed together at specific locations. This type of relationship-heavy querying with traversal requirements is ideally suited for a graph database. Azure Cosmos DB's Gremlin API provides graph database capabilities that excel at modeling and querying highly connected data with complex relationships.

Correct Option:

D. Gremlin.

The Gremlin API in Azure Cosmos DB is designed specifically for graph database workloads. It implements the Apache TinkerPop graph traversal language, allowing you to model musicians and performances as vertices (nodes) and their relationships (played together, performed at location) as edges. Graph queries can easily traverse these relationships to find musicians who have played together in groups of three or more at specific locations—a complex relational query that would be cumbersome in document or key-value stores.
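The shape of that query can be illustrated with a small in-memory model in Python. This is not Gremlin and does not use Cosmos DB; the sample data, function names, and the nested-dictionary "graph" are hypothetical, chosen only to show why the question is naturally a traversal from a location to its performances to the musicians in each one.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

# Hypothetical sample data: (performance id, location, musicians on stage).
PERFORMANCES: List[Tuple[str, str, Set[str]]] = [
    ("gig1", "Blue Note", {"Ana", "Ben", "Cho"}),
    ("gig2", "Blue Note", {"Ana", "Dev"}),
    ("gig3", "Red Room", {"Ben", "Cho", "Dev", "Eli"}),
]


def build_graph(performances) -> Dict[str, Dict[str, Set[str]]]:
    """Map location -> performance -> musicians, standing in for graph edges."""
    graph: Dict[str, Dict[str, Set[str]]] = defaultdict(dict)
    for perf_id, location, musicians in performances:
        graph[location][perf_id] = set(musicians)
    return graph


def groups_at(graph, location: str, min_size: int = 3) -> List[List[str]]:
    """Traverse location -> performances -> musicians; keep groups of min_size or more."""
    return [
        sorted(musicians)
        for musicians in graph.get(location, {}).values()
        if len(musicians) >= min_size
    ]
```

For example, `groups_at(build_graph(PERFORMANCES), "Blue Note")` walks only the performances held at that location and keeps the one with three musicians. In a graph database the same walk would be expressed as a traversal over vertices and edges instead of nested dictionaries.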

Incorrect Option:

A. Core (SQL).

The Core API provides document database functionality with SQL-like querying. While it can store musician and performance data, querying for complex relationships like "musicians who have played together in groups of three at a specific location" would require multiple joins or denormalization strategies. Document databases are not optimized for deep relationship traversal queries.

B. MongoDB.

The MongoDB API provides document database capabilities with MongoDB-compatible query syntax. Similar to Core API, it excels at storing and retrieving document-oriented data but lacks native graph traversal capabilities. Querying for musicians who have played together in groups of three would require complex aggregation pipelines and manual relationship management.

C. Cassandra.

The Cassandra API provides wide-column database capabilities with Cassandra-compatible query syntax. It is optimized for high-volume write operations and simple key-value lookups but is not designed for complex relational queries or graph traversals. Finding musicians who have performed together in groups would be extremely difficult and inefficient in this model.

Reference:

Microsoft Docs - "Introduction to Azure Cosmos DB Gremlin API" / "Graph data modeling for Azure Cosmos DB"

You are designing a multi-tiered application that will be hosted on Azure virtual machines.

The virtual machines will run Windows Server. Front-end servers will be accessible from

the Internet over port 443. The other servers will NOT be directly accessible over the

internet.

You need to recommend a solution to manage the virtual machines that meets the

following requirements:

• Allows the virtual machines to be administered by using Remote Desktop.

• Minimizes the exposure of the virtual machines on the Internet.

Which Azure service should you recommend?

A. Azure Bastion

B. Service Endpoint

C. Azure Private Link

D. Azure Front Door

Explanation:

This question tests your knowledge of secure remote administration for Azure virtual machines. The requirements are: administer VMs via Remote Desktop, minimize internet exposure, and ensure only front-end servers are accessible from the internet on port 443. Azure Bastion provides browser-based RDP/SSH connectivity directly through the Azure portal without requiring public IPs on the VMs, meeting both administration and security requirements.

Correct Option:

A. Azure Bastion

Azure Bastion is a fully managed PaaS service that provides secure RDP/SSH connectivity to virtual machines directly in the Azure portal over TLS. It removes the need for public IP addresses on the VMs, for opening RDP in network security groups to the internet, and for jump boxes. The management ports are never exposed to the internet while administrators can still connect by using Remote Desktop, so both requirements are met.

Incorrect Option:

B. Service Endpoint

Service endpoints provide private and secure connectivity from a virtual network to Azure PaaS services (e.g., Storage, SQL). They do not enable Remote Desktop access to VMs. This is irrelevant to the requirement.

C. Azure Private Link

Azure Private Link enables private connectivity to Azure PaaS services (like Storage, SQL Database) via private endpoints. It does not provide Remote Desktop access to virtual machines. This service is unrelated to VM administration and does not solve the RDP requirement.

D. Azure Front Door

Azure Front Door is a global load balancer and application delivery service for web applications. It operates at Layer 7 and is designed for HTTP/HTTPS traffic to public endpoints. It does not provide RDP access to backend VMs and does not address secure administration.

Reference:

What is Azure Bastion?

Secure RDP/SSH access to VMs

Azure Bastion FAQ

You develop a solution that uses Azure Virtual Machines (VMs).

The VMs contain code that must access resources in an Azure resource group. You grant the VM access to the resource group in Resource Manager.

You need to obtain an access token that uses the VM's system-assigned managed identity. Which two actions should you perform? Each correct answer presents part of the solution.

A. Use PowerShell on a remote machine to make a request to the local managed identity for Azure resources endpoint.

B. Use PowerShell on the VM to make a request to the local managed identity for Azure resources endpoint.

C. From the code on the VM, call Azure Resource Manager using an access token.

D. From the code on the VM, call Azure Resource Manager using a SAS token.

E. From the code on the VM, generate a user delegation SAS token.

Explanation:

This question tests your knowledge of acquiring and using access tokens with Azure VM system-assigned managed identities. Managed identities provide an automatically managed service principal in Azure AD. To access Azure Resource Manager, the VM must request a token from the local managed identity endpoint and then use that token to call Azure Resource Manager. Both steps are required and must be performed on the VM itself.

Correct Option:

B. Use PowerShell on the VM to make a request to the local managed identity for Azure resources endpoint.

The managed identity endpoint (169.254.169.254/metadata/identity/oauth2/token) is accessible only from within the VM. You must run PowerShell or code directly on the VM to request an access token. Remote machines cannot access this endpoint. This is the first step to obtain the token.

C. From the code on the VM, call Azure Resource Manager using an access token.

After obtaining the access token, you must include it in the Authorization header as a bearer token when calling Azure Resource Manager REST APIs. This grants the VM access to resources in the resource group it was granted permissions to. This is the second required action.
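The two steps can be sketched in Python instead of PowerShell, using only the standard library. The `api-version` values, the function names, and the subscription and resource group arguments are assumptions for illustration; `get_resource_group` only works when run on an Azure VM that has a managed identity with access to the resource group.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

IMDS_TOKEN_URL = "http://169.254.169.254/metadata/identity/oauth2/token"
ARM_RESOURCE = "https://management.azure.com/"


def build_token_request() -> Request:
    """Step 1: ask the local managed identity endpoint for an ARM token."""
    query = urlencode({"api-version": "2018-02-01", "resource": ARM_RESOURCE})
    # The 'Metadata: true' header is required by the instance metadata service.
    return Request(f"{IMDS_TOKEN_URL}?{query}", headers={"Metadata": "true"})


def build_arm_request(access_token: str, subscription_id: str, resource_group: str) -> Request:
    """Step 2: call Azure Resource Manager with the token as a bearer token."""
    url = (
        f"https://management.azure.com/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}?api-version=2021-04-01"  # assumed version
    )
    return Request(url, headers={"Authorization": f"Bearer {access_token}"})


def get_resource_group(subscription_id: str, resource_group: str) -> dict:
    """Run both steps; intended to be called from code running on the VM."""
    with urlopen(build_token_request(), timeout=5) as resp:
        token = json.load(resp)["access_token"]
    with urlopen(build_arm_request(token, subscription_id, resource_group), timeout=10) as resp:
        return json.load(resp)
```

Because the token endpoint is a link-local address, the first request only succeeds from inside the VM, which is why option A (a remote machine) cannot work.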

Incorrect Option:

A. Use PowerShell on a remote machine to make a request to the local managed identity endpoint.

❌ The managed identity endpoint is internal to the VM and is not accessible from remote machines. This action will fail. Tokens can only be acquired from within the VM itself.

D. From the code on the VM, call Azure Resource Manager using a SAS token.

❌ SAS tokens are used to authorize requests to Azure Storage, not Azure Resource Manager. ARM uses Azure AD OAuth 2.0 tokens (access tokens) obtained via managed identity or service principal.

E. From the code on the VM, generate a user delegation SAS token.

❌ User delegation SAS tokens are used for Blob Storage access and are signed with Azure AD credentials. This does not grant access to Azure Resource Manager and is unrelated to the requirement of managing resource group resources via ARM.

Reference:

How to use managed identities for Azure resources on an Azure VM to acquire an access token

Managed identity endpoints

Call Azure Resource Manager with managed identity

You are developing a solution that will use a multi-partitioned Azure Cosmos DB database.

You plan to use the latest Azure Cosmos DB SDK for development.

The solution must meet the following requirements:

Send insert and update operations to an Azure Blob storage account.

Process changes to all partitions immediately.

Allow parallelization of change processing.

You need to process the Azure Cosmos DB operations.

What are two possible ways to achieve this goal? Each correct answer presents a complete solution. NOTE: Each correct selection is worth one point.

A. Create an Azure App Service API and implement the change feed estimator of the SDK. Scale the API by using multiple Azure App Service instances.

B. Create a background job in an Azure Kubernetes Service and implement the change feed feature of the SDK.

C. Create an Azure Function to use a trigger for Azure Cosmos DB. Configure the trigger to connect to the container.

D. Create an Azure Function that uses a FeedIterator object that processes the change feed by using the pull model on the container. Use a FeedRange object to parallelize the processing of the change feed across multiple functions.

Explanation:

This question tests your knowledge of change feed processing options in Azure Cosmos DB using the latest SDK. The requirements include immediate change processing, parallelization, and sending operations to Blob Storage. Both Azure Functions Cosmos DB trigger (push model) and change feed pull model with FeedIterator/FeedRange are valid, production-ready solutions for consuming the change feed across multiple partitions and workers.

Correct Option:

C. Create an Azure Function to use a trigger for Azure Cosmos DB. Configure the trigger to connect to the container.

✅ The Azure Functions Cosmos DB trigger uses the change feed processor internally. It automatically distributes processing across partitions, scales with function instances, and processes changes immediately as they occur. This is the recommended "push model" approach for serverless change feed consumption.

D. Create an Azure Function that uses a FeedIterator object that processes the change feed by using the pull model on the container. Use a FeedRange object to parallelize the processing of the change feed across multiple functions.

✅ The pull model (introduced in the latest SDK) gives you fine-grained control over change feed processing. You can use FeedRange to distribute work across multiple functions or workers, each reading changes from specific partitions. This supports parallelization and immediate processing while allowing custom checkpointing and scaling logic.
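The idea of splitting the pull model across feed ranges can be sketched with a toy Python model. This does not use the Cosmos DB SDK: the dictionary of ranges, the integer continuations, and the function names are hypothetical stand-ins for FeedRange objects and continuation tokens.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple


def read_range(feed_range: str, changes: List[dict], continuation: int) -> Tuple[str, List[dict], int]:
    """Pull the changes for one range, starting at its saved continuation."""
    new = changes[continuation:]
    return feed_range, new, continuation + len(new)


def pull_all(ranges: Dict[str, List[dict]], continuations: Dict[str, int],
             handle: Callable[[str, List[dict]], None]) -> Dict[str, int]:
    """Read every range in parallel and advance each range's own continuation."""
    with ThreadPoolExecutor(max_workers=len(ranges) or 1) as pool:
        futures = [
            pool.submit(read_range, name, changes, continuations.get(name, 0))
            for name, changes in ranges.items()
        ]
        for future in futures:
            feed_range, new, continuation = future.result()
            if new:
                handle(feed_range, new)  # e.g. write the batch to Blob storage
            continuations[feed_range] = continuation
    return continuations
```

Each range keeps its own continuation, so a caller that polls `pull_all` repeatedly only sees changes it has not handled yet, and no range has to wait for another one to be read.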

Incorrect Option:

A. Create an Azure App Service API and implement the change feed estimator of the SDK. Scale the API by using multiple Azure App Service instances.

❌ The change feed estimator is used to monitor processor lag, not to process change feed events. It reports how many pending changes exist but does not read or handle the changes themselves. This does not meet the requirement to process insert/update operations.

B. Create a background job in an Azure Kubernetes Service and implement the change feed feature of the SDK.

❌ While technically possible, this is not a recommended or straightforward solution for the given requirements. It requires manual management of leases, scaling, and deployment. Compared to Azure Functions or the pull model, this introduces unnecessary complexity and operational overhead. The question asks for "possible ways" — but the SDK-native, simpler, and more maintainable solutions are C and D.

Reference:

Change feed in Azure Cosmos DB – Push model (Azure Functions)

Change feed in Azure Cosmos DB – Pull model

FeedRange and parallel processing

Change feed estimator

You develop and deploy an Azure Logic app that calls an Azure Function app. The Azure

Function app includes an OpenAPI (Swagger) definition and uses an Azure Blob storage

account. All resources are secured by using Azure Active Directory (Azure AD).

The Azure Logic app must securely access the Azure Blob storage account. Azure AD

resources must remain if the Azure Logic app is deleted.

You need to secure the Azure Logic app.

What should you do?

A. Create an Azure AD custom role and assign role-based access controls.

B. Create an Azure AD custom role and assign the role to the Azure Blob storage account.

C. Create an Azure Key Vault and issue a client certificate.

D. Create a user-assigned managed identity and assign role-based access controls.

E. Create a system-assigned managed identity and issue a client certificate.

Explanation:

This question tests your knowledge of securing Azure Logic App access to Azure Blob Storage using Azure AD authentication. The key requirements are: secure access to Blob Storage, Azure AD-based authentication, and identity persistence even after the Logic App is deleted. A user-assigned managed identity exists independently of the application lifecycle, unlike a system-assigned managed identity which is tied to the resource and deleted with it.

Correct Option:

D. Create a user-assigned managed identity and assign role-based access controls.

A user-assigned managed identity is created as a standalone Azure resource. It can be assigned to the Logic App for authentication to Azure Blob Storage. RBAC roles (e.g., Storage Blob Data Contributor) are assigned to this identity. If the Logic App is deleted, the user-assigned managed identity remains and can be reassigned to other resources, meeting the persistence requirement.

Incorrect Option:

A. Create an Azure AD custom role and assign role-based access controls.

A custom role defines a set of permissions but is not an identity. It cannot be assigned to a Logic App for authentication. The Logic App needs an identity (managed identity or service principal) to assume the role.

B. Create an Azure AD custom role and assign the role to the Azure Blob storage account.

Roles are assigned to identities (users, groups, service principals, managed identities), not to storage accounts. Storage accounts have access keys and Azure AD roles assigned to callers, not the resource itself. This is conceptually incorrect.

C. Create an Azure Key Vault and issue a client certificate.

While certificates can be used for authentication, this approach requires storing and rotating secrets, and does not leverage Azure AD managed identities. It also does not meet the "Azure AD resources must remain" requirement in a simple, persistent manner.

E. Create a system-assigned managed identity and issue a client certificate.

A system-assigned managed identity is tied to the Logic App lifecycle — it is deleted when the Logic App is deleted. This violates the requirement that Azure AD resources persist after Logic App deletion. Issuing a client certificate is also unnecessary and more complex than RBAC with managed identity.

Reference:

Managed identities with Azure Logic Apps

User-assigned managed identity

Authenticate Logic App to Blob Storage using managed identity

You have an application that includes an Azure Web app and several Azure Function apps.

Application secrets including connection strings and certificates are stored in Azure Key

Vault.

Secrets must not be stored in the application or application runtime environment. Changes

to Azure Active Directory (Azure AD) must be minimized.

You need to design the approach to loading application secrets.

What should you do?

A. Create a single user-assigned Managed Identity with permission to access Key Vault and configure each App Service to use that Managed Identity.

B. Create a single Azure AD Service Principal with permission to access Key Vault and use a client secret from within the App Services to access Key Vault.

C. Create a system assigned Managed Identity in each App Service with permission to access Key Vault.

D. Create an Azure AD Service Principal with permissions to access Key Vault for each App Service and use a certificate from within the App Services to access Key Vault.

Explanation:

This question tests your knowledge of securely loading application secrets from Azure Key Vault with minimal Azure AD administrative overhead. The requirements are: no secrets stored in the application/runtime, minimize Azure AD changes, and support for multiple Azure Web App and Function App instances. A system-assigned managed identity per App Service meets these requirements — each identity is automatically created with the resource, requires no credential management, and is deleted when the resource is deleted, minimizing long-lived Azure AD objects.

Correct Option:

C. Create a system assigned Managed Identity in each App Service with permission to access Key Vault.

Each Azure Web App and Function App receives its own system-assigned managed identity automatically when enabled. You grant each identity specific permissions to Key Vault (e.g., Get, List secrets). This approach requires no Azure AD application registrations, no client secrets or certificates, and no manual credential rotation. Changes to Azure AD are minimal and fully managed by Azure.

Incorrect Option:

A. Create a single user-assigned Managed Identity with permission to access Key Vault and configure each App Service to use that Managed Identity.

While technically possible, this approach requires creating a separate Azure AD resource (user-assigned identity) and assigning it to each App Service. It does not minimize Azure AD changes compared to system-assigned identities, which are created and deleted automatically with the resources.

B. Create a single Azure AD Service Principal with permission to access Key Vault and use a client secret from within the App Services to access Key Vault.

This requires creating an Azure AD application registration, generating and storing a client secret, and distributing that secret to each App Service. Storing secrets violates the requirement, and managing a shared secret across multiple services increases security risk and administrative overhead.

D. Create an Azure AD Service Principal with Permissions to access Key Vault for each App Service and use a certificate from within the App Services to access Key Vault.

Creating individual service principals for each App Service generates multiple Azure AD objects and requires managing certificates (issuance, renewal, deployment). This significantly increases Azure AD changes and administrative complexity compared to system-assigned managed identities.

Reference:

What are managed identities for Azure resources?

Use Key Vault references for App Service and Azure Functions

System-assigned vs user-assigned managed identities
